
After the deployment is added successfully, you can query it for intent and entity predictions from your utterances, based on the model you assigned to the deployment. You can query the deployment programmatically through the prediction API or through the client libraries (Azure SDK).

Test deployed model

Once your model is deployed, you can test it by sending prediction requests to evaluate its performance with real utterances. Testing helps you verify that the model accurately identifies intents and extracts entities as expected before integrating it into your production applications. You can test your deployment using either the REST API or the Azure SDK client libraries.

Send a conversational language understanding request

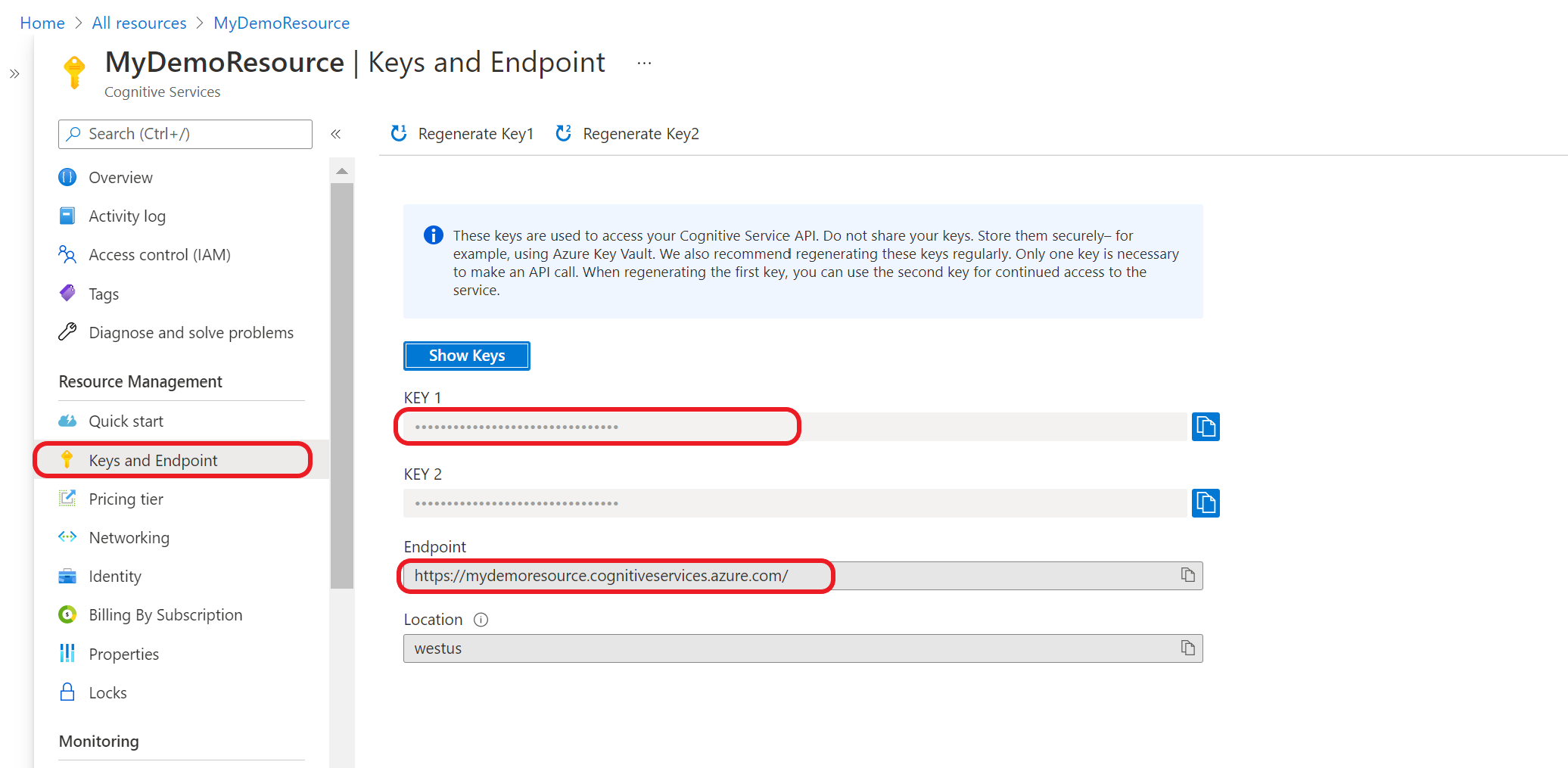

First you need to get your resource key and endpoint:

Go to your resource overview page in the Azure portal. From the menu on the left side, select Keys and Endpoint. You use the endpoint and key for the API requests.
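If you script your tests, one way to keep the key out of your source code is to read the endpoint and key from environment variables. The following Python sketch assumes two hypothetical variable names, LANGUAGE_ENDPOINT and LANGUAGE_KEY; Azure doesn't define them, so substitute whatever names your environment uses.

```python
import os


def load_credentials() -> tuple[str, str]:
    """Return (endpoint, key) read from environment variables.

    LANGUAGE_ENDPOINT and LANGUAGE_KEY are hypothetical names chosen for
    this sketch; Azure does not set them for you.
    """
    endpoint = os.environ.get("LANGUAGE_ENDPOINT", "").strip()
    key = os.environ.get("LANGUAGE_KEY", "").strip()
    if not endpoint or not key:
        raise RuntimeError("Set LANGUAGE_ENDPOINT and LANGUAGE_KEY before calling the API.")
    # Drop a trailing slash so the endpoint can be joined with the request path.
    return endpoint.rstrip("/"), key
```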

Query your model

Create a POST request using the following URL, headers, and JSON body to start testing a conversational language understanding model.

Request URL

{ENDPOINT}/language/:analyze-conversations?api-version={API-VERSION}

| Placeholder | Value | Example |
|---|---|---|
| {ENDPOINT} | The endpoint for authenticating your API request. | https://<your-custom-subdomain>.cognitiveservices.azure.cn |
| {API-VERSION} | The version of the API you're calling. | 2023-04-01 |
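As a minimal sketch, the following Python function assembles the request URL from the two placeholders. The default API version is the example value from the table, and the function name is hypothetical; pass whichever API version your resource supports.

```python
from urllib.parse import urlencode


def build_prediction_url(endpoint: str, api_version: str = "2023-04-01") -> str:
    """Build the analyze-conversations URL from {ENDPOINT} and {API-VERSION}."""
    # Strip any trailing slash so the result never contains '//language'.
    base = endpoint.rstrip("/")
    # urlencode handles escaping if the version string ever needs it.
    query = urlencode({"api-version": api_version})
    return f"{base}/language/:analyze-conversations?{query}"
```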

Headers

Use the following header to authenticate your request.

| Key | Value |
|---|---|
| Ocp-Apim-Subscription-Key | The key to your resource. Used for authenticating your API requests. |
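The following sketch builds the header dictionary for a request. The Content-Type: application/json entry is an assumption added because the request body is JSON; the function name build_headers is hypothetical.

```python
def build_headers(key: str) -> dict[str, str]:
    """Return the HTTP headers for a prediction request."""
    if not key:
        raise ValueError("A resource key is required.")
    return {
        # Authenticates the request with your resource key.
        "Ocp-Apim-Subscription-Key": key,
        # Assumption of this sketch: declare the JSON body explicitly.
        "Content-Type": "application/json",
    }
```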

Request body

```json
{
  "kind": "Conversation",
  "analysisInput": {
    "conversationItem": {
      "id": "1",
      "participantId": "1",
      "text": "{TEST-UTTERANCE}"
    }
  },
  "parameters": {
    "projectName": "{PROJECT-NAME}",
    "deploymentName": "{DEPLOYMENT-NAME}",
    "stringIndexType": "TextElement_V8"
  }
}
```

| Key | Placeholder | Value | Example |
|---|---|---|---|
| participantId | N/A | The ID of the participant who spoke the utterance. | "1" |
| id | N/A | The ID of the conversation item. | "1" |
| text | {TEST-UTTERANCE} | The utterance whose intent you want to predict and whose entities you want to extract. | "Read Matt's email" |
| projectName | {PROJECT-NAME} | The name of your project. This value is case-sensitive. | myProject |
| deploymentName | {DEPLOYMENT-NAME} | The name of your deployment. This value is case-sensitive. | staging |
| stringIndexType | N/A | The method used to measure the offset and length values in the response. | TextElement_V8 |
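The following Python sketch uses only the standard library (json and urllib.request) to assemble the request body and the POST request described above. The helper names are hypothetical, and the default id and participantId values of "1" simply mirror the sample body. Only send_prediction_request makes a network call.

```python
import json
import urllib.request


def build_prediction_body(
    utterance: str,
    project_name: str,
    deployment_name: str,
    item_id: str = "1",
    participant_id: str = "1",
) -> dict:
    """Build the JSON body for a conversational language understanding request."""
    return {
        "kind": "Conversation",
        "analysisInput": {
            "conversationItem": {
                "id": item_id,
                "participantId": participant_id,
                "text": utterance,
            }
        },
        "parameters": {
            "projectName": project_name,        # case-sensitive
            "deploymentName": deployment_name,  # case-sensitive
            "stringIndexType": "TextElement_V8",
        },
    }


def build_prediction_request(url: str, key: str, body: dict) -> urllib.request.Request:
    """Wrap the body in a POST request that carries the resource key header."""
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_prediction_request(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request and return the decoded JSON response (network call)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

For example, send_prediction_request(build_prediction_request(url, key, build_prediction_body("Read Matt's email", "myProject", "staging"))) returns the parsed response. urlopen raises urllib.error.HTTPError for non-success status codes, such as an authentication failure caused by a wrong key.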

After you send the request, you get the following prediction response:

Response body

```json
{
  "kind": "ConversationResult",
  "result": {
    "query": "Text1",
    "prediction": {
      "topIntent": "intent1",
      "projectKind": "Conversation",
      "intents": [
        {
          "category": "intent1",
          "confidenceScore": 1
        },
        {
          "category": "intent2",
          "confidenceScore": 0
        },
        {
          "category": "intent3",
          "confidenceScore": 0
        }
      ],
      "entities": [
        {
          "category": "entity1",
          "text": "Text1",
          "offset": 0,
          "length": 5,
          "confidenceScore": 1
        }
      ]
    }
  }
}
```

| Key | Sample value | Description |
|---|---|---|
| query | "Read Matt's email" | The text you submitted for the query. |
| topIntent | "Read" | The predicted intent with the highest confidence score. |
| intents | [] | A list of all the intents predicted for the query text, each with a confidence score. |
| entities | [] | An array of the entities extracted from the query text. |
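To work with the response in code, you can pull out the fields described in the table. The following sketch assumes the response shape shown in the sample response body; the Prediction class and parse_prediction function are hypothetical helpers, not part of any Azure library.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    """The parts of a ConversationResult that most callers need."""
    query: str
    top_intent: str
    # (category, confidenceScore) pairs, highest score first.
    intents: list[tuple[str, float]]
    # Entity dictionaries exactly as the service returned them.
    entities: list[dict]


def parse_prediction(response: dict) -> Prediction:
    """Extract query, topIntent, intents, and entities from a response."""
    if response.get("kind") != "ConversationResult":
        raise ValueError(f"Unexpected response kind: {response.get('kind')!r}")
    result = response["result"]
    prediction = result["prediction"]
    intents = sorted(
        ((item["category"], item["confidenceScore"]) for item in prediction.get("intents", [])),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return Prediction(
        query=result["query"],
        top_intent=prediction["topIntent"],
        intents=intents,
        entities=prediction.get("entities", []),
    )
```

Sorting the intents makes it easy to inspect the runner-up intents, which can help when the top score is low.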

API response for a conversations project

In a conversations project, you'll get predictions for both your intents and entities that are present within your project.

- The intents and entities include a confidence score between 0.0 and 1.0 that indicates how confident the model is in predicting a given element in your project.
- The top-scoring intent is returned in its own parameter, topIntent.
- Only predicted entities appear in your response.
- Entities indicate:
  - The text of the entity that was extracted
  - Its start location, denoted by an offset value
  - The length of the entity text, denoted by a length value
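The offset and length values are measured according to the stringIndexType you send. With TextElement_V8 they count text elements (grapheme clusters), whereas Python indexes a str by Unicode code point, so the two agree only when each text element is a single code point, as in plain ASCII text. The following sketch assumes you set stringIndexType to UnicodeCodePoint (or that your text is ASCII), and it uses a hypothetical confidence cutoff of 0.5 that you would tune for your own project.

```python
def extract_entities(query: str, entities: list[dict], min_confidence: float = 0.5) -> list[tuple[str, str]]:
    """Return (category, text) pairs for entities at or above min_confidence.

    Assumes offset/length are Unicode code point counts (stringIndexType
    "UnicodeCodePoint"), which matches how Python indexes str.
    """
    results = []
    for entity in entities:
        # Skip low-confidence predictions; 0.5 is an arbitrary example cutoff.
        if entity.get("confidenceScore", 0.0) < min_confidence:
            continue
        start = entity["offset"]
        end = start + entity["length"]
        span = query[start:end]
        # Cross-check the slice against the text the service reported.
        if span != entity["text"]:
            raise ValueError(f"Offset mismatch for {entity['category']!r}: {span!r} != {entity['text']!r}")
        results.append((entity["category"], span))
    return results
```

If the cross-check raises, the most likely cause is a mismatch between the stringIndexType in the request and the indexing your code uses.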

You can also use the client libraries provided by the Azure SDK to send requests to your model.

Note

The client library for conversational language understanding is only available for:

- .NET

- Python

Go to your resource overview page in the Azure portal.

From the menu on the left side, select Keys and Endpoint. Use the endpoint for the API requests, and use the key for the Ocp-Apim-Subscription-Key header.

Download and install the client library package for your language of choice:

| Language | Package version |
|---|---|
| .NET | 1.0.0 |
| Python | 1.0.0 |

After you install the client library, use the following samples on GitHub to start calling the API.
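As a sketch of the Python client library, the following code assumes the azure-ai-language-conversations package (version 1.0.0, installed with pip install azure-ai-language-conversations) and its ConversationAnalysisClient, whose analyze_conversation method takes the same JSON structure as the REST request body. The helper names build_task and analyze_with_sdk are hypothetical; check the package's reference documentation for your version before relying on the exact signatures.

```python
def build_task(utterance: str, project_name: str, deployment_name: str) -> dict:
    """Build the task payload; it mirrors the REST request body."""
    return {
        "kind": "Conversation",
        "analysisInput": {
            "conversationItem": {"id": "1", "participantId": "1", "text": utterance}
        },
        "parameters": {"projectName": project_name, "deploymentName": deployment_name},
    }


def analyze_with_sdk(endpoint: str, key: str, task: dict) -> dict:
    """Send the task with the client library and return the JSON result."""
    # Imported inside the function so build_task works without the package installed.
    from azure.ai.language.conversations import ConversationAnalysisClient
    from azure.core.credentials import AzureKeyCredential

    client = ConversationAnalysisClient(endpoint, AzureKeyCredential(key))
    # The client is a context manager; leaving the block closes its HTTP session.
    with client:
        return client.analyze_conversation(task=task)
```

For example, analyze_with_sdk(endpoint, key, build_task("Read Matt's email", "myProject", "staging")) returns a dictionary shaped like the REST response body, so result["result"]["prediction"]["topIntent"] holds the top intent.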

For more information, see the following reference documentation: