Note

Access to this page requires authorization. You can try signing in or changing directories.

Access to this page requires authorization. You can try changing directories.

In this overview, you learn about the benefits and capabilities of the text to speech feature of the Speech service, which is part of Azure AI services.

Text to speech enables your applications, tools, or devices to convert text into human like synthesized speech. The text to speech capability is also known as speech synthesis. Use human like standard voices out of the box. For a full list of supported voices, languages, and locales, see Language and voice support for the Speech service.

The Speech service provides standard (neural) voices options:

- Standard voices: High-quality neural voices available out of the box in 100+ languages and locales

For a complete list of available voices and languages, see Language and voice support.

Get started

For more comprehensive tutorials and examples:

- Text to speech quickstart - Complete tutorial with multiple languages

- Speech SDK documentation - Full SDK reference and samples

- REST API reference - HTTP-based integration

- Speech CLI - Command-line tools

Tip

To convert text to speech with a no-code approach, try the Audio Content Creation tool in Speech Studio.

Neural text to speech features

Text to speech uses deep neural networks to make computer voices nearly indistinguishable from human recordings. With clear articulation, neural text to speech reduces listening fatigue during AI interactions.

Key features

| Feature | Summary | Demo |

|---|---|---|

| Standard voice (called Neural on the pricing page) | Highly natural out-of-the-box voices. Create an Azure subscription and Speech resource, and then use the Speech SDK or visit the Speech Studio portal and select standard voices to get started. Check the pricing details. | Check the Voice Gallery and determine the right voice for your business needs. |

Advanced features

- Real-time speech synthesis: Use the Speech SDK or REST API to convert text to speech by using standard voices.

Standard voices: Azure Speech uses deep neural networks to overcome the limits of traditional speech synthesis regarding stress and intonation in spoken language. Prosody prediction and voice synthesis happen simultaneously, which results in more fluid and natural-sounding outputs. Each standard voice model is available at 24 kHz and high-fidelity 48 kHz. You can use neural voices to:

- Make interactions with chatbots and voice assistants more natural and engaging.

- Convert digital texts such as e-books into audiobooks.

- Enhance in-car navigation systems.

For a full list of standard Azure Speech neural voices, see Language and voice support for the Speech service.

Improve text to speech output with SSML: Speech Synthesis Markup Language (SSML) is an XML-based markup language used to customize text to speech outputs. With SSML, you can adjust pitch, add pauses, improve pronunciation, change speaking rate, adjust volume, and attribute multiple voices to a single document.

You can use SSML to define your own lexicons or switch to different speaking styles. With the multilingual voices, you can also adjust the speaking languages via SSML. To improve the voice output for your scenario, see Improve synthesis with Speech Synthesis Markup Language and Speech synthesis with the Audio Content Creation tool.

Visemes: Visemes are the key poses in observed speech, including the position of the lips, jaw, and tongue in producing a particular phoneme. Visemes have a strong correlation with voices and phonemes.

By using viseme events in Speech SDK, you can generate facial animation data. This data can be used to animate faces in lip-reading communication, education, entertainment, and customer service. Viseme is currently supported only for the

en-US(US English) neural voices.

Sample code

Sample code for text to speech is available on GitHub. These samples cover text to speech conversion in most popular programming languages:

Pricing note

Billable characters

When you use the text to speech feature, billing is based on the total number of characters in each successfully processed request. This count includes all characters/letters, numbers, spaces, and punctuation; regardless of whether audio output is produced. Charges apply even if speech is not generated due to a mismatch between the selected voice language and the input text. Here's a list of what's billable:

- Text passed to the text to speech feature in the SSML body of the request

- All markup within the text field of the request body in the SSML format, except for

<speak>and<voice>tags - Letters, punctuation, spaces, tabs, markup, and all white-space characters

- Every code point defined in Unicode

For detailed information, see Speech service pricing.

Important

Each Chinese character is counted as two characters for billing, including kanji used in Japanese, hanja used in Korean, or hanzi used in other languages.

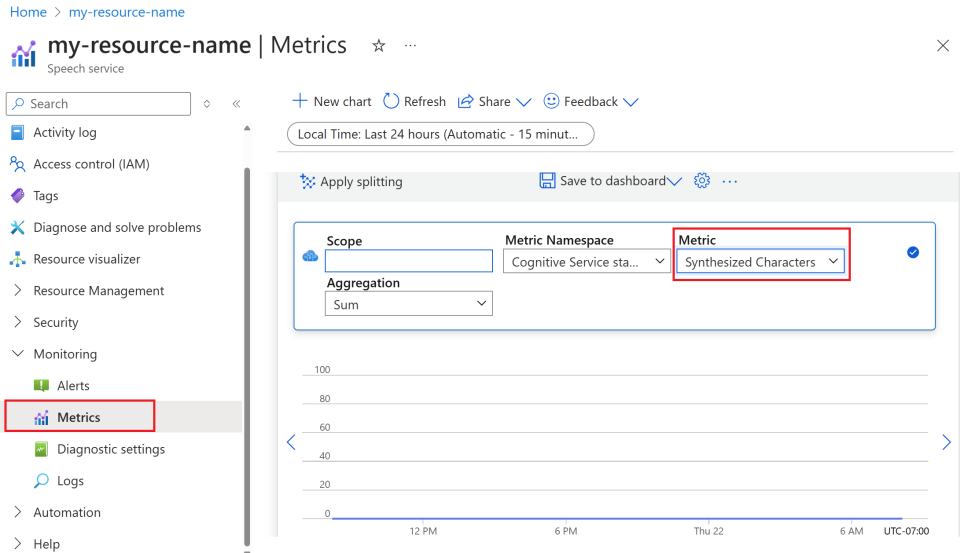

Monitor Azure text to speech metrics

Monitoring key metrics associated with text to speech services is crucial for managing resource usage and controlling costs. This section guides you on how to find usage information in the Azure portal and provide detailed definitions of the key metrics. For more information on Azure monitor metrics, see Azure Monitor Metrics overview.

How to find usage information in the Azure portal

To effectively manage your Azure resources, it's essential to access and review usage information regularly. Here's how to find the usage information:

Go to the Azure portal and sign in with your Azure account.

Navigate to Resources and select your resource you wish to monitor.

Select Metrics under Monitoring from the left-hand menu.

Customize metric views.

You can filter data by resource type, metric type, time range, and other parameters to create custom views that align with your monitoring needs. Additionally, you can save the metric view to dashboards by selecting Save to dashboard for easy access to frequently used metrics.

Set up alerts.

To manage usage more effectively, set up alerts by navigating to the Alerts tab under Monitoring from the left-hand menu. Alerts can notify you when your usage reaches specific thresholds, helping to prevent unexpected costs.

Definition of metrics

Here's a table summarizing the key metrics for Azure text to speech.

| Metric name | Description |

|---|---|

| Synthesized Characters | Tracks the number of characters converted into speech, including standard voice and custom voice. For details on billable characters, see Billable characters. |

| Voice Model Hosting Hours | Tracks the total time in hours that your custom voice model is hosted. |

| Voice Model Training Minutes | Measures the total time in minutes for training your custom voice model. |