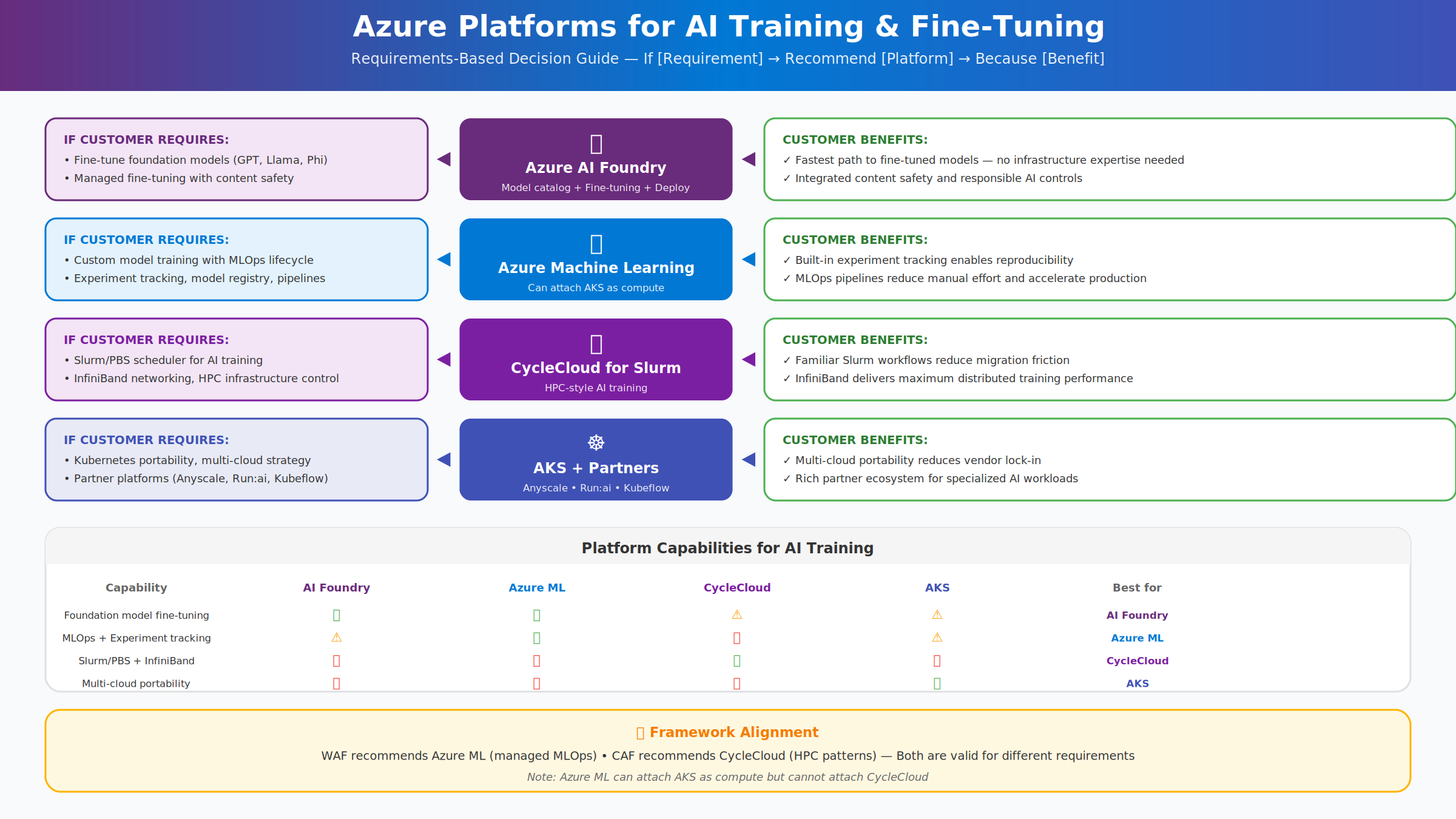

Azure provides multiple platforms for training, fine-tuning, and deploying AI models. Each platform addresses different requirements, such as full MLOps lifecycle management or Kubernetes-native portability.

While the Azure Well-Architected Framework (WAF) commonly recommends Azure Machine Learning for managed training and MLOps, other platforms such as Azure Kubernetes Service (AKS) are also valid and recommended depending on the scenario.

The platforms overlap in capability, which can create confusion. Rather than prescribing a single correct platform, this guide focuses on decision clarity: it helps you choose the appropriate Azure platform for AI workloads based on workload type, operational model, and customer requirements.

Architecture and platform options for AI training and fine-tuning

Azure Machine Learning

Azure Machine Learning is recommended when you need custom model training with full MLOps lifecycle management.

It supports experiment tracking for reproducibility, automated training and deployment pipelines to reduce manual effort, and the flexibility to train any model architecture beyond foundation model fine-tuning.

Azure ML also provides model versioning, registration, and governance through its model registry, which supports compliance and audit needs. Managed compute lets data scientists focus on modeling rather than infrastructure. Azure ML can also attach AKS clusters as compute targets, combining Kubernetes-based training with Azure ML's orchestration and tracking.

AKS with partner solutions

AKS with partner solutions is recommended when you need a Kubernetes‑native AI platform with multicloud portability or access to a rich partner ecosystem.

AKS enables consistent AI training and deployment across Azure, on‑premises, and other clouds, while supporting any custom or emerging ML framework through container‑native workflows.

Partner integrations add significant value on AKS. Anyscale simplifies Ray‑based distributed training at scale while Run:ai optimizes GPU scheduling and utilization to help reduce costs. Kubeflow provides open‑source ML pipelines that avoid proprietary lock‑in. Volcano enables HPC‑style batch scheduling on Kubernetes.

Consider alternatives in two cases. If you want integrated experiment tracking and MLOps pipelines, Azure Machine Learning may be a better fit. If InfiniBand networking is required, Azure CycleCloud is preferred.

Comparing platforms for AI training

The following table compares Azure platforms commonly used for AI training and deployment, highlighting how each aligns with different workload requirements, operational models, and infrastructure preferences.

| Capability | Azure ML | AKS |
|---|---|---|
| Foundation model fine-tuning | Supported | Requires custom setup |
| Custom model training | Natively supported | Fully supported |
| Experiment tracking | Built-in | Supported via MLflow |
| MLOps pipelines | Native pipelines | Supported via Kubeflow |
| Slurm/PBS scheduler | Not supported | Not supported |
| InfiniBand networking | Limited support | Configurable |
| Kubernetes-native | No | Yes |
| Multi-cloud portable | No | Yes |
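
The comparison can also be read as data. The following Python sketch mirrors the table in a dictionary and filters platforms by the capabilities a workload needs. The function name `platforms_supporting` and the rule that the values "Not supported" and "No" count as unmet are assumptions made for illustration; nothing here is part of an Azure SDK.

```python
# Capability matrix mirroring the comparison table.
CAPABILITIES = {
    "Foundation model fine-tuning": {"Azure ML": "Supported", "AKS": "Requires custom setup"},
    "Custom model training": {"Azure ML": "Natively supported", "AKS": "Fully supported"},
    "Experiment tracking": {"Azure ML": "Built-in", "AKS": "Supported via MLflow"},
    "MLOps pipelines": {"Azure ML": "Native pipelines", "AKS": "Supported via Kubeflow"},
    "Slurm/PBS scheduler": {"Azure ML": "Not supported", "AKS": "Not supported"},
    "InfiniBand networking": {"Azure ML": "Limited support", "AKS": "Configurable"},
    "Kubernetes-native": {"Azure ML": "No", "AKS": "Yes"},
    "Multi-cloud portable": {"Azure ML": "No", "AKS": "Yes"},
}

# Assumption for this sketch: these values mean the capability is unavailable.
UNMET = {"Not supported", "No"}


def platforms_supporting(required):
    """Return the platforms whose entry for every required capability is not in UNMET."""
    platforms = ["Azure ML", "AKS"]
    return [
        p for p in platforms
        if all(CAPABILITIES[c][p] not in UNMET for c in required)
    ]
```

For example, `platforms_supporting(["Kubernetes-native", "Multi-cloud portable"])` returns `["AKS"]`, while requiring `"Slurm/PBS scheduler"` returns an empty list because neither platform in the table supports it. Entries such as "Requires custom setup" or "Limited support" pass the filter, so a real evaluation would still need to weigh the effort they imply.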

Decision guide

Custom machine learning with full MLOps

Teams building and training custom machine learning models benefit from Azure Machine Learning, which supports experiment tracking, pipeline automation, and model lifecycle management. When combined with Azure Kubernetes Service (AKS) for inference, this approach enables scalable, production‑ready deployments while maintaining strong MLOps practices.

Portable and Kubernetes‑native AI platforms

Organizations prioritizing portability and platform consistency across environments can adopt AKS with partner solutions such as Anyscale or GPU scheduling platforms. This Kubernetes‑native approach supports multi‑cloud strategies and ecosystem flexibility, but teams take on more operational responsibility than they would with fully managed services.

Important

Azure Machine Learning can attach AKS as compute, but can't attach Azure CycleCloud. When you need both Azure ML capabilities and Slurm scheduling, design architectures that use data integration between separate platforms.
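
The decision guide can be summarized as a small set of rules. The sketch below is one possible encoding, not an official selection tool: the `Workload` flags and the `recommend` function are hypothetical names introduced here, and the Slurm rule assumes Azure CycleCloud is the platform that provides the scheduler, kept separate from Azure ML as the note above describes.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    # Hypothetical requirement flags, named for this sketch only.
    needs_full_mlops: bool = False
    needs_k8s_portability: bool = False
    needs_slurm: bool = False


def recommend(workload):
    """Map requirement flags to the platforms suggested in this guide."""
    platforms = []
    if workload.needs_full_mlops:
        # Custom model training with experiment tracking and pipelines.
        platforms.append("Azure Machine Learning")
    if workload.needs_k8s_portability:
        # Kubernetes-native, multicloud-portable training and serving.
        platforms.append("AKS with partner solutions")
    if workload.needs_slurm:
        # Azure ML can't attach Azure CycleCloud, so it stays a separate
        # platform connected through data integration (assumption: CycleCloud
        # supplies the Slurm scheduler).
        platforms.append("Azure CycleCloud (separate platform, data integration)")
    return platforms
```

Calling `recommend(Workload(needs_full_mlops=True, needs_slurm=True))` returns both Azure Machine Learning and Azure CycleCloud as separate entries, reflecting that the two must be integrated through data rather than attached as compute.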

Multi-platform architectures

For complex implementations and enterprise architectures, you can combine platforms.

- For custom machine learning workloads that require Kubernetes-based serving, Azure Machine Learning handles training and MLOps while AKS provides scalable, production‑grade inference.

- Organizations pursuing a multi‑cloud AI strategy can use AKS with Anyscale for training and AKS for inference, enabling a portable, Kubernetes‑native architecture across environments.

Platform decisions are trade‑offs, not absolutes, and should be guided by workload demands, scale requirements, and the maturity of the teams supporting them.