To enable your Azure Kubernetes Service (AKS) or Azure Arc-enabled Kubernetes cluster to run training jobs or inference workloads, first deploy the Azure Machine Learning extension. The extension is a standard cluster extension for AKS and a cluster extension for Azure Arc-enabled Kubernetes. You can manage its lifecycle by using the Azure CLI k8s-extension extension.

In this article, you learn about:

- Prerequisites

- Limitations

- Review Azure Machine Learning extension config settings

- Azure Machine Learning extension deployment scenarios

- Verify Azure Machine Learning extension deployment

- Review Azure Machine Learning extension components

- Manage Azure Machine Learning extension

Prerequisites

- An AKS cluster running in Azure. If you didn't previously use cluster extensions, you need to register the KubernetesConfiguration service provider.

- Or an Azure Arc-enabled Kubernetes cluster that's up and running. Follow the instructions in connect existing Kubernetes cluster to Azure Arc.

- If the cluster is an Azure Red Hat OpenShift (ARO) cluster or an OpenShift Container Platform (OCP) cluster, you must complete the additional prerequisite steps documented in the Reference for configuring Kubernetes cluster article.

- For production purposes, the Kubernetes cluster must have a minimum of 4 vCPU cores and 14 GB of memory. For more information on resource detail and cluster size recommendations, see Recommended resource planning.

- A cluster running behind an outbound proxy server or firewall needs extra network configurations.

- Install or upgrade Azure CLI to version 2.51.0 or higher.

- Install or upgrade the Azure CLI extension k8s-extension to version 1.2.3 or higher.
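The CLI-related prerequisites above can be checked with a short script. The following is a minimal sketch, not an official procedure: the function names version_ge and prepare_cli are hypothetical, and the version comparison assumes that sort -V (GNU version sort) is available on your machine.

```shell
#!/usr/bin/env bash
# Sketch: helpers for the CLI-related prerequisites (names are hypothetical).

# Succeeds when version $1 is greater than or equal to version $2.
# Assumes `sort -V` (version sort) exists in the local environment.
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# Checks the Azure CLI version, registers the resource provider,
# and installs or upgrades the k8s-extension CLI extension.
prepare_cli() {
  local cli_version
  cli_version="$(az version --query '"azure-cli"' --output tsv)"
  if ! version_ge "$cli_version" "2.51.0"; then
    echo "Azure CLI $cli_version is older than 2.51.0; upgrade it first." >&2
    return 1
  fi
  az provider register --namespace Microsoft.KubernetesConfiguration
  az extension add --name k8s-extension --upgrade
}

# Usage (requires a signed-in Azure CLI session):
#   prepare_cli
```

Provider registration can take a few minutes to complete, so treat a successful return from prepare_cli as "requested" and not as "finished".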

Limitations

- Azure Machine Learning doesn't support using a service principal with AKS. The AKS cluster must use a managed identity instead. Both system-assigned managed identity and user-assigned managed identity are supported. For more information, see Use a managed identity in Azure Kubernetes Service.

- When you convert your AKS cluster from using a service principal to using managed identity, you need to delete and recreate all node pools before installing the extension. You can't directly update the node pools.

- Azure Machine Learning doesn't support disabling local accounts for AKS. When you deploy the AKS cluster, local accounts are enabled by default.

- If your AKS cluster has an Authorized IP range enabled to access the API server, you must enable the Azure Machine Learning control plane IP ranges for the AKS cluster. The Azure Machine Learning control plane is deployed across paired regions. Without access to the API server, the machine learning pods can't be deployed. Use the IP ranges for both the paired regions when enabling the IP ranges in an AKS cluster.

- Azure Machine Learning doesn't support attaching an AKS cluster across subscriptions. If you have an AKS cluster in a different subscription, you must first connect it to Azure Arc, specifying the same subscription as your Azure Machine Learning workspace.

- Azure Machine Learning doesn't guarantee support for all preview stage features in AKS. For example, Microsoft Entra pod-managed identity (deprecated) isn't supported.

- If you followed the steps in the Azure Machine Learning AKS v1 document to create or attach your AKS as an inference cluster, use the following link to clean up the legacy azureml-fe related resources before you continue the next step.

Review Azure Machine Learning extension configuration settings

Use the Azure CLI command az k8s-extension create to deploy the Azure Machine Learning extension. The az k8s-extension create command accepts configuration settings as space-separated key=value pairs through the --config or --config-protected parameter. The following table lists the available configuration settings you can specify during deployment.

| Configuration Setting Key Name | Description | Training | Inference | Training and Inference |
|---|---|---|---|---|
| enableTraining | True or False, default False. Must be set to True for Azure Machine Learning extension deployment with Machine Learning model training and batch scoring support. | ✓ | N/A | ✓ |
| enableInference | True or False, default False. Must be set to True for Azure Machine Learning extension deployment with Machine Learning inference support. | N/A | ✓ | ✓ |
| allowInsecureConnections | True or False, default False. Can be set to True to use inference HTTP endpoints for development or test purposes. | N/A | Optional | Optional |
| inferenceRouterServiceType | loadBalancer, nodePort, or clusterIP. Required if enableInference=True. | N/A | ✓ | ✓ |
| internalLoadBalancerProvider | This config currently applies only to Azure Kubernetes Service (AKS) clusters. Set to azure to allow the inference router to use an internal load balancer. | N/A | Optional | Optional |
| sslSecret | The name of the Kubernetes secret in the azureml namespace. This config is used to store cert.pem (PEM-encoded TLS/SSL cert) and key.pem (PEM-encoded TLS/SSL key), which are required for inference HTTPS endpoint support when allowInsecureConnections is set to False. For a sample YAML definition of sslSecret, see Configure sslSecret. Use this config or a combination of the sslCertPemFile and sslKeyPemFile protected config settings. | N/A | Optional | Optional |
| sslCname | A TLS/SSL CNAME used by the inference HTTPS endpoint. Required if allowInsecureConnections=False. | N/A | Optional | Optional |
| inferenceRouterHA | True or False, default True. By default, the Azure Machine Learning extension deploys three inference router replicas for high availability, which requires at least three worker nodes in a cluster. Set to False if your cluster has fewer than three worker nodes; in this case, only one inference router service is deployed. | N/A | Optional | Optional |
| nodeSelector | By default, the deployed Kubernetes resources and your machine learning workloads are randomly deployed to one or more nodes of the cluster, and DaemonSet resources are deployed to all nodes. To restrict the extension deployment and your training/inference workloads to specific nodes with labels key1=value1 and key2=value2, use nodeSelector.key1=value1 and nodeSelector.key2=value2 correspondingly. | Optional | Optional | Optional |
| installNvidiaDevicePlugin | True or False, default False. The NVIDIA Device Plugin is required for ML workloads on NVIDIA GPU hardware. By default, the Azure Machine Learning extension deployment doesn't install the NVIDIA Device Plugin, regardless of whether the Kubernetes cluster has GPU hardware. You can set this setting to True to install it, but make sure to fulfill Prerequisites. | Optional | Optional | Optional |
| installPromOp | True or False, default True. The Azure Machine Learning extension needs the Prometheus operator to manage Prometheus. Set to False to reuse an existing Prometheus operator. For more information about reusing the existing Prometheus operator, see reusing the prometheus operator. | Optional | Optional | Optional |
| installVolcano | True or False, default True. The Azure Machine Learning extension needs the Volcano scheduler to schedule jobs. Set to False to reuse an existing Volcano scheduler. For more information about reusing the existing Volcano scheduler, see reusing volcano scheduler. | Optional | N/A | Optional |
| installDcgmExporter | True or False, default False. dcgm-exporter can expose GPU metrics for Azure Machine Learning workloads, which can be monitored in the Azure portal. Set installDcgmExporter to True to install dcgm-exporter. If you want to use your own dcgm-exporter instead, see DCGM exporter. | Optional | Optional | Optional |

| Configuration Protected Setting Key Name | Description | Training | Inference | Training and Inference |
|---|---|---|---|---|
| sslCertPemFile, sslKeyPemFile | Paths to the TLS/SSL certificate and key files (PEM-encoded). Required for Azure Machine Learning extension deployment with inference HTTPS endpoint support when allowInsecureConnections is set to False. Passphrase-protected PEM files aren't supported. | N/A | Optional | Optional |

As you can see from the configuration settings table, the combinations of different configuration settings allow you to deploy Azure Machine Learning extension for different ML workload scenarios:

- For training job and batch inference workload, specify enableTraining=True

- For inference workload only, specify enableInference=True

- For all kinds of ML workload, specify both enableTraining=True and enableInference=True

If you plan to deploy the Azure Machine Learning extension for real-time inference workloads and specify enableInference=True, pay attention to the following configuration settings related to real-time inference:

- The azureml-fe router service is required for real-time inference support, and you need to specify the inferenceRouterServiceType config setting for azureml-fe. azureml-fe can be deployed with one of the following inferenceRouterServiceType values:

  - Type loadBalancer. Exposes azureml-fe externally using a cloud provider's load balancer. To specify this value, ensure that your cluster supports load balancer provisioning. Note that most on-premises Kubernetes clusters might not support an external load balancer.

  - Type nodePort. Exposes azureml-fe on each node's IP at a static port. You can contact azureml-fe from outside the cluster by requesting <NodeIP>:<NodePort>. Using nodePort also allows you to set up your own load balancing solution and TLS/SSL termination for azureml-fe. For more information on how to set up your own ingress, see Integrate other ingress controller with Azure Machine Learning extension over HTTP or HTTPS.

  - Type clusterIP. Exposes azureml-fe on a cluster-internal IP, which makes azureml-fe reachable only from within the cluster. For azureml-fe to serve inference requests coming from outside the cluster, you need to set up your own load balancing solution and TLS/SSL termination for azureml-fe. For more information on how to set up your own ingress, see Integrate other ingress controller with Azure Machine Learning extension over HTTP or HTTPS.

- To ensure high availability of the azureml-fe routing service, the Azure Machine Learning extension deployment by default creates three replicas of azureml-fe for clusters that have three or more nodes. If your cluster has fewer than three nodes, set inferenceRouterHA=False.

- You also want to consider using HTTPS to restrict access to model endpoints and secure the data that clients submit. For this purpose, you need to specify either the sslSecret config setting or a combination of the sslKeyPemFile and sslCertPemFile config-protected settings.

- By default, the Azure Machine Learning extension deployment expects config settings for HTTPS support. For development or testing purposes, HTTP support is provided through the config setting allowInsecureConnections=True.
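The rules above for inferenceRouterHA and allowInsecureConnections can be expressed as a small helper that assembles the --config values. This is an illustrative sketch only: build_inference_config is a hypothetical function name, loadBalancer is picked arbitrarily as the service type, and for HTTPS you still need to append sslCname plus either sslSecret or the protected PEM settings yourself.

```shell
#!/usr/bin/env bash
# Sketch: print --config values for an inference-enabled deployment.
# $1: number of worker nodes in the cluster
# $2: "http" for development or test endpoints; any other value means HTTPS
build_inference_config() {
  local node_count="$1"
  local mode="$2"
  local config="enableInference=True inferenceRouterServiceType=loadBalancer"

  # Three router replicas need at least three nodes; otherwise turn HA off.
  if [ "$node_count" -lt 3 ]; then
    config="$config inferenceRouterHA=False"
  fi

  # HTTP is only intended for development or testing.
  if [ "$mode" = "http" ]; then
    config="$config allowInsecureConnections=True"
  fi

  printf '%s\n' "$config"
}

# Usage (assumes kubectl points at the target cluster):
#   nodes="$(kubectl get nodes --no-headers | wc -l)"
#   az k8s-extension create ... --config $(build_inference_config "$nodes" http) ...
# The command substitution is left unquoted on purpose so that each
# key=value pair is passed as a separate argument.
```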

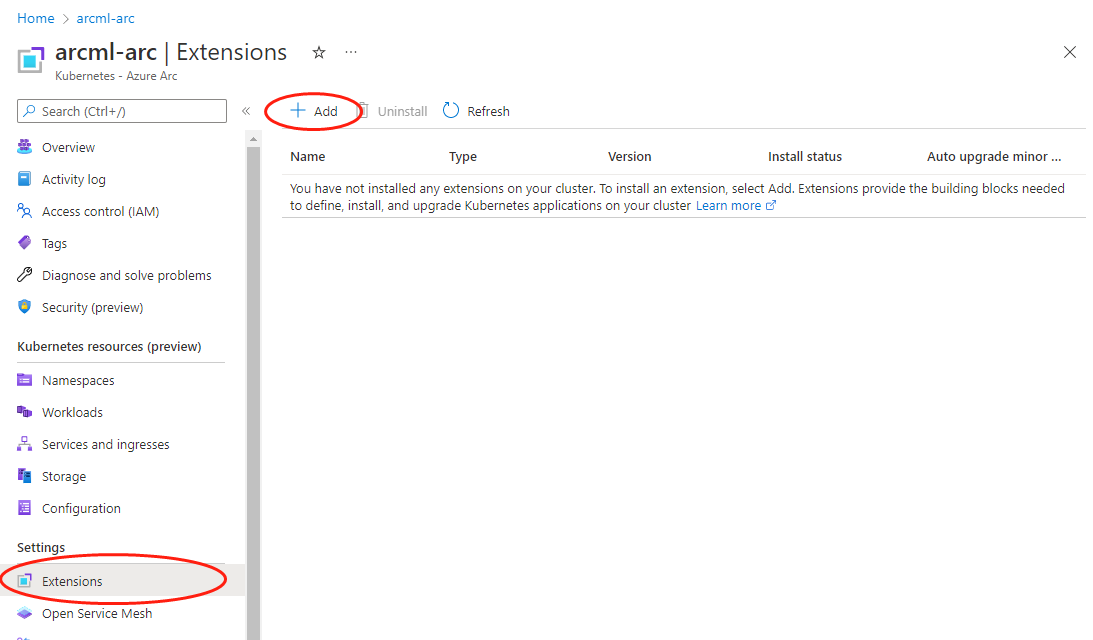

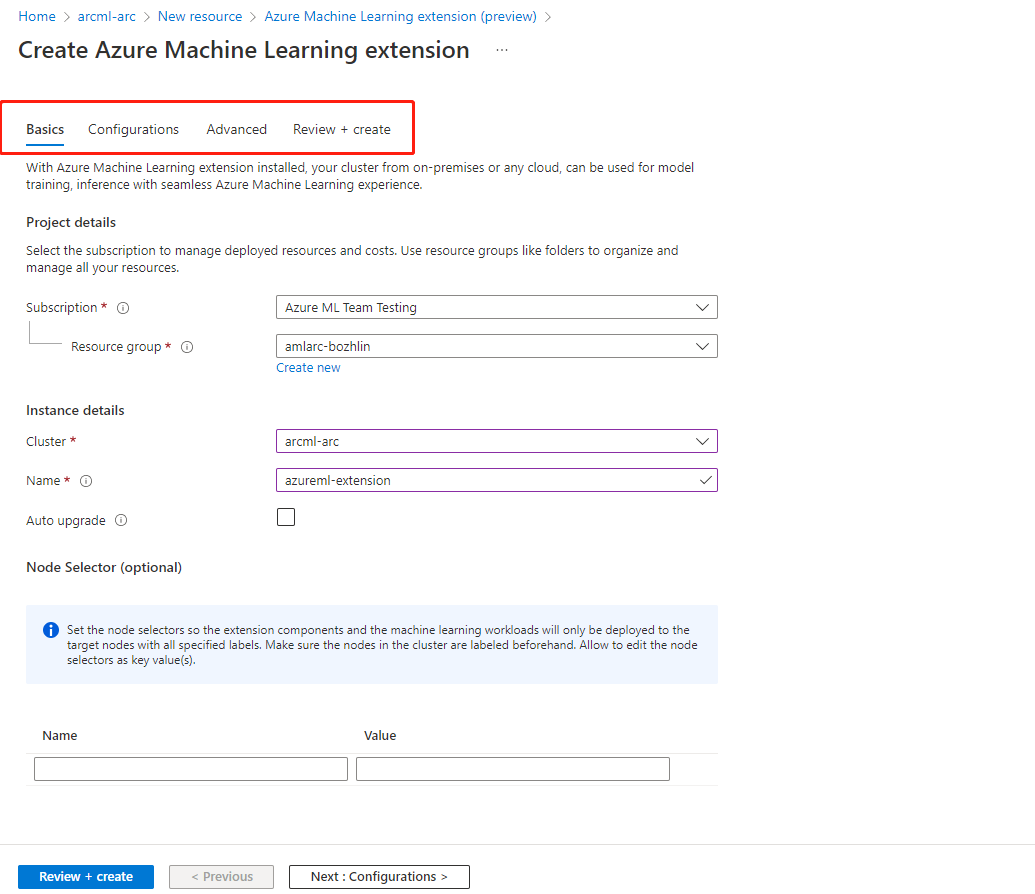

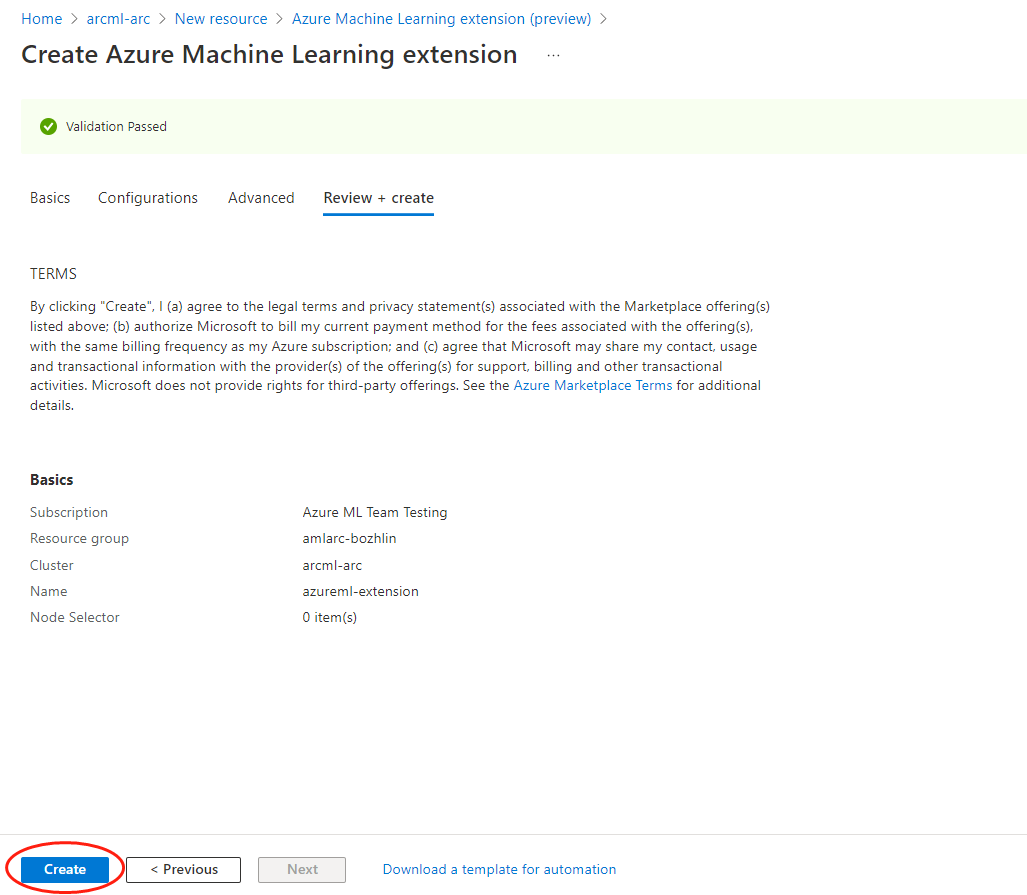

Azure Machine Learning extension deployment - CLI examples and Azure portal

To deploy the Azure Machine Learning extension by using CLI, use the az k8s-extension create command and provide values for the mandatory parameters.

The following list describes four typical extension deployment scenarios. To deploy the extension for your production usage, carefully read the complete list of configuration settings.

- Use an AKS cluster in Azure for a quick proof of concept to run all kinds of ML workload, for example, to run training jobs or to deploy models as online/batch endpoints

  For Azure Machine Learning extension deployment on an AKS cluster, specify the managedClusters value for the --cluster-type parameter. Run the following Azure CLI command to deploy the Azure Machine Learning extension:

  az k8s-extension create --name <extension-name> --extension-type Microsoft.AzureML.Kubernetes --config enableTraining=True enableInference=True inferenceRouterServiceType=loadBalancer allowInsecureConnections=True inferenceRouterHA=False --cluster-type managedClusters --cluster-name <your-AKS-cluster-name> --resource-group <your-RG-name> --scope cluster

- Use an Azure Arc-enabled Kubernetes cluster outside of Azure for a quick proof of concept, to run training jobs only

  For Azure Machine Learning extension deployment on an Azure Arc-enabled Kubernetes cluster, specify the connectedClusters value for the --cluster-type parameter. Run the following Azure CLI command to deploy the Azure Machine Learning extension:

  az k8s-extension create --name <extension-name> --extension-type Microsoft.AzureML.Kubernetes --config enableTraining=True --cluster-type connectedClusters --cluster-name <your-connected-cluster-name> --resource-group <your-RG-name> --scope cluster

- Enable an AKS cluster in Azure for production training and inference workload

  For Azure Machine Learning extension deployment on AKS, specify the managedClusters value for the --cluster-type parameter. Assuming your cluster has at least three nodes and you use an Azure public load balancer and HTTPS for inference workload support, run the following Azure CLI command to deploy the Azure Machine Learning extension:

  az k8s-extension create --name <extension-name> --extension-type Microsoft.AzureML.Kubernetes --config enableTraining=True enableInference=True inferenceRouterServiceType=loadBalancer sslCname=<ssl cname> --config-protected sslCertPemFile=<file-path-to-cert-PEM> sslKeyPemFile=<file-path-to-cert-KEY> --cluster-type managedClusters --cluster-name <your-AKS-cluster-name> --resource-group <your-RG-name> --scope cluster

- Enable an Azure Arc-enabled Kubernetes cluster anywhere for production training and inference workload using NVIDIA GPUs

  For Azure Machine Learning extension deployment on an Azure Arc-enabled Kubernetes cluster, specify the connectedClusters value for the --cluster-type parameter. Assuming your cluster has at least three nodes and you use a NodePort service type and HTTPS for inference workload support, run the following Azure CLI command to deploy the Azure Machine Learning extension:

  az k8s-extension create --name <extension-name> --extension-type Microsoft.AzureML.Kubernetes --config enableTraining=True enableInference=True inferenceRouterServiceType=nodePort sslCname=<ssl cname> installNvidiaDevicePlugin=True installDcgmExporter=True --config-protected sslCertPemFile=<file-path-to-cert-PEM> sslKeyPemFile=<file-path-to-cert-KEY> --cluster-type connectedClusters --cluster-name <your-connected-cluster-name> --resource-group <your-RG-name> --scope cluster

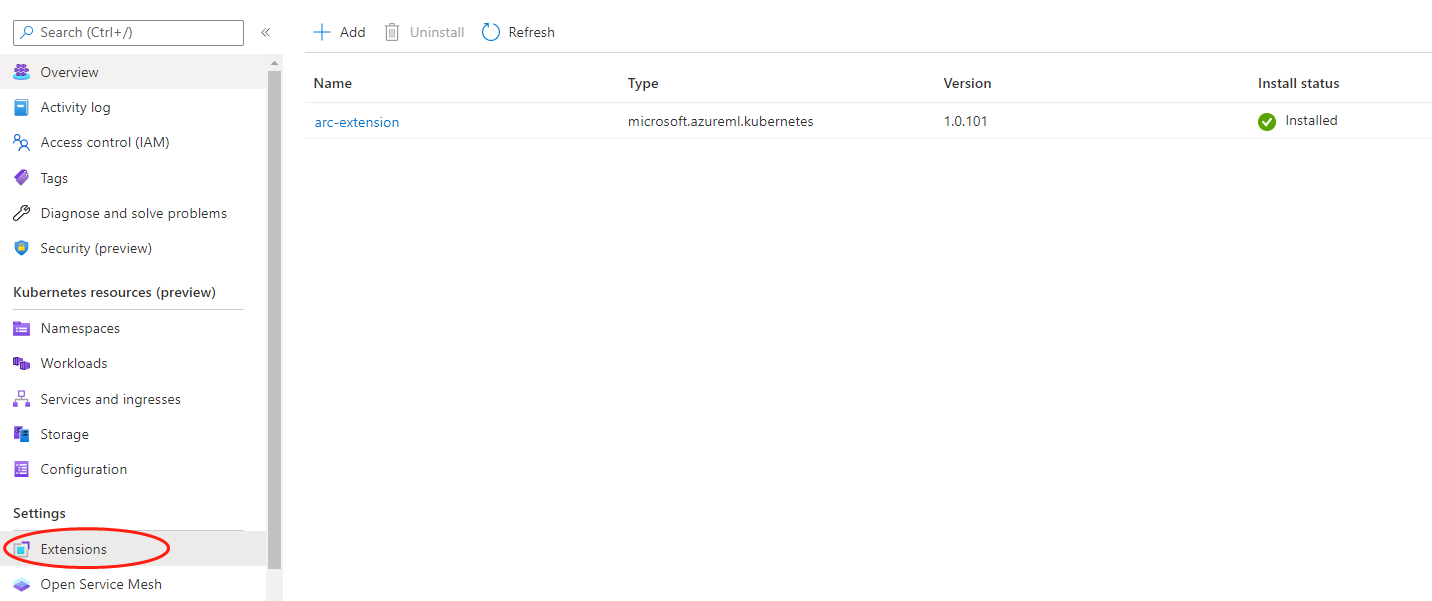

Verify Azure Machine Learning extension deployment

Run the applicable CLI command to check the Azure Machine Learning extension details:

AKS

az k8s-extension show --name <extension-name> --cluster-type managedClusters --cluster-name <your-AKS-cluster-name> --resource-group <resource-group>

Azure Arc-enabled Kubernetes

az k8s-extension show --name <extension-name> --cluster-type connectedClusters --cluster-name <your-connected-cluster-name> --resource-group <resource-group>

In the response, look for "name" and "provisioningState": "Succeeded". It might show "provisioningState": "Pending" for the first few minutes.

If the provisioningState shows Succeeded, run the following command on your machine, with the kubeconfig file pointed to your cluster, to check that all pods under the azureml namespace are in the Running state:

kubectl get pods -n azureml
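Because the provisioning state can stay at Pending for a few minutes, the check is often wrapped in a polling loop. The following is a rough sketch under stated assumptions: get_extension_state and wait_for_extension are hypothetical helper names, the retry count and delay are arbitrary defaults, and treating Failed as a terminal state is an assumption that isn't stated in this article.

```shell
#!/usr/bin/env bash
# Sketch: poll the extension until provisioning finishes.

# Prints the provisioningState of the extension.
# $1: extension name, $2: cluster type, $3: cluster name, $4: resource group
get_extension_state() {
  az k8s-extension show --name "$1" --cluster-type "$2" \
    --cluster-name "$3" --resource-group "$4" \
    --query provisioningState --output tsv
}

# Polls until the state is Succeeded (returns 0), Failed (returns 1),
# or the attempts run out (returns 1).
# $1-$4: same as get_extension_state
# $5: maximum attempts (default 30), $6: delay in seconds (default 20)
wait_for_extension() {
  local attempts="${5:-30}"
  local delay="${6:-20}"
  local state
  local i=1
  while [ "$i" -le "$attempts" ]; do
    state="$(get_extension_state "$1" "$2" "$3" "$4")"
    echo "provisioningState: $state"
    case "$state" in
      Succeeded) return 0 ;;
      Failed) return 1 ;;  # assumed terminal state
    esac
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}

# Usage:
#   wait_for_extension <extension-name> managedClusters <your-AKS-cluster-name> <resource-group> \
#     && kubectl get pods -n azureml
```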

Review Azure Machine Learning extension components

When the Azure Machine Learning extension deployment finishes, use kubectl get deployments -n azureml to see the list of resources created in the cluster. The list usually consists of a subset of the following resources, depending on the configuration settings you specify.

| Resource name | Resource type | Training | Inference | Training and Inference | Description | Communication with cloud |
|---|---|---|---|---|---|---|
| relayserver | Kubernetes deployment | ✓ | ✓ | ✓ | The deployment creates the relay server only for Azure Arc-enabled Kubernetes clusters, and not in AKS clusters. Relay server works with Azure Relay to communicate with the cloud services. | Receive the request of job creation, model deployment from cloud service; sync the job status with cloud service. |
| gateway | Kubernetes deployment | ✓ | ✓ | ✓ | The gateway is used to communicate and send data back and forth. | Send nodes and cluster resource information to cloud services. |
| aml-operator | Kubernetes deployment | ✓ | N/A | ✓ | Manage the lifecycle of training jobs. | Token exchange with the cloud token service for authentication and authorization of Azure Container Registry. |
| metrics-controller-manager | Kubernetes deployment | ✓ | ✓ | ✓ | Manage the configuration for Prometheus. | N/A |
| {EXTENSION-NAME}-kube-state-metrics | Kubernetes deployment | ✓ | ✓ | ✓ | Export the cluster-related metrics to Prometheus. | N/A |
| {EXTENSION-NAME}-prometheus-operator | Kubernetes deployment | Optional | Optional | Optional | Provide Kubernetes native deployment and management of Prometheus and related monitoring components. | N/A |
| amlarc-identity-controller | Kubernetes deployment | N/A | ✓ | ✓ | Request and renew Azure Blob/Azure Container Registry token through managed identity. | Token exchange with the cloud token service for authentication and authorization of Azure Container Registry and Azure Blob used by inference/model deployment. |
| amlarc-identity-proxy | Kubernetes deployment | N/A | ✓ | ✓ | Request and renew Azure Blob/Azure Container Registry token through managed identity. | Token exchange with the cloud token service for authentication and authorization of Azure Container Registry and Azure Blob used by inference/model deployment. |
| azureml-fe-v2 | Kubernetes deployment | N/A | ✓ | ✓ | The front-end component that routes incoming inference requests to deployed services. | Send service logs to Azure Blob. |
| inference-operator-controller-manager | Kubernetes deployment | N/A | ✓ | ✓ | Manage the lifecycle of inference endpoints. | N/A |
| volcano-admission | Kubernetes deployment | Optional | N/A | Optional | Volcano admission webhook. | N/A |
| volcano-controllers | Kubernetes deployment | Optional | N/A | Optional | Manage the lifecycle of Azure Machine Learning training job pods. | N/A |
| volcano-scheduler | Kubernetes deployment | Optional | N/A | Optional | Used to perform in-cluster job scheduling. | N/A |
| fluent-bit | Kubernetes daemonset | ✓ | ✓ | ✓ | Gather the components' system log. | Upload the components' system log to cloud. |
| {EXTENSION-NAME}-dcgm-exporter | Kubernetes daemonset | Optional | Optional | Optional | dcgm-exporter exposes GPU metrics for Prometheus. | N/A |
| nvidia-device-plugin-daemonset | Kubernetes daemonset | Optional | Optional | Optional | nvidia-device-plugin-daemonset exposes GPUs on each node of your cluster. | N/A |
| prometheus-prom-prometheus | Kubernetes statefulset | ✓ | ✓ | ✓ | Gather and send job metrics to cloud. | Send job metrics like cpu/gpu/memory utilization to cloud. |

Important

- The Azure Relay resource is in the same resource group as the Arc cluster resource. It's used to communicate with the Kubernetes cluster. Modifying it breaks attached compute targets.

- By default, the deployment resources are randomly deployed to one or more nodes of the cluster, and daemonset resources are deployed to all nodes. To restrict the extension deployment to specific nodes, use the nodeSelector configuration setting described in the configuration settings table.

Note

- {EXTENSION-NAME} is the extension name you specify by using the --name parameter of the az k8s-extension create CLI command.