Important

On September 30, 2025, Basic Load Balancer will be retired. If you are currently using Basic Load Balancer, make sure to upgrade to Standard Load Balancer prior to the retirement date. For guidance on upgrading, visit Upgrading from Basic Load Balancer - Guidance.

Azure Load Balancer is a cloud service that distributes incoming network traffic across backend virtual machines (VMs) or virtual machine scale sets (VMSS). This article explains Azure Load Balancer's key features, architecture, and scenarios, helping you decide if it fits your organization's load balancing needs for scalable, highly available workloads.

Load balancing refers to efficiently distributing incoming network traffic across a group of backend VMs or virtual machine scale sets.

Load balancer overview

Azure Load Balancer operates at layer 4 of the Open Systems Interconnection (OSI) model. It's the single point of contact for clients. The service distributes inbound flows that arrive at the load balancer's frontend to backend pool instances, according to configured load-balancing rules and health probes. The backend pool instances can be Azure VMs or virtual machine scale sets.

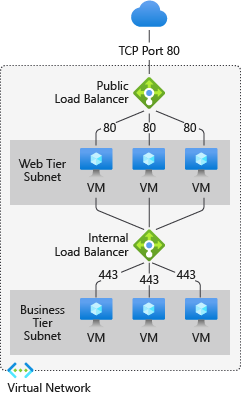
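
As a rough illustration of how a layer-4 load balancer can map inbound flows to healthy backend pool instances, the following Python sketch hashes a flow's five-tuple and picks one of the instances that currently pass their health probe. The `Flow` type, the `pick_backend` helper, the instance names, and the use of SHA-256 are assumptions made for illustration; this isn't Azure Load Balancer's actual implementation.

```python
import hashlib
from typing import NamedTuple, Optional


class Flow(NamedTuple):
    """Five-tuple that identifies a flow (hypothetical type for this sketch)."""
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    protocol: str  # "TCP" or "UDP"


def pick_backend(flow: Flow, backends: dict[str, bool]) -> Optional[str]:
    """Return a healthy backend for the flow, or None if none is healthy.

    `backends` maps an instance name to its current health probe status.
    """
    healthy = sorted(name for name, is_up in backends.items() if is_up)
    if not healthy:
        return None
    # Hash the whole five-tuple so packets of one flow map to one instance.
    key = "|".join(map(str, flow)).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(healthy)
    return healthy[index]


pool = {"vm-1": True, "vm-2": True, "vm-3": False}
flow = Flow("203.0.113.7", 50123, "198.51.100.10", 443, "TCP")
chosen = pick_backend(flow, pool)
```

Because the choice depends only on the five-tuple and the set of healthy instances, repeated packets of the same flow land on the same instance as long as that set doesn't change.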

A public load balancer can provide both inbound and outbound connectivity for the VMs inside your virtual network. For inbound traffic scenarios, Azure Load Balancer can load balance internet traffic to your VMs. For outbound traffic scenarios, the service can translate the VMs' private IP addresses to public IP addresses for any outbound connections that originate from your VMs.
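
The outbound translation described above can be pictured as a source network address translation (SNAT) table. The Python sketch below is a simplified model, not the service's real behavior: the `SnatTable` class, its port range, and the example addresses are all hypothetical.

```python
from typing import Dict, Tuple


class SnatTable:
    """Toy SNAT table: private (ip, port) -> port on one public IP."""

    def __init__(self, public_ip: str, first_port: int = 1024,
                 last_port: int = 65535) -> None:
        self.public_ip = public_ip
        self._next_port = first_port
        self._last_port = last_port
        self._out: Dict[Tuple[str, int], int] = {}
        self._back: Dict[int, Tuple[str, int]] = {}

    def translate_outbound(self, private_ip: str,
                           private_port: int) -> Tuple[str, int]:
        """Map a VM's private source address to the public IP and a port."""
        key = (private_ip, private_port)
        if key not in self._out:
            if self._next_port > self._last_port:
                raise RuntimeError("SNAT ports exhausted")
            self._out[key] = self._next_port
            self._back[self._next_port] = key
            self._next_port += 1
        return self.public_ip, self._out[key]

    def translate_reply(self, public_port: int) -> Tuple[str, int]:
        """Map a reply arriving on a public port back to the private address."""
        return self._back[public_port]


nat = SnatTable("203.0.113.20")
first = nat.translate_outbound("10.0.0.5", 40000)
second = nat.translate_outbound("10.0.0.6", 40000)
```

Each private address and port pair consumes one public port in this model, which is why a finite port range can run out when many outbound connections are open at once.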

Alternatively, an internal (or private) load balancer is used to load balance traffic inside a virtual network. With an internal load balancer, you can provide inbound connectivity to your VMs in private network connectivity scenarios, such as accessing a load balancer frontend from an on-premises network in a hybrid scenario.

For more information on the service's individual components, see Azure Load Balancer components.

Azure Load Balancer has three stock-keeping units (SKUs): Basic, Standard, and Gateway. Each SKU targets a specific scenario and differs in scale, features, and pricing. For more information, see Azure Load Balancer SKUs.

Why use Azure Load Balancer

With Azure Load Balancer, you can scale your applications and create highly available services. The service supports both inbound and outbound scenarios, provides low latency and high throughput, and scales up to millions of flows for all TCP and UDP applications.

Core capabilities

Azure Load Balancer provides:

- High availability: Distribute resources within and across availability zones

- Scalability: Handle millions of flows for TCP and UDP applications

- Low latency: Use pass-through load balancing for ultralow latency

- Flexibility: Support for multiple ports, multiple IP addresses, or both

- Health monitoring: Use health probes to ensure traffic is only sent to healthy instances
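
To make the health monitoring idea concrete, the Python sketch below tracks probe results for one backend instance and marks it unhealthy after a number of consecutive failures. The `ProbeState` class and its `unhealthy_threshold` parameter are hypothetical names chosen for this example; the actual probe settings and semantics are described in the health probe documentation.

```python
class ProbeState:
    """Tracks probe results for a single backend instance (illustrative)."""

    def __init__(self, unhealthy_threshold: int = 2) -> None:
        self.unhealthy_threshold = unhealthy_threshold
        self.consecutive_failures = 0
        self.healthy = True

    def record(self, probe_succeeded: bool) -> bool:
        """Record one probe result and return the resulting health status."""
        if probe_succeeded:
            # In this sketch, a single success restores the instance.
            self.consecutive_failures = 0
            self.healthy = True
        else:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.unhealthy_threshold:
                self.healthy = False
        return self.healthy


state = ProbeState(unhealthy_threshold=2)
results = [state.record(ok) for ok in (True, False, False, True)]
```

In this model the instance keeps receiving traffic after a single failed probe and stops receiving new flows only once the threshold is reached.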

Traffic distribution scenarios

- Load balance internal and external traffic to Azure virtual machines

- Configure outbound connectivity for Azure virtual machines

- Load balance TCP and UDP flows on all ports simultaneously using high-availability ports

- Enable port forwarding to access virtual machines by public IP address and port
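
Port forwarding can be thought of as a lookup from a frontend port to a specific backend VM and port. The Python sketch below models that lookup; the `NatRule` type, the `forward` helper, and the port and address values are hypothetical and only illustrate the idea.

```python
from typing import NamedTuple, Optional, Sequence, Tuple


class NatRule(NamedTuple):
    """One port-forwarding rule (hypothetical shape for this sketch)."""
    frontend_port: int
    backend_ip: str
    backend_port: int


def forward(rules: Sequence[NatRule],
            frontend_port: int) -> Optional[Tuple[str, int]]:
    """Return the backend (ip, port) for a frontend port, or None if unmapped."""
    for rule in rules:
        if rule.frontend_port == frontend_port:
            return rule.backend_ip, rule.backend_port
    return None


rules = [
    NatRule(50001, "10.0.0.4", 22),  # SSH to the first VM
    NatRule(50002, "10.0.0.5", 22),  # SSH to the second VM
]
target = forward(rules, 50002)
```

With rules like these, a client connects to the same public IP address on different ports to reach different VMs.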

Advanced features

- IPv6 support: Enable load balancing of IPv6 traffic

- Gateway load balancer integration: Chain Standard Load Balancer and Gateway Load Balancer

- Global load balancing integration: Distribute traffic across multiple Azure regions for global applications

- Admin State: Override health probe behavior for maintenance and operational management
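
The way an administrative state can override health probe results is sketched below in Python. The three state names and the `accepts_new_connections` helper are assumptions made for this example; check the Admin State documentation for the exact values and behavior.

```python
from enum import Enum


class AdminState(Enum):
    """Assumed admin states for a backend instance (illustrative)."""
    NONE = "None"  # no override: follow the health probe
    UP = "Up"      # treat the instance as healthy regardless of the probe
    DOWN = "Down"  # treat the instance as unhealthy regardless of the probe


def accepts_new_connections(admin_state: AdminState,
                            probe_healthy: bool) -> bool:
    """Decide whether an instance should receive new connections."""
    if admin_state is AdminState.UP:
        return True
    if admin_state is AdminState.DOWN:
        return False
    return probe_healthy


draining = accepts_new_connections(AdminState.DOWN, probe_healthy=True)
```

Setting an instance to the down state before maintenance, as in the last line, keeps new connections away from it even though its probe still succeeds.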

Monitoring and insights

- Comprehensive metrics: Use multidimensional metrics through Azure Monitor

- Pre-built dashboards: Access Insights for Azure Load Balancer with useful visualizations

- Diagnostics: Review Standard Load Balancer diagnostics for performance insights

- Health Event Logs: Monitor load balancer health events and status changes for proactive management

- Load Balancer health status: Gain deeper insights into the health of your load balancer through health status monitoring

Security features

Azure Load Balancer implements security through multiple layers:

Zero Trust security model

- Standard Load Balancer is built on the Zero Trust network security model

- Standard Load Balancer is part of your virtual network, which is private and isolated by default

Network access controls

- Standard load balancers and public IP addresses are closed to inbound connections by default

- Network Security Groups (NSGs) must explicitly permit allowed traffic

- Traffic is blocked if no NSG exists on a subnet or NIC
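
The closed-by-default behavior in the list above can be sketched as a small rule evaluator in Python. This is a deliberately simplified model: the `NsgRule` type and the `inbound_allowed` helper are hypothetical, rules match on destination port only, and the built-in default rules of a real NSG are left out.

```python
from typing import NamedTuple, Optional, Sequence


class NsgRule(NamedTuple):
    """Simplified inbound rule (hypothetical shape for this sketch)."""
    priority: int  # lower numbers are evaluated first
    dst_port: int
    allow: bool


def inbound_allowed(nsg: Optional[Sequence[NsgRule]], dst_port: int) -> bool:
    """Return True only if a matching rule explicitly allows the traffic."""
    if nsg is None:
        # No NSG on the subnet or NIC: traffic is blocked.
        return False
    for rule in sorted(nsg, key=lambda r: r.priority):
        if rule.dst_port == dst_port:
            return rule.allow  # the first matching rule decides
    return False  # nothing matched: deny by default


nsg = [NsgRule(200, 443, True), NsgRule(100, 22, False), NsgRule(300, 22, True)]
https_ok = inbound_allowed(nsg, 443)
ssh_ok = inbound_allowed(nsg, 22)
```

Here HTTPS traffic is admitted by an explicit allow rule, while SSH is denied because the deny rule with priority 100 is evaluated before the allow rule with priority 300.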

Data protection

- Azure Load Balancer doesn't store customer data

- All traffic processing happens in real-time without data persistence

Important

Basic Load Balancer is open to the internet by default and will be retired on September 30, 2025. Migrate to Standard Load Balancer for enhanced security.

To learn about NSGs and how to apply them to your scenario, see Network security groups.

Pricing and SLA

For Standard Load Balancer pricing information, see Load Balancer pricing. For service-level agreements (SLAs), see the Microsoft licensing information for online services.

Basic Load Balancer is offered at no charge and has no SLA. It is scheduled for retirement on September 30, 2025.

What's new?

Subscribe to the RSS feed and view the latest Azure Load Balancer updates on the Azure Updates page.