APPLIES TO:

Azure CLI ml extension v2 (current)

Python SDK azure-ai-ml v2 (current)

In this article, you learn to deploy your model to an online endpoint for use in real-time inferencing. You begin by deploying a model on your local machine to debug any errors. Then, you deploy and test the model in Azure, view the deployment logs, and monitor the service-level agreement (SLA). By the end of this article, you have a scalable HTTPS/REST endpoint that you can use for real-time inference.

Online endpoints are endpoints that are used for real-time inferencing. There are two types of online endpoints: managed online endpoints and Kubernetes online endpoints. For more information about the differences, see Managed online endpoints vs. Kubernetes online endpoints.

Managed online endpoints help to deploy your machine learning models in a turnkey manner. Managed online endpoints work with powerful CPU and GPU machines in Azure in a scalable, fully managed way. Managed online endpoints take care of serving, scaling, securing, and monitoring your models. This assistance frees you from the overhead of setting up and managing the underlying infrastructure.

The main example in this article uses managed online endpoints for deployment. To use Kubernetes instead, see the notes in this document that are inline with the managed online endpoint discussion.

Prerequisites

APPLIES TO:  Azure CLI ml extension v2 (current)

The Azure CLI and the ml extension to the Azure CLI, installed and configured. For more information, see Install and set up the CLI (v2).

A Bash shell or a compatible shell, for example, a shell on a Linux system or Windows Subsystem for Linux. The Azure CLI examples in this article assume that you use this type of shell.

An Azure Machine Learning workspace. For instructions to create a workspace, see Set up.

Azure role-based access control (Azure RBAC) is used to grant access to operations in Azure Machine Learning. To perform the steps in this article, your user account must be assigned the Owner or Contributor role for the Azure Machine Learning workspace, or a custom role must allow Microsoft.MachineLearningServices/workspaces/onlineEndpoints/*. If you use Azure Machine Learning studio to create and manage online endpoints or deployments, you need the extra permission Microsoft.Resources/deployments/write from the resource group owner. For more information, see Manage access to Azure Machine Learning workspaces.

(Optional) To deploy locally, you must install Docker Engine on your local computer. We highly recommend this option, which makes it easier to debug issues.
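If you choose the custom-role option, the role definition lists the actions it allows. The following Python sketch builds such a definition as JSON. The helper name build_custom_role, the role name, and the description are hypothetical, and a role this narrow might also need read permissions on the workspace, so treat it as a starting point rather than a verified least-privilege role.

```python
import json

# Action named in the prerequisites: manage online endpoints and their deployments.
ENDPOINT_ACTIONS = ["Microsoft.MachineLearningServices/workspaces/onlineEndpoints/*"]
# Extra permission needed only when you create endpoints or deployments from the studio.
STUDIO_ACTIONS = ["Microsoft.Resources/deployments/write"]


def build_custom_role(name, subscription_id, resource_group, include_studio=False):
    """Return a custom role definition as a dict (hypothetical helper)."""
    actions = list(ENDPOINT_ACTIONS)  # copy so the module-level list is never modified
    if include_studio:
        actions += STUDIO_ACTIONS
    return {
        "Name": name,
        "IsCustom": True,
        "Description": "Manage Azure Machine Learning online endpoints.",
        "Actions": actions,
        "NotActions": [],
        "AssignableScopes": [
            f"/subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        ],
    }


if __name__ == "__main__":
    role = build_custom_role(
        "AML Endpoint Operator", "<subscription ID>", "<resource group>", include_studio=True
    )
    # Save the output as role.json and pass it to:
    #   az role definition create --role-definition @role.json
    print(json.dumps(role, indent=2))
```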

APPLIES TO:  Python SDK azure-ai-ml v2 (current)

An Azure Machine Learning workspace. For steps to create a workspace, see Create the workspace.

The Azure Machine Learning SDK for Python v2. To install the SDK, use the following command:

pip install azure-ai-ml azure-identity

To update an existing installation of the SDK to the latest version, use the following command:

pip install --upgrade azure-ai-ml azure-identity

For more information, see Azure Machine Learning Package client library for Python.

Azure RBAC is used to grant access to operations in Azure Machine Learning. To perform the steps in this article, your user account must be assigned the Owner or Contributor role for the Azure Machine Learning workspace, or a custom role must allow Microsoft.MachineLearningServices/workspaces/onlineEndpoints/*. For more information, see Manage access to Azure Machine Learning workspaces.

(Optional) To deploy locally, you must install Docker Engine on your local computer. We highly recommend this option, which makes it easier to debug issues.

Before you follow the steps in this article, make sure that you have the following prerequisites:

The Azure CLI and the CLI extension for machine learning are used in these steps, but they're not the main focus. They're used more as utilities to pass templates to Azure and check the status of template deployments.

The Azure CLI and the ml extension to the Azure CLI, installed and configured. For more information, see Install and set up the CLI (v2).

A Bash shell or a compatible shell, for example, a shell on a Linux system or Windows Subsystem for Linux. The Azure CLI examples in this article assume that you use this type of shell.

An Azure Machine Learning workspace. For instructions to create a workspace, see Set up.

Azure RBAC is used to grant access to operations in Azure Machine Learning. To perform the steps in this article, your user account must be assigned the Owner or Contributor role for the Azure Machine Learning workspace, or a custom role must allow Microsoft.MachineLearningServices/workspaces/onlineEndpoints/*. For more information, see Manage access to an Azure Machine Learning workspace.

Ensure that you have enough virtual machine (VM) quota allocated for deployment. Azure Machine Learning reserves 20% of your compute resources for performing upgrades on some VM versions. For example, if you request 10 instances in a deployment, you must have quota for 12 instances' worth of cores for that VM version, that is, 12 times the number of cores per VM. If you don't account for the extra compute resources, the deployment fails with an error. Some VM versions are exempt from the extra quota reservation. For more information on quota allocation, see Virtual machine quota allocation for deployment.

Alternatively, you could use quota from the Azure Machine Learning shared quota pool for a limited time. Azure Machine Learning provides a shared quota pool from which users across various regions can access quota to perform testing for a limited time, depending upon availability.

When you use the studio to deploy Llama-2, Phi, Nemotron, Mistral, Dolly, and Deci-DeciLM models from the model catalog to a managed online endpoint, Azure Machine Learning allows you to access its shared quota pool for a short time so that you can perform testing. For more information on the shared quota pool, see Azure Machine Learning shared quota.
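To size a quota request, you can apply the 20% reservation described above. This Python sketch assumes that the reservation is rounded up to whole instances, ceil(1.2 * instances) * cores per VM, and that your VM version isn't one of the exempt ones. The function name is hypothetical.

```python
def required_core_quota(instance_count: int, cores_per_instance: int) -> int:
    """Cores of quota to request, assuming a 20% reservation rounded up to whole instances."""
    if instance_count < 1 or cores_per_instance < 1:
        raise ValueError("instance_count and cores_per_instance must be positive")
    # ceil(1.2 * instance_count), computed with integers to avoid float rounding.
    reserved_instances = (instance_count * 12 + 9) // 10
    return reserved_instances * cores_per_instance


# Standard_DS3_v2 has 4 vCPUs: 10 instances -> 12 instances' worth -> 48 cores.
print(required_core_quota(10, 4))
```

Under this assumption, even a single-instance deployment needs quota for two instances, which is worth keeping in mind for small test deployments.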

Prepare your system

Set environment variables

If you haven't already set the defaults for the Azure CLI, save your default settings. To avoid passing in the values for your subscription, workspace, and resource group multiple times, run this code:

az account set --subscription <subscription ID>

az configure --defaults workspace=<Azure Machine Learning workspace name> group=<resource group>

Clone the examples repository

To follow along with this article, first clone the azureml-examples repository, and then change into the repository's azureml-examples/cli directory:

git clone --depth 1 https://github.com/Azure/azureml-examples

cd azureml-examples/cli

Use --depth 1 to clone only the latest commit to the repository, which reduces the time to complete the operation.

The commands in this article are in the files deploy-local-endpoint.sh and deploy-managed-online-endpoint.sh in the cli directory. The YAML configuration files are in the endpoints/online/managed/sample/ subdirectory.

Note

The YAML configuration files for Kubernetes online endpoints are in the endpoints/online/kubernetes/ subdirectory.

Clone the examples repository

To run the examples in this article, first clone the azureml-examples repository, and then change into the azureml-examples/sdk/python/endpoints/online/managed directory:

git clone --depth 1 https://github.com/Azure/azureml-examples

cd azureml-examples/sdk/python/endpoints/online/managed

Use --depth 1 to clone only the latest commit to the repository, which reduces the time to complete the operation.

The information in this article is based on the online-endpoints-simple-deployment.ipynb notebook. The notebook contains the same content as this article, although the order of the code is slightly different.

Connect to Azure Machine Learning workspace

The workspace is the top-level resource for Azure Machine Learning. It provides a centralized place to work with all the artifacts you create when you use Azure Machine Learning. In this section, you connect to the workspace in which you perform deployment tasks. To follow along, open your online-endpoints-simple-deployment.ipynb notebook.

Import the required libraries:

# import required libraries
from azure.ai.ml import MLClient
from azure.ai.ml.entities import (
    ManagedOnlineEndpoint,
    ManagedOnlineDeployment,
    Model,
    Environment,
    CodeConfiguration,
)
from azure.identity import DefaultAzureCredential

Note

If you use the Kubernetes online endpoint, import the KubernetesOnlineEndpoint and KubernetesOnlineDeployment classes from the azure.ai.ml.entities library.

Configure workspace details and get a handle to the workspace.

To connect to a workspace, you need these identifier parameters: a subscription ID, a resource group name, and a workspace name. You use these details in MLClient from azure.ai.ml to get a handle to the required Azure Machine Learning workspace. This example uses the default Azure authentication.

# enter details of your Azure Machine Learning workspace
subscription_id = "<subscription ID>"
resource_group = "<resource group>"
workspace = "<workspace name>"

# get a handle to the workspace
ml_client = MLClient(
    DefaultAzureCredential(), subscription_id, resource_group, workspace
)

If you have Git installed on your local machine, you can follow the instructions to clone the examples repository. Otherwise, follow the instructions to download files from the examples repository.

Clone the examples repository

To follow along with this article, first clone the azureml-examples repository, and then change into the azureml-examples/cli/endpoints/online/model-1 directory.

git clone --depth 1 https://github.com/Azure/azureml-examples

cd azureml-examples/cli/endpoints/online/model-1

Use --depth 1 to clone only the latest commit to the repository, which reduces the time to complete the operation.

Download files from the examples repository

If you cloned the examples repo, your local machine already has copies of the files for this example, and you can skip to the next section. If you didn't clone the repo, download it to your local machine.

- Go to the examples repository (azureml-examples).

- Select the <> Code button on the page, and then on the Local tab, select Download ZIP.

- Locate the folder /cli/endpoints/online/model-1/model and the file /cli/endpoints/online/model-1/onlinescoring/score.py.

Set environment variables

Set the following environment variables so that you can use them in the examples in this article. Replace the values with your Azure subscription ID, the Azure region where your workspace is located, the resource group that contains the workspace, and the workspace name:

export SUBSCRIPTION_ID="<subscription ID>"

export LOCATION="<your region>"

export RESOURCE_GROUP="<resource group>"

export WORKSPACE="<workspace name>"

A couple of the template examples require you to upload files to Azure Blob Storage for your workspace. The following steps query the workspace and store this information in environment variables used in the examples:

Get an access token:

TOKEN=$(az account get-access-token --query accessToken -o tsv)

Set the REST API version:

API_VERSION="2022-05-01"

Get the storage information:

# Get values for storage account
response=$(curl --location --request GET "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/datastores?api-version=$API_VERSION&isDefault=true" \
    --header "Authorization: Bearer $TOKEN")
AZUREML_DEFAULT_DATASTORE=$(echo $response | jq -r '.value[0].name')
AZUREML_DEFAULT_CONTAINER=$(echo $response | jq -r '.value[0].properties.containerName')
export AZURE_STORAGE_ACCOUNT=$(echo $response | jq -r '.value[0].properties.accountName')
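The jq filters above read three fields from the first datastore in the response, which the isDefault=true filter limits to the default datastore. The following Python sketch does the same parsing on a sample response body and rebuilds the request URL so that you can see how the variables are substituted. The sample body is hypothetical and trimmed to the fields that the filters use; the sketch doesn't send any request.

```python
import json


def datastore_url(subscription_id, resource_group, workspace, api_version="2022-05-01"):
    """Build the same management URL that the curl command queries."""
    return (
        "https://management.chinacloudapi.cn"
        f"/subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        "/providers/Microsoft.MachineLearningServices"
        f"/workspaces/{workspace}/datastores"
        f"?api-version={api_version}&isDefault=true"
    )


def default_storage_details(response_text):
    """Mirror the jq filters .value[0].name and .value[0].properties.{containerName,accountName}."""
    first = json.loads(response_text)["value"][0]
    return {
        "AZUREML_DEFAULT_DATASTORE": first["name"],
        "AZUREML_DEFAULT_CONTAINER": first["properties"]["containerName"],
        "AZURE_STORAGE_ACCOUNT": first["properties"]["accountName"],
    }


# Hypothetical, trimmed response body used only for illustration.
sample = (
    '{"value": [{"name": "workspaceblobstore", "properties": '
    '{"containerName": "azureml-blobstore-123", "accountName": "mystorageacct"}}]}'
)
print(datastore_url("<subscription ID>", "<resource group>", "<workspace name>"))
print(default_storage_details(sample))
```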

Clone the examples repository

To follow along with this article, first clone the azureml-examples repository, and then change into the azureml-examples directory:

git clone --depth 1 https://github.com/Azure/azureml-examples

cd azureml-examples

Use --depth 1 to clone only the latest commit to the repository, which reduces the time to complete the operation.

Define the endpoint

To define an online endpoint, specify the endpoint name and authentication mode. For more information on managed online endpoints, see Online endpoints.

Set an endpoint name

To set your endpoint name, run the following command. Replace <YOUR_ENDPOINT_NAME> with a name that's unique in the Azure region. For more information on the naming rules, see Endpoint limits.

export ENDPOINT_NAME="<YOUR_ENDPOINT_NAME>"

The following snippet shows the endpoints/online/managed/sample/endpoint.yml file:

$schema: https://azuremlschemas.azureedge.net/latest/managedOnlineEndpoint.schema.json
name: my-endpoint
auth_mode: key

The reference for the endpoint YAML format is described in the following table. To learn how to specify these attributes, see the online endpoint YAML reference. For information about limits related to managed endpoints, see Azure Machine Learning online endpoints and batch endpoints.

| Key | Description |
| --- | --- |
| $schema | (Optional) The YAML schema. To see all available options in the YAML file, you can view the schema in the preceding code snippet in a browser. |
| name | The name of the endpoint. |
| auth_mode | Use key for key-based authentication. Use aml_token for Azure Machine Learning token-based authentication. Use aad_token for Microsoft Entra token-based authentication; for production workloads on managed online endpoints, this is the most secure option. For more information on authenticating, see Authenticate clients for online endpoints. |
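Whichever auth_mode you choose, the client passes the key or token in the Authorization header of each scoring request. The following Python sketch builds, but doesn't send, such a request by using only the standard library. It assumes the bearer format that the score_endpoint step of the ARM template script in this article uses, an assumed {"data": [...]} request body, and a placeholder scoring URI; the helper name is hypothetical.

```python
import json
import urllib.request


def build_scoring_request(scoring_uri, credential, payload):
    """Build (but don't send) a POST request to an online endpoint's scoring URI.

    Assumes the endpoint key or token is sent as a bearer credential.
    """
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        scoring_uri,
        data=body,
        headers={
            "Authorization": f"Bearer {credential}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_scoring_request(
    "https://example.invalid/score",  # hypothetical placeholder for your scoring URI
    "<key-or-token>",
    {"data": [[1, 2, 3, 4, 5, 6, 7, 8, 9, 10]]},
)
print(req.get_method(), req.full_url)
# To call a real endpoint: urllib.request.urlopen(req).read()
```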

First define the name of the online endpoint, and then configure the endpoint.

Replace <YOUR_ENDPOINT_NAME> with a name that's unique in the Azure region, or use the example method to define a random name. Be sure to delete the method that you don't use. For more information on the naming rules, see Endpoint limits.

# method 1: define an endpoint name
endpoint_name = "<YOUR_ENDPOINT_NAME>"

# method 2: example way to define a random name
import datetime

endpoint_name = "endpt-" + datetime.datetime.now().strftime("%m%d%H%M%f")

# create an online endpoint
endpoint = ManagedOnlineEndpoint(
    name=endpoint_name,
    description="this is a sample endpoint",
    auth_mode="key",
)

The previous code uses key for key-based authentication. To use Azure Machine Learning token-based authentication, use aml_token. To use Microsoft Entra token-based authentication, use aad_token. For more information on authenticating, see Authenticate clients for online endpoints.
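The method 2 name is built from the current time. As a worked example, the following Python sketch fixes the timestamp so that the result is predictable. The format omits seconds, so %f contributes only the microseconds within the current second, and the name is likely, but not guaranteed, to be unique. The wrapper function is hypothetical.

```python
import datetime


def random_endpoint_name(now=None):
    """Build a name the same way as method 2: 'endpt-' plus a timestamp."""
    if now is None:
        now = datetime.datetime.now()
    # %m month, %d day, %H hour, %M minute, %f microseconds (6 digits); no seconds.
    return "endpt-" + now.strftime("%m%d%H%M%f")


fixed = datetime.datetime(2024, 3, 5, 14, 7, 42, 123456)
print(random_endpoint_name(fixed))  # endpt-03051407123456
```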

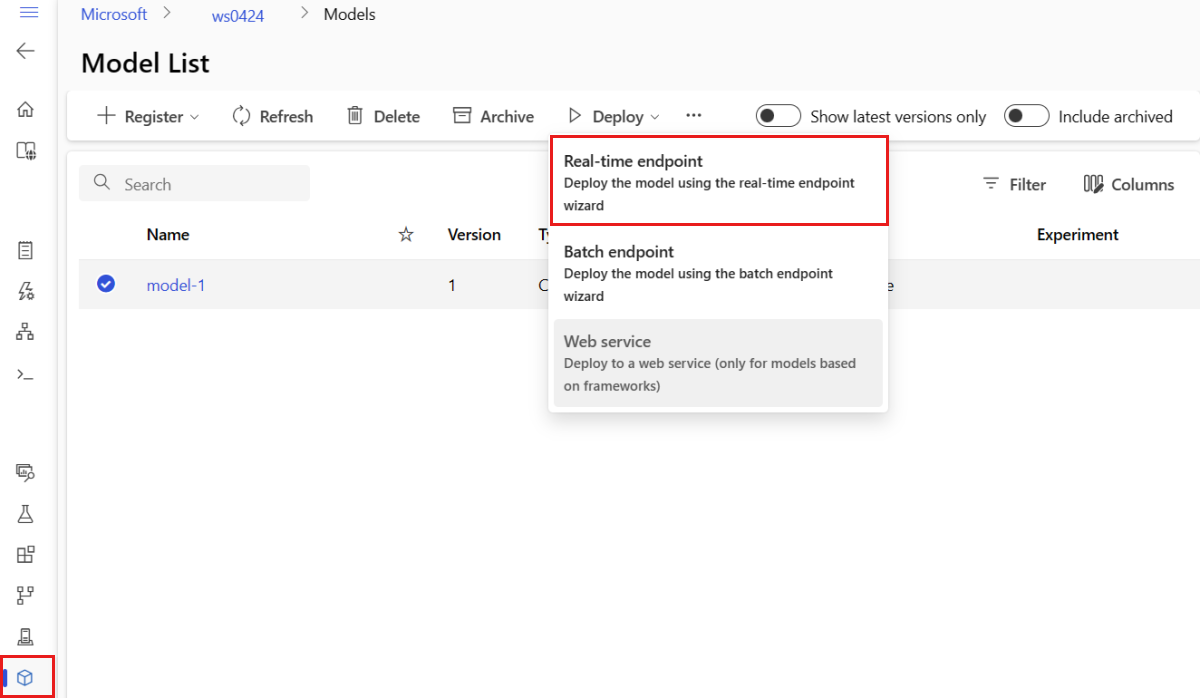

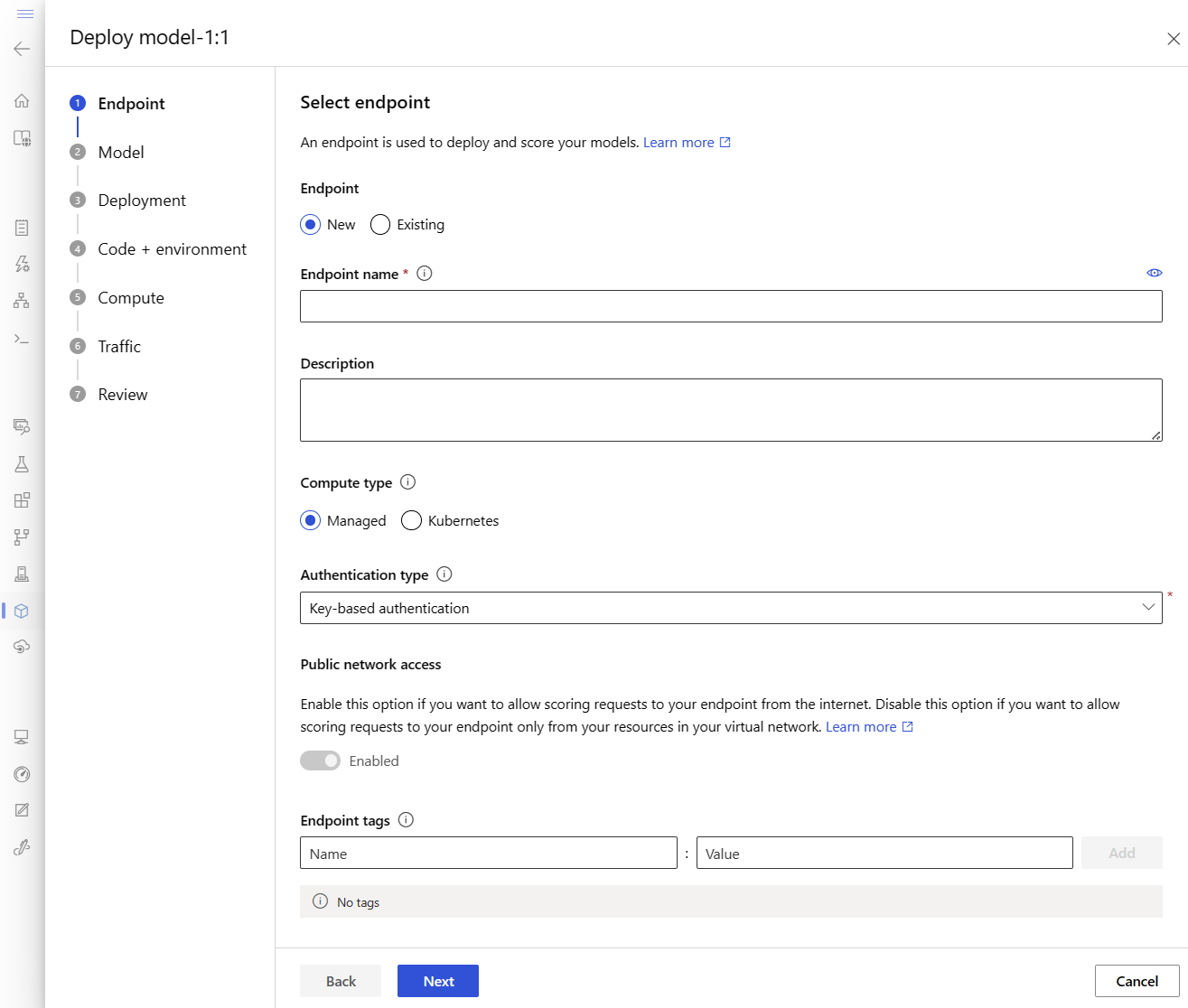

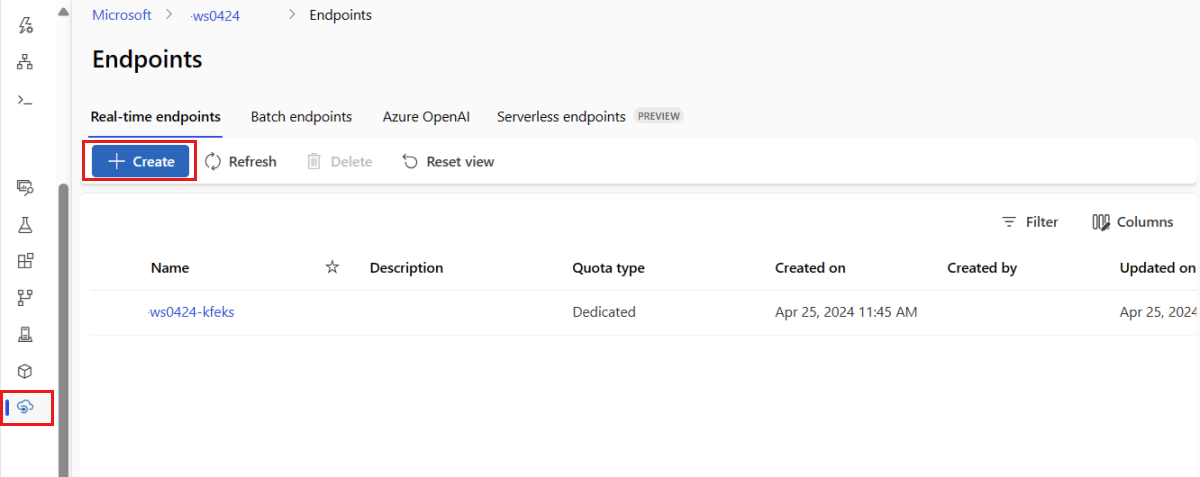

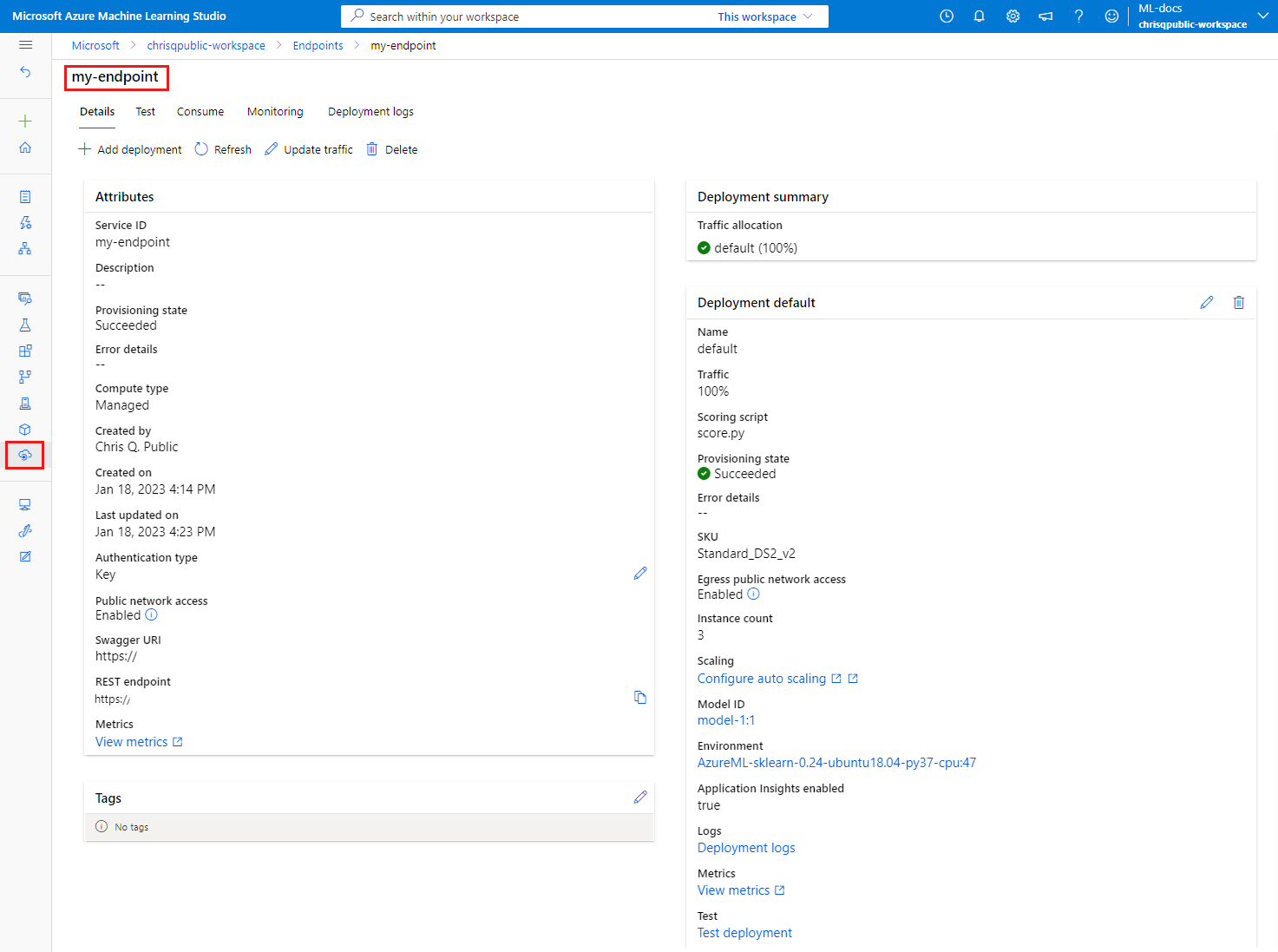

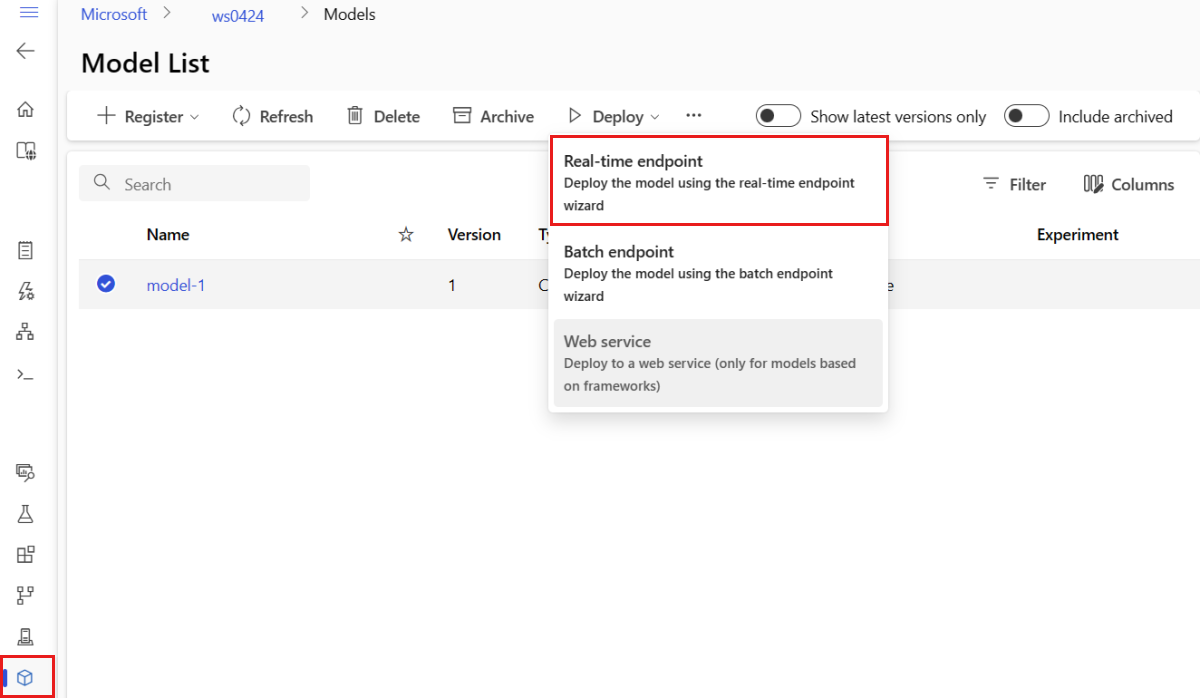

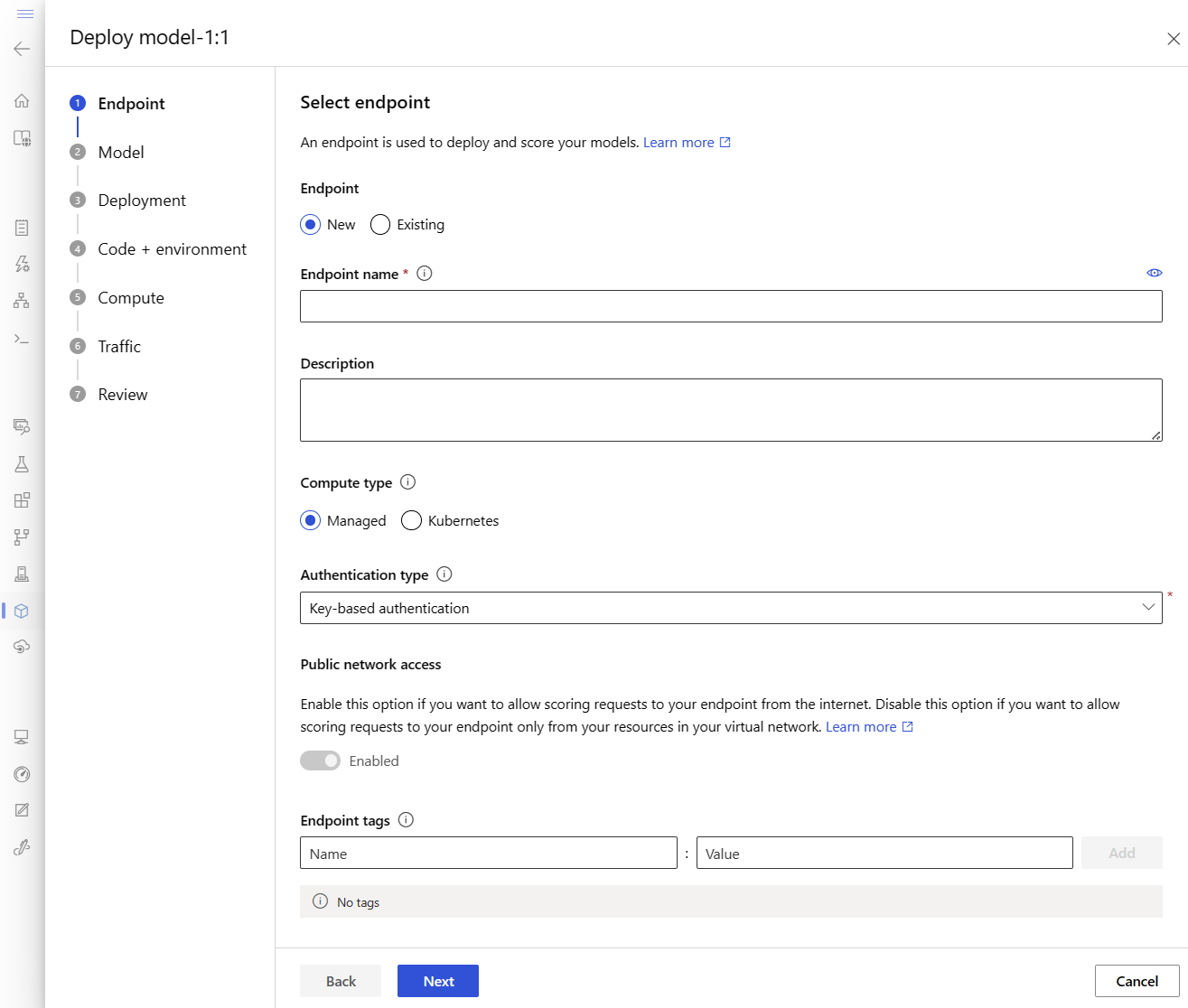

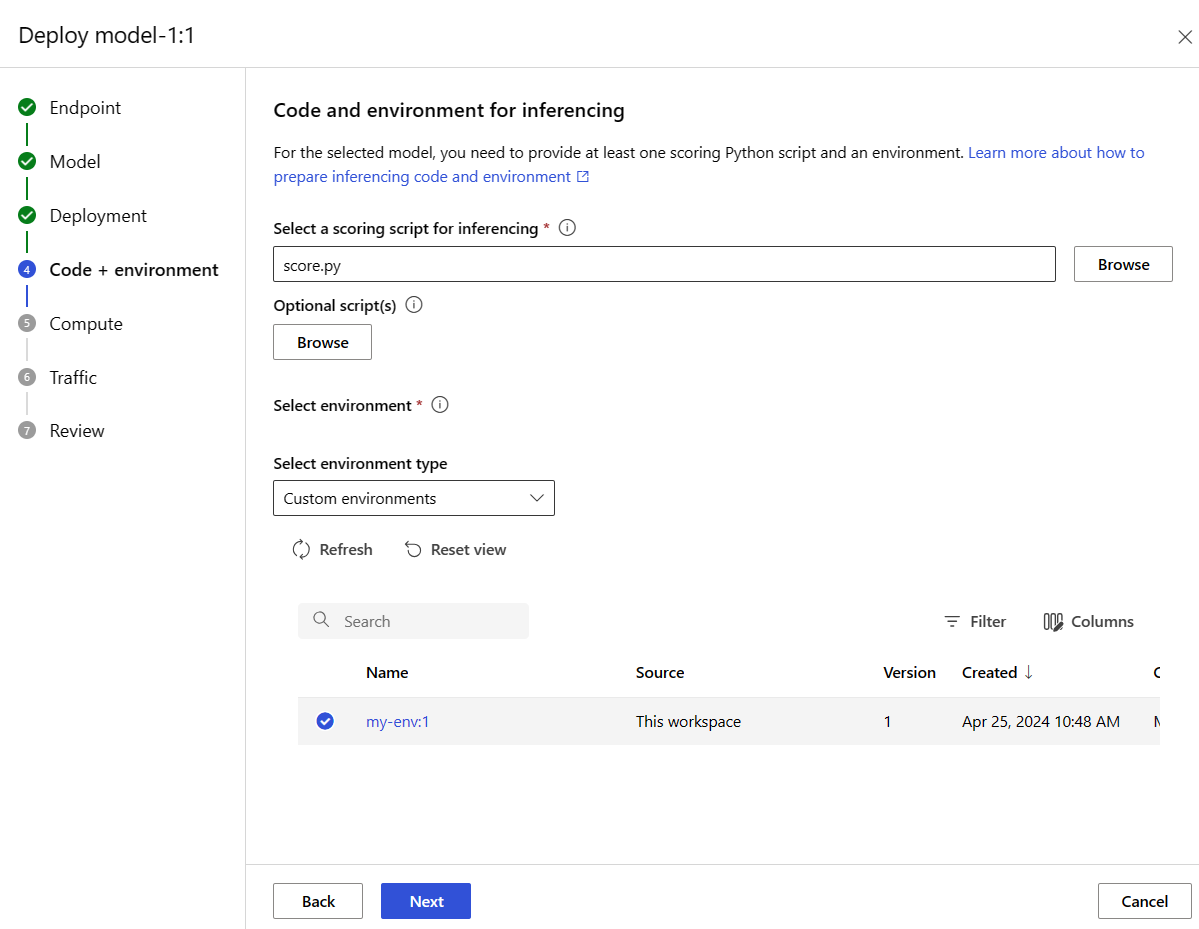

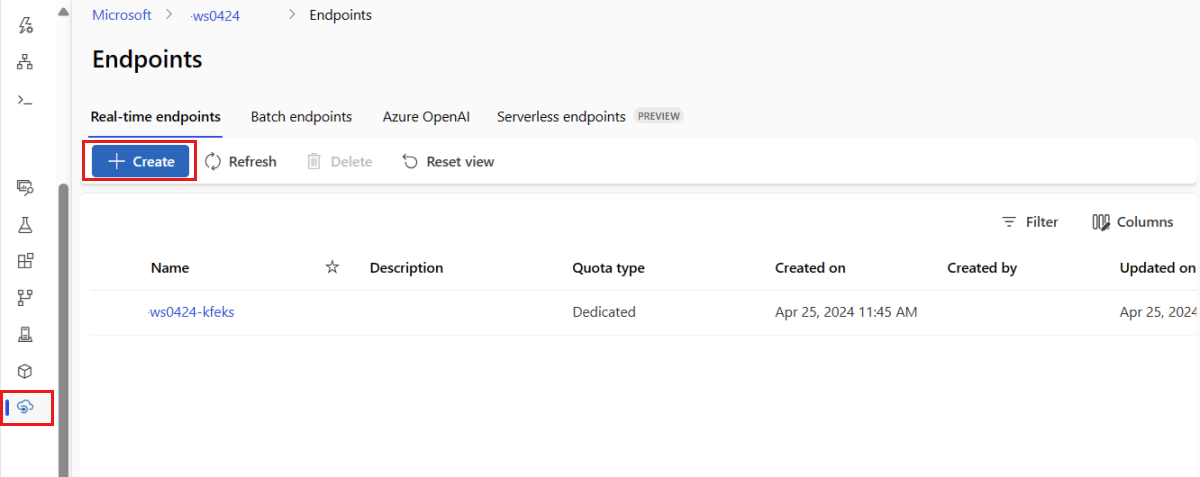

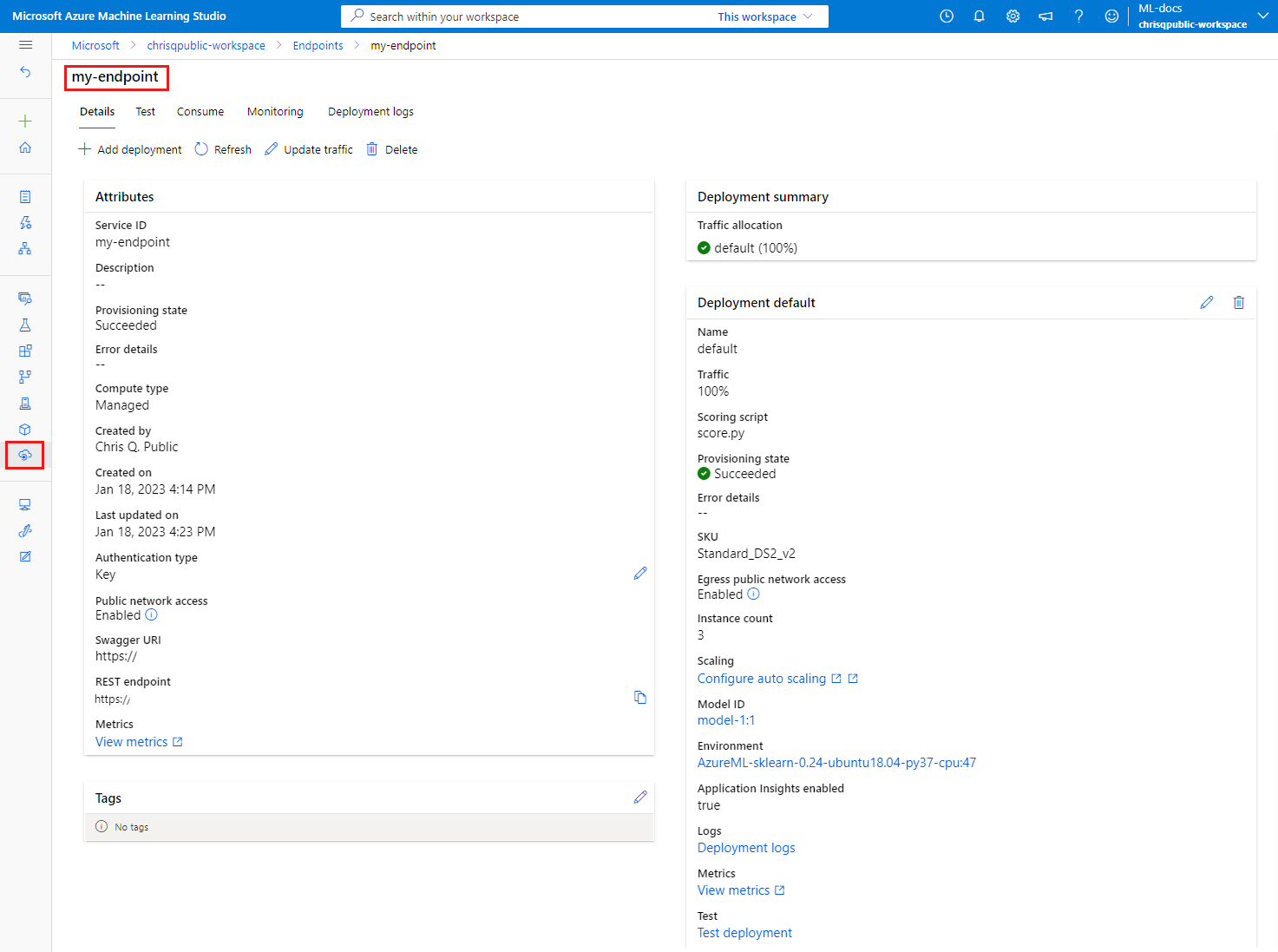

When you deploy to Azure from the studio, you create an endpoint and a deployment to add to it. At that time, you're prompted to provide names for the endpoint and deployment.

Set an endpoint name

To set your endpoint name, run the following command. Replace <YOUR_ENDPOINT_NAME> with a name that's unique in the Azure region. For more information on the naming rules, see Endpoint limits.

export ENDPOINT_NAME="<YOUR_ENDPOINT_NAME>"

To define the endpoint and deployment, this article uses the Azure Resource Manager templates (ARM templates) online-endpoint.json and online-endpoint-deployment.json. To use the templates for defining an online endpoint and deployment, see the Deploy to Azure section.

Define the deployment

A deployment is a set of resources required for hosting the model that does the actual inferencing. For this example, you deploy a scikit-learn model that does regression and use a scoring script score.py to run the model on a specific input request.

To learn about the key attributes of a deployment, see Online deployments.

Your deployment configuration uses the location of the model that you want to deploy.

The following snippet shows the endpoints/online/managed/sample/blue-deployment.yml file, with all the required inputs to configure a deployment:

$schema: https://azuremlschemas.azureedge.net/latest/managedOnlineDeployment.schema.json
name: blue
endpoint_name: my-endpoint
model:
  path: ../../model-1/model/
code_configuration:
  code: ../../model-1/onlinescoring/
  scoring_script: score.py
environment:
  conda_file: ../../model-1/environment/conda.yaml
  image: mcr.microsoft.com/azureml/openmpi4.1.0-ubuntu20.04:latest
instance_type: Standard_DS3_v2
instance_count: 1

The blue-deployment.yml file specifies the following deployment attributes:

- model: Specifies the model properties inline by using the path parameter (where to upload files from). The CLI automatically uploads the model files and registers the model with an autogenerated name.

- environment: Uses inline definitions that include where to upload files from. The CLI automatically uploads the conda.yaml file and registers the environment. Later, to build the environment, the deployment uses the image parameter for the base image. In this example, it's mcr.microsoft.com/azureml/openmpi4.1.0-ubuntu20.04:latest. The conda_file dependencies are installed on top of the base image.

- code_configuration: Uploads the local files, such as the Python source for the scoring model, from the development environment during deployment.
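The relative paths in blue-deployment.yml point outside the sample folder. Assuming that the CLI resolves them against the folder that contains the YAML file, the following Python sketch shows which folders under the cli directory they refer to. The results match the model and onlinescoring folders of the model-1 example.

```python
import posixpath

# Folder that contains blue-deployment.yml, relative to the cli directory.
YAML_DIR = "endpoints/online/managed/sample"


def resolve(relative_path, base_dir=YAML_DIR):
    """Resolve a path from the YAML file against the YAML file's own folder."""
    return posixpath.normpath(posixpath.join(base_dir, relative_path))


paths = {
    "model.path": "../../model-1/model/",
    "code_configuration.code": "../../model-1/onlinescoring/",
    "environment.conda_file": "../../model-1/environment/conda.yaml",
}
for key, rel in paths.items():
    print(f"{key} -> cli/{resolve(rel)}")
```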

For more information about the YAML schema, see the online endpoint YAML reference.

Note

To use Kubernetes endpoints instead of managed online endpoints as a compute target:

- Create and attach your Kubernetes cluster as a compute target to your Azure Machine Learning workspace by using Azure Machine Learning studio.

- Use the endpoint YAML to target Kubernetes instead of the managed endpoint YAML. You need to edit the YAML to change the value of compute to the name of your registered compute target. You can use this deployment.yaml that has other properties that apply to a Kubernetes deployment.

All the commands that are used in this article for managed online endpoints also apply to Kubernetes endpoints, except for the following capabilities that don't apply to Kubernetes endpoints:

Use the following code to configure a deployment:

model = Model(path="../model-1/model/sklearn_regression_model.pkl")
env = Environment(
    conda_file="../model-1/environment/conda.yaml",
    image="mcr.microsoft.com/azureml/openmpi4.1.0-ubuntu22.04:latest",
)

blue_deployment = ManagedOnlineDeployment(
    name="blue",
    endpoint_name=endpoint_name,
    model=model,
    environment=env,
    code_configuration=CodeConfiguration(
        code="../model-1/onlinescoring", scoring_script="score.py"
    ),
    instance_type="Standard_DS3_v2",
    instance_count=1,
)

- Model: Specifies the model properties inline by using the path parameter (where to upload files from). The SDK automatically uploads the model files and registers the model with an autogenerated name.

- Environment: Uses inline definitions that include where to upload files from. The SDK automatically uploads the conda.yaml file and registers the environment. Later, to build the environment, the deployment uses the image parameter for the base image. In this example, it's mcr.microsoft.com/azureml/openmpi4.1.0-ubuntu22.04:latest. The conda_file dependencies are installed on top of the base image.

- CodeConfiguration: Uploads the local files, such as the Python source for the scoring model, from the development environment during deployment.

For more information about online deployment definition, see OnlineDeployment Class.

When you deploy to Azure, you create an endpoint and a deployment to add to it. At that time, you're prompted to provide names for the endpoint and deployment.

Understand the scoring script

The format of the scoring script for online endpoints is the same format that's used in the preceding version of the CLI and in the Python SDK.

The scoring script specified in code_configuration.scoring_script must have an init() function and a run() function.

This article uses the score.py file.
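The following Python sketch shows the shape of that contract: init() runs once when the deployment starts, and run() runs for each request. It isn't the score.py file from the repository. It assumes a {"data": [...]} JSON request body and replaces the real scikit-learn model with a hypothetical stand-in that sums each row, so it runs without any Azure or machine learning dependencies. AZUREML_MODEL_DIR is the environment variable that points to the model files in the deployment container.

```python
import json
import os

model = None


def _sum_rows(rows):
    """Hypothetical stand-in for the real model: one prediction per input row."""
    return [sum(row) for row in rows]


def init():
    """Called once when the deployment starts: load the model into a global."""
    global model
    # A real script would load the scikit-learn model from this folder
    # instead of using the _sum_rows stand-in.
    model_dir = os.getenv("AZUREML_MODEL_DIR", ".")
    print(f"Loading model from {model_dir}")
    model = _sum_rows


def run(raw_data):
    """Called for each request with the raw JSON request body."""
    data = json.loads(raw_data)["data"]
    # Return a JSON-serializable value (a plain list here).
    return model(data)


if __name__ == "__main__":
    init()
    print(run('{"data": [[1, 2, 3], [4, 5, 6]]}'))  # [6, 15]
```

Loading the model in init() rather than in run() means the cost of deserializing it is paid once per container instead of once per request.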

When you use a template for deployment, you must first upload the scoring file to Blob Storage, and then register it:

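- The following script uses the Azure CLI command az storage blob upload-batch, in its upload_code section, to upload the scoring file. The script also includes the other steps of the template-based workflow, from creating the endpoint to scoring and deleting it: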

set -x

#<get_access_token>
TOKEN=$(az account get-access-token --query accessToken -o tsv)
#</get_access_token>

# <create_variables>
SUBSCRIPTION_ID=$(az account show --query id -o tsv)
LOCATION=$(az ml workspace show --query location -o tsv)
RESOURCE_GROUP=$(az group show --query name -o tsv)
WORKSPACE=$(az configure -l --query "[?name=='workspace'].value" -o tsv)
#</create_variables>

# <set_endpoint_name>
export ENDPOINT_NAME=endpoint-`echo $RANDOM`
# </set_endpoint_name>

#<api_version>
API_VERSION="2022-05-01"
#</api_version>

echo -e "Using:\nSUBSCRIPTION_ID=$SUBSCRIPTION_ID\nLOCATION=$LOCATION\nRESOURCE_GROUP=$RESOURCE_GROUP\nWORKSPACE=$WORKSPACE"

# define how to wait
wait_for_completion () {
    operation_id=$1
    status="unknown"

    if [[ $operation_id == "" || -z $operation_id || $operation_id == "null" ]]; then
        echo "operation id cannot be empty"
        exit 1
    fi

    while [[ $status != "Succeeded" && $status != "Failed" ]]
    do
        echo "Getting operation status from: $operation_id"
        operation_result=$(curl --location --request GET $operation_id --header "Authorization: Bearer $TOKEN")
        # TODO error handling here
        status=$(echo $operation_result | jq -r '.status')
        echo "Current operation status: $status"
        sleep 5
    done

    if [[ $status == "Failed" ]]
    then
        error=$(echo $operation_result | jq -r '.error')
        echo "Error: $error"
    fi
}

# <get_storage_details>
# Get values for storage account
response=$(curl --location --request GET "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/datastores?api-version=$API_VERSION&isDefault=true" \
    --header "Authorization: Bearer $TOKEN")
AZUREML_DEFAULT_DATASTORE=$(echo $response | jq -r '.value[0].name')
AZUREML_DEFAULT_CONTAINER=$(echo $response | jq -r '.value[0].properties.containerName')
export AZURE_STORAGE_ACCOUNT=$(echo $response | jq -r '.value[0].properties.accountName')
# </get_storage_details>

# <upload_code>
az storage blob upload-batch -d $AZUREML_DEFAULT_CONTAINER/score -s cli/endpoints/online/model-1/onlinescoring --account-name $AZURE_STORAGE_ACCOUNT
# </upload_code>

# <create_code>
az deployment group create -g $RESOURCE_GROUP \
    --template-file arm-templates/code-version.json \
    --parameters \
        workspaceName=$WORKSPACE \
        codeAssetName="score-sklearn" \
        codeUri="https://$AZURE_STORAGE_ACCOUNT.blob.core.chinacloudapi.cn/$AZUREML_DEFAULT_CONTAINER/score"
# </create_code>

# <upload_model>
az storage blob upload-batch -d $AZUREML_DEFAULT_CONTAINER/model -s cli/endpoints/online/model-1/model --account-name $AZURE_STORAGE_ACCOUNT
# </upload_model>

# <create_model>
az deployment group create -g $RESOURCE_GROUP \
    --template-file arm-templates/model-version.json \
    --parameters \
        workspaceName=$WORKSPACE \
        modelAssetName="sklearn" \
        modelUri="azureml://subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/workspaces/$WORKSPACE/datastores/$AZUREML_DEFAULT_DATASTORE/paths/model/sklearn_regression_model.pkl"
# </create_model>

# <read_condafile>
CONDA_FILE=$(cat cli/endpoints/online/model-1/environment/conda.yaml)
# </read_condafile>

# <create_environment>
ENV_VERSION=$RANDOM
az deployment group create -g $RESOURCE_GROUP \
    --template-file arm-templates/environment-version.json \
    --parameters \
        workspaceName=$WORKSPACE \
        environmentAssetName=sklearn-env \
        environmentAssetVersion=$ENV_VERSION \
        dockerImage=mcr.microsoft.com/azureml/openmpi3.1.2-ubuntu18.04:20210727.v1 \
        condaFile="$CONDA_FILE"
# </create_environment>

# <create_endpoint>
az deployment group create -g $RESOURCE_GROUP \
    --template-file arm-templates/online-endpoint.json \
    --parameters \
        workspaceName=$WORKSPACE \
        onlineEndpointName=$ENDPOINT_NAME \
        identityType=SystemAssigned \
        authMode=AMLToken \
        location=$LOCATION
# </create_endpoint>

# <get_endpoint>
response=$(curl --location --request GET "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/onlineEndpoints/$ENDPOINT_NAME?api-version=$API_VERSION" \
    --header "Content-Type: application/json" \
    --header "Authorization: Bearer $TOKEN")
operation_id=$(echo $response | jq -r '.properties.properties.AzureAsyncOperationUri')
wait_for_completion $operation_id
# </get_endpoint>

# <create_deployment>
resourceScope="/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices"
az deployment group create -g $RESOURCE_GROUP \
    --template-file arm-templates/online-endpoint-deployment.json \
    --parameters \
        workspaceName=$WORKSPACE \
        location=$LOCATION \
        onlineEndpointName=$ENDPOINT_NAME \
        onlineDeploymentName=blue \
        codeId="$resourceScope/workspaces/$WORKSPACE/codes/score-sklearn/versions/1" \
        scoringScript=score.py \
        environmentId="$resourceScope/workspaces/$WORKSPACE/environments/sklearn-env/versions/$ENV_VERSION" \
        model="$resourceScope/workspaces/$WORKSPACE/models/sklearn/versions/1" \
        endpointComputeType=Managed \
        skuName=Standard_F2s_v2 \
        skuCapacity=1
# </create_deployment>

# <get_deployment>
response=$(curl --location --request GET "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/onlineEndpoints/$ENDPOINT_NAME/deployments/blue?api-version=$API_VERSION" \
    --header "Content-Type: application/json" \
    --header "Authorization: Bearer $TOKEN")
operation_id=$(echo $response | jq -r '.properties.properties.AzureAsyncOperationUri')
wait_for_completion $operation_id
scoringUri=$(echo $response | jq -r '.properties.scoringUri')
# </get_deployment>

# <get_endpoint_access_token>
response=$(curl -H "Content-Length: 0" --location --request POST "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/onlineEndpoints/$ENDPOINT_NAME/token?api-version=$API_VERSION" \
    --header "Authorization: Bearer $TOKEN")
accessToken=$(echo $response | jq -r '.accessToken')
# </get_endpoint_access_token>

# <score_endpoint>
# --data-binary reads the request body from the file; --data-raw would send the literal "@..." string
curl --location --request POST $scoringUri \
    --header "Authorization: Bearer $accessToken" \
    --header "Content-Type: application/json" \
    --data-binary @cli/endpoints/online/model-1/sample-request.json
# </score_endpoint>

# <get_deployment_logs>
curl --location --request POST "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/onlineEndpoints/$ENDPOINT_NAME/deployments/blue/getLogs?api-version=$API_VERSION" \
    --header "Authorization: Bearer $TOKEN" \
    --header "Content-Type: application/json" \
    --data-raw "{ \"tail\": 100 }"
# </get_deployment_logs>

# <delete_endpoint>
curl --location --request DELETE "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/onlineEndpoints/$ENDPOINT_NAME?api-version=$API_VERSION" \
    --header "Content-Type: application/json" \
    --header "Authorization: Bearer $TOKEN" || true
# </delete_endpoint>

- The following code, which is the create_code section of the same script, uses a template to register the code:

# <create_code>
az deployment group create -g $RESOURCE_GROUP \
    --template-file arm-templates/code-version.json \
    --parameters \
        workspaceName=$WORKSPACE \
        codeAssetName="score-sklearn" \
        codeUri="https://$AZURE_STORAGE_ACCOUNT.blob.core.chinacloudapi.cn/$AZUREML_DEFAULT_CONTAINER/score"
# </create_code>

# </get_endpoint_access_token>

# <score_endpoint>

curl --location --request POST $scoringUri \

--header "Authorization: Bearer $accessToken" \

--header "Content-Type: application/json" \

--data-raw @cli/endpoints/online/model-1/sample-request.json

# </score_endpoint>

# <get_deployment_logs>

curl --location --request POST "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/onlineEndpoints/$ENDPOINT_NAME/deployments/blue/getLogs?api-version=$API_VERSION" \

--header "Authorization: Bearer $TOKEN" \

--header "Content-Type: application/json" \

--data-raw "{ \"tail\": 100 }"

# </get_deployment_logs>

# <delete_endpoint>

curl --location --request DELETE "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/onlineEndpoints/$ENDPOINT_NAME?api-version=$API_VERSION" \

--header "Content-Type: application/json" \

--header "Authorization: Bearer $TOKEN" || true

# </delete_endpoint>
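The deployment step passes full Azure Resource Manager IDs (`codeId`, `environmentId`, `model`) built from `resourceScope`, while the model registration step passes an `azureml://` datastore URI as `modelUri`. The following Python sketch reproduces only those two string formats, exactly as the script builds them, so you can inspect them before you run a template. The helper names and the placeholder subscription, resource group, workspace, and datastore values are hypothetical; this isn't an Azure Machine Learning SDK API.

```python
def resource_scope(subscription_id: str, resource_group: str) -> str:
    # Same prefix that the script stores in $resourceScope.
    return (
        f"/subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        "/providers/Microsoft.MachineLearningServices"
    )


def asset_version_id(scope: str, workspace: str, kind: str, name: str, version: str) -> str:
    # kind is "codes", "environments", or "models", matching codeId/environmentId/model.
    return f"{scope}/workspaces/{workspace}/{kind}/{name}/versions/{version}"


def datastore_uri(subscription_id: str, resource_group: str, workspace: str,
                  datastore: str, path: str) -> str:
    # Same azureml:// form that the script passes as modelUri.
    return (
        f"azureml://subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        f"/workspaces/{workspace}/datastores/{datastore}/paths/{path}"
    )


if __name__ == "__main__":
    sub = "00000000-0000-0000-0000-000000000000"  # placeholder
    scope = resource_scope(sub, "my-rg")
    print(asset_version_id(scope, "my-ws", "models", "sklearn", "1"))
    print(datastore_uri(sub, "my-rg", "my-ws", "my-datastore",
                        "model/sklearn_regression_model.pkl"))
```

Note that the resource IDs start with `/subscriptions/...` and include the provider namespace, whereas the `azureml://` URI omits the provider and addresses a path inside a datastore.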

This example uses the score.py file from the repo that you cloned or downloaded earlier:

```python
import os
import logging
import json
import numpy
import joblib


def init():
    """
    This function is called when the container is initialized/started, typically after create/update of the deployment.
    You can write the logic here to perform init operations like caching the model in memory
    """
    global model
    # AZUREML_MODEL_DIR is an environment variable created during deployment.
    # It is the path to the model folder (./azureml-models/$MODEL_NAME/$VERSION)
    # Please provide your model's folder name if there is one
    model_path = os.path.join(
        os.getenv("AZUREML_MODEL_DIR"), "model/sklearn_regression_model.pkl"
    )
    # deserialize the model file back into a sklearn model
    model = joblib.load(model_path)
    logging.info("Init complete")


def run(raw_data):
    """
    This function is called for every invocation of the endpoint to perform the actual scoring/prediction.
    In the example we extract the data from the json input and call the scikit-learn model's predict()
    method and return the result back
    """
    logging.info("model 1: request received")
    data = json.loads(raw_data)["data"]
    data = numpy.array(data)
    result = model.predict(data)
    logging.info("Request processed")
    return result.tolist()
```

The init() function is called when the container is initialized or started. Initialization typically occurs shortly after the deployment is created or updated. The init function is the place to write logic for global initialization operations like caching the model in memory (as shown in this score.py file).

The run() function is called every time the endpoint is invoked. It does the actual scoring and prediction. In this score.py file, the run() function extracts data from a JSON input, calls the scikit-learn model's predict() method, and then returns the prediction result.

Deploy and debug locally by using a local endpoint

We highly recommend that you test-run your endpoint locally to validate and debug your code and configuration before you deploy to Azure. The Azure CLI and Python SDK support local endpoints and deployments, but Azure Machine Learning studio and ARM templates don't.

To deploy locally, Docker Engine must be installed and running. Docker Engine typically starts when the computer starts. If it doesn't, you can troubleshoot Docker Engine.

You can use the Azure Machine Learning inference HTTP server Python package to debug your scoring script locally without Docker Engine. Debugging with the inference server lets you check the scoring script before you deploy to a local endpoint, so the deployment container configuration doesn't affect the results.

For more information on debugging online endpoints locally before you deploy to Azure, see Online endpoint debugging.

Deploy the model locally

First, create an endpoint. For a local endpoint, this step is optional: you can create the deployment directly (next step), which in turn creates the required metadata. Deploying models locally is useful for development and testing purposes.

az ml online-endpoint create --local -n $ENDPOINT_NAME -f endpoints/online/managed/sample/endpoint.yml

ml_client.online_endpoints.begin_create_or_update(endpoint, local=True)

The studio doesn't support local endpoints. For steps to test the endpoint locally, see the Azure CLI or Python tabs.

The template doesn't support local endpoints. For steps to test the endpoint locally, see the Azure CLI or Python tabs.

Now, create a deployment named blue under the endpoint.

az ml online-deployment create --local -n blue --endpoint $ENDPOINT_NAME -f endpoints/online/managed/sample/blue-deployment.yml

The --local flag directs the CLI to deploy the endpoint in the Docker environment.

```python
ml_client.online_deployments.begin_create_or_update(
    deployment=blue_deployment, local=True
)
```

The local=True flag directs the SDK to deploy the endpoint in the Docker environment.


Verify that the local deployment succeeded

Check the deployment status to see whether the model was deployed without error:

az ml online-endpoint show -n $ENDPOINT_NAME --local

The output should look similar to the following JSON, with provisioning_state set to Succeeded.

```json
{
  "auth_mode": "key",
  "location": "local",
  "name": "docs-endpoint",
  "properties": {},
  "provisioning_state": "Succeeded",
  "scoring_uri": "http://localhost:49158/score",
  "tags": {},
  "traffic": {}
}
```

ml_client.online_endpoints.get(name=endpoint_name, local=True)

The method returns a ManagedOnlineEndpoint entity whose provisioning_state is Succeeded.

ManagedOnlineEndpoint({'public_network_access': None, 'provisioning_state': 'Succeeded', 'scoring_uri': 'http://localhost:49158/score', 'swagger_uri': None, 'name': 'endpt-10061534497697', 'description': 'this is a sample endpoint', 'tags': {}, 'properties': {}, 'id': None, 'Resource__source_path': None, 'base_path': '/path/to/your/working/directory', 'creation_context': None, 'serialize': <msrest.serialization.Serializer object at 0x7ffb781bccd0>, 'auth_mode': 'key', 'location': 'local', 'identity': None, 'traffic': {}, 'mirror_traffic': {}, 'kind': None})


The following table contains the possible values for provisioning_state:

| Value | Description |
| --- | --- |
| Creating | The resource is being created. |
| Updating | The resource is being updated. |
| Deleting | The resource is being deleted. |
| Succeeded | The create or update operation succeeded. |
| Failed | The create, update, or delete operation failed. |

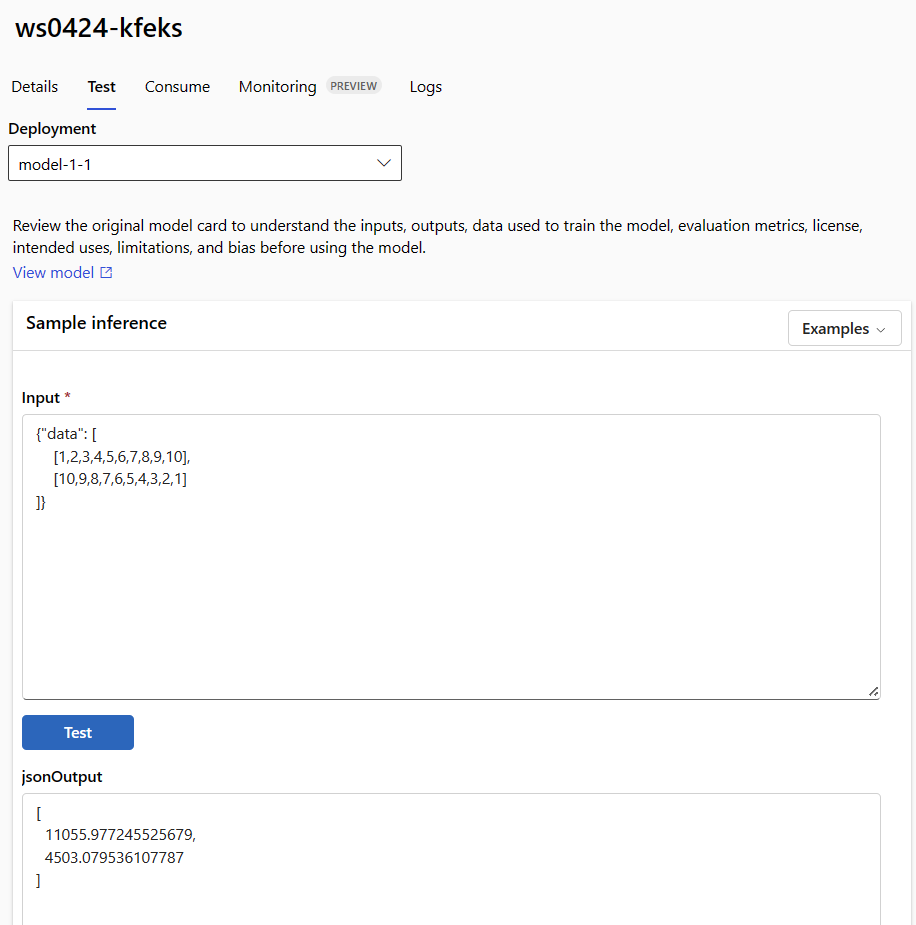

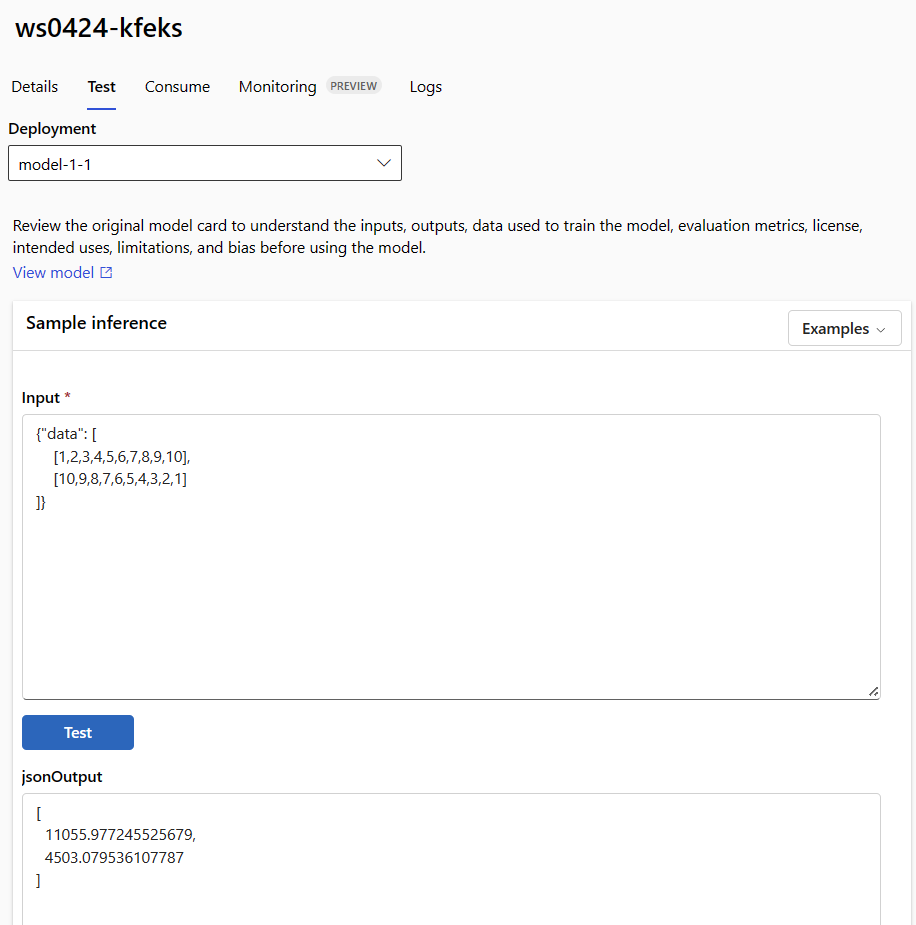
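Create and update operations are asynchronous, so scripts usually poll until provisioning_state reaches a terminal value. The following Python sketch polls a caller-supplied function until it returns Succeeded or Failed, using the values from the provisioning_state table. The function name, the default 5-second interval, the timeout, and the choice to treat any value outside the table as an error are assumptions of this sketch.

```python
import time
from typing import Callable

# provisioning_state values from the table.
IN_PROGRESS = {"Creating", "Updating", "Deleting"}
TERMINAL = {"Succeeded", "Failed"}


def wait_for_provisioning(get_state: Callable[[], str],
                          interval_s: float = 5.0,
                          timeout_s: float = 1800.0,
                          sleep: Callable[[float], None] = time.sleep) -> str:
    """Call get_state() until it returns a terminal provisioning_state, then return it."""
    waited = 0.0
    while True:
        state = get_state()
        if state in TERMINAL:
            return state
        if state not in IN_PROGRESS:
            # Assumption: anything outside the table is treated as an error.
            raise ValueError(f"unexpected provisioning_state: {state!r}")
        if waited >= timeout_s:
            raise TimeoutError(f"still {state} after {waited:.0f} s")
        sleep(interval_s)
        waited += interval_s
```

With the Python SDK, you might pass `lambda: ml_client.online_endpoints.get(name=endpoint_name, local=True).provisioning_state` as `get_state`. The `sleep` parameter exists only so the loop can be exercised without real waiting.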

Invoke the local endpoint to score data by using your model

Invoke the endpoint to score data by using the invoke command, passing a request payload that's stored in a JSON file:

az ml online-endpoint invoke --local --name $ENDPOINT_NAME --request-file endpoints/online/model-1/sample-request.json

If you want to use a REST client (like curl), you must have the scoring URI. To get the scoring URI, run az ml online-endpoint show --local -n $ENDPOINT_NAME. In the returned data, find the scoring_uri attribute.

Invoke the endpoint to score data by using the invoke method, passing a request payload that's stored in a JSON file:

```python
ml_client.online_endpoints.invoke(
    endpoint_name=endpoint_name,
    request_file="../model-1/sample-request.json",
    local=True,
)
```

If you want to use a REST client (like curl), you must have the scoring URI. To get the scoring URI, run the following code. In the returned data, find the scoring_uri attribute.

endpoint = ml_client.online_endpoints.get(endpoint_name, local=True)

scoring_uri = endpoint.scoring_uri
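If you call the scoring URI yourself instead of using invoke, the request is an HTTP POST with a JSON body in the shape that score.py expects (`{"data": [...]}`). The following sketch uses only the Python standard library to build such a request. `build_scoring_request` is a hypothetical helper, and the `key` argument is optional in this sketch; when it's given, it's sent as a bearer token, which is how key-authenticated endpoints in Azure are called.

```python
import json
import urllib.request
from typing import Optional


def build_scoring_request(scoring_uri: str, payload: dict,
                          key: Optional[str] = None) -> urllib.request.Request:
    """Build (but don't send) a POST request for an online endpoint's scoring URI."""
    headers = {"Content-Type": "application/json"}
    if key:
        # Key-authenticated endpoints expect the key as a bearer token.
        headers["Authorization"] = f"Bearer {key}"
    return urllib.request.Request(
        scoring_uri,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    # Same payload shape that run() in score.py reads: {"data": [[...], ...]}
    req = build_scoring_request("http://localhost:49158/score", {"data": [[1, 2, 3]]})
    print(req.get_method(), req.full_url)
```

To send the request, pass it to `urllib.request.urlopen` and parse the JSON response body.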


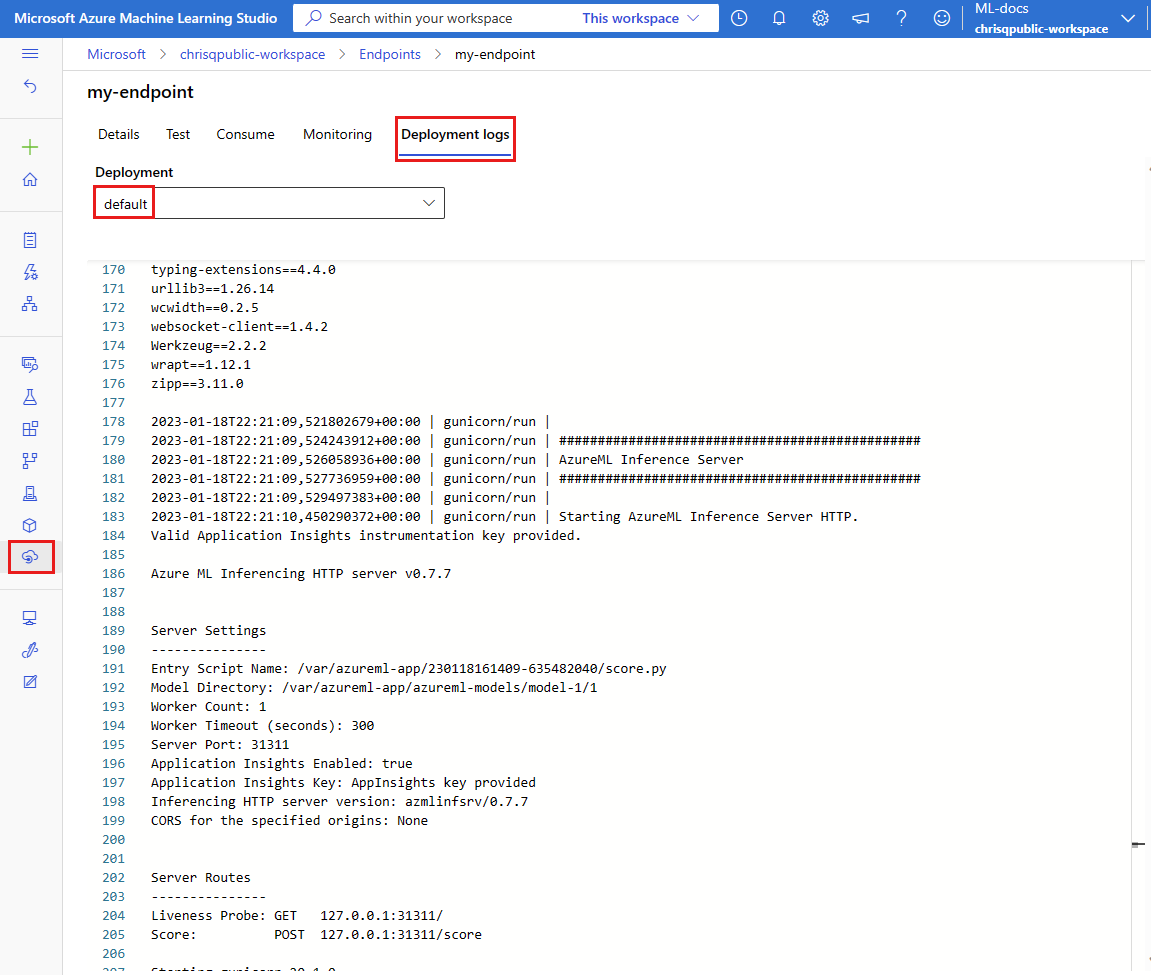

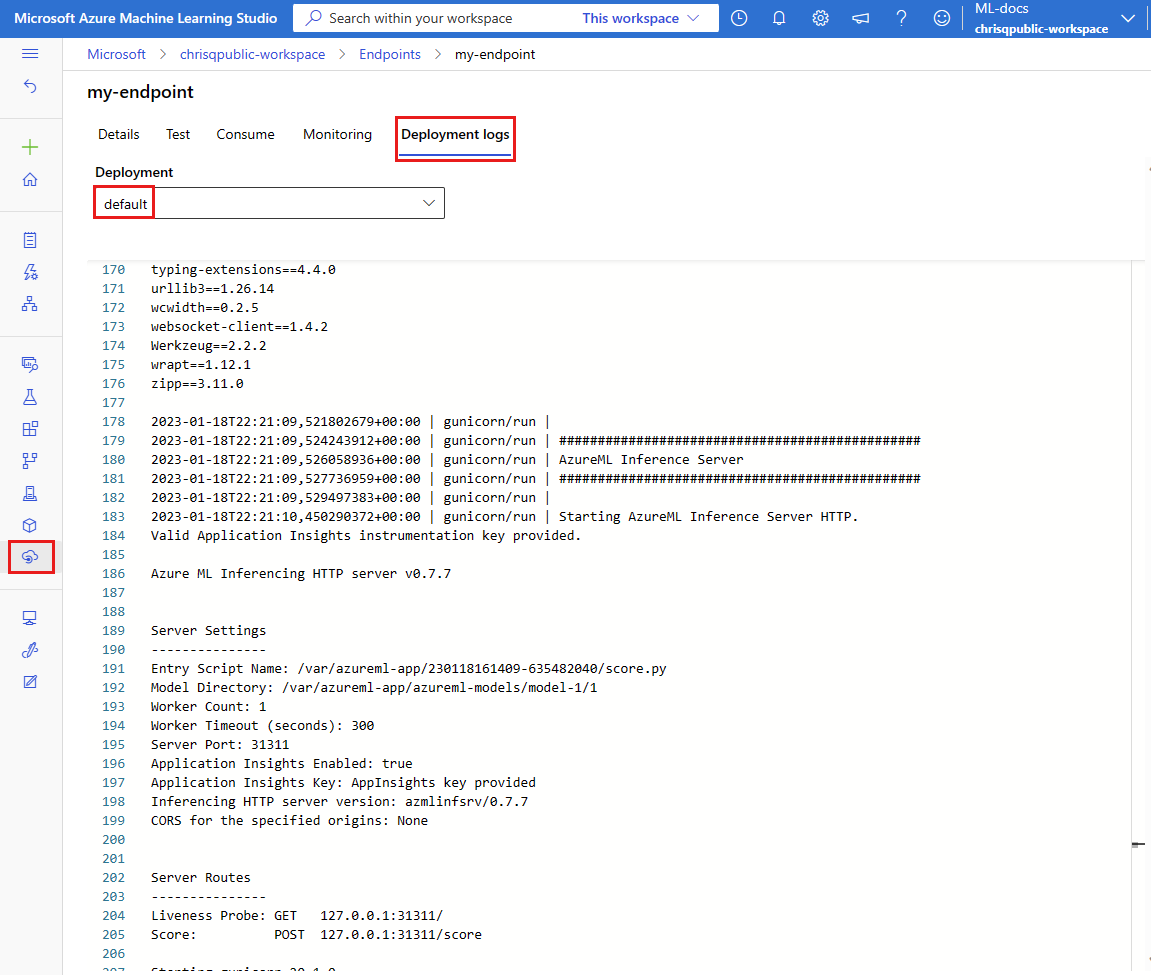

Review the logs for output from the invoke operation

In the example score.py file, the run() method logs some output to the console.

You can view this output by using the get-logs command:

az ml online-deployment get-logs --local -n blue --endpoint $ENDPOINT_NAME

You can view this output by using the get_logs method:

```python
ml_client.online_deployments.get_logs(
    name="blue", endpoint_name=endpoint_name, local=True, lines=50
)
```


Deploy your online endpoint to Azure

Next, deploy your online endpoint to Azure. As a best practice for production, we recommend that you register the model and environment that you use in your deployment.

Register your model and environment

We recommend that you register your model and environment before deployment to Azure so that you can specify their registered names and versions during deployment. After you register your assets, you can reuse them without the need to upload them every time you create deployments. This practice increases reproducibility and traceability.

Unlike deployment to Azure, local deployment doesn't support registered models and environments. Local deployment uses only local model files and environments that are defined by local files.

For deployment to Azure, you can use either local or registered assets (models and environments). In this section of the article, the deployment to Azure uses registered assets, but you have the option of using local assets instead. For an example of a deployment configuration that uploads local files to use for local deployment, see Configure a deployment.

To use a registered model or environment in a deployment, reference it in the form model: azureml:my-model:1 or environment: azureml:my-env:1 (asset name, then version).

For registration, you can extract the YAML definitions of model and environment into separate YAML files in the endpoints/online/managed/sample folder, and use the commands az ml model create and az ml environment create. To learn more about these commands, run az ml model create -h and az ml environment create -h.
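A reference such as azureml:my-model:1 is a plain string, so it's easy to build or validate in code. The following hypothetical helpers handle only the name-and-version form used in this article; other reference forms aren't covered, and these functions aren't part of the Azure Machine Learning SDK.

```python
from typing import NamedTuple

PREFIX = "azureml:"


class AssetRef(NamedTuple):
    name: str
    version: str


def format_ref(name: str, version: str) -> str:
    """Build an azureml:<name>:<version> reference for a deployment definition."""
    return f"{PREFIX}{name}:{version}"


def parse_ref(ref: str) -> AssetRef:
    """Split an azureml:<name>:<version> reference into its name and version."""
    if not ref.startswith(PREFIX):
        raise ValueError(f"not an azureml: reference: {ref!r}")
    name, sep, version = ref[len(PREFIX):].rpartition(":")
    if not sep or not name or not version:
        raise ValueError(f"expected azureml:<name>:<version>, got {ref!r}")
    return AssetRef(name, version)


if __name__ == "__main__":
    print(format_ref("my-model", "1"))    # azureml:my-model:1
    print(parse_ref("azureml:my-env:1"))  # AssetRef(name='my-env', version='1')
```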

Create a YAML definition for the model. Name the file model.yml:

```yaml
$schema: https://azuremlschemas.azureedge.net/latest/model.schema.json
name: my-model
path: ../../model-1/model/
```

Register the model:

az ml model create -n my-model -v 1 -f endpoints/online/managed/sample/model.yml

Create a YAML definition for the environment. Name the file environment.yml:

```yaml
$schema: https://azuremlschemas.azureedge.net/latest/environment.schema.json
name: my-env
image: mcr.microsoft.com/azureml/openmpi4.1.0-ubuntu22.04:latest
conda_file: ../../model-1/environment/conda.yaml
```

Register the environment:

az ml environment create -n my-env -v 1 -f endpoints/online/managed/sample/environment.yml
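The relative paths in model.yml and environment.yml are resolved from the folder that contains the YAML file, which is consistent with `../../model-1/...` in a file under endpoints/online/managed/sample reaching endpoints/online/model-1 in this repo. The following standard-library sketch applies that rule so you can check where a path points before you run az ml model create. `resolve_yaml_path` is a hypothetical helper, and the resolution rule is an assumption based on this repo's layout.

```python
import posixpath


def resolve_yaml_path(yaml_file: str, relative_path: str) -> str:
    """Resolve a path from a YAML spec against the folder that holds the YAML file."""
    base = posixpath.dirname(yaml_file)
    return posixpath.normpath(posixpath.join(base, relative_path))


if __name__ == "__main__":
    print(resolve_yaml_path("endpoints/online/managed/sample/model.yml",
                            "../../model-1/model/"))
    # endpoints/online/model-1/model
```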

For more information on how to register your model as an asset, see Register a model by using the Azure CLI or Python SDK. For more information on creating an environment, see Create a custom environment.

Register a model:

```python
from azure.ai.ml.entities import Model
from azure.ai.ml.constants import AssetTypes

file_model = Model(
    path="../model-1/model/",
    type=AssetTypes.CUSTOM_MODEL,
    name="my-model",
    description="Model created from local file.",
)
ml_client.models.create_or_update(file_model)
```

Register the environment:

```python
from azure.ai.ml.entities import Environment

env_docker_conda = Environment(
    image="mcr.microsoft.com/azureml/openmpi4.1.0-ubuntu22.04:latest",
    conda_file="../model-1/environment/conda.yaml",
    name="my-env",
    description="Environment created from a Docker image plus Conda environment.",
)
ml_client.environments.create_or_update(env_docker_conda)
```

To learn how to register your model as an asset so that you can specify its registered name and version during deployment, see Register a model by using the Azure CLI or Python SDK.

For more information on creating an environment, see Create a custom environment.

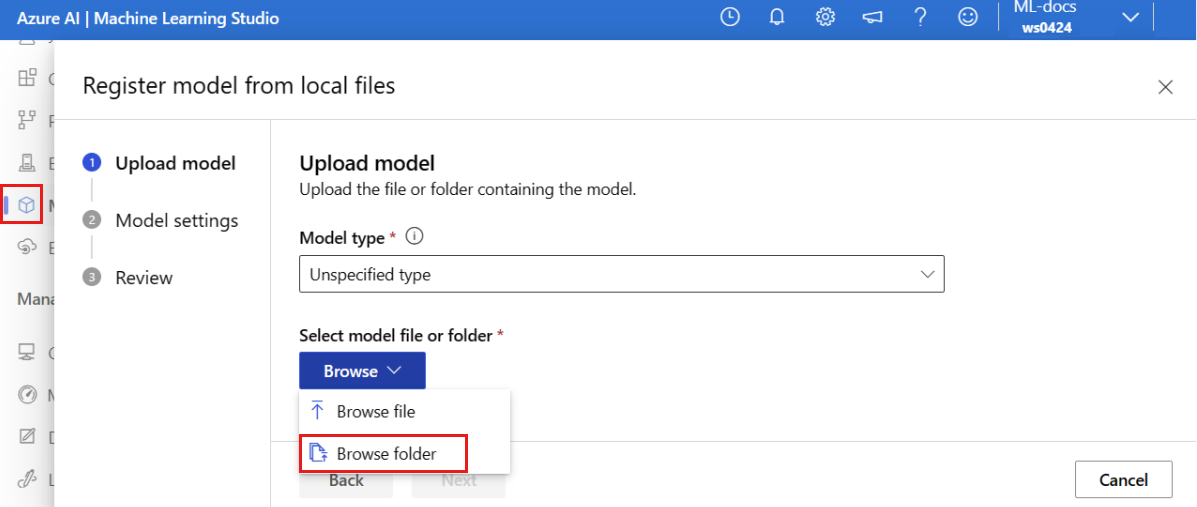

Register the model

A model registration is a logical entity in the workspace that can contain a single model file or a directory of multiple files. As a best practice for production, register the model and environment. Before you create the endpoint and deployment in this article, register the model folder that contains the model.

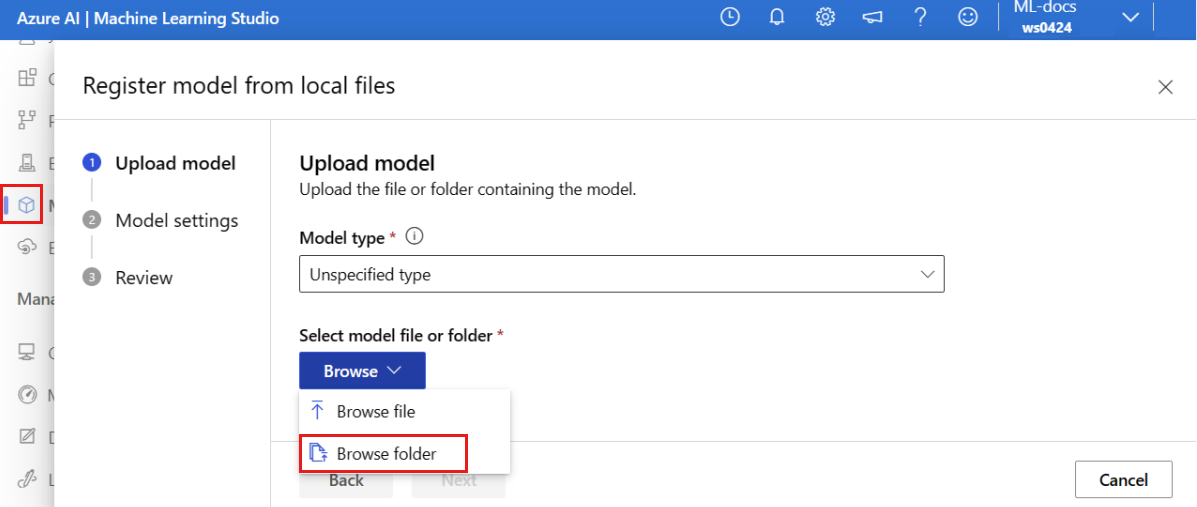

To register the example model, follow these steps:

1. Go to Azure Machine Learning studio.
2. On the left pane, select the Models page.
3. Select Register, and then choose From local files.
4. Select Unspecified type for the Model type.
5. Select Browse, and choose Browse folder.
6. Select the \azureml-examples\cli\endpoints\online\model-1\model folder from the local copy of the repo that you cloned or downloaded earlier. When you're prompted, select Upload and wait for the upload to finish.
7. Select Next.
8. Enter a friendly name for the model. The steps in this article assume that the model is named model-1.
9. Select Next, and then select Register to finish registration.

For more information on how to work with registered models, see Work with registered models.

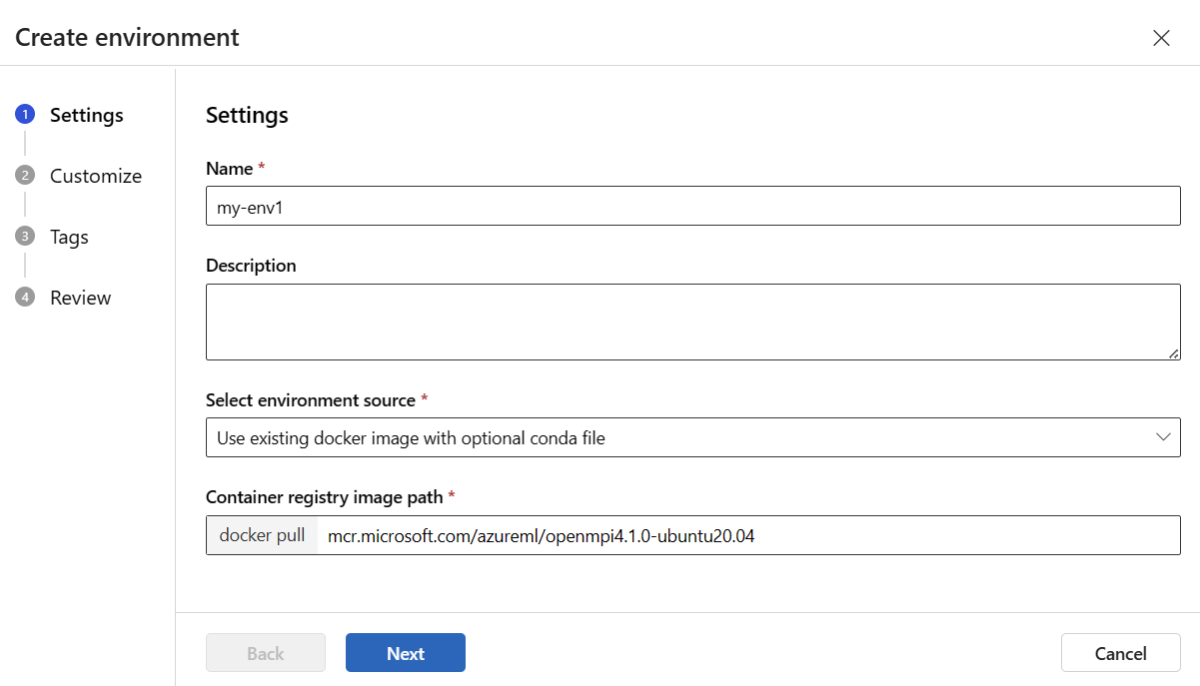

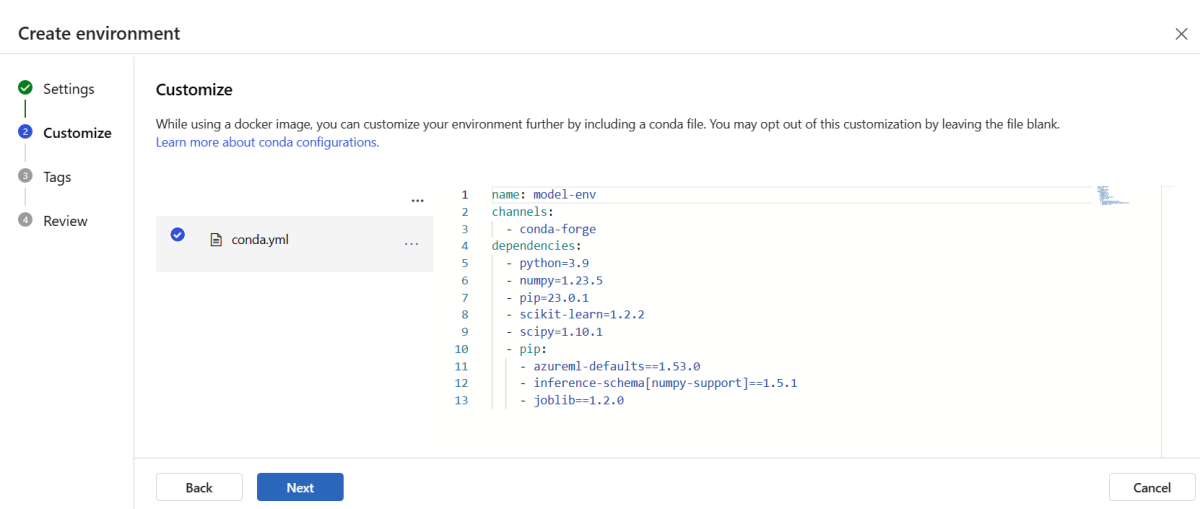

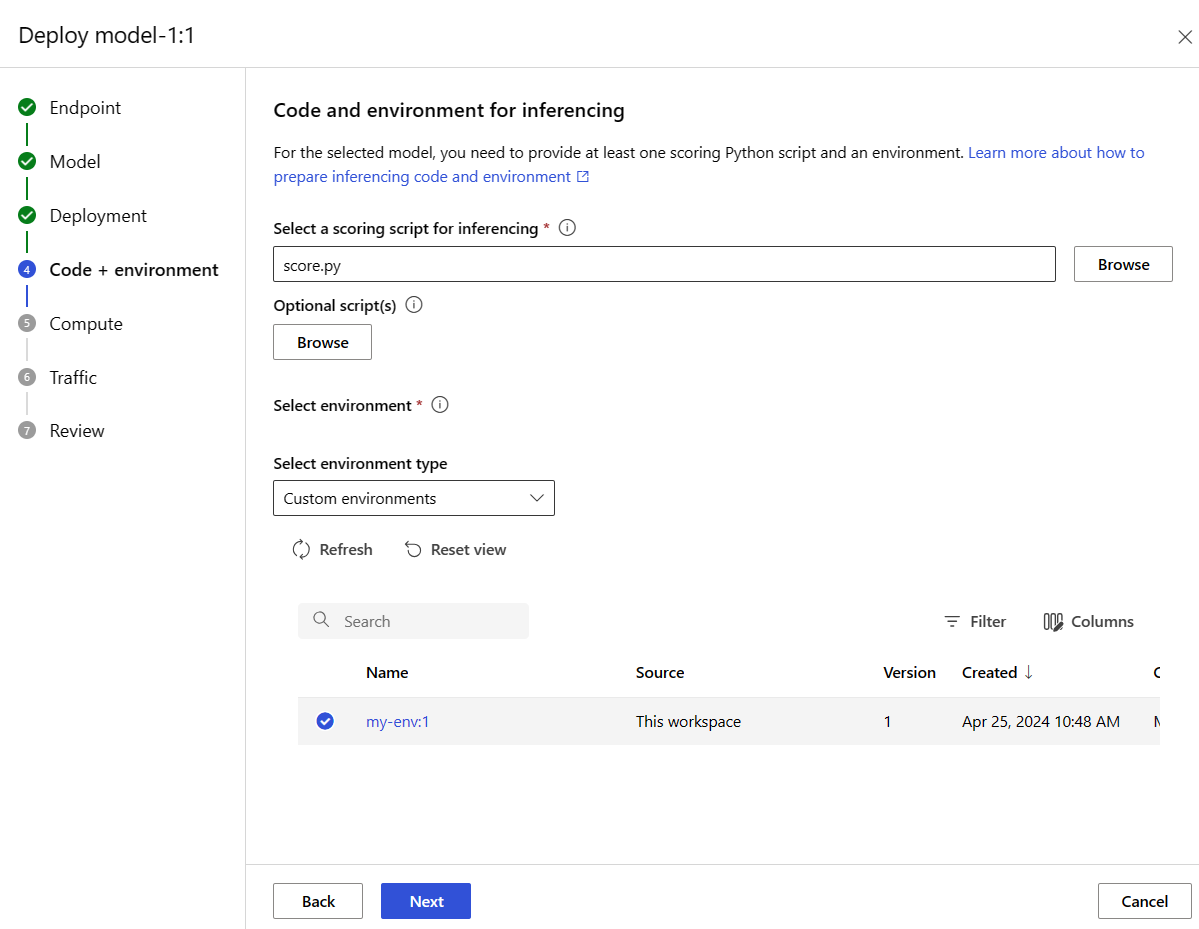

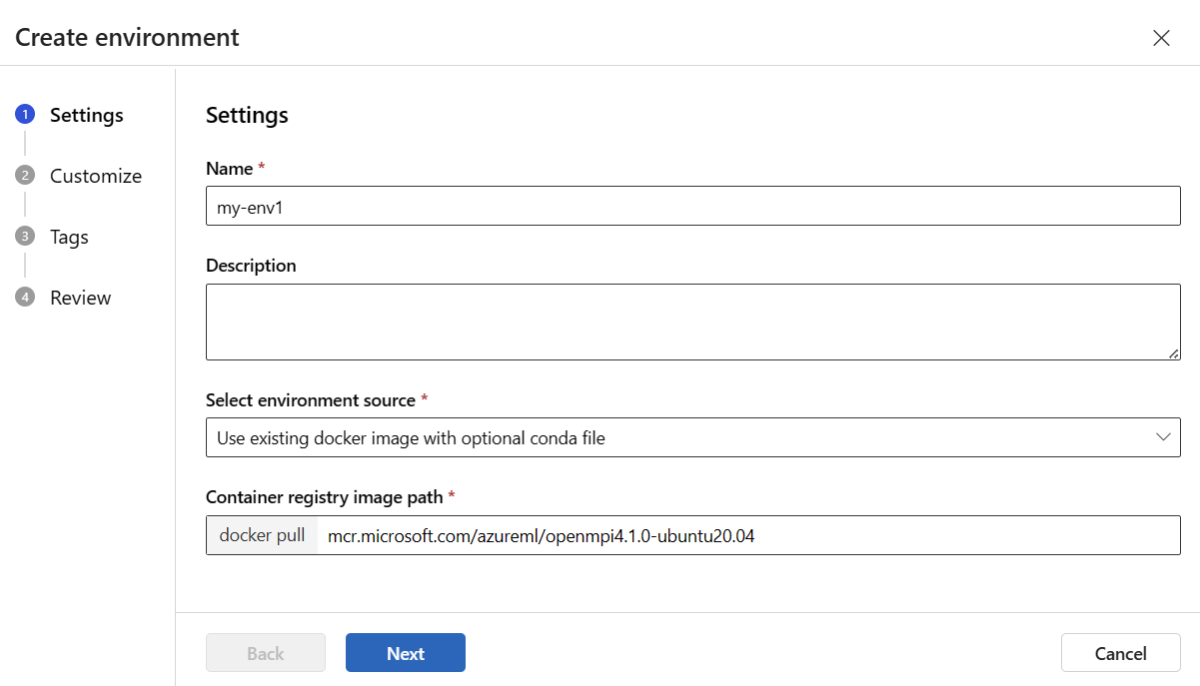

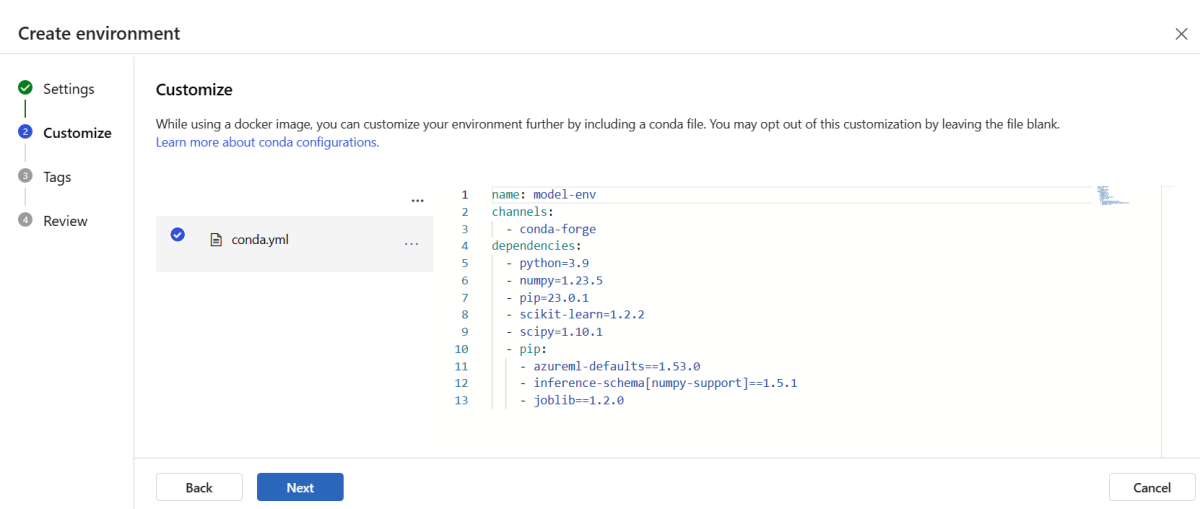

Create and register the environment

1. On the left pane, select the Environments page.
2. Select the Custom environments tab, and then choose Create.
3. On the Settings page, enter a name for the environment, such as my-env.
4. For Select environment source, choose Use existing docker image with optional conda source.
5. Select Next to go to the Customize page.
6. Copy the contents of the \azureml-examples\cli\endpoints\online\model-1\environment\conda.yaml file from the repo that you cloned or downloaded earlier.
7. Paste the contents into the text box.
8. Select Next until you get to the Create page, and then select Create.

For more information on how to create an environment in the studio, see Create an environment.

- To register the model by using a template, you must first upload the model file to Blob Storage. The following example uses the az storage blob upload-batch command to upload a file to the default storage for your workspace:

az storage blob upload-batch -d $AZUREML_DEFAULT_CONTAINER/model -s cli/endpoints/online/model-1/model --account-name $AZURE_STORAGE_ACCOUNT

- After you upload the file, use the template to create a model registration. In the following example, the modelUri parameter contains the path to the model:

```bash
az deployment group create -g $RESOURCE_GROUP \
  --template-file arm-templates/model-version.json \
  --parameters \
    workspaceName=$WORKSPACE \
    modelAssetName="sklearn" \
    modelUri="azureml://subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/workspaces/$WORKSPACE/datastores/$AZUREML_DEFAULT_DATASTORE/paths/model/sklearn_regression_model.pkl"
```

- Part of the environment is a conda file that specifies the model dependencies that are needed to host the model. The following example demonstrates how to read the contents of the conda file into environment variables:


# <read_condafile>

CONDA_FILE=$(cat cli/endpoints/online/model-1/environment/conda.yaml)

# </read_condafile>

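Because CONDA_FILE holds multi-line YAML, the way it's expanded matters. The environment-registration step passes it as condaFile="$CONDA_FILE". The quotes keep the line breaks and indentation that YAML depends on. The following sketch shows the difference. It uses a small, hypothetical conda file written to a temporary path in place of the sample's conda.yaml. It also uses $(< file), a Bash shorthand for $(cat file); both forms strip trailing newlines.

```shell
#!/usr/bin/env bash
# Minimal sketch: read a multi-line conda file into a shell variable and show
# why the variable must be quoted when it's passed on.
# ASSUMPTION: the file contents below are a hypothetical stand-in for
# cli/endpoints/online/model-1/environment/conda.yaml.
conda_path=$(mktemp)
cat > "$conda_path" <<'EOF'
name: model-env
dependencies:
  - python=3.8
EOF

# Same effect as CONDA_FILE=$(cat "$conda_path") in Bash.
CONDA_FILE=$(< "$conda_path")

# Quoted expansion: the YAML keeps its 3 lines and indentation.
quoted_lines=$(( $(printf '%s\n' "$CONDA_FILE" | wc -l) ))

# Unquoted expansion: word splitting breaks the YAML into separate words.
unquoted_words=$(( $(printf '%s\n' $CONDA_FILE | wc -l) ))

echo "quoted: $quoted_lines lines, unquoted: $unquoted_words words"
# prints: quoted: 3 lines, unquoted: 5 words
rm -f "$conda_path"
```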

- The following example demonstrates how to use the template to register the environment. The contents of the conda file from the previous step are passed to the template by using the condaFile parameter:

set -x

#<get_access_token>
TOKEN=$(az account get-access-token --query accessToken -o tsv)
#</get_access_token>

# <create_variables>
SUBSCRIPTION_ID=$(az account show --query id -o tsv)
LOCATION=$(az ml workspace show --query location -o tsv)
RESOURCE_GROUP=$(az group show --query name -o tsv)
WORKSPACE=$(az configure -l --query "[?name=='workspace'].value" -o tsv)
#</create_variables>

# <set_endpoint_name>
export ENDPOINT_NAME="endpoint-$RANDOM"
# </set_endpoint_name>

#<api_version>
API_VERSION="2022-05-01"
#</api_version>

echo -e "Using:\nSUBSCRIPTION_ID=$SUBSCRIPTION_ID\nLOCATION=$LOCATION\nRESOURCE_GROUP=$RESOURCE_GROUP\nWORKSPACE=$WORKSPACE"

# define how to wait: poll the async operation URI until it succeeds or fails
wait_for_completion () {
  operation_id=$1
  status="unknown"

  if [[ -z $operation_id || $operation_id == "null" ]]; then
    echo "operation id cannot be empty"
    exit 1
  fi

  while [[ $status != "Succeeded" && $status != "Failed" ]]
  do
    echo "Getting operation status from: $operation_id"
    operation_result=$(curl --location --request GET "$operation_id" --header "Authorization: Bearer $TOKEN")
    # TODO error handling here
    status=$(echo "$operation_result" | jq -r '.status')
    echo "Current operation status: $status"
    sleep 5
  done

  if [[ $status == "Failed" ]]
  then
    error=$(echo "$operation_result" | jq -r '.error')
    echo "Error: $error"
  fi
}

# <get_storage_details>
# Get values for storage account
response=$(curl --location --request GET "https://management.chinacloudapi.cn/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.MachineLearningServices/workspaces/$WORKSPACE/datastores?api-version=$API_VERSION&isDefault=true" \
  --header "Authorization: Bearer $TOKEN")

AZUREML_DEFAULT_DATASTORE=$(echo "$response" | jq -r '.value[0].name')
AZUREML_DEFAULT_CONTAINER=$(echo "$response" | jq -r '.value[0].properties.containerName')
export AZURE_STORAGE_ACCOUNT=$(echo "$response" | jq -r '.value[0].properties.accountName')
# </get_storage_details>

# <upload_code>
az storage blob upload-batch -d $AZUREML_DEFAULT_CONTAINER/score -s cli/endpoints/online/model-1/onlinescoring --account-name $AZURE_STORAGE_ACCOUNT
# </upload_code>

# <create_code>
az deployment group create -g $RESOURCE_GROUP \
  --template-file arm-templates/code-version.json \
  --parameters \
    workspaceName=$WORKSPACE \
    codeAssetName="score-sklearn" \
    codeUri="https://$AZURE_STORAGE_ACCOUNT.blob.core.chinacloudapi.cn/$AZUREML_DEFAULT_CONTAINER/score"
# </create_code>

# <upload_model>
az storage blob upload-batch -d $AZUREML_DEFAULT_CONTAINER/model -s cli/endpoints/online/model-1/model --account-name $AZURE_STORAGE_ACCOUNT
# </upload_model>

# <create_model>
az deployment group create -g $RESOURCE_GROUP \