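This article describes how to resolve common issues with log search alerts in Azure Monitor. It also provides solutions to common problems with the functionality and configuration of log alerts.

You can use log alerts to evaluate resource logs at a set frequency by using a Log Analytics query, and to fire an alert based on the results. Rules can trigger one or more actions by using action groups. To learn more about the functionality and terminology of log search alerts, see Log alerts in Azure Monitor.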

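Note

This article doesn't cover cases where the alert rule fired and you can see it in the Azure portal, but no notification was sent. For those cases, see Troubleshoot alerts.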

A log search alert didn't fire when it should have

If your log search alert didn't fire when it should have, check the following items:

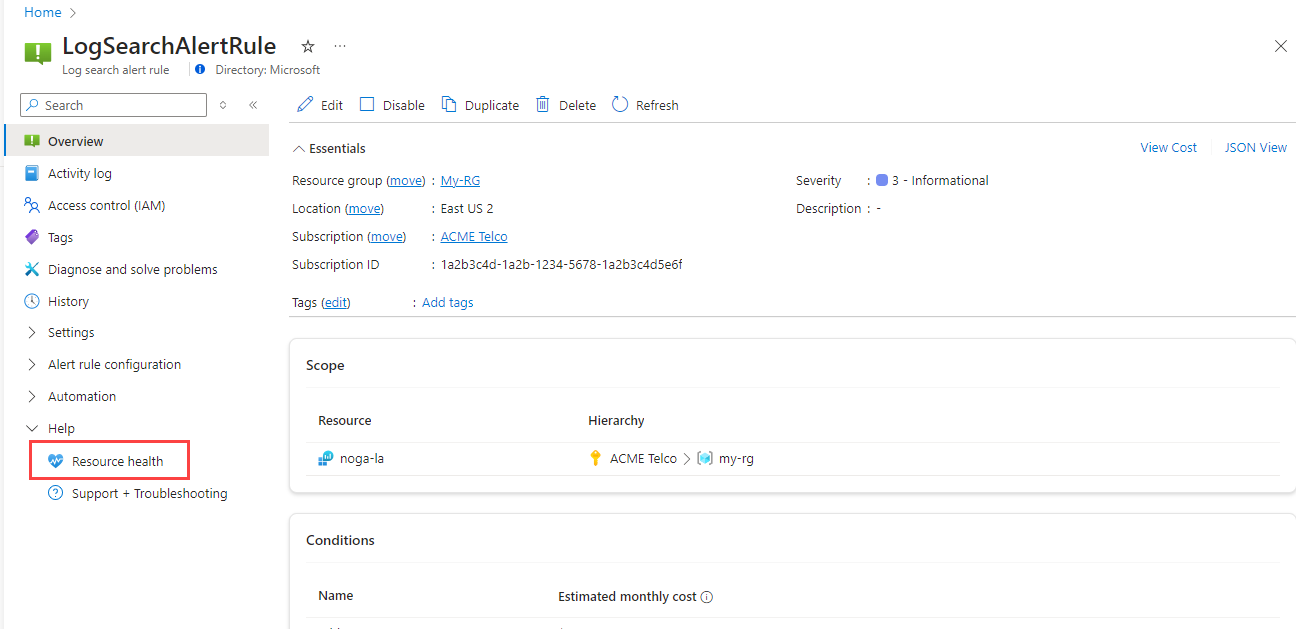

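Is the alert rule in a degraded or unavailable health state?

View the health status of your log search alert rule:

In the portal, select "Monitor", and then select "Alerts".

On the command bar, select "Alert rules". The page shows all alert rules on all subscriptions.

Select the log search alert rule that you want to monitor.

In the left pane, under "Help", select "Resource health".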

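Check the log ingestion latency.

Azure Monitor processes terabytes of customer logs from around the world, which can cause log ingestion latency.

Logs are semi-structured data and inherently have higher latency than metrics. If fired alerts are delayed by more than 4 minutes, consider using metric alerts. You can send data from logs to the metric store by using metric alerts for logs.

To mitigate latency, the system retries the alert evaluation multiple times. The alert fires after the data arrives, which in most cases is not the same as the time the log was recorded.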
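To make the latency check concrete, the following is a minimal sketch that measures how long records took to arrive. It assumes you have already exported, for each record, the time it was generated and the time it was ingested (in Log Analytics these correspond to the TimeGenerated column and the ingestion_time() function). The function names and sample values are hypothetical, and the 4-minute threshold is simply the figure mentioned above, used here for illustration.

```python
from datetime import datetime, timedelta, timezone

# Illustrative threshold, taken from the 4-minute guidance above.
LATENCY_THRESHOLD = timedelta(minutes=4)


def ingestion_delays(records):
    """Return the delay (ingested - generated) for each (generated, ingested) pair."""
    return [ingested - generated for generated, ingested in records]


def exceeds_threshold(records, threshold=LATENCY_THRESHOLD):
    """Return True if any record took longer than `threshold` to be ingested."""
    return any(delay > threshold for delay in ingestion_delays(records))


# Hypothetical sample: one record arrived after 1 minute, another after 6 minutes.
base = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
records = [
    (base, base + timedelta(minutes=1)),
    (base, base + timedelta(minutes=6)),
]

print(max(ingestion_delays(records)))
print(exceeds_threshold(records))
```

If a check like this regularly reports delays above your tolerance, that supports moving the scenario to a metric alert as described above.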

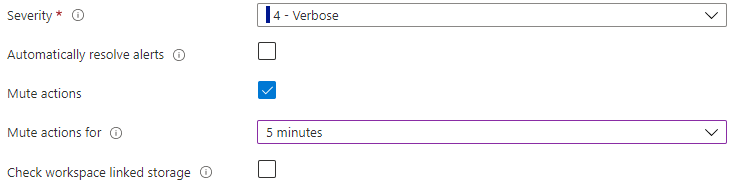

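Are the actions muted, or is the alert rule configured to resolve automatically?

A common issue is that you think the alert didn't fire, when the rule was actually configured so that the alert wouldn't fire. Check the advanced options of the log search alert rule to verify that neither of the following is selected:

- The "Mute actions" checkbox: lets you mute the actions of fired alerts for a set period of time.

- "Automatically resolve alerts": configures the alert to fire only once each time the condition is met.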

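Was the alert rule's resource moved or deleted?

If the target resource of an alert rule is moved, renamed, or deleted, all log alert rules that reference that resource break. To fix this issue, re-create the alert rules with a valid target resource as the scope.

Does the alert rule use a system-assigned managed identity?

When you create a log alert rule with a system-assigned managed identity, the identity is created without any permissions. After you create the rule, assign the appropriate roles to the rule's identity so that it can access the data you want to query. For example, you might need to grant it the Reader role on the relevant Log Analytics workspaces, or the Reader role and the Database Viewer role on the relevant ADX cluster. For more information about using managed identities in log alerts, see Managed identities.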

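Is the query used in the log search alert rule valid?

When a log alert rule is created, the query is validated for correct syntax. However, the query provided in the rule can sometimes start to fail later. Some common reasons are:

- The rule was created through the API, and the user skipped validation.

- The query runs on multiple resources, and one or more of those resources was deleted or moved.

- The query fails because:

  - Data stopped flowing to a table in the query for more than 30 days.

  - A custom log table hasn't been created yet, because the data flow hasn't started.

  - Changes in the query language include a revised format for commands and functions, so the previously provided query is no longer valid.

Azure Resource Health monitors the health of your cloud resources, including log search alert rules. When a log search alert rule is healthy, the rule runs and the query executes successfully.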

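Was the log search alert rule disabled?

If the query of a log search alert rule fails to evaluate continuously for a week, Azure Monitor disables the rule automatically.

The following example shows the activity log event that is submitted when a rule is disabled.

Activity log example when a rule is disabled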

{
  "caller": "Microsoft.Insights/ScheduledQueryRules",
  "channels": "Operation",
  "claims": {
    "http://schemas.xmlsoap.org/ws/2005/05/identity/claims/spn": "Microsoft.Insights/ScheduledQueryRules"
  },
  "correlationId": "abcdefg-4d12-1234-4256-21233554aff",
  "description": "Alert: test-bad-alerts is disabled by the System due to : Alert has been failing consistently with the same exception for the past week",
  "eventDataId": "f123e07-bf45-1234-4565-123a123455b",
  "eventName": {
    "value": "",
    "localizedValue": ""
  },
  "category": {
    "value": "Administrative",
    "localizedValue": "Administrative"
  },
  "eventTimestamp": "2019-03-22T04:18:22.8569543Z",
  "id": "/SUBSCRIPTIONS/<subscriptionId>/RESOURCEGROUPS/<ResourceGroup>/PROVIDERS/MICROSOFT.INSIGHTS/SCHEDULEDQUERYRULES/TEST-BAD-ALERTS",
  "level": "Informational",
  "operationId": "",
  "operationName": {
    "value": "Microsoft.Insights/ScheduledQueryRules/disable/action",
    "localizedValue": "Microsoft.Insights/ScheduledQueryRules/disable/action"
  },
  "resourceGroupName": "<Resource Group>",
  "resourceProviderName": {
    "value": "MICROSOFT.INSIGHTS",
    "localizedValue": "Microsoft Insights"
  },
  "resourceType": {
    "value": "MICROSOFT.INSIGHTS/scheduledqueryrules",
    "localizedValue": "MICROSOFT.INSIGHTS/scheduledqueryrules"
  },
  "resourceId": "/SUBSCRIPTIONS/<subscriptionId>/RESOURCEGROUPS/<ResourceGroup>/PROVIDERS/MICROSOFT.INSIGHTS/SCHEDULEDQUERYRULES/TEST-BAD-ALERTS",
  "status": {
    "value": "Succeeded",
    "localizedValue": "Succeeded"
  },
  "subStatus": {
    "value": "",
    "localizedValue": ""
  },
  "submissionTimestamp": "2019-03-22T04:18:22.8569543Z",
  "subscriptionId": "<SubscriptionId>",
  "properties": {
    "resourceId": "/SUBSCRIPTIONS/<subscriptionId>/RESOURCEGROUPS/<ResourceGroup>/PROVIDERS/MICROSOFT.INSIGHTS/SCHEDULEDQUERYRULES/TEST-BAD-ALERTS",
    "subscriptionId": "<SubscriptionId>",
    "resourceGroup": "<ResourceGroup>",
    "eventDataId": "12e12345-12dd-1234-8e3e-12345b7a1234",
    "eventTimeStamp": "03/22/2019 04:18:22",
    "issueStartTime": "03/22/2019 04:18:22",
    "operationName": "Microsoft.Insights/ScheduledQueryRules/disable/action",
    "status": "Succeeded",
    "reason": "Alert has been failing consistently with the same exception for the past week"
  },
  "relatedEvents": []
}
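If you export activity log events as JSON, you can scan them for this kind of event. The following is a minimal sketch that assumes the events have already been loaded into Python dictionaries shaped like the sample above; how you retrieve them is outside its scope. The function name `find_disabled_rules` and the trimmed sample event are hypothetical and used only for illustration.

```python
# Operation name recorded when a scheduled query rule is disabled (from the sample event).
DISABLE_OPERATION = "Microsoft.Insights/ScheduledQueryRules/disable/action"


def find_disabled_rules(events):
    """Return a (resourceId, reason) tuple for each event that records a rule being disabled."""
    disabled = []
    for event in events:
        operation = event.get("operationName", {}).get("value", "")
        # Compare case-insensitively, because casing varies across fields in the sample.
        if operation.lower() != DISABLE_OPERATION.lower():
            continue
        reason = event.get("properties", {}).get("reason", "")
        disabled.append((event.get("resourceId", ""), reason))
    return disabled


# Trimmed, hypothetical event that keeps only the fields the function reads.
sample_event = {
    "operationName": {"value": "Microsoft.Insights/ScheduledQueryRules/disable/action"},
    "resourceId": "/SUBSCRIPTIONS/<subscriptionId>/RESOURCEGROUPS/<ResourceGroup>"
                  "/PROVIDERS/MICROSOFT.INSIGHTS/SCHEDULEDQUERYRULES/TEST-BAD-ALERTS",
    "properties": {
        "reason": "Alert has been failing consistently with the same exception for the past week"
    },
}

for resource_id, reason in find_disabled_rules([sample_event]):
    print(f"{resource_id}: {reason}")
```

The reason text tells you why the rule was disabled, so you can fix the underlying query before you re-enable the rule.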

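A log search alert fired when it shouldn't have

A log alert rule configured in Azure Monitor might fire unexpectedly. The following sections describe some common reasons.

Was the alert triggered because of latency issues?

Azure Monitor processes terabytes of customer logs from around the world, which can cause log ingestion latency. Built-in capabilities help prevent false alerts, but false alerts can still occur on very latent data (roughly over 30 minutes) and on data with latency spikes.

Logs are semi-structured data and inherently have higher latency than metrics. If alerts misfire often, consider using metric alerts. You can send data from logs to the metric store by using metric alerts for logs.

Log search alerts work best when you're trying to detect specific data in the logs. They're less effective when you're trying to detect a lack of data in the logs, such as alerting on a virtual machine heartbeat.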

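Error messages when configuring log search alert rules

See the following sections for specific error messages and their resolutions.

The query couldn't be validated because you need permission for the logs

If you receive this error message when you create or edit an alert rule query, make sure that you have permission to read the target resource logs.

- Permission required to read logs in workspace-context access mode: Microsoft.OperationalInsights/workspaces/query/read.

- Permission required to read logs in resource-context access mode (including workspace-based Application Insights resources): Microsoft.Insights/logs/tableName/read.

To learn more about permissions, see Manage access to Log Analytics workspaces.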

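One-minute frequency isn't supported for this query

Using a one-minute alert rule frequency has some limitations. When you set the alert rule frequency to one minute, an internal manipulation is performed to optimize the query. If the query contains unsupported operations, this manipulation can cause the query to fail.

For a list of unsupported scenarios, see this note.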

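Failed to resolve scalar expression named <>

This error message can be returned when you create or edit an alert rule query if:

- You're referencing a column that doesn't exist in the table schema.

- You're referencing a column that wasn't included in an earlier project clause of the query.

To mitigate this issue, either add the column to the earlier project clause or use column_ifexists (the current name of the deprecated columnifexists).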

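The alert rule service limit was reached

For details about the number of log search alert rules per subscription and the maximum limits of resources, see Azure Monitor service limits. See Check the total number of log alert rules in use to find out how many log search alert rules are currently in use. If you've reached the quota limit, the following steps might help resolve the issue.

Delete or disable log search alert rules that aren't used anymore.

Use splitting by dimensions to reduce the number of rules. When you use "Split by dimensions", one rule can monitor many resources.

If you need the quota limit to be increased, continue to open a support request and provide the following information:

- The subscription IDs and resource IDs for which the quota limit needs to be increased

- The reason for the quota increase

- The requested quota limit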

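Incomplete time filtering in ARG and ADX queries

When you use Azure Data Explorer (ADX) or Azure Resource Graph (ARG) queries in log search alerts, you might find that the "Aggregation granularity" setting doesn't apply a time filter to the query. This can lead to unexpected results and potential performance issues, because the query returns all 30 days of data instead of the data in the intended time range.

To resolve this issue, explicitly apply a time filter in your ARG and ADX queries. Follow these steps:

- Determine the time range: Identify the specific time range that you want to query. For example, if you want to query data from the last 24 hours, you need to specify that time range in the query.

- Modify the query: Add a time filter to your ARG or ADX query to limit the returned data to the required time range. Here's an example of how to modify the query: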

// Original query without time filter
resourcechanges
| where type == "microsoft.compute/virtualmachines"

// Modified query with time filter
resourcechanges
| where type == "microsoft.compute/virtualmachines"
| where properties.changeAttributes.timestamp > ago(1d)

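- Test the query: Run the modified query to make sure that it returns the expected results within the specified time range.

- Update the alert: Update the log search alert to use the modified query with the explicit time filter. This ensures that the alert is based on the correct data and doesn't include unnecessary historical data.

By applying explicit time filters in your ARG and ADX queries, you avoid retrieving excessive data and keep your log search alerts accurate and efficient.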
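If you generate or update many alert queries from a script, the "Modify the query" step can be automated. The following is a minimal sketch, not an Azure API: the helper `with_time_filter` is hypothetical and only appends a `where ... > ago(...)` clause to the query text, as in the modified query shown earlier. It accepts only simple lookback values such as `1d`, `12h`, or `30m`, which is a deliberate simplification of the timespan formats that the query language supports.

```python
import re

# Simplified lookback format: a number followed by d, h, m, or s (for example "1d" or "30m").
_LOOKBACK = re.compile(r"\d+(\.\d+)?[dhms]")


def with_time_filter(query: str, timestamp_column: str, lookback: str) -> str:
    """Append an explicit time filter to a query, limiting results to the lookback window."""
    if not _LOOKBACK.fullmatch(lookback):
        raise ValueError(f"Unsupported lookback value: {lookback!r}")
    return f"{query.rstrip()}\n| where {timestamp_column} > ago({lookback})"


original = 'resourcechanges\n| where type == "microsoft.compute/virtualmachines"'
print(with_time_filter(original, "properties.changeAttributes.timestamp", "1d"))
```

The timestamp column differs from table to table, so the helper takes it as a parameter instead of guessing it. Run the resulting query before you update the alert, as described in the "Test the query" step.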

Next steps

- Learn about log alerts in Azure.

- Learn more about configuring log alerts.

- Learn more about log queries.