Applies to: Python SDK azure-ai-ml v2 (current)

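This tutorial introduces some of the most used features of the Azure Machine Learning service: creating, registering, and deploying a model. It helps you become familiar with the core concepts of Azure Machine Learning and their most common usage.

In this tutorial, you use Azure Machine Learning to train, register, and deploy a machine learning model, all from a Python notebook. By the end, you have a working endpoint that you can use for predictions.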

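You learn how to:

- Run a training job on scalable cloud compute

- Register your trained model

- Deploy the model as an online endpoint

- Test the endpoint with sample data

You create a training script that handles data preparation, training, and model registration. After you train the model, you deploy it as an endpoint and then call the endpoint for inferencing.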

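You take the following steps:

- Set up a handle to your Azure Machine Learning workspace

- Create your training script

- Create a scalable compute resource, a compute cluster

- Create and run a command job that runs the training script on the compute cluster, configured with the appropriate job environment

- View the output of your training script

- Deploy the newly trained model as an endpoint

- Call the Azure Machine Learning endpoint for inferencing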

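Prerequisites

- To use Azure Machine Learning, you need a workspace. If you don't have one, complete "Create resources you need to get started" to create a workspace and learn more about using it.

Important

If your Azure Machine Learning workspace is configured with a managed virtual network, you might need to add outbound rules to allow access to the public Python package repositories. For more information, see "Scenario: Access public machine learning packages".

- Sign in to the studio and select your workspace if it isn't already open.

- Open or create a notebook in your workspace:

Set your kernel and open in Visual Studio Code (VS Code)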

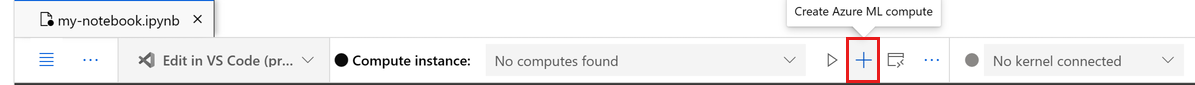

In the top bar above your opened notebook, create a compute instance if you don't already have one.

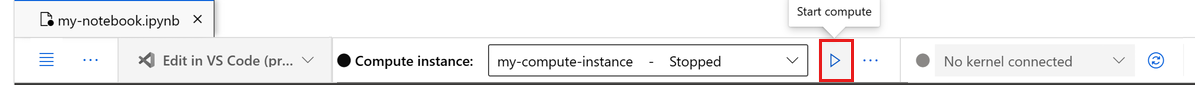

If the compute instance is stopped, select "Start compute" and wait until it's running.

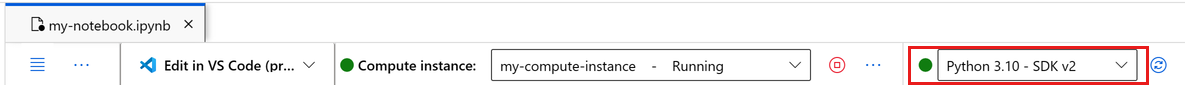

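Wait until the compute instance is running. Then make sure that the kernel, shown at the top right, is Python 3.10 - SDK v2. If it isn't, use the dropdown list to select this kernel. If you don't see this kernel, verify that your compute instance is running. If it is, select the "Refresh" button at the top right of the notebook.

If you see a banner that says you need to be authenticated, select "Authenticate".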

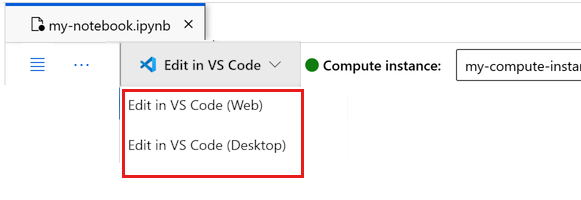

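You can run the notebook here, or open it in VS Code for a full integrated development environment (IDE) with the power of Azure Machine Learning resources. Select "Open in VS Code", then select either the web or desktop option. When launched this way, VS Code is attached to your compute instance, the kernel, and the workspace file system.

Important

The rest of this tutorial contains cells of the tutorial notebook. Copy and paste them into your new notebook, or switch to the notebook now if you cloned it.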

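Create a handle to the workspace

Before you dive into the code, you need a way to reference your workspace. The workspace is the top-level resource for Azure Machine Learning and provides a centralized place to work with all the artifacts you create when you use Azure Machine Learning.

Create ml_client as a handle to the workspace. You use this client to manage all resources and jobs.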

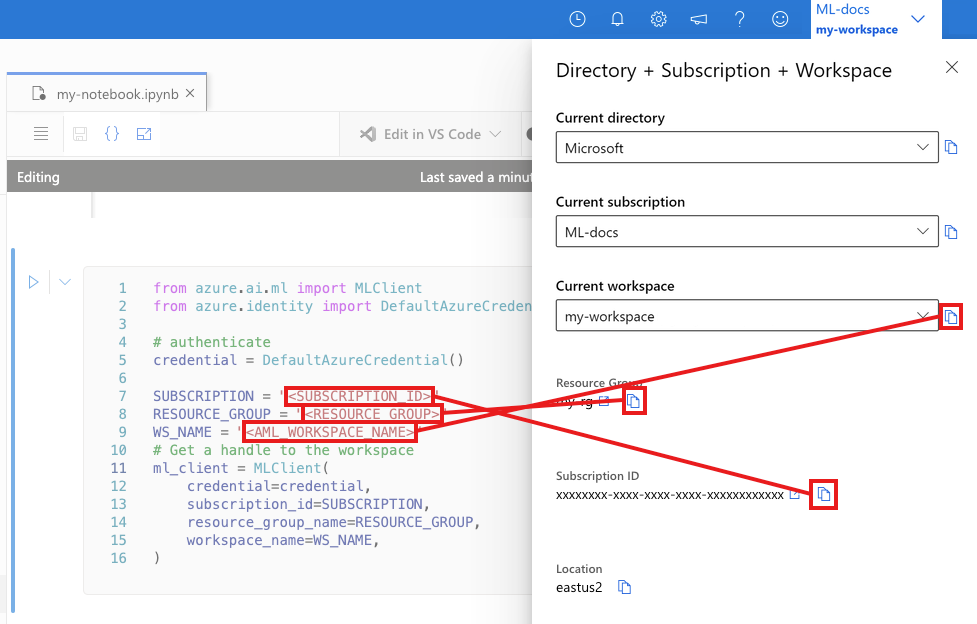

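In the next cell, enter your subscription ID, resource group name, and workspace name. To find these values:

- In the upper-right Azure Machine Learning studio toolbar, select your workspace name.

- Copy the values for workspace, resource group, and subscription ID into the code.

- Copy one value, close the area, and paste it. Then come back for the next value.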

from azure.ai.ml import MLClient
from azure.identity import DefaultAzureCredential

# authenticate
credential = DefaultAzureCredential()

SUBSCRIPTION = "<SUBSCRIPTION_ID>"
RESOURCE_GROUP = "<RESOURCE_GROUP>"
WS_NAME = "<AML_WORKSPACE_NAME>"

# Get a handle to the workspace
ml_client = MLClient(
    credential=credential,
    subscription_id=SUBSCRIPTION,
    resource_group_name=RESOURCE_GROUP,
    workspace_name=WS_NAME,
)

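Note

Creating MLClient doesn't connect to the workspace. The client initialization is lazy: it waits until the first time it needs to make a call. That happens in the next code cell.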

# Verify that the handle works correctly.
# If you get an error here, modify your SUBSCRIPTION, RESOURCE_GROUP, and WS_NAME in the previous cell.
ws = ml_client.workspaces.get(WS_NAME)
print(ws.location, ":", ws.resource_group)
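As an alternative to pasting the three identifiers into the notebook, they could be read from environment variables. The following is a minimal sketch, not part of the tutorial notebook. The variable names `AZURE_SUBSCRIPTION_ID`, `AZURE_RESOURCE_GROUP`, and `AZUREML_WORKSPACE_NAME` and the helper `load_workspace_settings` are hypothetical choices made for this illustration.

```python
import os

# Hypothetical environment variable names; the SDK does not define them.
REQUIRED_VARS = (
    "AZURE_SUBSCRIPTION_ID",
    "AZURE_RESOURCE_GROUP",
    "AZUREML_WORKSPACE_NAME",
)


def load_workspace_settings(env=None):
    """Return the three workspace identifiers, or raise if any is missing."""
    env = os.environ if env is None else env
    values = {name: env.get(name, "").strip() for name in REQUIRED_VARS}
    # Treat empty values and untouched "<PLACEHOLDER>" strings as missing.
    missing = [
        name
        for name, value in values.items()
        if not value or (value.startswith("<") and value.endswith(">"))
    ]
    if missing:
        raise ValueError("Missing workspace settings: " + ", ".join(missing))
    return {
        "subscription_id": values["AZURE_SUBSCRIPTION_ID"],
        "resource_group_name": values["AZURE_RESOURCE_GROUP"],
        "workspace_name": values["AZUREML_WORKSPACE_NAME"],
    }


# Made-up values for demonstration; a notebook would call load_workspace_settings().
example_env = {
    "AZURE_SUBSCRIPTION_ID": "00000000-0000-0000-0000-000000000000",
    "AZURE_RESOURCE_GROUP": "my-resource-group",
    "AZUREML_WORKSPACE_NAME": "my-workspace",
}
settings = load_workspace_settings(example_env)
print(settings)
```

The returned keys match the keyword arguments used in the cell above, so the dictionary could be passed as `MLClient(credential=credential, **settings)`.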

Create the training script

Create the training script, which is the main.py Python file.

First, create a source folder for the script:

import os

train_src_dir = "./src"
os.makedirs(train_src_dir, exist_ok=True)

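This script preprocesses the data and splits it into test and training datasets. It then uses this data to train a tree-based model and returns the output model.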
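To make the split ratio concrete, the following sketch computes how many rows land in each dataset for a fractional test size. It assumes that scikit-learn's `train_test_split` rounds the test set size up and gives the remaining rows to the training set. The row count of 1000 is hypothetical, and `split_sizes` is a helper invented for this illustration.

```python
import math


def split_sizes(n_samples, test_ratio):
    """Return (n_train, n_test) for a fractional test size."""
    if not 0.0 < test_ratio < 1.0:
        raise ValueError("test_ratio must be strictly between 0 and 1")
    # Assumption: the test set size is rounded up, the rest is used for training.
    n_test = math.ceil(test_ratio * n_samples)
    n_train = n_samples - n_test
    return n_train, n_test


# Hypothetical dataset of 1000 rows, with the two ratios that appear in this tutorial.
for ratio in (0.2, 0.25):
    n_train, n_test = split_sizes(1000, ratio)
    print(f"test ratio {ratio}: {n_train} training rows, {n_test} test rows")
```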

MLflow logs the parameters and metrics during the job run.

The following cell uses IPython magic to write the training script into the directory you just created.

%%writefile {train_src_dir}/main.py
import os
import argparse
import pandas as pd
import mlflow
import mlflow.sklearn
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import classification_report
from sklearn.model_selection import train_test_split

def main():
    """Main function of the script."""

    # input and output arguments
    parser = argparse.ArgumentParser()
    parser.add_argument("--data", type=str, help="path to input data")
    parser.add_argument("--test_train_ratio", type=float, required=False, default=0.25)
    parser.add_argument("--n_estimators", required=False, default=100, type=int)
    parser.add_argument("--learning_rate", required=False, default=0.1, type=float)
    parser.add_argument("--registered_model_name", type=str, help="model name")
    args = parser.parse_args()

    # Start Logging
    mlflow.start_run()

    # enable autologging
    mlflow.sklearn.autolog()

    ###################
    #<prepare the data>
    ###################
    print(" ".join(f"{k}={v}" for k, v in vars(args).items()))

    print("input data:", args.data)

    credit_df = pd.read_csv(args.data, header=1, index_col=0)

    mlflow.log_metric("num_samples", credit_df.shape[0])
    mlflow.log_metric("num_features", credit_df.shape[1] - 1)

    train_df, test_df = train_test_split(
        credit_df,
        test_size=args.test_train_ratio,
    )
    ####################
    #</prepare the data>
    ####################

    ##################
    #<train the model>
    ##################
    # Extracting the label column
    y_train = train_df.pop("default payment next month")

    # convert the dataframe values to array
    X_train = train_df.values

    # Extracting the label column
    y_test = test_df.pop("default payment next month")

    # convert the dataframe values to array
    X_test = test_df.values

    print(f"Training with data of shape {X_train.shape}")

    clf = GradientBoostingClassifier(
        n_estimators=args.n_estimators, learning_rate=args.learning_rate
    )
    clf.fit(X_train, y_train)

    y_pred = clf.predict(X_test)

    print(classification_report(y_test, y_pred))
    ###################
    #</train the model>
    ###################

    ##########################
    #<save and register model>
    ##########################
    # Registering the model to the workspace
    print("Registering the model via MLFlow")

    # pin numpy
    conda_env = {
        'name': 'mlflow-env',
        'channels': ['conda-forge'],
        'dependencies': [
            'python=3.10.15',
            'pip<=21.3.1',
            {
                'pip': [
                    'mlflow==2.17.0',
                    'cloudpickle==2.2.1',
                    'pandas==1.5.3',
                    'psutil==5.8.0',
                    'scikit-learn==1.5.2',
                    'numpy==1.26.4',
                ]
            }
        ],
    }

    mlflow.sklearn.log_model(
        sk_model=clf,
        registered_model_name=args.registered_model_name,
        artifact_path=args.registered_model_name,
        conda_env=conda_env,
    )

    # Saving the model to a file
    mlflow.sklearn.save_model(
        sk_model=clf,
        path=os.path.join(args.registered_model_name, "trained_model"),
    )
    ###########################
    #</save and register model>
    ###########################

    # Stop Logging
    mlflow.end_run()

if __name__ == "__main__":
    main()

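After the model is trained, the script saves the model file and registers it to the workspace. You can then use the registered model in inferencing endpoints.

You might need to select "Refresh" to see the new folder and script in "Files".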
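The training script takes all of its settings from command-line arguments, and any argument that isn't passed falls back to its default. The sketch below rebuilds the same parser in isolation and parses a hypothetical argument list to show how the values resolve. The file name `credit.csv` is made up for the illustration.

```python
import argparse


def build_parser():
    """Recreate the argument parser used by main.py."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--data", type=str, help="path to input data")
    parser.add_argument("--test_train_ratio", type=float, required=False, default=0.25)
    parser.add_argument("--n_estimators", required=False, default=100, type=int)
    parser.add_argument("--learning_rate", required=False, default=0.1, type=float)
    parser.add_argument("--registered_model_name", type=str, help="model name")
    return parser


# Hypothetical argument list; --test_train_ratio and --n_estimators are omitted on purpose.
sample_argv = [
    "--data", "credit.csv",
    "--learning_rate", "0.25",
    "--registered_model_name", "credit_defaults_model",
]
args = build_parser().parse_args(sample_argv)

# Omitted arguments keep their defaults; passed strings are converted to the declared type.
print(vars(args))
```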

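Configure the command

Now that you have a script that performs the desired task, use the general-purpose command, which can run command-line actions. A command-line action can call system commands directly or run a script.

Create input variables to specify the input data, split ratio, learning rate, and registered model name. The command:

- Uses an environment that defines the software and runtime libraries that the training script needs. Azure Machine Learning provides many curated or ready-made environments that are useful for common training and inference scenarios. You use one of those environments here. In "Tutorial: Train a model in Azure Machine Learning", you learn how to create a custom environment.

- Configures the command-line action itself, which is python main.py in this case. You can access the inputs and outputs in the command by using the ${{ ... }} notation.

- Accesses the data from a file on the internet.

- Runs on a serverless compute cluster that is created automatically, because no compute resource is specified.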
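The `${{ ... }}` notation in the list above can be pictured as template substitution. The following sketch is only an illustration of that idea for simple scalar inputs. It is not how Azure Machine Learning resolves the expressions (a data input, for example, resolves to a path on the compute rather than to its literal value), and `render_command` is a hypothetical helper.

```python
import re

# Matches expressions such as ${{inputs.learning_rate}} and captures the input name.
PLACEHOLDER = re.compile(r"\$\{\{\s*inputs\.(\w+)\s*\}\}")


def render_command(template, inputs):
    """Replace each ${{inputs.<name>}} expression with the matching input value."""

    def replace(match):
        name = match.group(1)
        if name not in inputs:
            raise KeyError(f"No input named {name!r}")
        return str(inputs[name])

    return PLACEHOLDER.sub(replace, template)


template = (
    "python main.py --test_train_ratio ${{inputs.test_train_ratio}} "
    "--learning_rate ${{inputs.learning_rate}}"
)
rendered = render_command(template, {"test_train_ratio": 0.2, "learning_rate": 0.25})
print(rendered)
```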

from azure.ai.ml import command
from azure.ai.ml import Input

registered_model_name = "credit_defaults_model"

job = command(
    inputs=dict(
        data=Input(
            type="uri_file",
            path="https://azuremlexamples.blob.core.chinacloudapi.cn/datasets/credit_card/default_of_credit_card_clients.csv",
        ),
        test_train_ratio=0.2,
        learning_rate=0.25,
        registered_model_name=registered_model_name,
    ),
    code="./src/",  # location of source code
    command="python main.py --data ${{inputs.data}} --test_train_ratio ${{inputs.test_train_ratio}} --learning_rate ${{inputs.learning_rate}} --registered_model_name ${{inputs.registered_model_name}}",
    environment="azureml://registries/azureml/environments/sklearn-1.5/labels/latest",
    display_name="credit_default_prediction",
)

Submit the job

Submit the job to run in Azure Machine Learning. This time, use create_or_update on ml_client.

ml_client.create_or_update(job)

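View the job output and wait for the job to finish

Select the link in the output of the previous cell to view the job in Azure Machine Learning studio.

The output of this job looks like this in Azure Machine Learning studio. Explore the tabs for details such as metrics and outputs. When training finishes, the job registers a model in your workspace.

Important

Wait until the job status shows "Completed" before you continue, typically 2 to 3 minutes. If the compute cluster has scaled down to zero, startup can take up to 10 minutes.

While you wait, explore the job details in the studio:

- "Metrics" tab: view the training metrics logged by MLflow

- "Outputs + logs" tab: check the training logs

- "Models" tab: view the registered model (after the job finishes)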
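Instead of watching the studio, the notebook could wait for the job in code. The sketch below is a generic polling loop, written so that it runs without a workspace. The helper `wait_for_status` is hypothetical, and the set of terminal status names is an assumption about the values reported for a job. The demonstration feeds it a fake sequence of statuses.

```python
import time

# Assumption: these are the status values after which a job no longer changes.
TERMINAL_STATES = {"Completed", "Failed", "Canceled"}


def wait_for_status(get_status, poll_seconds=30, timeout_seconds=1800, sleep=time.sleep):
    """Call get_status() until it returns a terminal state, then return that state."""
    waited = 0
    while True:
        status = get_status()
        if status in TERMINAL_STATES:
            return status
        if waited >= timeout_seconds:
            raise TimeoutError(f"Job still in state {status!r} after {waited} seconds")
        sleep(poll_seconds)
        waited += poll_seconds


# Demonstration with a fake status source instead of a real job.
fake_statuses = iter(["Starting", "Running", "Completed"])
final_status = wait_for_status(
    lambda: next(fake_statuses), poll_seconds=0, sleep=lambda seconds: None
)
print(final_status)
```

In the notebook, assuming the result of `ml_client.create_or_update(job)` was kept in a variable such as `returned_job`, the status source could be `lambda: ml_client.jobs.get(returned_job.name).status`.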

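Deploy the model as an online endpoint

Use an online endpoint to deploy your machine learning model as a web service in the Azure cloud.

To deploy a machine learning service, use the model you registered.

Create a new online endpoint

Now that you registered a model, create an online endpoint. The endpoint name must be unique in the entire Azure region. For this tutorial, create a unique name by using a universally unique identifier (UUID).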

import uuid

# Creating a unique name for the endpoint
online_endpoint_name = "credit-endpoint-" + str(uuid.uuid4())[:8]
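A generated name can be checked before the endpoint is created. The sketch below assumes that endpoint names must start with a letter, may contain only letters, digits, and hyphens, and must be 3 to 32 characters long. These rules are an assumption for this illustration and should be checked against the current service limits. `make_endpoint_name` is a hypothetical helper.

```python
import re
import uuid

# Assumed naming rules: starts with a letter; letters, digits, hyphens; 3 to 32 characters.
NAME_PATTERN = re.compile(r"^[A-Za-z][A-Za-z0-9-]{2,31}$")


def make_endpoint_name(prefix="credit-endpoint-"):
    """Append the first 8 characters of a random UUID to the prefix and validate the result."""
    name = prefix + str(uuid.uuid4())[:8]
    if not NAME_PATTERN.match(name):
        raise ValueError(f"{name!r} does not satisfy the assumed naming rules")
    return name


candidate = make_endpoint_name()
print(candidate, len(candidate))
```

With the prefix used in this tutorial, the name is 24 characters long, which leaves some room under the assumed 32-character limit.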

Create the endpoint.

# Expect the endpoint creation to take a few minutes
from azure.ai.ml.entities import (
    ManagedOnlineEndpoint,
    ManagedOnlineDeployment,
    Model,
    Environment,
)

# create an online endpoint
endpoint = ManagedOnlineEndpoint(
    name=online_endpoint_name,
    description="this is an online endpoint",
    auth_mode="key",
    tags={
        "training_dataset": "credit_defaults",
        "model_type": "sklearn.GradientBoostingClassifier",
    },
)

endpoint = ml_client.online_endpoints.begin_create_or_update(endpoint).result()

print(f"Endpoint {endpoint.name} provisioning state: {endpoint.provisioning_state}")

Note

Expect the endpoint creation to take a few minutes.

After you create the endpoint, retrieve it as shown in the following code:

endpoint = ml_client.online_endpoints.get(name=online_endpoint_name)

print(
    f'Endpoint "{endpoint.name}" with provisioning state "{endpoint.provisioning_state}" is retrieved'
)

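Deploy the model to the endpoint

After you create the endpoint, deploy the model with the entry script. Each endpoint can have multiple deployments. You can specify rules that direct traffic to these deployments. In this example, you create a single deployment that handles 100% of the incoming traffic. Choose a color name for the deployment, such as blue, green, or red. The choice is arbitrary.

To find the latest version of your registered model, check the "Models" page in Azure Machine Learning studio. Alternatively, use the following code to retrieve the latest version number.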

# Let's pick the latest version of the model
latest_model_version = max(
    [int(m.version) for m in ml_client.models.list(name=registered_model_name)]
)
print(f'Latest model is version "{latest_model_version}" ')
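The cell above converts each version to `int` before taking the maximum. The following sketch, using a hypothetical list of version strings, shows why that conversion matters: comparing versions as text would rank "9" above "10".

```python
# Hypothetical version strings, in the form a model registry might report them.
versions = ["1", "2", "9", "10"]

# Text comparison is character by character, so "9" sorts after "10".
latest_as_text = max(versions)

# Numeric comparison gives the expected result. Non-numeric versions are skipped here.
latest_as_number = max(int(v) for v in versions if v.isdigit())

print("text comparison:", latest_as_text)
print("numeric comparison:", latest_as_number)
```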

Deploy the latest version of the model.

# picking the model to deploy. Here we use the latest version of our registered model
model = ml_client.models.get(name=registered_model_name, version=latest_model_version)

# Expect this deployment to take approximately 6 to 8 minutes.
# create an online deployment.
# if you run into an out of quota error, change the instance_type to a comparable VM that is available.
# Learn more on https://www.azure.cn/pricing/details/machine-learning/.
blue_deployment = ManagedOnlineDeployment(
    name="blue",
    endpoint_name=online_endpoint_name,
    model=model,
    instance_type="Standard_DS3_v2",
    instance_count=1,
)

blue_deployment = ml_client.begin_create_or_update(blue_deployment).result()

Note

Expect this deployment to take approximately 6 to 8 minutes.

When the deployment is done, you're ready to test it.
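The tutorial mentions rules that direct traffic to deployments, but the test later in this tutorial calls the blue deployment by name and doesn't depend on them. As a sketch of what such a rule might look like, the helper below builds a mapping from deployment names to percentages that sends everything to one deployment. `route_all_traffic_to` is a hypothetical helper. The commented lines assume the `endpoint` and `ml_client` objects from the earlier cells and aren't executed here.

```python
def route_all_traffic_to(target, deployment_names):
    """Return a traffic mapping that gives 100% to target and 0% to the others."""
    if target not in deployment_names:
        raise ValueError(f"Unknown deployment {target!r}")
    return {name: (100 if name == target else 0) for name in deployment_names}


traffic = route_all_traffic_to("blue", ["blue"])
print(traffic)

# In the notebook, the mapping could then be applied to the endpoint (not run here):
# endpoint.traffic = traffic
# ml_client.online_endpoints.begin_create_or_update(endpoint).result()
```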

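Test with a sample query

Now that the model is deployed to the endpoint, use it to run inference.

Create a sample request file that follows the design expected by the run method in the scoring script.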

deploy_dir = "./deploy"
os.makedirs(deploy_dir, exist_ok=True)

%%writefile {deploy_dir}/sample-request.json
{
  "input_data": {
    "columns": [0,1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19,20,21,22],
    "index": [0, 1],
    "data": [
      [20000,2,2,1,24,2,2,-1,-1,-2,-2,3913,3102,689,0,0,0,0,689,0,0,0,0],
      [10, 9, 8, 7, 6, 5, 4, 3, 2, 1, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1, 10, 9, 8]
    ]
  }
}

# test the blue deployment with some sample data
ml_client.online_endpoints.invoke(
    endpoint_name=online_endpoint_name,
    request_file="./deploy/sample-request.json",
    deployment_name="blue",
)
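The request file uses an `input_data` object with `columns`, `index`, and `data`, where every row must have one value per column. The sketch below builds the same payload in Python and checks that the rows are consistent before serializing. `build_request` is a hypothetical helper, and the rows are the two samples from the request file above.

```python
import json


def build_request(rows):
    """Build an input_data payload with positional column and row indexes."""
    if not rows:
        raise ValueError("At least one row is required")
    width = len(rows[0])
    for position, row in enumerate(rows):
        if len(row) != width:
            raise ValueError(f"Row {position} has {len(row)} values, expected {width}")
    return {
        "input_data": {
            "columns": list(range(width)),
            "index": list(range(len(rows))),
            "data": rows,
        }
    }


rows = [
    [20000, 2, 2, 1, 24, 2, 2, -1, -1, -2, -2, 3913, 3102, 689, 0, 0, 0, 0, 689, 0, 0, 0, 0],
    [10, 9, 8, 7, 6, 5, 4, 3, 2, 1, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1, 10, 9, 8],
]
payload = build_request(rows)
request_text = json.dumps(payload)
print(len(payload["input_data"]["columns"]), "columns,", len(rows), "rows")
```

The resulting `request_text` could be written to `./deploy/sample-request.json` in place of the `%%writefile` cell.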

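Clean up resources

If you don't need the endpoint, delete it to stop using the resource. Before you delete the endpoint, make sure that no other deployments are using it.

Note

Expect the deletion to take approximately 20 minutes.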

ml_client.online_endpoints.begin_delete(name=online_endpoint_name)

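Stop the compute instance

If you don't need it now, stop the compute instance:

- In the studio, in the left pane, select "Compute".

- In the top tabs, select "Compute instances".

- Select the compute instance in the list.

- On the top toolbar, select "Stop".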

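Delete all resources

Important

The resources that you created can be used as prerequisites to other Azure Machine Learning tutorials and how-to articles.

If you don't plan to use any of the resources that you created, delete them so you don't incur any charges: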

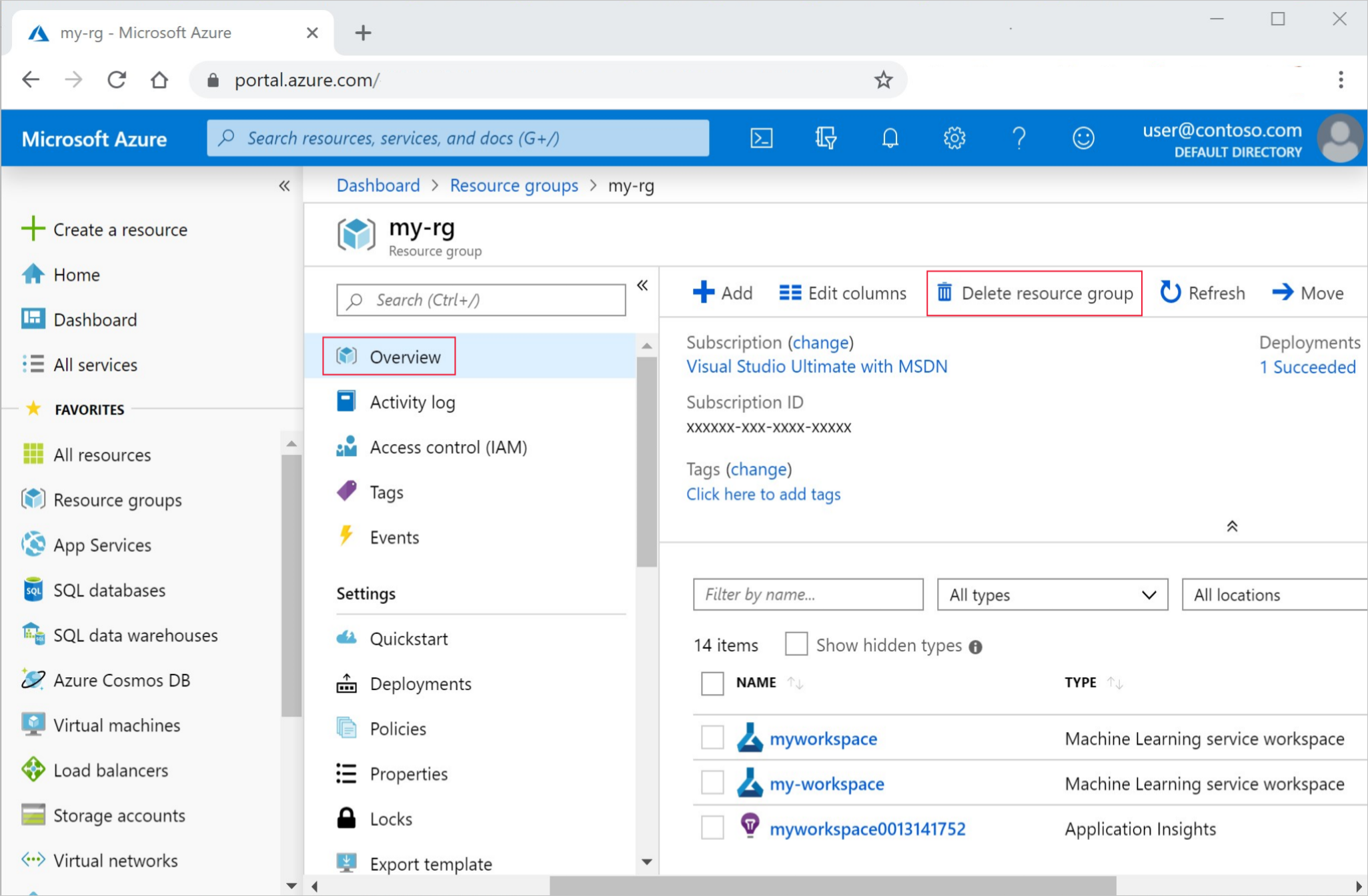

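- In the Azure portal, enter "Resource groups" in the search box and select it from the results.

- From the list, select the resource group that you created.

- On the "Overview" page, select "Delete resource group".

- Enter the resource group name. Then select "Delete".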

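Next steps

Explore more ways to build with Azure Machine Learning:

| Tutorial | Description |
|---|---|
| Upload, access, and explore your data | Store large data in the cloud and retrieve it from notebooks |
| Deploy a model as an online endpoint | Learn advanced deployment configurations |
| Create production pipelines | Build automated, reusable ML workflows |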