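Azure Stream Analytics on IoT Edge lets developers deploy near-real-time analytical intelligence closer to IoT devices so that they can unlock the full value of device-generated data. Azure Stream Analytics is designed for low latency, resiliency, efficient use of bandwidth, and compliance. Enterprises can deploy control logic close to industrial operations and complement the big data analytics done in the cloud.

Azure Stream Analytics on IoT Edge runs within the Azure IoT Edge framework. After you create a job in Stream Analytics, you can deploy and manage it by using IoT Hub.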

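Common scenarios

This section describes common scenarios for Stream Analytics on IoT Edge. The following diagram shows the flow of data between IoT devices and the Azure cloud.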

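Low-latency command and control

Manufacturing safety systems must respond to operational data with ultra-low latency. With Stream Analytics on IoT Edge, you can analyze sensor data in near real time and issue commands to stop a machine or trigger an alert when an anomaly is detected.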
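The pattern described here can be illustrated with a minimal Python sketch. It is not the Stream Analytics engine or its query language; it only shows the shape of the logic (read a sensor value, compare it with a limit, emit a command). The field names `machine_id` and `temperature`, the `stop` action, and the limit of 90.0 are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Reading:
    machine_id: str      # hypothetical field name
    temperature: float   # hypothetical field, degrees Celsius


@dataclass(frozen=True)
class Command:
    machine_id: str
    action: str


def detect_and_command(readings: Iterable[Reading], limit: float = 90.0) -> Iterator[Command]:
    """Yield a 'stop' command for every reading whose temperature exceeds the limit."""
    for reading in readings:
        if reading.temperature > limit:
            yield Command(machine_id=reading.machine_id, action="stop")


# Usage: only the second reading is above the (assumed) limit.
stream = [Reading("press-1", 71.5), Reading("press-2", 96.2), Reading("press-1", 74.0)]
commands = list(detect_and_command(stream))
```

Because the check runs next to the machine, the decision does not wait for a round trip to the cloud.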

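Limited connectivity to the cloud

Mission-critical systems, such as remote mining equipment, connected vessels, or offshore drilling rigs, need to analyze and react to data even when cloud connectivity is intermittent. With Stream Analytics, your streaming logic runs independently of network connectivity, and you can choose what to send to the cloud for further processing or storage.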

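Limited bandwidth

The volume of data produced by jet engines or connected cars can be so large that it must be filtered or preprocessed before it is sent to the cloud. With Stream Analytics, you can filter or aggregate the data that needs to be sent to the cloud.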
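To make the bandwidth saving concrete, here is a small Python sketch of a tumbling-window average: events are grouped into fixed, non-overlapping time windows and only one summary row per device and window is kept. This is an illustration of the aggregation idea, not Stream Analytics code; the event layout `(timestamp_seconds, device_id, value)` and the 10-second window are assumptions.

```python
from collections import defaultdict
from statistics import mean


def tumbling_average(events, window_seconds=10):
    """Average values per device within fixed, non-overlapping time windows.

    `events` is an iterable of (timestamp_seconds, device_id, value) tuples
    (a hypothetical layout chosen for this sketch).
    """
    buckets = defaultdict(list)
    for ts, device_id, value in events:
        # Every timestamp maps to exactly one window, identified by its start.
        window_start = (ts // window_seconds) * window_seconds
        buckets[(window_start, device_id)].append(value)
    return [
        {"window_start": w, "device_id": d, "avg_value": mean(vals), "count": len(vals)}
        for (w, d), vals in sorted(buckets.items())
    ]


events = [
    (0, "engine-1", 10.0),
    (3, "engine-1", 20.0),
    (7, "engine-2", 5.0),
    (12, "engine-1", 30.0),
]
summary = tumbling_average(events)
```

Four raw events collapse into three summary rows here; with high-frequency telemetry the reduction is far larger, and only the summaries need to travel upstream.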

Compliance

Regulatory compliance may require some data to be anonymized or aggregated locally before it is sent to the cloud.
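As one possible illustration of local processing before upload, the Python sketch below replaces a device identifier with a keyed hash and drops a directly identifying field. Note that keyed hashing is pseudonymization rather than full anonymization, and whether it satisfies a given regulation is outside the scope of this sketch. The field names `device_id`, `operator_name`, and `speed_kmh`, and the key value, are hypothetical.

```python
import hashlib
import hmac

# Hypothetical secret; in practice it would be stored on the device, not in code.
SECRET_KEY = b"replace-with-a-locally-stored-secret"


def pseudonymize(record, key=SECRET_KEY, drop_fields=("operator_name",)):
    """Return a copy of `record` with dropped fields removed and device_id hashed."""
    cleaned = {k: v for k, v in record.items() if k not in drop_fields}
    digest = hmac.new(key, str(record["device_id"]).encode("utf-8"), hashlib.sha256)
    cleaned["device_id"] = digest.hexdigest()
    return cleaned


sample = {"device_id": "truck-42", "operator_name": "A. Example", "speed_kmh": 63}
safe = pseudonymize(sample)
```

The same input always maps to the same hashed identifier, so downstream cloud jobs can still group records by device without seeing the original ID.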

Edge jobs in Azure Stream Analytics

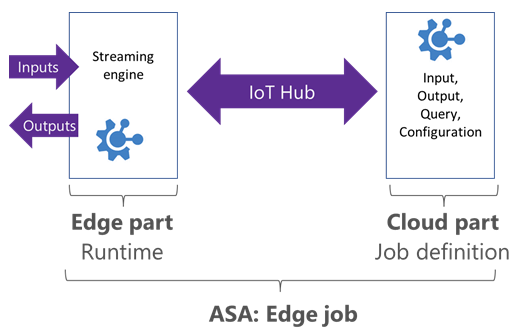

Stream Analytics Edge jobs run in containers deployed to Azure IoT Edge devices. An edge job consists of two parts:

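- A cloud part that is responsible for the job definition: users define the inputs, outputs, query, and other settings, such as out-of-order event handling, in the cloud.

- A module that runs on the IoT device. The module contains the Stream Analytics engine and receives the job definition from the cloud.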

Stream Analytics uses IoT Hub to deploy edge jobs to devices. For more information, see IoT Edge deployment.

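Edge job limitations

The goal is to have parity between IoT Edge jobs and cloud jobs. Most SQL query language features are supported on both edge and cloud. However, the following features aren't supported for edge jobs: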

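- User-defined functions (UDF) in JavaScript.

- User-defined aggregates (UDA).

- Azure ML functions.

- AVRO format for input/output. Currently, only CSV and JSON are supported.

- The following SQL operators:

  - PARTITION BY

  - GetMetadataPropertyValue

- Late arrival policy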

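Runtime and hardware requirements

To run Stream Analytics on IoT Edge, you need devices that can run Azure IoT Edge.

Stream Analytics and Azure IoT Edge use Docker containers to provide a portable solution that runs on multiple host operating systems (Windows, Linux).

Stream Analytics on IoT Edge is available as Windows and Linux images that run on x86-64 or ARM (Advanced RISC Machines) architectures.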

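Input and output

Stream Analytics Edge jobs can get inputs from, and send outputs to, other modules running on IoT Edge devices. To connect from and to specific modules, you can set the routing configuration at deployment time. For more information, see the IoT Edge module composition documentation.

For both inputs and outputs, CSV and JSON formats are supported.

For each input and output stream that you create in your Stream Analytics job, a corresponding endpoint is created on the deployed module. These endpoints can be used in the routes of your deployment.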
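As a rough sketch of how those endpoints might appear in a deployment's routes, the Python snippet below builds two route strings using the IoT Edge route syntax (`FROM ... INTO BrokeredEndpoint(...)` and `INTO $upstream`). The module names `tempSensor` and `asaJob`, the input name `temperature`, the output name `alert`, and the route keys are hypothetical; substitute the names defined in your own job and deployment.

```python
import json


def build_routes(sensor_module, asa_module, asa_input, asa_output):
    """Build IoT Edge route strings that wire a sensor module to a job module.

    All names passed in are placeholders for this sketch.
    """
    return {
        # Deliver every output of the sensor module to the job's input endpoint.
        "sensorToAsa": (
            f"FROM /messages/modules/{sensor_module}/outputs/* "
            f'INTO BrokeredEndpoint("/modules/{asa_module}/inputs/{asa_input}")'
        ),
        # Send the job's output endpoint upstream to IoT Hub.
        "asaToCloud": (
            f"FROM /messages/modules/{asa_module}/outputs/{asa_output} INTO $upstream"
        ),
    }


routes = build_routes("tempSensor", "asaJob", "temperature", "alert")
print(json.dumps({"routes": routes}, indent=2))
```

The printed object has the shape of a `routes` section in a deployment manifest; the rest of the manifest is omitted here.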

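Supported stream input types are:

- Event Hubs

- IoT Hub

Supported stream output types are:

- SQL Database

- Event Hubs

- Blob Storage/ADLS Gen2

Reference input supports the reference file type. Other outputs can be reached by using a downstream cloud job.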

Azure Stream Analytics module image information

This version information was last updated on 2020-09-21:

- Image: mcr.microsoft.com/azure-stream-analytics/azureiotedge:1.0.9-linux-amd64

  - Base image: mcr.microsoft.com/dotnet/core/runtime:2.1.13-alpine

  - Platform:

    - Architecture: amd64

    - OS: linux

- Image: mcr.microsoft.com/azure-stream-analytics/azureiotedge:1.0.9-linux-arm32v7

  - Base image: mcr.microsoft.com/dotnet/core/runtime:2.1.13-bionic-arm32v7

  - Platform:

    - Architecture: arm

    - OS: linux

- Image: mcr.microsoft.com/azure-stream-analytics/azureiotedge:1.0.9-linux-arm64

  - Base image: mcr.microsoft.com/dotnet/core/runtime:3.0-bionic-arm64v8

  - Platform:

    - Architecture: arm64

    - OS: linux
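If you need to pick one of the listed images programmatically, a lookup like the following Python sketch is one option. The image names are copied from the list above; the mapping from `platform.machine()` values to architectures is an assumption based on commonly reported values and may need adjusting for your devices.

```python
import platform

# Image tags as listed in this article (version information dated 2020-09-21).
ASA_IMAGES = {
    "amd64": "mcr.microsoft.com/azure-stream-analytics/azureiotedge:1.0.9-linux-amd64",
    "arm": "mcr.microsoft.com/azure-stream-analytics/azureiotedge:1.0.9-linux-arm32v7",
    "arm64": "mcr.microsoft.com/azure-stream-analytics/azureiotedge:1.0.9-linux-arm64",
}

# Assumed mapping from platform.machine() strings to the architectures above.
_MACHINE_TO_ARCH = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "armv7l": "arm",
    "aarch64": "arm64",
    "arm64": "arm64",
}


def image_for(machine=None):
    """Return the listed Linux image for a machine type, or raise ValueError."""
    name = (machine or platform.machine()).lower()
    arch = _MACHINE_TO_ARCH.get(name)
    if arch is None:
        raise ValueError(f"no listed image for machine type {name!r}")
    return ASA_IMAGES[arch]


arm64_image = image_for("aarch64")
```

Calling `image_for()` with no argument uses the machine type of the host that runs the script, which is not necessarily the target device.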

Next steps

- More information on Azure IoT Edge

- Stream Analytics on IoT Edge tutorial

- Implement CI/CD for Stream Analytics using APIs