Get started with the Azure Blob Storage client library for Node.js to manage blobs and containers.

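In this article, you follow steps to install the packages and try out example code for basic tasks.

API reference | Library source code | Package (npm) | Samples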

Prerequisites

- An Azure account with an active subscription (create a trial account)

- An Azure Storage account (create a storage account)

- Node.js LTS

Setting up

This section walks you through preparing a project to work with the Azure Blob Storage client library for Node.js.

Create the Node.js project

Create a JavaScript application named blob-quickstart.

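In a console window (such as cmd, PowerShell, or Bash), create a new directory for the project:

mkdir blob-quickstart

Switch to the newly created blob-quickstart directory:

cd blob-quickstart

Create a package.json file:

npm init -y

Open the project in Visual Studio Code:

code .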

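Install the packages

From the project directory, install the following packages using the npm install command.

Install the Azure Storage npm package:

npm install @azure/storage-blob

Install the Azure Identity npm package for a passwordless connection:

npm install @azure/identity

Install the other dependencies used in this quickstart:

npm install uuid dotenv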

Set up the app framework

From the project directory:

- Create a new file named index.js
- Copy the following code into the file:

const { BlobServiceClient } = require("@azure/storage-blob");
const { v1: uuidv1 } = require("uuid");
require("dotenv").config();

async function main() {
  try {
    console.log("Azure Blob storage v12 - JavaScript quickstart sample");

    // Quick start code goes here
  } catch (err) {
    // console.error (not console.err) writes the message to stderr
    console.error(`Error: ${err.message}`);
  }
}

main()
  .then(() => console.log("Done"))
  .catch((ex) => console.log(ex.message));

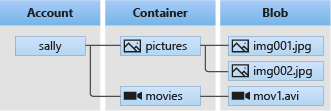

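Object model

Azure Blob Storage is optimized for storing massive amounts of unstructured data. Unstructured data is data that doesn't adhere to a particular data model or definition, such as text or binary data. Blob storage offers three types of resources:

- The storage account

- A container in the storage account

- A blob in the container

The following diagram shows the relationship between these resources.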

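Use the following JavaScript classes to interact with these resources:

- BlobServiceClient: The BlobServiceClient class allows you to manipulate Azure Storage resources and blob containers.

- ContainerClient: The ContainerClient class allows you to manipulate Azure Storage containers and their blobs.

- BlobClient: The BlobClient class allows you to manipulate Azure Storage blobs.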
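
The account, container, and blob hierarchy is also visible in the resource URLs, which may help when reading the url values that the later snippets print. The following is a minimal sketch, not SDK code: buildResourceUrls is a hypothetical helper, the account, container, and blob names are made up, and the endpoint suffix is assumed to be the one used in the connection code later in this article. It also assumes simple blob names without virtual directory separators.

```javascript
// Hypothetical helper (not part of @azure/storage-blob): shows how each
// resource level nests under the previous one in the URL.
function buildResourceUrls(accountName, containerName, blobName) {
  // Assumption: same endpoint suffix as the connection code in this quickstart.
  const accountUrl = `https://${accountName}.blob.core.chinacloudapi.cn`;
  const containerUrl = `${accountUrl}/${encodeURIComponent(containerName)}`;
  const blobUrl = `${containerUrl}/${encodeURIComponent(blobName)}`;
  return { accountUrl, containerUrl, blobUrl };
}

// Made-up names, for illustration only.
const urls = buildResourceUrls("mystorageaccount", "quickstart-container", "hello.txt");
console.log(urls.accountUrl);
console.log(urls.containerUrl);
console.log(urls.blobUrl);
```

In the quickstart itself you don't need to build these strings by hand; each client object exposes its own url property.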

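Code examples

These example code snippets show you how to do the following tasks with the Azure Blob Storage client library for JavaScript:

- Authenticate to Azure and authorize access to blob data
- Create a container
- Upload blobs to a container
- List the blobs in a container
- Download blobs
- Delete a container

Sample code is also available on GitHub.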

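Authenticate to Azure and authorize access to blob data

Application requests to Azure Blob Storage must be authorized. The recommended way to implement passwordless connections to Azure services in your code, including Blob Storage, is the DefaultAzureCredential class provided by the Azure Identity client library (@azure/identity).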

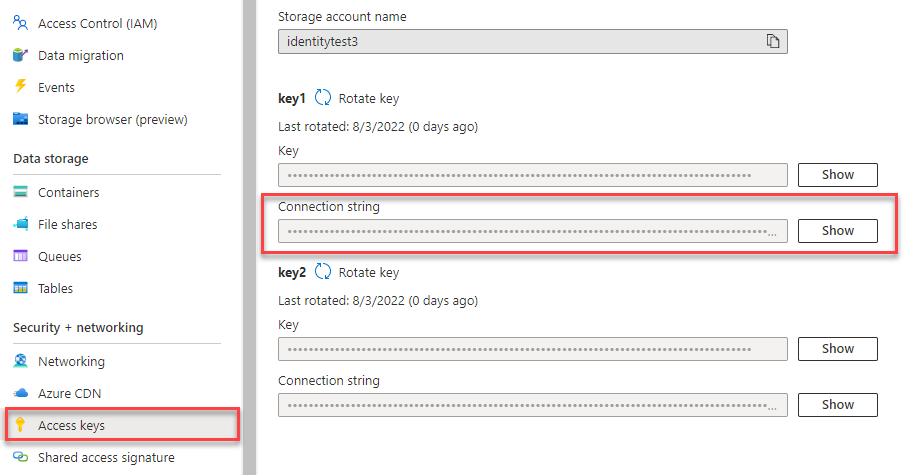

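You can also authorize requests to Azure Blob Storage by using the account access key. However, use this approach with caution. Developers must be diligent to never expose the access key in an unsecure location. Anyone who has the access key can authorize requests against the storage account, and effectively has access to all the data.

DefaultAzureCredential offers improved management and security benefits over the account key by allowing passwordless authentication. The following example demonstrates the passwordless option.

DefaultAzureCredential supports multiple authentication methods and determines which method to use at runtime. This approach enables your app to use different authentication methods in different environments (local versus production) without implementing environment-specific code.

The order and locations in which DefaultAzureCredential looks for credentials can be found in the Azure Identity library overview.

For example, your app can authenticate using your Azure CLI sign-in credentials when developing locally. Once deployed to Azure, your app can use a managed identity. No code changes are required for this transition.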

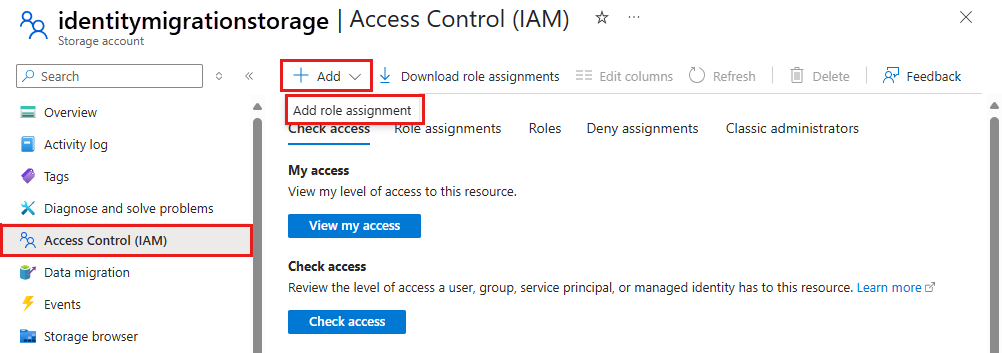

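Assign roles to your Microsoft Entra user account

When developing locally, make sure that the user account that accesses blob data has the correct permissions. You need the Storage Blob Data Contributor role to read and write blob data. To assign yourself this role, you need the User Access Administrator role, or another role that includes the Microsoft.Authorization/roleAssignments/write action. You can assign Azure RBAC roles to a user using the Azure portal, Azure CLI, or Azure PowerShell. For more information about the Storage Blob Data Contributor role, see Storage Blob Data Contributor. For more information about the available scopes for role assignments, see Understand scope for Azure RBAC.

In this scenario, you assign permissions to your user account, scoped to the storage account, to follow the principle of least privilege. This practice gives users only the minimum permissions they need and creates more secure production environments.

The following example assigns the Storage Blob Data Contributor role to your user account, which provides both read and write access to blob data in your storage account.

Important

In most cases, it takes a minute or two for the role assignment to propagate in Azure, but in rare cases it can take up to eight minutes. If you receive authentication errors when you first run your code, wait a few moments and try again.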

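In the Azure portal, locate your storage account using the main search bar or the left navigation.

On the storage account overview page, select Access control (IAM) from the left-hand menu.

On the Access control (IAM) page, select the Role assignments tab.

Select + Add from the top menu, and then select Add role assignment from the resulting drop-down menu.

Use the search box to filter the results to the desired role. For this example, search for Storage Blob Data Contributor, select the matching result, and then choose Next.

Under Assign access to, select User, group, or service principal, and then choose + Select members.

In the dialog, search for your Microsoft Entra username (usually your user@domain email address), and then choose Select at the bottom of the dialog.

Select Review + assign to go to the final page, and then select Review + assign again to complete the process.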

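Sign in and connect your app code to Azure using DefaultAzureCredential

You can authorize access to data in your storage account using the following steps:

Make sure you're authenticated with the same Microsoft Entra account that you assigned the role to on your storage account. You can authenticate via the Azure CLI, Visual Studio Code, or Azure PowerShell.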

使用以下命令通过 Azure CLI 登录到 Azure:

az login要使用

DefaultAzureCredential,请确保已安装 @azure\identity 包,并导入类:const { DefaultAzureCredential } = require('@azure/identity');在

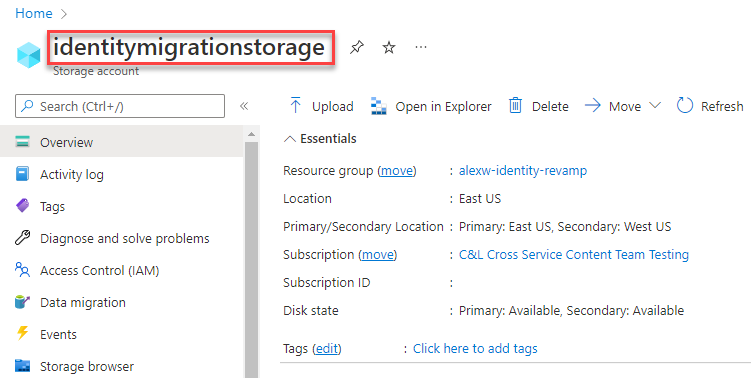

try块内添加此代码。 当代码在本地工作站上运行时,DefaultAzureCredential使用你登录的优先工具的开发人员凭据向 Azure 进行身份验证。 这些工具的示例包括 Azure CLI 或 Visual Studio Code。const accountName = process.env.AZURE_STORAGE_ACCOUNT_NAME; if (!accountName) throw Error('Azure Storage accountName not found'); const blobServiceClient = new BlobServiceClient( `https://${accountName}.blob.core.chinacloudapi.cn`, new DefaultAzureCredential() );确保更新

AZURE_STORAGE_ACCOUNT_NAME文件或环境变量中的存储帐户名称.env。 可以在 Azure 门户的概述页上找到存储帐户名称。
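
As a rough illustration of that configuration step, a .env file would contain a line such as AZURE_STORAGE_ACCOUNT_NAME=<your-storage-account-name>. The sketch below shows one way to check the value before building the endpoint. getBlobEndpoint is a hypothetical helper, not part of the Azure SDK; the format check assumes the usual storage account naming rule (3 to 24 lowercase letters and digits), and the endpoint suffix is copied from the connection code above.

```javascript
// Hypothetical helper (not part of the Azure SDK): validates the configured
// account name and derives the Blob service endpoint from it.
function getBlobEndpoint(env) {
  const accountName = (env.AZURE_STORAGE_ACCOUNT_NAME || "").trim();
  if (!accountName) {
    throw new Error("AZURE_STORAGE_ACCOUNT_NAME is not set in .env or the environment");
  }
  // Assumption: storage account names are 3-24 lowercase letters and digits.
  if (!/^[a-z0-9]{3,24}$/.test(accountName)) {
    throw new Error(`"${accountName}" does not look like a storage account name`);
  }
  // Assumption: same endpoint suffix as the quickstart's connection code.
  return `https://${accountName}.blob.core.chinacloudapi.cn`;
}

// Made-up account name, for illustration only.
console.log(getBlobEndpoint({ AZURE_STORAGE_ACCOUNT_NAME: "mystorageaccount" }));
```

In the quickstart, you could pass process.env to such a helper after require("dotenv").config() has run, and hand the result to the BlobServiceClient constructor.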

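Note

When deployed to Azure, this same code can be used to authorize requests to Azure Storage from an application running in Azure. However, you need to enable managed identity on your app in Azure. Then configure your storage account to allow that managed identity to connect. For detailed instructions on configuring this connection between Azure services, see the Auth from Azure-hosted apps tutorial.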

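Create a container

Create a new container in the storage account. The following code example takes a BlobServiceClient object and calls the getContainerClient method to get a reference to a container. Then, the code calls the create method to actually create the container in your storage account.

Add this code to the end of the try block: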

// Create a unique name for the container
const containerName = 'quickstart' + uuidv1();

console.log('\nCreating container...');
console.log('\t', containerName);

// Get a reference to a container
const containerClient = blobServiceClient.getContainerClient(containerName);

// Create the container
const createContainerResponse = await containerClient.create();
console.log(
  `Container was created successfully.\n\trequestId:${createContainerResponse.requestId}\n\tURL: ${containerClient.url}`
);

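To learn more about creating a container, and to explore more code samples, see Create a blob container with JavaScript.

Important

Container names must be lowercase. For more information about naming containers and blobs, see Naming and Referencing Containers, Blobs, and Metadata.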
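
To make the naming constraint concrete, here is a small sketch of a client-side check. isValidContainerName is a hypothetical helper, not an SDK function. It assumes the documented container naming rules: 3 to 63 characters, only lowercase letters, digits, and hyphens, starting and ending with a letter or digit, and no consecutive hyphens. Reserved names such as $root are not handled.

```javascript
// Hypothetical helper (not part of @azure/storage-blob): checks a name against
// the assumed container naming rules before a request is sent.
function isValidContainerName(name) {
  if (typeof name !== "string" || name.length < 3 || name.length > 63) {
    return false;
  }
  // A letter or digit first, then groups of "optional single hyphen + letter or digit".
  // This rejects uppercase letters, leading/trailing hyphens, and "--".
  return /^[a-z0-9](?:-?[a-z0-9])+$/.test(name);
}

// The quickstart's pattern ('quickstart' + a version 1 UUID) passes the check.
console.log(isValidContainerName("quickstart4a0780c0-fb72-11e9-b7b9-b387d3c488da")); // true
console.log(isValidContainerName("QuickStart")); // false (uppercase letters)
console.log(isValidContainerName("my--container")); // false (consecutive hyphens)
```

The service remains the authority on what it accepts; a local check like this only gives earlier feedback.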

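Upload blobs to a container

Upload a blob to the container. The following code gets a reference to a BlockBlobClient object by calling the getBlockBlobClient method on the ContainerClient from the Create a container section.

The code uploads the text string data to the blob by calling the upload method.

Add this code to the end of the try block: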

// Create a unique name for the blob
const blobName = 'quickstart' + uuidv1() + '.txt';

// Get a block blob client
const blockBlobClient = containerClient.getBlockBlobClient(blobName);

// Display blob name and url
console.log(
  `\nUploading to Azure storage as blob\n\tname: ${blobName}:\n\tURL: ${blockBlobClient.url}`
);

// Upload data to the blob
const data = 'Hello, World!';
const uploadBlobResponse = await blockBlobClient.upload(data, data.length);
console.log(
  `Blob was uploaded successfully. requestId: ${uploadBlobResponse.requestId}`
);

To learn more about uploading blobs, and to explore more code samples, see Upload a blob with JavaScript.
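
One detail of the upload call is worth a note: the second argument to upload is the content length in bytes. The snippet passes data.length, which matches the byte count for the ASCII string 'Hello, World!' but not for text containing multi-byte characters. The sketch below uses only Node.js built-ins to show the difference; the sample strings are illustrative.

```javascript
// String length counts UTF-16 code units; Buffer.byteLength counts encoded bytes.
const asciiText = "Hello, World!";
const nonAsciiText = "你好, World!";

console.log(asciiText.length, Buffer.byteLength(asciiText, "utf8")); // 13 13
console.log(nonAsciiText.length, Buffer.byteLength(nonAsciiText, "utf8")); // 10 14
```

If you adapt the quickstart to upload arbitrary text, passing Buffer.byteLength(data) instead of data.length is likely the safer choice.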

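List the blobs in a container

List the blobs in the container. The following code calls the listBlobsFlat method. In this case, there's only one blob in the container, so the listing operation returns just that one blob.

Add this code to the end of the try block: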

console.log('\nListing blobs...');

// List the blob(s) in the container.
for await (const blob of containerClient.listBlobsFlat()) {
  // Get Blob Client from name, to get the URL
  const tempBlockBlobClient = containerClient.getBlockBlobClient(blob.name);

  // Display blob name and URL
  console.log(
    `\n\tname: ${blob.name}\n\tURL: ${tempBlockBlobClient.url}\n`
  );
}

To learn more about listing blobs, and to explore more code samples, see List blobs with JavaScript.

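Download blobs

Download the blob and display the contents. The following code calls the download method to download the blob.

Add this code to the end of the try block: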

// Get blob content from position 0 to the end
// In Node.js, get downloaded data by accessing downloadBlockBlobResponse.readableStreamBody
// In browsers, get downloaded data by accessing downloadBlockBlobResponse.blobBody
const downloadBlockBlobResponse = await blockBlobClient.download(0);
console.log('\nDownloaded blob content...');
console.log(
  '\t',
  await streamToText(downloadBlockBlobResponse.readableStreamBody)
);

The following code converts the stream back into a string to display the contents.

Add this code after the main function:

// Convert stream to text
async function streamToText(readable) {
  readable.setEncoding('utf8');
  let data = '';
  for await (const chunk of readable) {
    data += chunk;
  }
  return data;
}

To learn more about downloading blobs, and to explore more code samples, see Download a blob with JavaScript.
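
The streamToText helper can be tried without a storage account. The sketch below repeats the helper and feeds it a PassThrough stream from the Node.js stream module as a stand-in for readableStreamBody; makeFakeBody is a hypothetical function written for this illustration. It deliberately splits a two-byte character across two chunks, which is the case where calling setEncoding on the stream matters, because it lets the stream decode characters that span chunk boundaries.

```javascript
const { PassThrough } = require("stream");

// Same helper as in the quickstart.
async function streamToText(readable) {
  readable.setEncoding("utf8");
  let data = "";
  for await (const chunk of readable) {
    data += chunk;
  }
  return data;
}

// Hypothetical stand-in for downloadBlockBlobResponse.readableStreamBody:
// delivers the UTF-8 bytes of `text` in two chunks, split at byte `splitAt`.
function makeFakeBody(text, splitAt) {
  const bytes = Buffer.from(text, "utf8");
  const stream = new PassThrough();
  stream.write(bytes.subarray(0, splitAt));
  stream.end(bytes.subarray(splitAt));
  return stream;
}

// "é" occupies bytes 1-2, so splitting at byte 2 cuts it in half.
const textPromise = streamToText(makeFakeBody("héllo", 2));
textPromise.then((text) => console.log(text));
```

Decoding each chunk separately with chunk.toString() would risk producing replacement characters at such a boundary, which is one reason to set the encoding on the stream instead.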

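Delete a container

Delete the container and all the blobs within it. The following code cleans up the resources the app created by removing the entire container using the delete method.

Add this code to the end of the try block: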

// Delete container
console.log('\nDeleting container...');

const deleteContainerResponse = await containerClient.delete();
console.log(
  'Container was deleted successfully. requestId: ',
  deleteContainerResponse.requestId
);

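To learn more about deleting a container, and to explore more code samples, see Delete and restore a blob container with JavaScript.

Run the code

From a Visual Studio Code terminal, run the app.

node index.js

The output of the app is similar to the following example: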

Azure Blob storage - JavaScript quickstart sample

Creating container...

quickstart4a0780c0-fb72-11e9-b7b9-b387d3c488da

Uploading to Azure Storage as blob:

quickstart4a3128d0-fb72-11e9-b7b9-b387d3c488da.txt

Listing blobs...

quickstart4a3128d0-fb72-11e9-b7b9-b387d3c488da.txt

Downloaded blob content...

Hello, World!

Deleting container...

Done

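Step through the code in your debugger and check the Azure portal throughout the process. Check that the container is being created. You can open the blob inside the container and view its contents.

Clean up resources

- When you're done with this quickstart, delete the blob-quickstart directory.
- If you're done using your Azure Storage resource, use the Azure CLI to remove the storage resource.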