Note

Access to this page requires authorization. You can try signing in or changing directories.

Access to this page requires authorization. You can try changing directories.

In this quickstart, you use the Import data wizard in the Azure portal to get started with integrated vectorization. The wizard chunks your content and calls an embedding model to vectorize the chunks at indexing and query time.

This quickstart uses text-based PDFs and simple images from the azure-search-sample-data repo. However, you can use different files and still complete this quickstart.

Tip

Have image-rich documents? See Quickstart: Multimodal search in the Azure portal to extract, store, and search images alongside text.

Prerequisites

An Azure account with an active subscription. Create a trial subscription.

An Azure AI Search service. This quickstart requires the Basic tier or higher for managed identity support.

Familiarity with the wizard. See Import data wizard in the Azure portal.

Supported data sources

The wizard supports several Azure data sources. However, this quickstart only covers the data sources that work with whole files, which are described in the following table.

| Data source | Description |

|---|---|

| Azure Blob Storage | This data source works with blobs and tables. You must use a standard performance (general-purpose v2) account. Access tiers can be hot, cool, or cold. |

| Azure Data Lake Storage (ADLS) Gen2 | This is an Azure Storage account with a hierarchical namespace enabled. To confirm that you have Data Lake Storage, check the Properties tab on the Overview page. |

Supported embedding models

The portal supports the following embedding models for integrated vectorization. Deployment instructions are provided in a later section.

| Provider | Supported models |

|---|---|

| Azure AI services multi-service resource | For text and images: Azure AI Vision multimodal 4 |

1 The endpoint of your Azure AI services resource must have a custom subdomain. If you created your resource in the Azure portal, this subdomain was automatically generated during resource setup.

4 For billing purposes, you must attach your AI service resource to the skillset in your Azure AI Search service. Unless you use a keyless connection (preview) to create the skillset, both resources must be in the same region.

Public endpoint requirements

All of the preceding resources must have public access enabled so that the wizard can access them. Otherwise, the wizard fails. After the wizard runs, you can enable firewalls and private endpoints on the integration components for security. For more information, see Secure connections in the import wizard.

If private endpoints are already present and you can't disable them, the alternative option is to run the respective end-to-end flow from a script or program on a virtual machine. The virtual machine must be on the same virtual network as the private endpoint. Here's a Python code sample for integrated vectorization. The same GitHub repo has samples in other programming languages.

Configure access

Before you begin, make sure you have permissions to access content and operations. This quickstart uses Microsoft Entra ID for authentication and role-based access for authorization. You must be an Owner or User Access Administrator to assign roles. If roles aren't feasible, use key-based authentication instead.

Configure the required roles and conditional roles identified in this section.

Required roles

Azure AI Search provides the vector search pipeline. Configure access for yourself and your search service to read data, run the pipeline, and interact with other Azure resources.

On your Azure AI Search service:

Assign the following roles to yourself.

Search Service Contributor

Search Index Data Contributor

Search Index Data Reader

Conditional roles

The following tabs cover all wizard-compatible resources for vector search. Select only the tabs that apply to your chosen data source and embedding model.

Azure Blob Storage and Azure Data Lake Storage Gen2 require your search service to have read access to storage containers.

On your Azure Storage account:

- Assign Storage Blob Data Reader to the managed identity of your search service.

Prepare sample data

In this section, you prepare sample data for your chosen data source.

Go to your Azure Storage account in the Azure portal.

From the left pane, select Data storage > Containers.

Create a container, and then upload the health-plan PDF documents used for this quickstart.

(Optional) Synchronize deletions in your container with deletions in the search index. To configure your indexer for deletion detection:

Enable soft delete on your storage account. If you're using native soft delete, the next step isn't required.

Add custom metadata that an indexer can scan to determine which blobs are marked for deletion. Give your custom property a descriptive name. For example, name the property "IsDeleted" and set it to false. Repeat this step for every blob in the container. When you want to delete the blob, change the property to true. For more information, see Change and delete detection when indexing from Azure Storage.

Prepare embedding model

Note

If you're using Azure Vision, skip this step. The multimodal embeddings are built into your multi-service account and don't require model deployment.

The wizard supports several embedding models from Azure AI services and the Azure AI services model catalog.

Start the wizard

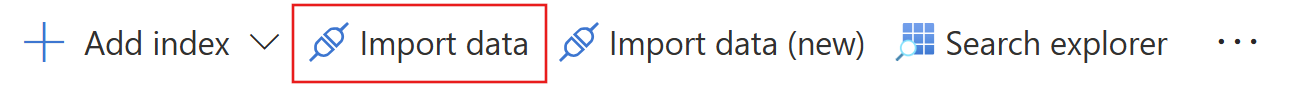

Go to your search service in the Azure portal.

On the Overview page, select Import data.

Select your data source: Azure Blob Storage, ADLS Gen2.

Select RAG.

Run the wizard

The wizard walks you through several configuration steps. This section covers each step in sequence.

Connect to your data

In this step, you connect Azure AI Search to your chosen data source for content ingestion and indexing.

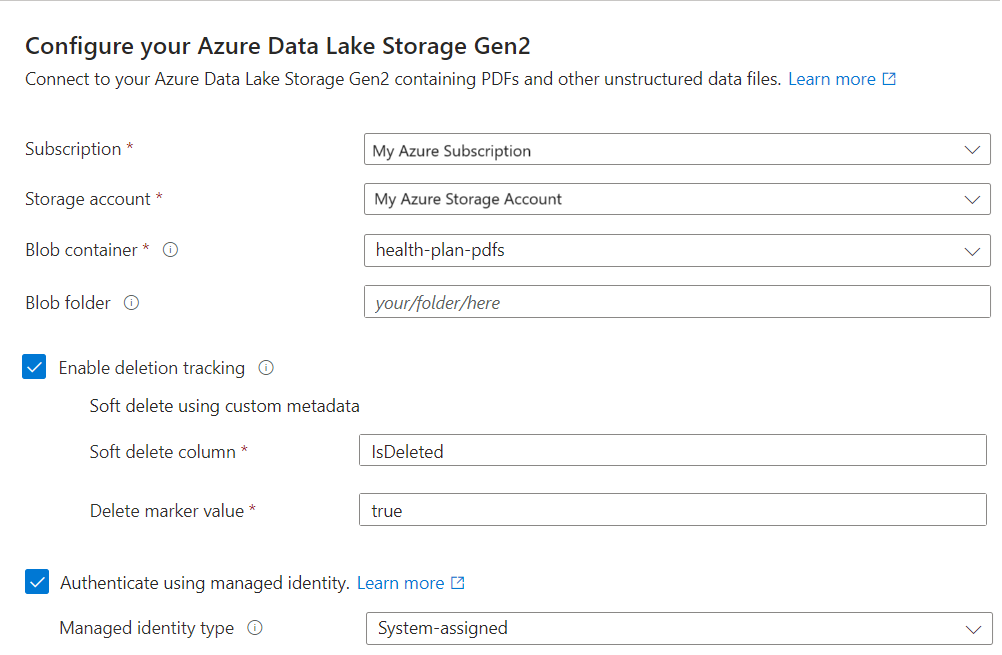

On the Connect to your data page, select your Azure subscription.

Select the storage account and container that provide the sample data.

If you enabled soft delete and added custom metadata in Prepare sample data, select the Enable deletion tracking checkbox.

On subsequent indexing runs, the search index is updated to remove any search documents based on soft-deleted blobs on Azure Storage.

Blobs support either Native blob soft delete or Soft delete using custom metadata.

If you configured your blobs for soft delete, provide the metadata property name-value pair. We recommend IsDeleted. If IsDeleted is set to true on a blob, the indexer drops the corresponding search document on the next indexer run.

The wizard doesn't check Azure Storage for valid settings or throw an error if the requirements aren't met. Instead, deletion detection doesn't work, and your search index is likely to collect orphaned documents over time.

Select the Authenticate using managed identity checkbox. Leave the identity type as System-assigned.

Select Next.

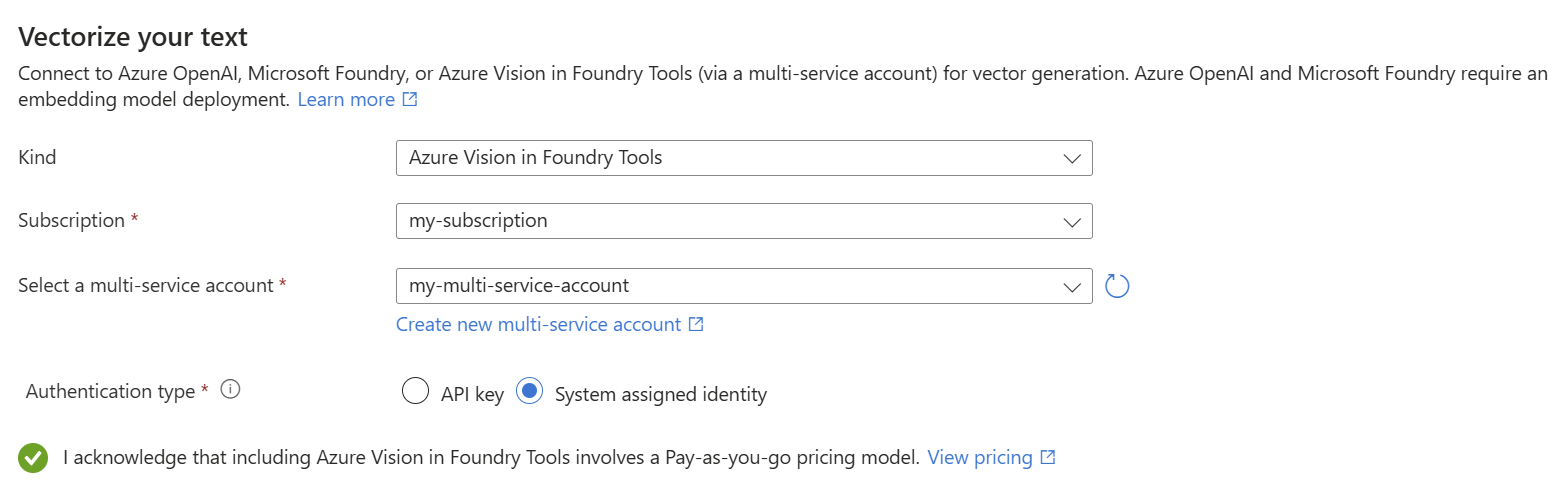

Vectorize your text

During this step, the wizard uses your chosen embedding model to vectorize chunked data. Chunking is built in and nonconfigurable. The effective settings are:

"textSplitMode": "pages",

"maximumPageLength": 2000,

"pageOverlapLength": 500,

"maximumPagesToTake": 0, #unlimited

"unit": "characters"

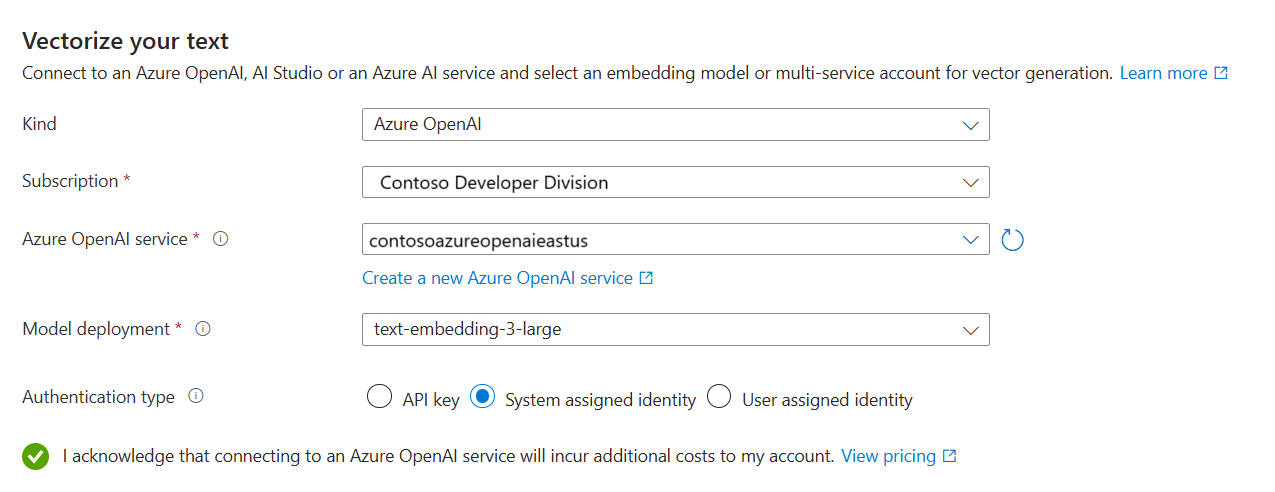

On the Vectorize your text page, select Azure AI services for the kind.

Select your Azure subscription.

Select your Azure AI services resource, and then select the model you deployed in Prepare embedding model.

For the authentication type, select System assigned identity.

Select the checkbox that acknowledges the billing effects of using these resources.

Select Next.

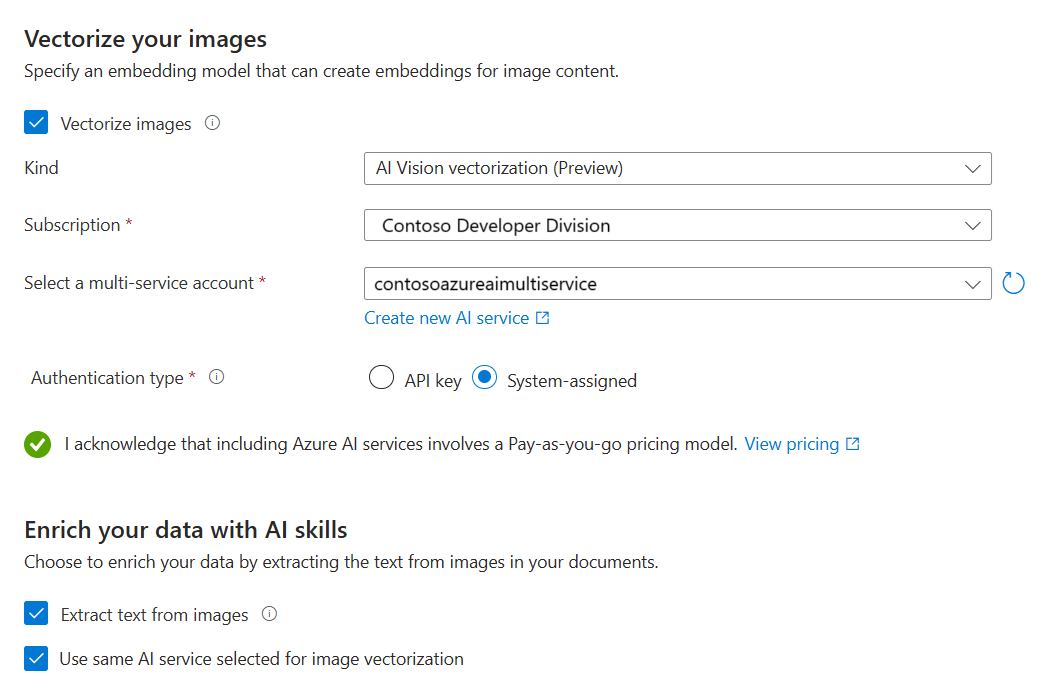

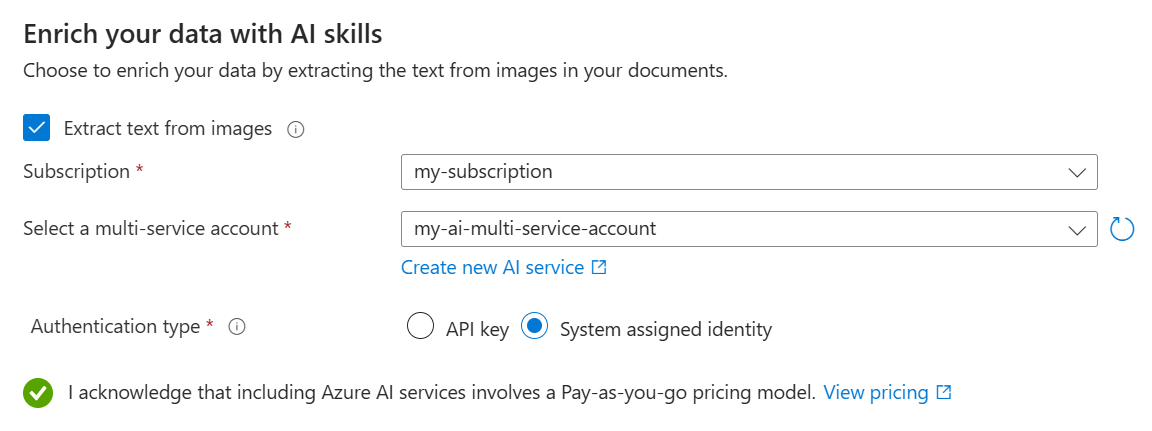

Vectorize and enrich your images

The health-plan PDFs include a corporate logo, but otherwise, there are no images. You can skip this step if you're using the sample documents.

However, if your content includes useful images, you can apply AI in one or both of the following ways:

Use a supported image embedding model from the Azure AI services model catalog or the Azure Vision multimodal embeddings API (via a multi-service account) to vectorize images.

Use optical character recognition (OCR) to extract text from images. This option invokes the OCR skill.

On the Vectorize and enrich your images page, select the Vectorize images checkbox.

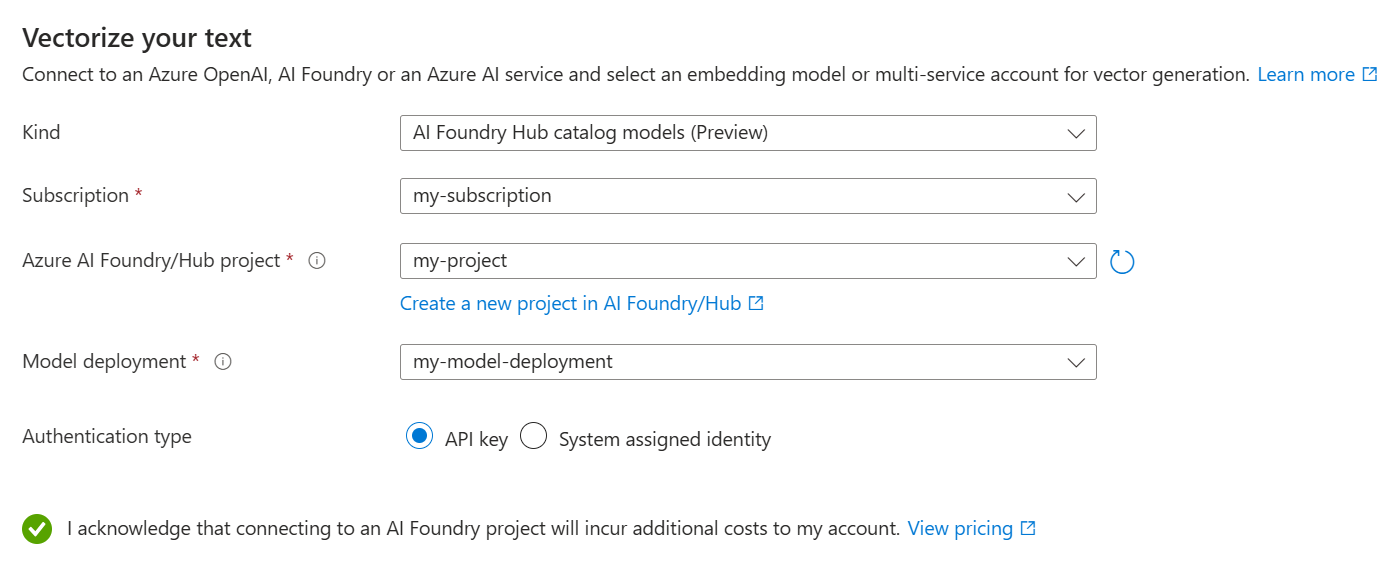

For the kind, select your model provider: AI services Hub catalog models or AI Vision vectorization.

If Azure Vision is unavailable, make sure your search service and AI service resource are both in a region that supports the Azure Vision multimodal APIs.

Select your Azure subscription, resource, and embedding model deployment (if applicable).

For the authentication type, select System assigned identity if you're not using a hub-based project. Otherwise, leave it as API key.

Select the checkbox that acknowledges the billing effects of using these resources.

Select Next.

Add semantic ranking

On the Advanced settings page, you can optionally add semantic ranking to rerank results at the end of query execution. Reranking promotes the most semantically relevant matches to the top.

Map new fields

On the Advanced settings page, you can optionally add new fields, assuming the data source provides metadata or fields that aren't picked up on the first pass. By default, the wizard generates the fields described in the following table.

| Field | Applies to | Description |

|---|---|---|

| chunk_id | Text and image vectors | Generated string field. Searchable, retrievable, and sortable. This is the document key for the index. |

| parent_id | Text vectors | Generated string field. Retrievable and filterable. Identifies the parent document from which the chunk originates. |

| chunk | Text and image vectors | String field. Human readable version of the data chunk. Searchable and retrievable, but not filterable, facetable, or sortable. |

| title | Text and image vectors | String field. Human readable document title or page title or page number. Searchable and retrievable, but not filterable, facetable, or sortable. |

| text_vector | Text vectors | Collection(Edm.single). Vector representation of the chunk. Searchable and retrievable, but not filterable, facetable, or sortable. |

You can't modify the generated fields or their attributes, but you can add new fields if your data source provides them. For example, Azure Blob Storage provides a collection of metadata fields.

To add fields to the index schema:

On the Advanced settings page, under Index fields, select Preview and edit.

Select Add field.

Select a source field from the available fields, enter a field name for the index, and accept (or override) the default data type.

If you want to restore the schema to its original version, select Reset.

Key points about this step:

The index schema provides vector and nonvector fields for chunked data.

Document parsing mode creates chunks (one search document per chunk).

Schedule indexing

For data sources where the underlying data is volatile, you can schedule indexing to capture changes at specific intervals or specific dates and times.

To schedule indexing:

On the Advanced settings page, under Schedule indexing, specify a run schedule for the indexer. We recommend Once for this quickstart.

Select Next.

Finish the wizard

The final step is to review your configuration and create the necessary objects for vector search. If necessary, return to the previous pages in the wizard to adjust your configuration.

To finish the wizard:

On the Review and create page, specify a prefix for the objects that the wizard creates. A common prefix helps you stay organized.

Select Create.

Wizard-created objects

When the wizard completes the configuration, it creates the following objects:

| Object | Description |

|---|---|

| Data source | Represents a connection to your chosen data source. |

| Index | Contains vector fields, vectorizers, vector profiles, and vector algorithms. You can't modify the default index during the wizard workflow. Indexes conform to the latest preview REST API so that you can use preview features. |

| Skillset | Contains the following skills and configuration:

|

| Indexer | Drives the indexing pipeline, with field mappings and output field mappings (if applicable). |

Tip

Wizard-created objects have configurable JSON definitions. To view or modify these definitions, select Search management from the left pane, where you can view your indexes, indexers, data sources, and skillsets.

Check results

Search Explorer accepts text strings as input and then vectorizes the text for vector query execution.

To query your vector index:

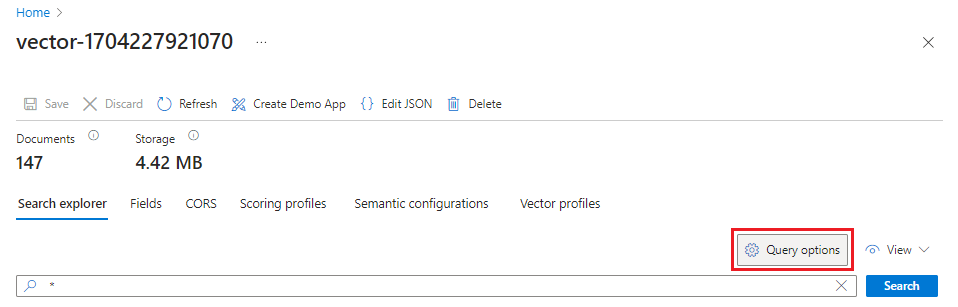

In the Azure portal, go to Search Management > Indexes, and then select your index.

Select Query options, and then select Hide vector values in search results. This step makes the results more readable.

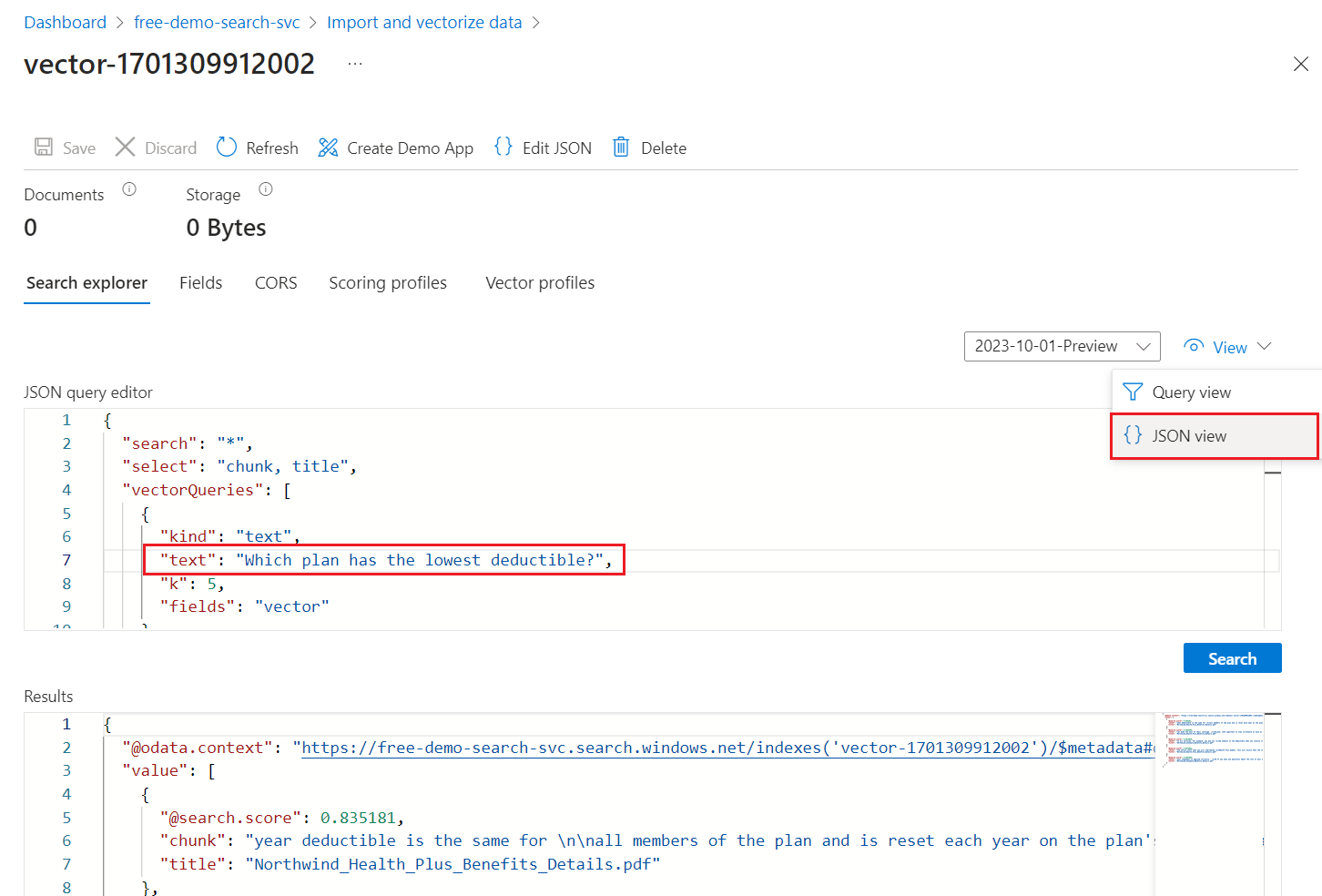

From the View menu, select JSON view so you can enter text for your vector query in the

textvector query parameter.The default query is an empty search (

"*") but includes parameters for returning the number matches. It's a hybrid query that runs text and vector queries in parallel. It also includes semantic ranking and specifies which fields to return in the results through theselectstatement.{ "search": "*", "count": true, "vectorQueries": [ { "kind": "text", "text": "*", "fields": "text_vector,image_vector" } ], "queryType": "semantic", "semanticConfiguration": "my-demo-semantic-configuration", "captions": "extractive", "answers": "extractive|count-3", "queryLanguage": "en-us", "select": "chunk_id,text_parent_id,chunk,title,image_parent_id" }Replace both asterisk (

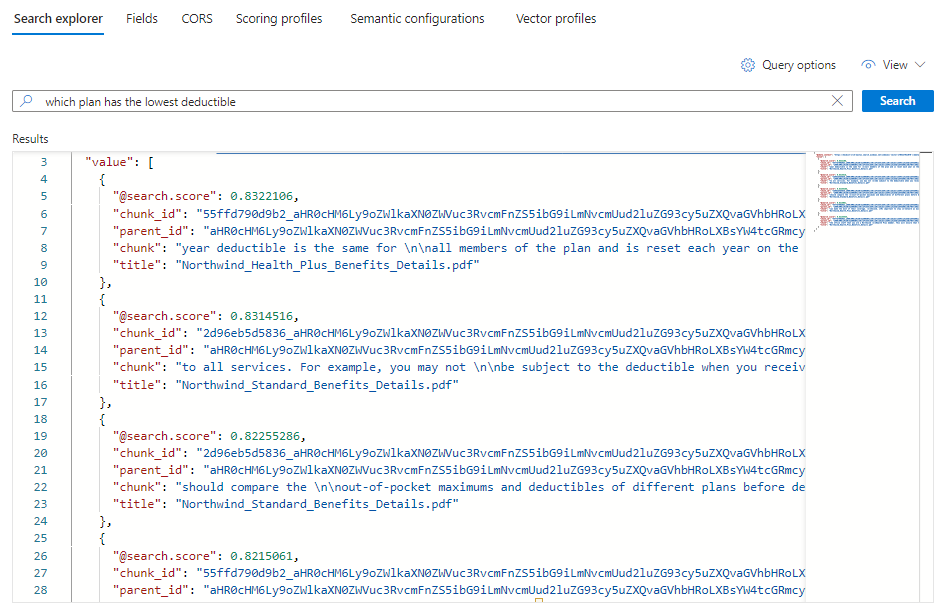

*) placeholders with a question related to health plans, such asWhich plan has the lowest deductible?.{ "search": "Which plan has the lowest deductible?", "count": true, "vectorQueries": [ { "kind": "text", "text": "Which plan has the lowest deductible?", "fields": "text_vector,image_vector" } ], "queryType": "semantic", "semanticConfiguration": "my-demo-semantic-configuration", "captions": "extractive", "answers": "extractive|count-3", "queryLanguage": "en-us", "select": "chunk_id,text_parent_id,chunk,title" }To run the query, select Search.

Each document is a chunk of the original PDF. The

titlefield shows which PDF the chunk comes from. Eachchunkis long. You can copy and paste one into a text editor to read the entire value.To see all of the chunks from a specific document, add a filter for the

title_parent_idfield for a specific PDF. You can check the Fields tab of your index to confirm the field is filterable.{ "select": "chunk_id,text_parent_id,chunk,title", "filter": "text_parent_id eq 'aHR0cHM6Ly9oZWlkaXN0c3RvcmFnZWRlbW9lYXN0dXMuYmxvYi5jb3JlLndpbmRvd3MubmV0L2hlYWx0aC1wbGFuLXBkZnMvTm9ydGh3aW5kX1N0YW5kYXJkX0JlbmVmaXRzX0RldGFpbHMucGRm0'", "count": true, "vectorQueries": [ { "kind": "text", "text": "*", "k": 5, "fields": "text_vector" } ] }

Clean up resources

When you work in your own subscription, it's a good idea to finish a project by removing the resources you no longer need. Resources that are left running can cost you money.

In the Azure portal, select All resources or Resource groups from the left pane to find and manage resources. You can delete resources individually or delete the resource group to remove all resources at once.

Next step

This quickstart introduced you to the Import data wizard, which creates all of the necessary objects for integrated vectorization. To explore each step in detail, see Set up integrated vectorization in Azure AI Search.