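Azure Data Explorer offers ingestion from Event Hubs, a big data streaming platform and event ingestion service. Event Hubs can process millions of events per second in near real time.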

In this article, you connect to an event hub and ingest data into Azure Data Explorer. For an overview of ingesting from Event Hubs, see Azure Event Hubs data connection.

To learn how to create the connection using the Kusto software development kits (SDKs), see Create an Event Hubs data connection with SDKs.

For code samples based on previous SDK versions, see the archived article.

Warning

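The Get data wizard doesn't support creating a data connection to an event hub over a private endpoint or a managed private endpoint. To create the data connection from the Azure portal, follow the instructions in the Portal - Azure Event Hubs tab.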

Create an Event Hubs data connection

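In this section, you establish a connection between the event hub and your Azure Data Explorer table. As long as this connection is in place, data is transmitted from the event hub into your target table. If the event hub is moved to a different resource or subscription, you need to update or recreate the connection.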

Prerequisites

- A Microsoft account or a Microsoft Entra user identity. An Azure subscription isn't required.

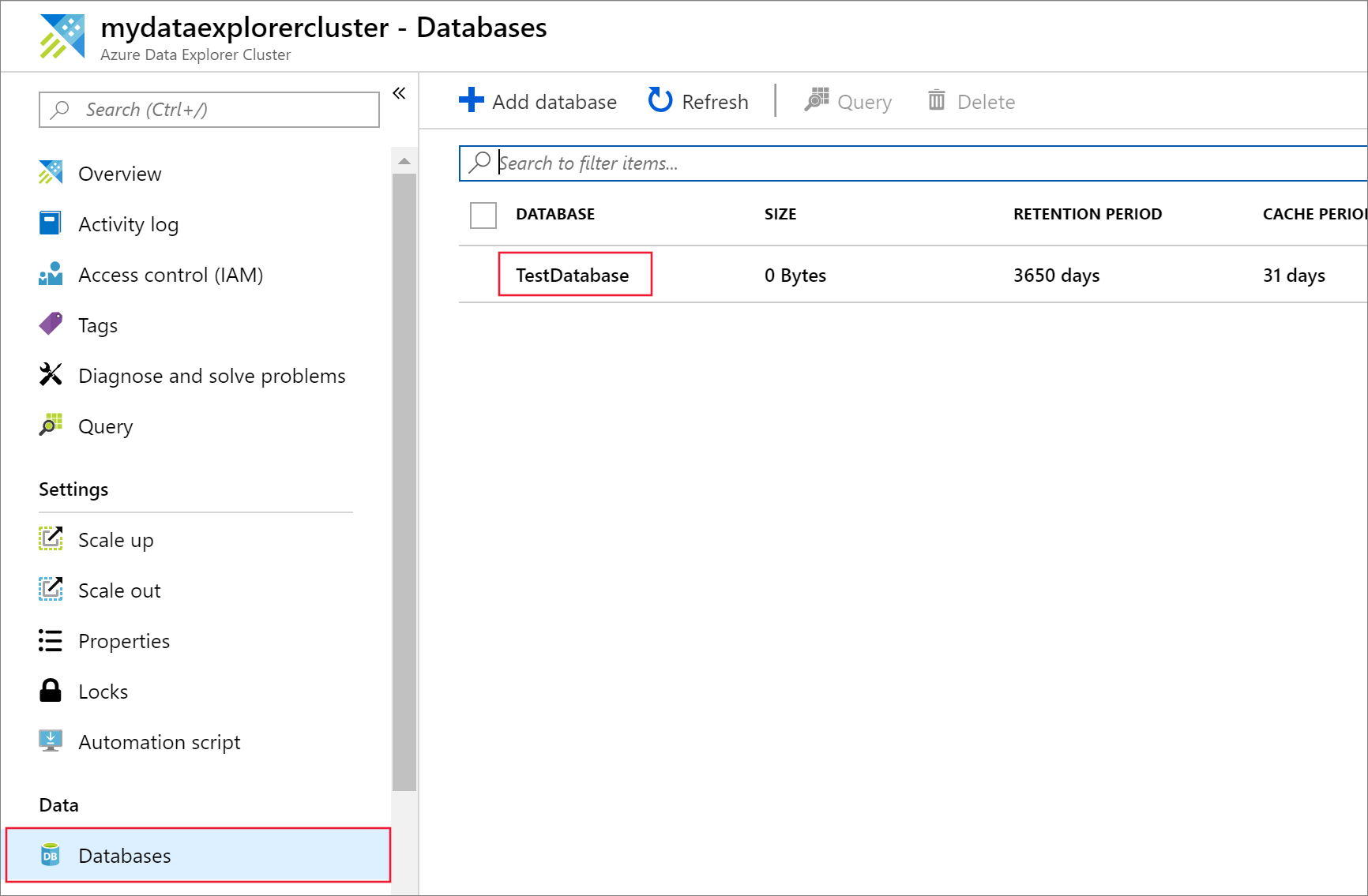

- An Azure Data Explorer cluster and database. See Create a cluster and database.

- Streaming ingestion must be configured on your Azure Data Explorer cluster.

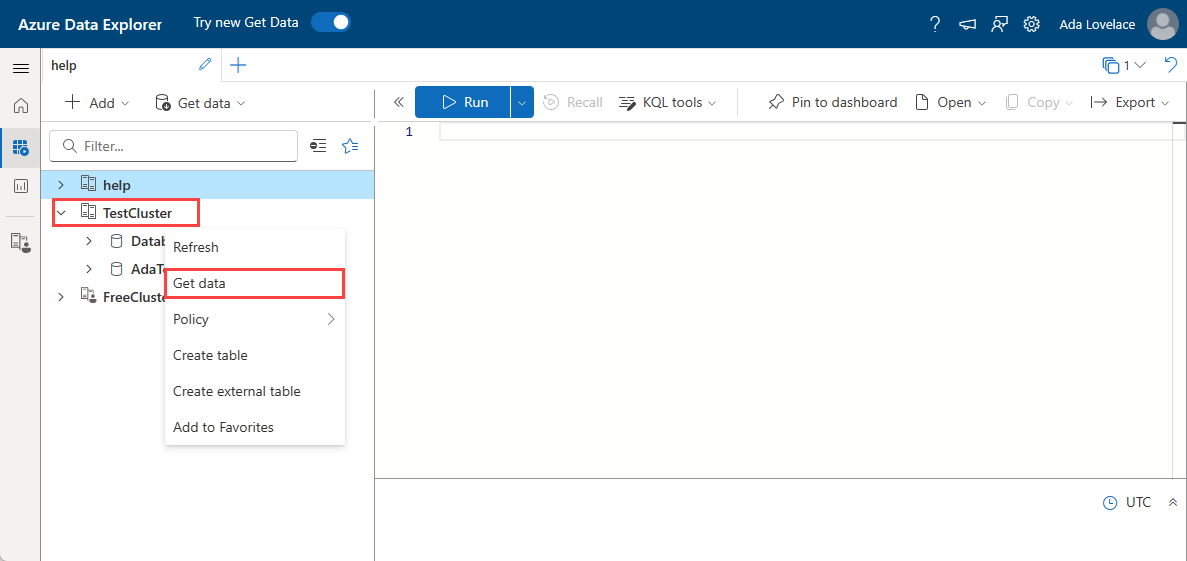

Get data

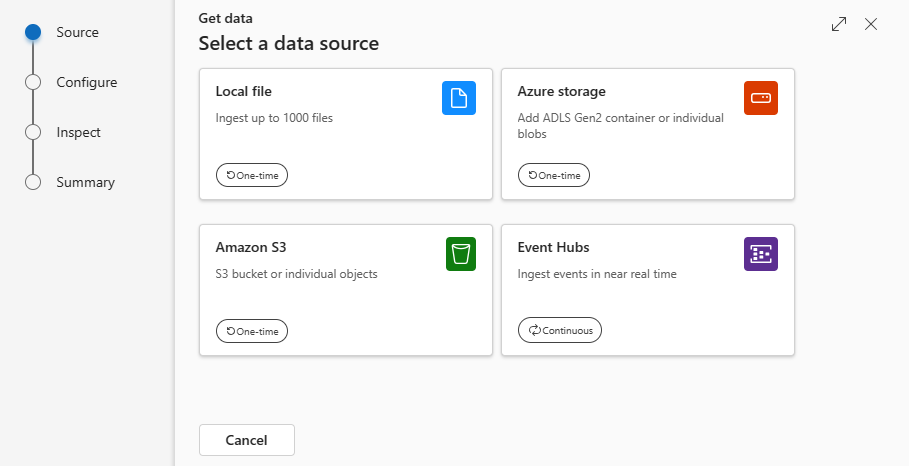

Source

In the Get data window, the Source tab is selected.

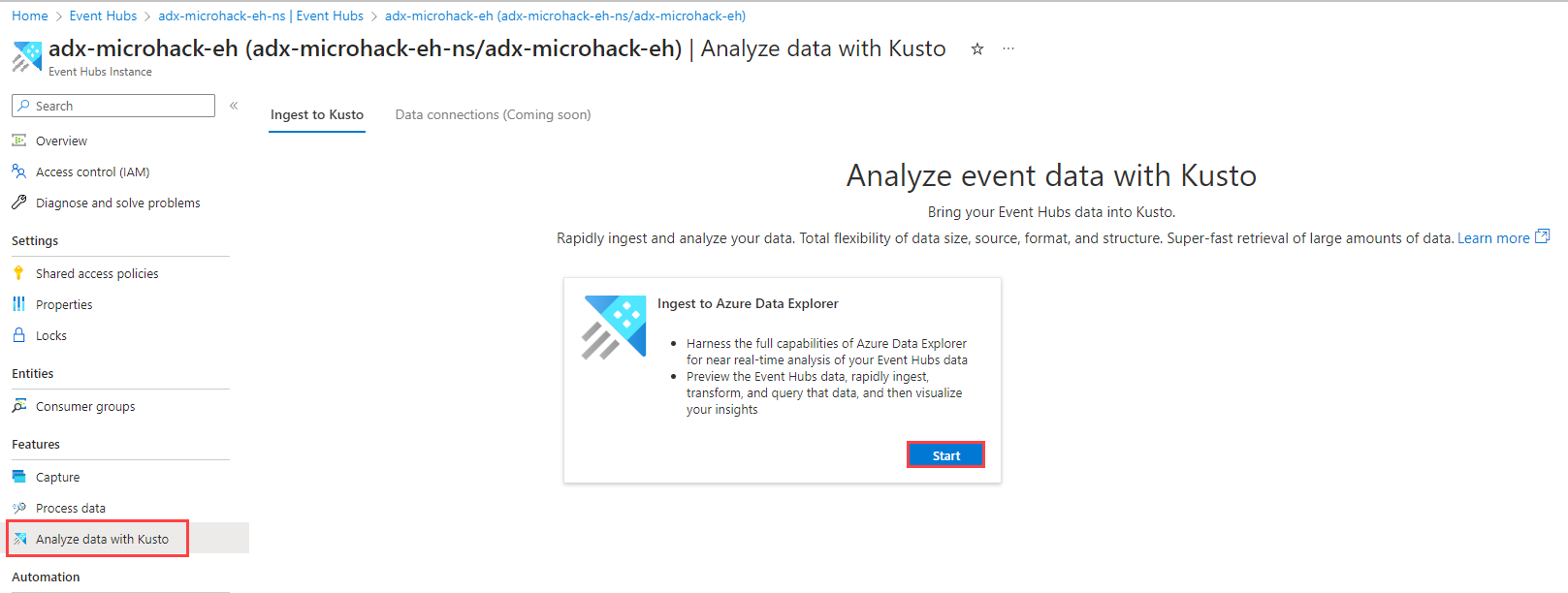

Select the data source from the available list. In this example, you ingest data from Event Hubs.

Configure

Select a target database and table. If you want to ingest data into a new table, select + New table and enter a table name.

Note

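Table names can be up to 1,024 characters long and can include spaces, alphanumeric characters, hyphens, and underscores. Special characters aren't supported.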
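As a rough local pre-check before entering a name in the wizard, the rule in the note above can be written as a regular expression. This is a minimal sketch that assumes "alphanumeric" means ASCII letters and digits; the function name `is_valid_table_name` is hypothetical, and Azure Data Explorer's own validation remains authoritative.

```python
import re

# Hypothetical pre-check based only on the note above:
# 1 to 1,024 characters, limited to spaces, ASCII letters/digits, hyphens, and underscores.
# Treating "alphanumeric" as ASCII-only is an assumption; the service's rules are authoritative.
_TABLE_NAME_PATTERN = re.compile(r"[A-Za-z0-9 _-]{1,1024}")


def is_valid_table_name(name: str) -> bool:
    """Return True if name satisfies the length and character rules from the note."""
    return _TABLE_NAME_PATTERN.fullmatch(name) is not None
```

For example, `is_valid_table_name("raw events-2024_v1")` passes, while a name containing `$` fails.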

Fill out the following fields:

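| Setting | Field description |
|---|---|
| Subscription | The subscription ID where the event hub resource is located. |
| Event hub namespace | The name that identifies your namespace. |
| Event hub | The event hub you want to use. |
| Consumer group | The consumer group defined in your event hub. |
| Data connection name | The name that identifies your data connection. |
| Advanced filters | |
| Compression | The compression type of the event hub message payload. |
| Event system properties | The event hub system properties. If there are multiple records per event message, the system properties are added to the first record. When you add system properties, create or update the table schema and mapping to include the selected properties. |
| Event retrieval start date | The data connection retrieves event hub events created after the Event retrieval start date. Only events retained within the event hub's retention period can be retrieved. If no Event retrieval start date is specified, the default is the time at which the data connection is created. |

Select Next.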
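The interaction between the Event retrieval start date, its default, and the retention period can be illustrated with a small filter. This is a minimal sketch under assumptions: `events_to_retrieve` and its parameters are hypothetical, plain timestamps stand in for event creation times, and because the table only says "after", the inclusive boundary used below is an assumption.

```python
from datetime import datetime, timedelta
from typing import Optional


def events_to_retrieve(
    event_times: list[datetime],
    now: datetime,
    retention: timedelta,
    connection_created: datetime,
    start: Optional[datetime] = None,
) -> list[datetime]:
    """Return the event timestamps a new data connection would pick up (hypothetical model)."""
    # With no explicit start date, fall back to the time the connection was created.
    effective_start = start if start is not None else connection_created
    # Events older than the retention window are no longer held by the event hub.
    oldest_retained = now - retention
    # Inclusive comparisons are an assumption; the source only says "after".
    return [t for t in event_times if t >= effective_start and t >= oldest_retained]
```

For example, with a seven-day retention period, setting the start date a month in the past still returns only the events from the last seven days.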

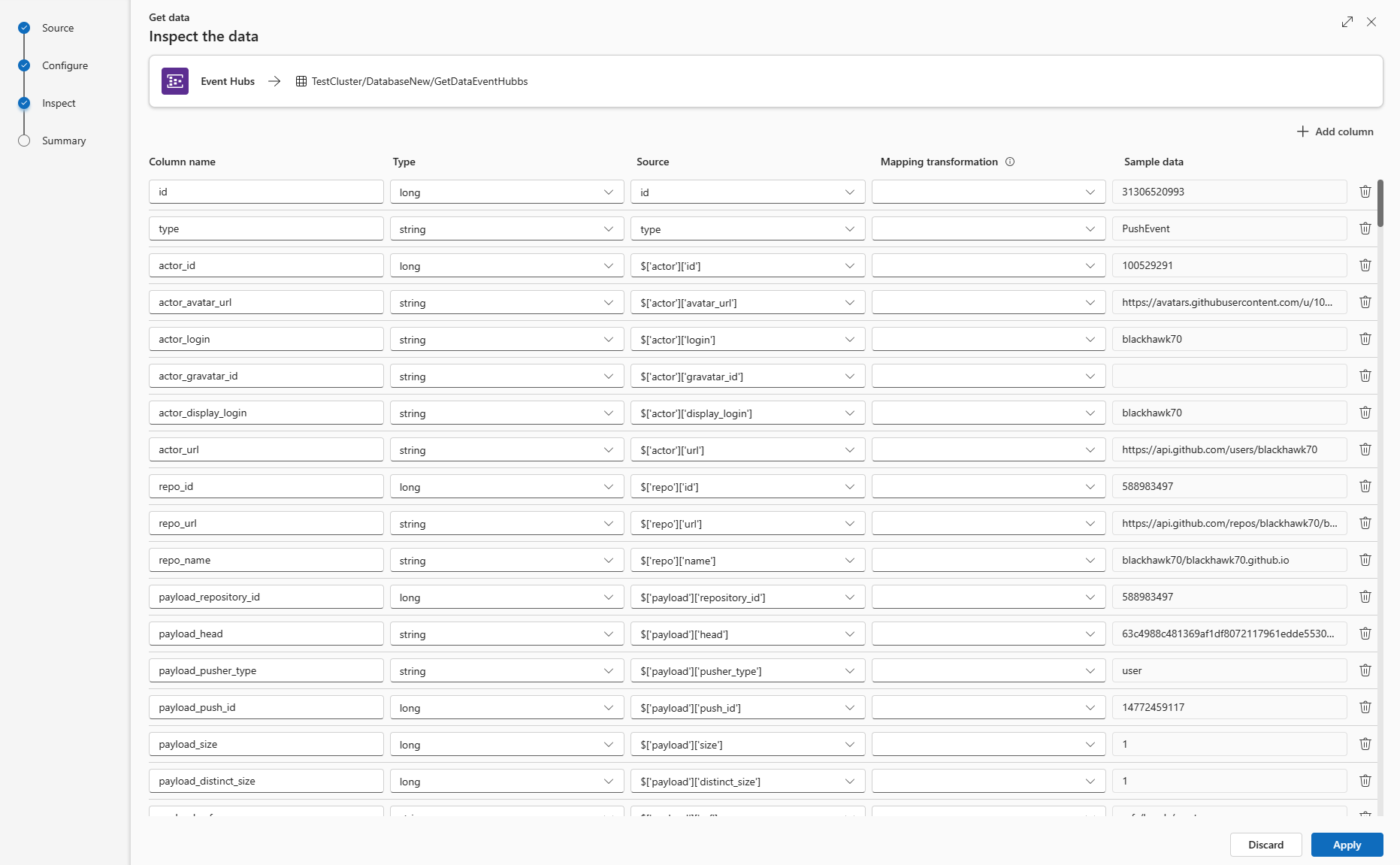

Inspect

The Inspect tab opens with a preview of the data.

To complete the ingestion process, select Finish.

Optionally:

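If the data shown in the preview window is incomplete, you might need more data to create a table with all the necessary data fields. Use the following commands to fetch new data from your event hub: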

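- Discard and fetch new data: Discards the data presented and searches for new events.

- Fetch more data: Searches for more events in addition to the events already found.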

Note

To see a preview of your data, your event hub must be sending events.

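- Select Command viewer to view and copy the automatic commands generated from your inputs.

- Use the Schema definition file dropdown to change the file from which the schema is inferred.

- Change the automatically inferred data format by selecting the desired format from the dropdown. See Data formats supported by Azure Data Explorer for ingestion.

- Edit columns.

- Explore advanced options based on data type.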

Edit columns

Note

- For tabular formats (CSV, TSV, PSV), you can't map a column twice. To map to an existing column, first delete the new column.

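- You can't change the type of an existing column. If you try to map to a column that has a different format, you might end up with empty columns.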

The changes you can make in a table depend on the following parameters:

- Table type is new or existing

- Mapping type is new or existing

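| Table type | Mapping type | Available adjustments |
|---|---|---|
| New table | New mapping | Rename column, change data type, change data source, mapping transformation, add column, delete column |
| Existing table | New mapping | Add column (on which you can then change the data type, rename, and update) |
| Existing table | Existing mapping | None |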

Mapping transformations

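Some data format mappings (Parquet, JSON, and Avro) support simple ingest-time transformations. To apply mapping transformations, create or update a column in the Edit columns window.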

Mapping transformations can be performed on a column of type string or datetime whose source data type is int or long. The supported mapping transformations are:

- DateTimeFromUnixSeconds

- DateTimeFromUnixMilliseconds

- DateTimeFromUnixMicroseconds

- DateTimeFromUnixNanoseconds
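To make the four transformations concrete, the following sketch converts integer Unix timestamps to UTC datetimes in Python. It only illustrates the arithmetic that the transformation names describe; it isn't how Azure Data Explorer implements them, and the function names are hypothetical. Python's `datetime` has microsecond resolution, so the nanosecond variant drops sub-microsecond digits.

```python
from datetime import datetime, timedelta, timezone

# The Unix epoch, 1970-01-01T00:00:00Z.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def datetime_from_unix_seconds(value: int) -> datetime:
    return EPOCH + timedelta(seconds=value)


def datetime_from_unix_milliseconds(value: int) -> datetime:
    return EPOCH + timedelta(milliseconds=value)


def datetime_from_unix_microseconds(value: int) -> datetime:
    return EPOCH + timedelta(microseconds=value)


def datetime_from_unix_nanoseconds(value: int) -> datetime:
    # Python datetimes stop at microseconds, so the last three digits are floored away.
    return EPOCH + timedelta(microseconds=value // 1000)
```

Using integer arithmetic throughout avoids the rounding errors that dividing by 1,000 with floats could introduce.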

Advanced options based on data type

Tabular (CSV, TSV, PSV):

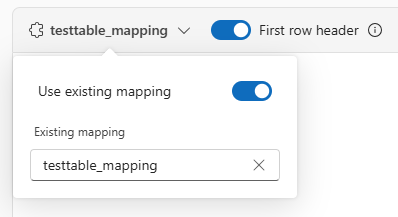

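If you're ingesting a tabular format into an existing table, you can open the Table mapping dropdown and select Use existing mapping. Tabular data doesn't necessarily include the column names used to map source data to the existing columns. When this option is selected, mapping is done by order, and the table schema remains the same.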

Otherwise, create a new mapping.

To use the first row as column names, select First row header.
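The by-order behavior of Use existing mapping, and the First row header option, can be sketched with Python's `csv` module. This is a hypothetical model, not the wizard's implementation: `map_tabular`, its parameters, and the choice to skip (rather than match on) a header row when existing columns are supplied are all assumptions made for illustration.

```python
import csv
import io
from typing import Optional

# Field delimiters for the tabular formats named above.
DELIMITERS = {"csv": ",", "tsv": "\t", "psv": "|"}


def map_tabular(
    text: str,
    fmt: str = "csv",
    first_row_header: bool = False,
    existing_columns: Optional[list[str]] = None,
) -> list[dict[str, str]]:
    """Turn delimited text into records keyed by column name (hypothetical model)."""
    rows = list(csv.reader(io.StringIO(text), delimiter=DELIMITERS[fmt]))
    header = None
    if first_row_header and rows:
        # First row header: take column names from the first row and drop it from the data.
        header, rows = rows[0], rows[1:]
    # Existing mapping: assign values to the table's columns purely by position.
    columns = existing_columns if existing_columns is not None else header
    if columns is None:
        raise ValueError("Provide existing_columns or set first_row_header=True")
    # zip() pairs values with columns by position and stops at the shorter side.
    return [dict(zip(columns, row)) for row in rows]
```

For example, `map_tabular("a,1\nb,2", existing_columns=["Name", "Count"])` assigns the first value of each row to `Name` and the second to `Count`, regardless of any names in the data.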

JSON:

- To determine how JSON data is divided into columns, select Nested levels, from 1 to 100.
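One way to picture Nested levels is as the depth to which JSON objects are expanded into separate columns, with anything deeper kept as a single value. The sketch below assumes that interpretation and joins nested keys with an underscore; both the naming scheme and the function `flatten_json` are hypothetical and may differ from the columns the wizard actually proposes.

```python
from typing import Any


def flatten_json(obj: dict[str, Any], levels: int, prefix: str = "") -> dict[str, Any]:
    """Expand nested objects into columns down to `levels` deep (hypothetical model)."""
    if not 1 <= levels <= 100:
        raise ValueError("levels must be between 1 and 100")
    columns: dict[str, Any] = {}
    for key, value in obj.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict) and levels > 1:
            # Descend one level; nested keys are joined with "_" (an assumed naming scheme).
            columns.update(flatten_json(value, levels - 1, prefix=f"{name}_"))
        else:
            # At the last level, keep the remaining value (object, array, or scalar) whole.
            columns[name] = value
    return columns
```

With `{"a": {"b": 1, "c": {"d": 2}}, "e": 3}`, level 1 yields the columns `a` and `e`, level 2 yields `a_b`, `a_c`, and `e`, and level 3 expands fully to `a_b`, `a_c_d`, and `e`.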

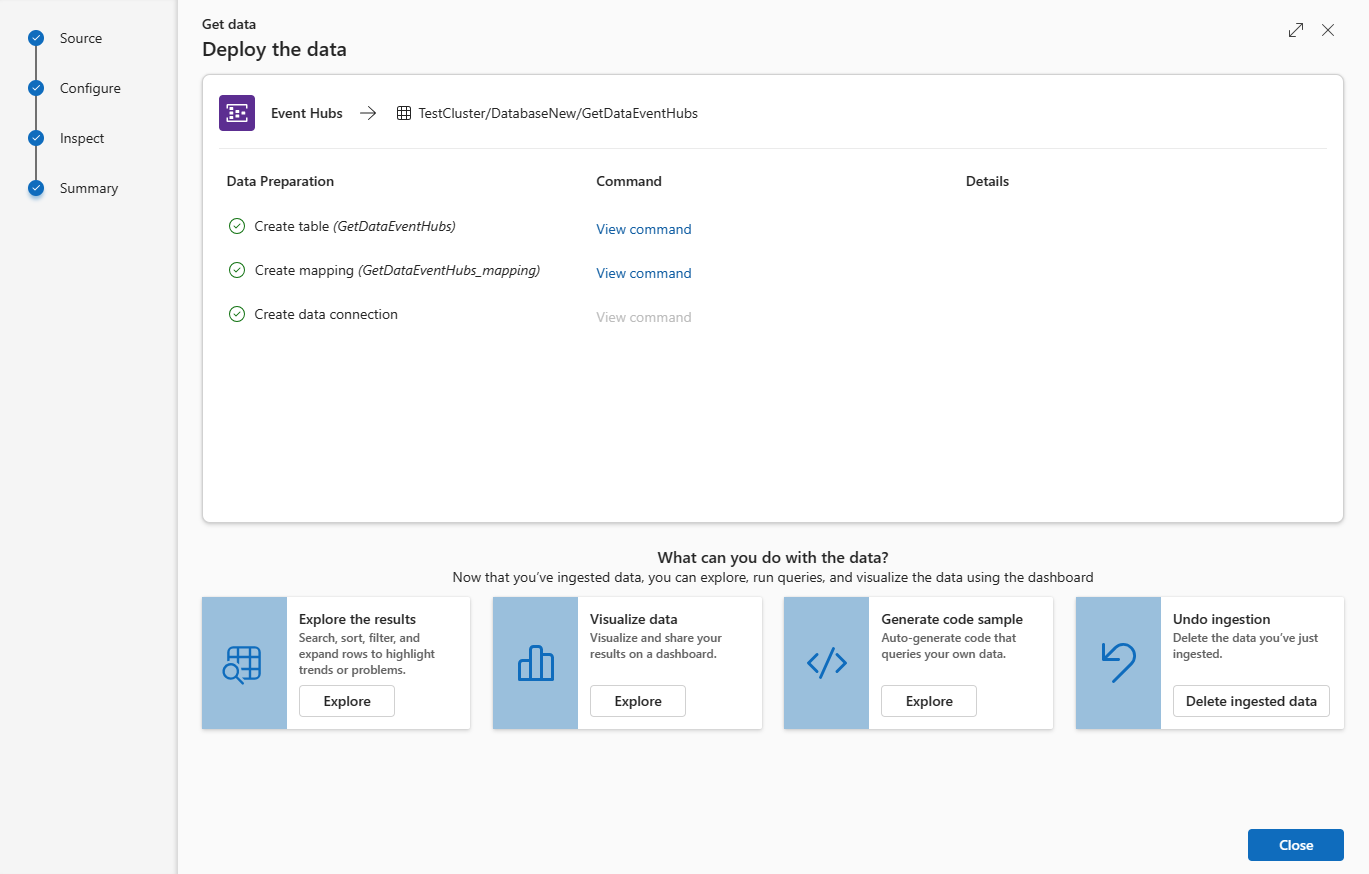

Summary

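If data ingestion completes successfully, all three steps in the Data preparation window are marked with green check marks. You can view the commands used for each step, or select a card to query, visualize, or drop the ingested data.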