Applies to: Azure Data Factory, Azure Synapse Analytics

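This article shows how to use Data Factory to load data from Microsoft 365 (Office 365) into Azure Blob Storage. You can follow similar steps to copy data to Azure Data Lake Storage Gen2. For general information about copying data from Microsoft 365 (Office 365), see the Microsoft 365 (Office 365) connector article.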

Create a data factory

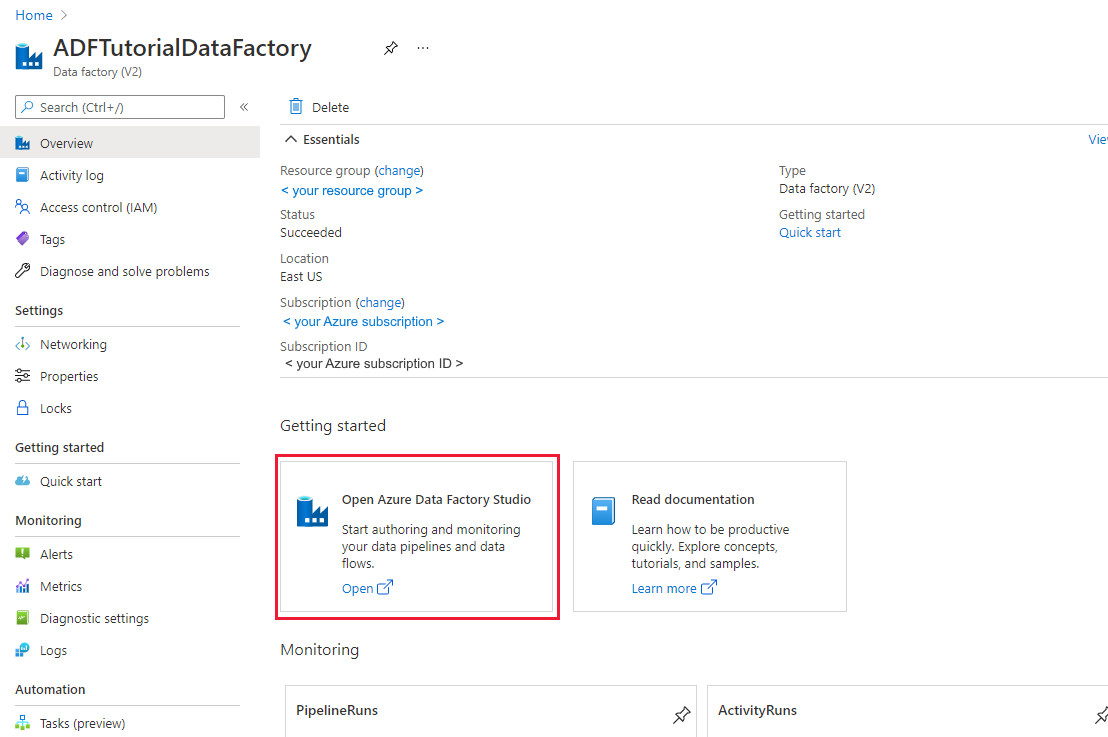

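If you have not created a data factory yet, follow the steps in Quickstart: Create a data factory by using the Azure portal and Azure Data Factory Studio. After it is created, browse to the data factory in the Azure portal.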

On the Open Azure Data Factory Studio tile, select Open to launch the Data Integration application in a separate tab.

Create a pipeline

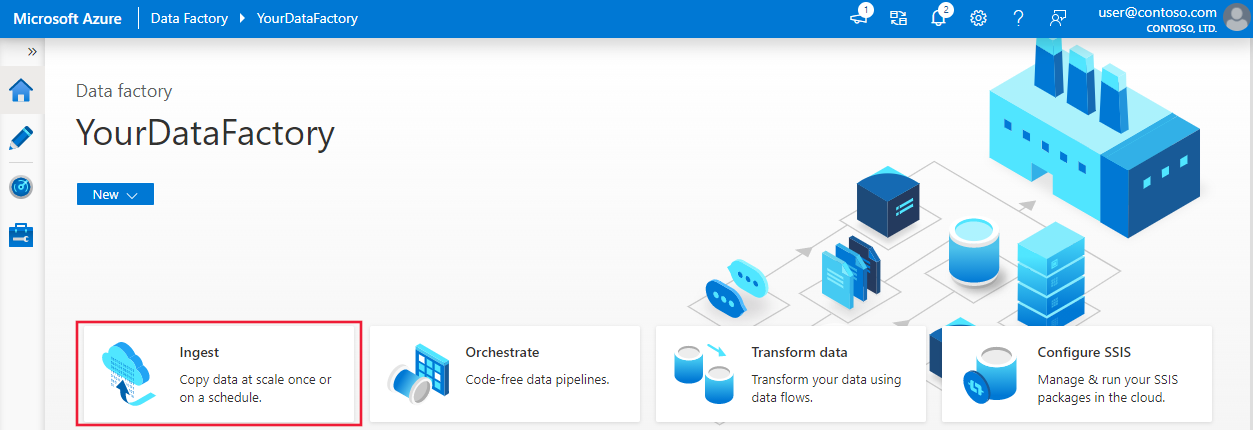

On the home page, select Orchestrate.

In the General tab of the pipeline, enter "CopyPipeline" as the pipeline name.

In the Activities toolbox, expand the Move & Transform category, and drag the Copy activity onto the pipeline designer surface. Specify "CopyFromOffice365ToBlob" as the activity name.

Note

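Use the Azure integration runtime in both the source and sink linked services. The self-hosted integration runtime and the managed virtual network integration runtime are not supported.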

Configure the source

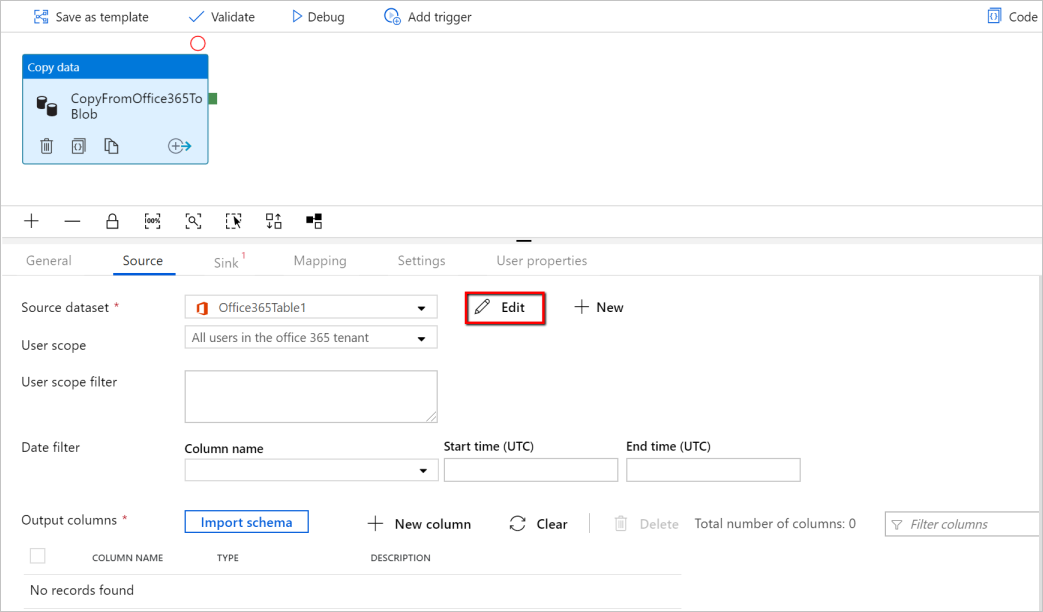

Go to the pipeline > Source tab, and select + New to create a source dataset.

In the New Dataset window, select Microsoft 365 (Office 365), and then select Continue.

You are now in the copy activity configuration tab. Select the Edit button next to the Microsoft 365 (Office 365) dataset to continue the data configuration.

A new tab opens for the Microsoft 365 (Office 365) dataset. In the General tab at the bottom of the Properties window, enter "SourceOffice365Dataset" as the name.

Go to the Connection tab of the Properties window. Next to the Linked service text box, select + New.

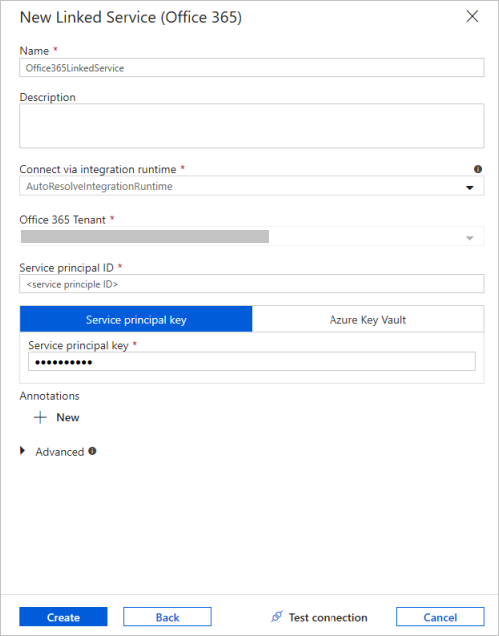

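In the New Linked Service window, enter "Office365LinkedService" as the name, enter the service principal ID and service principal key, test the connection, and then select Create to deploy the linked service.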
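Data Factory stores the linked service you just created as a JSON definition. The Python sketch below builds a comparable definition as a plain dictionary so the shape is easier to see. The property names (`office365TenantId`, `servicePrincipalTenantId`, `servicePrincipalId`, `servicePrincipalKey`) reflect our understanding of the Microsoft 365 (Office 365) connector's JSON format and should be checked against the connector article; the function name and all argument values are hypothetical placeholders.

```python
import json


def office365_linked_service(
    name: str,
    office365_tenant_id: str,
    sp_tenant_id: str,
    sp_id: str,
    sp_key: str,
) -> dict:
    """Return a sketch (assumed shape) of an Office365 linked service definition."""
    return {
        "name": name,
        "properties": {
            "type": "Office365",
            "typeProperties": {
                # Tenant that owns the Microsoft 365 data being extracted.
                "office365TenantId": office365_tenant_id,
                # Tenant, application ID, and secret of the service principal.
                "servicePrincipalTenantId": sp_tenant_id,
                "servicePrincipalId": sp_id,
                "servicePrincipalKey": {"type": "SecureString", "value": sp_key},
            },
            # No "connectVia" block: the default Azure integration runtime is used,
            # consistent with the requirement to use an Azure IR for this connector.
        },
    }


if __name__ == "__main__":
    ls = office365_linked_service(
        "Office365LinkedService",
        "<m365-tenant-id>",
        "<sp-tenant-id>",
        "<sp-app-id>",
        "<sp-secret>",
    )
    print(json.dumps(ls, indent=2))
```

In practice the key would come from a secret store such as Azure Key Vault rather than a literal string; the literal here only keeps the sketch self-contained.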

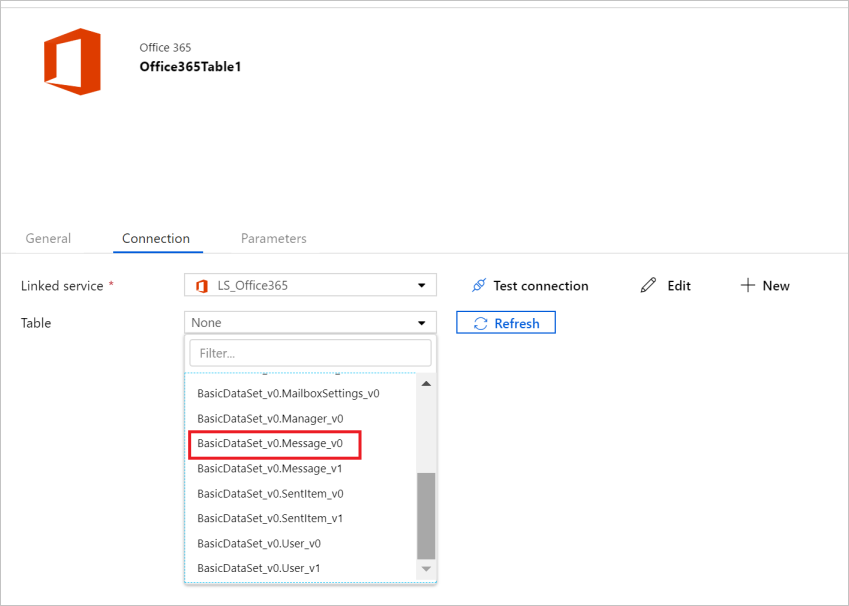

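After the linked service is created, you return to the dataset settings. Next to Table, select the down arrow to expand the list of available Microsoft 365 (Office 365) datasets, and then select "BasicDataSet_v0.Message_v0" from the drop-down list: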
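The dataset selected here can be pictured as the following JSON, sketched in Python. We assume the dataset type is `Office365Table` and that the chosen table is stored in `typeProperties.tableName` as the fully qualified name shown in the drop-down list; treat these names as assumptions to verify against the connector article. The helper function is hypothetical.

```python
import json


def office365_dataset(
    name: str,
    linked_service_name: str,
    table_name: str = "BasicDataSet_v0.Message_v0",
) -> dict:
    """Return a sketch (assumed shape) of an Office365Table dataset definition."""
    return {
        "name": name,
        "properties": {
            "type": "Office365Table",
            # Points the dataset at the Microsoft 365 linked service.
            "linkedServiceName": {
                "referenceName": linked_service_name,
                "type": "LinkedServiceReference",
            },
            # Fully qualified table name, as picked from the Table drop-down list.
            "typeProperties": {"tableName": table_name},
        },
    }


if __name__ == "__main__":
    ds = office365_dataset("SourceOffice365Dataset", "Office365LinkedService")
    print(json.dumps(ds, indent=2))
```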

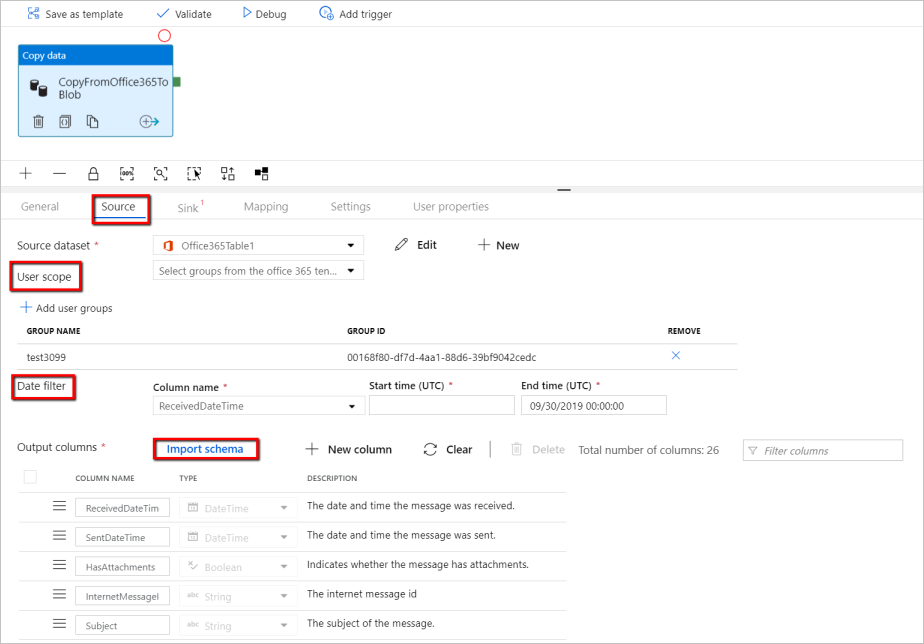

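Now return to the pipeline > Source tab to configure the remaining properties for Microsoft 365 (Office 365) data extraction. User scope and user scope filter are optional predicates that you can define to restrict the data extracted from Microsoft 365 (Office 365). For how to configure these settings, see the Microsoft 365 (Office 365) dataset properties section.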

You must select a date filter and provide both a start time and an end time.
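The following Python sketch shows one way the source settings from this step could be represented, and checks the date window before it is used. We assume the copy source type is `Office365Source`, that the date filter maps to `dateFilterColumn`, `startTime`, and `endTime`, and that user scope and user scope filter map to `allowedGroups` and `userScopeFilterUri`; confirm these names in the connector article. `CreatedDateTime` is only an example column, and the helper itself is hypothetical.

```python
from datetime import datetime, timezone
from typing import Optional, Sequence


def office365_source(
    date_filter_column: str,
    start: datetime,
    end: datetime,
    allowed_groups: Optional[Sequence[str]] = None,
    user_scope_filter_uri: Optional[str] = None,
) -> dict:
    """Return a sketch (assumed shape) of Microsoft 365 copy source settings."""
    # The date filter is required, so reject ambiguous or inverted windows early.
    if start.tzinfo is None or end.tzinfo is None:
        raise ValueError("start and end must be timezone-aware")
    if start >= end:
        raise ValueError("start must be earlier than end")

    def iso_utc(value: datetime) -> str:
        # Normalize to UTC and format as an ISO 8601 timestamp with a Z suffix.
        return value.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")

    source = {
        "type": "Office365Source",
        "dateFilterColumn": date_filter_column,
        "startTime": iso_utc(start),
        "endTime": iso_utc(end),
    }
    # Optional predicates that narrow whose data is extracted.
    if allowed_groups:
        source["allowedGroups"] = list(allowed_groups)  # user scope (group IDs)
    if user_scope_filter_uri:
        source["userScopeFilterUri"] = user_scope_filter_uri  # user scope filter
    return source


if __name__ == "__main__":
    print(
        office365_source(
            "CreatedDateTime",
            datetime(2019, 4, 28, 16, tzinfo=timezone.utc),
            datetime(2019, 5, 5, 16, tzinfo=timezone.utc),
        )
    )
```

Validating the window locally is a design choice of this sketch; it simply surfaces a missing or inverted date range before a run is started.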

Select the Import Schema tab to import the schema of the Message dataset.

Configure the sink

Go to the pipeline > Sink tab, and select + New to create a sink dataset.

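In the New Dataset window, notice that only the destinations supported for copying from Microsoft 365 (Office 365) are available. Select Azure Blob Storage, select the Binary format, and then select Continue. In this tutorial, you copy Microsoft 365 (Office 365) data into Azure Blob Storage.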

Select the Edit button next to the Azure Blob Storage dataset to continue the data configuration.

On the General tab of the Properties window, enter "OutputBlobDataset" as the name.
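Because the sink uses the Binary format, the resulting dataset is a Binary dataset that points at a location in Blob Storage. The Python sketch below illustrates that shape. We assume the `Binary` dataset type with an `AzureBlobStorageLocation` location; the container name `office365-output` and folder `messages` are hypothetical, as is the helper function.

```python
import json


def binary_blob_dataset(
    name: str, linked_service_name: str, container: str, folder_path: str
) -> dict:
    """Return a sketch (assumed shape) of a Binary dataset in Azure Blob Storage."""
    return {
        "name": name,
        "properties": {
            "type": "Binary",
            "linkedServiceName": {
                "referenceName": linked_service_name,
                "type": "LinkedServiceReference",
            },
            "typeProperties": {
                # Where the extracted files are written inside the storage account.
                "location": {
                    "type": "AzureBlobStorageLocation",
                    "container": container,
                    "folderPath": folder_path,
                }
            },
        },
    }


if __name__ == "__main__":
    ds = binary_blob_dataset(
        "OutputBlobDataset", "AzureStorageLinkedService", "office365-output", "messages"
    )
    print(json.dumps(ds, indent=2))
```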

Go to the Connection tab of the Properties window. Next to the Linked service text box, select + New.

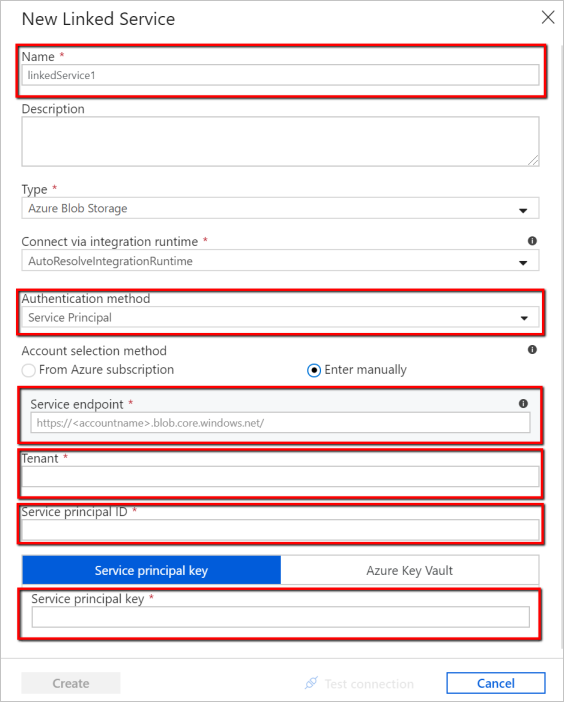

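In the New Linked Service window, enter "AzureStorageLinkedService" as the name, select "Service Principal" from the authentication method drop-down list, fill in Service Endpoint, Tenant, Service principal ID, and Service principal key, and then select Save to deploy the linked service. For how to set up service principal authentication for Azure Blob Storage, see the Azure Blob Storage connector article.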
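Each field in this dialog maps to a property of the linked service definition. The Python sketch below shows that mapping under the assumption that the Azure Blob Storage connector uses `serviceEndpoint`, `tenant`, `servicePrincipalId`, and `servicePrincipalKey` for service principal authentication; verify the names in the Azure Blob Storage connector article. The storage account name and the helper are hypothetical.

```python
import json


def blob_linked_service_sp(
    name: str, account_name: str, tenant: str, sp_id: str, sp_key: str
) -> dict:
    """Return a sketch (assumed shape) of a Blob linked service using a service principal."""
    return {
        "name": name,
        "properties": {
            "type": "AzureBlobStorage",
            "typeProperties": {
                # Service Endpoint field: the storage account's blob endpoint.
                "serviceEndpoint": f"https://{account_name}.blob.core.windows.net/",
                # Tenant, Service principal ID, and Service principal key fields.
                "tenant": tenant,
                "servicePrincipalId": sp_id,
                "servicePrincipalKey": {"type": "SecureString", "value": sp_key},
            },
        },
    }


if __name__ == "__main__":
    ls = blob_linked_service_sp(
        "AzureStorageLinkedService", "mystorageaccount", "<tenant-id>", "<sp-app-id>", "<sp-secret>"
    )
    print(json.dumps(ls, indent=2))
```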

Validate the pipeline

To validate the pipeline, select Validate from the toolbar.

You can also view the JSON code associated with the pipeline by selecting Code in the upper-right corner.
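As a rough guide to what that JSON contains, the Python sketch below assembles a pipeline with a single Copy activity that reads from the Microsoft 365 dataset and writes to the Binary dataset. We assume a `BinarySink` with `AzureBlobStorageWriteSettings`; the JSON shown in the Code view may include additional properties (for example, activity policy settings), so treat this as an approximation rather than the exact generated definition. The helper function is hypothetical.

```python
import json


def copy_pipeline(
    pipeline_name: str,
    activity_name: str,
    source_dataset: str,
    sink_dataset: str,
    source_settings: dict,
) -> dict:
    """Return a sketch (assumed shape) of a pipeline with one Copy activity."""

    def ref(name: str) -> dict:
        return {"referenceName": name, "type": "DatasetReference"}

    return {
        "name": pipeline_name,
        "properties": {
            "activities": [
                {
                    "name": activity_name,
                    "type": "Copy",
                    "inputs": [ref(source_dataset)],
                    "outputs": [ref(sink_dataset)],
                    "typeProperties": {
                        "source": source_settings,
                        # Binary sink that writes the extracted files to Blob Storage.
                        "sink": {
                            "type": "BinarySink",
                            "storeSettings": {"type": "AzureBlobStorageWriteSettings"},
                        },
                    },
                }
            ]
        },
    }


if __name__ == "__main__":
    pipeline = copy_pipeline(
        "CopyPipeline",
        "CopyFromOffice365ToBlob",
        "SourceOffice365Dataset",
        "OutputBlobDataset",
        {
            "type": "Office365Source",
            "dateFilterColumn": "CreatedDateTime",
            "startTime": "2019-04-28T16:00:00Z",
            "endTime": "2019-05-05T16:00:00Z",
        },
    )
    print(json.dumps(pipeline, indent=2))
```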

Publish the pipeline

In the top toolbar, select Publish All. This action publishes the entities you created (datasets and pipelines) to Data Factory.

Trigger the pipeline manually

Select Add Trigger on the toolbar, and then select Trigger Now. On the Pipeline Run page, select Finish.
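Trigger Now has a programmatic counterpart in the Azure Resource Manager REST API (the Pipelines - Create Run operation). The Python sketch below only builds the request URL; actually sending it requires an HTTP `POST` with a Microsoft Entra bearer token, which is out of scope here. The subscription ID, resource group, and factory name in the usage line are placeholders, and `2018-06-01` is the Data Factory API version we assume.

```python
from urllib.parse import quote

API_VERSION = "2018-06-01"  # assumed Data Factory REST API version


def create_run_url(
    subscription_id: str, resource_group: str, factory: str, pipeline: str
) -> str:
    """Build the ARM URL for starting a pipeline run (Pipelines - Create Run)."""
    # Percent-encode each path segment so unusual names cannot break the URL.
    parts = [quote(p, safe="") for p in (subscription_id, resource_group, factory, pipeline)]
    return (
        "https://management.azure.com/subscriptions/{}/resourceGroups/{}"
        "/providers/Microsoft.DataFactory/factories/{}/pipelines/{}/createRun"
        "?api-version={}".format(*parts, API_VERSION)
    )


if __name__ == "__main__":
    print(create_run_url("<subscription-id>", "<resource-group>", "<factory-name>", "CopyPipeline"))
```

A successful call returns a run ID, which you can then use to look up the run shown on the Monitor tab.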

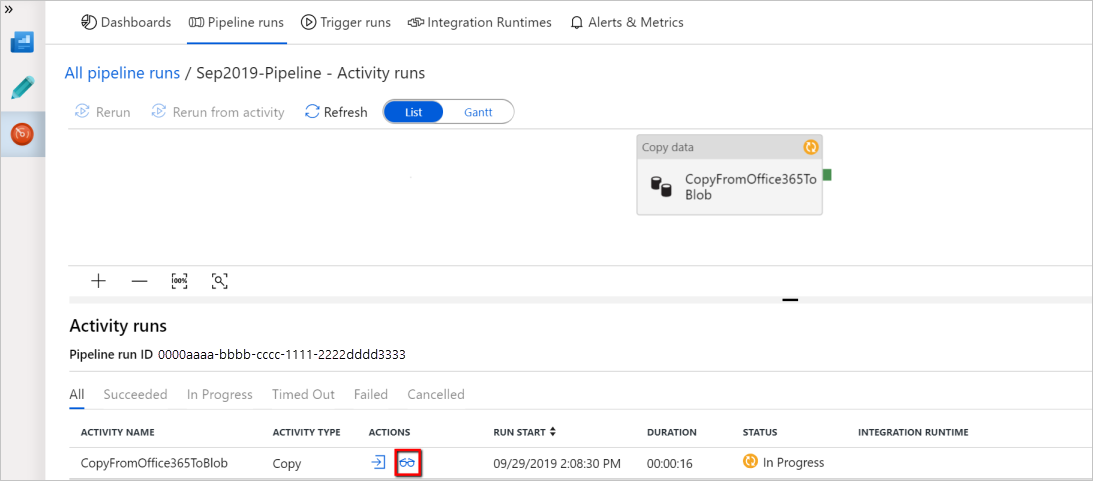

Monitor the pipeline

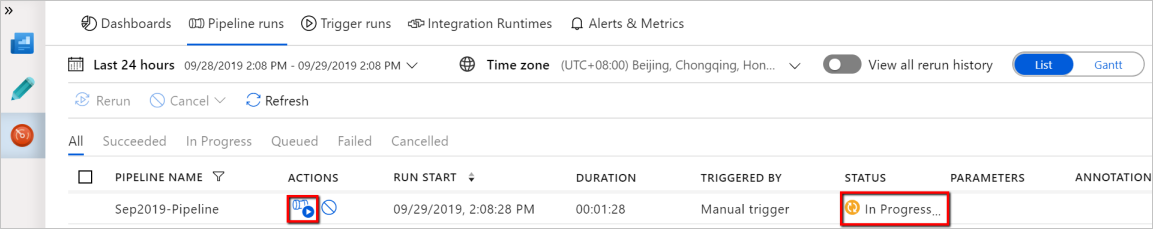

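Go to the Monitor tab on the left. You see the pipeline run started by the manual trigger. You can use the links in the Actions column to view activity details and to rerun the pipeline.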

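To see the activity runs associated with the pipeline run, select the View Activity Runs link in the Actions column. This example has only one activity, so the list contains only one entry. For details about the copy operation, select the Details link (eyeglasses icon) in the Actions column.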

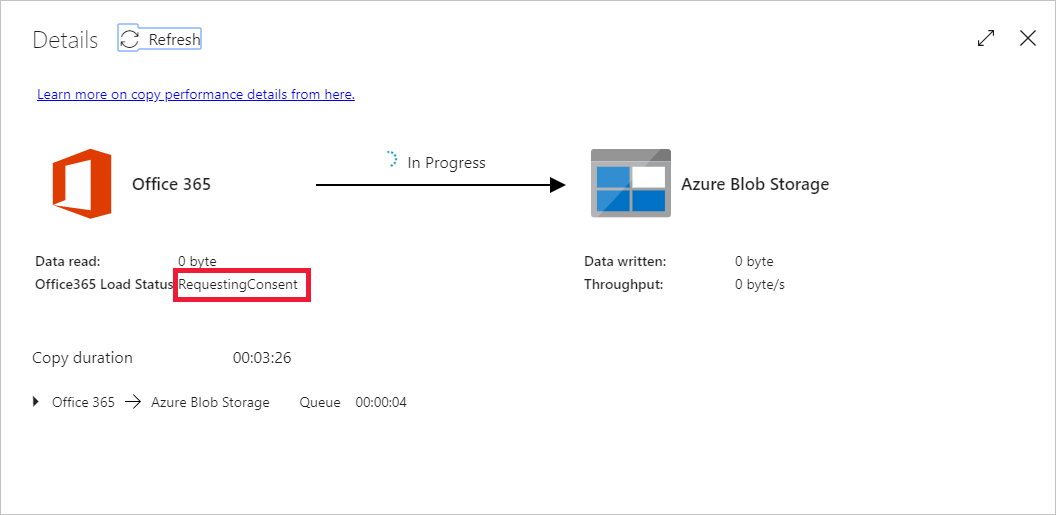

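If this is the first time you are requesting data for this context (the combination of the data table being accessed, the destination account the data is loaded into, and the user identity making the data access request), the copy activity status shows as In Progress. You see the status RequestingConsent only when you select the Details link under Actions. A member of the data access approver group must approve the request in Privileged Access Management before data extraction can proceed.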

Status while requesting consent:

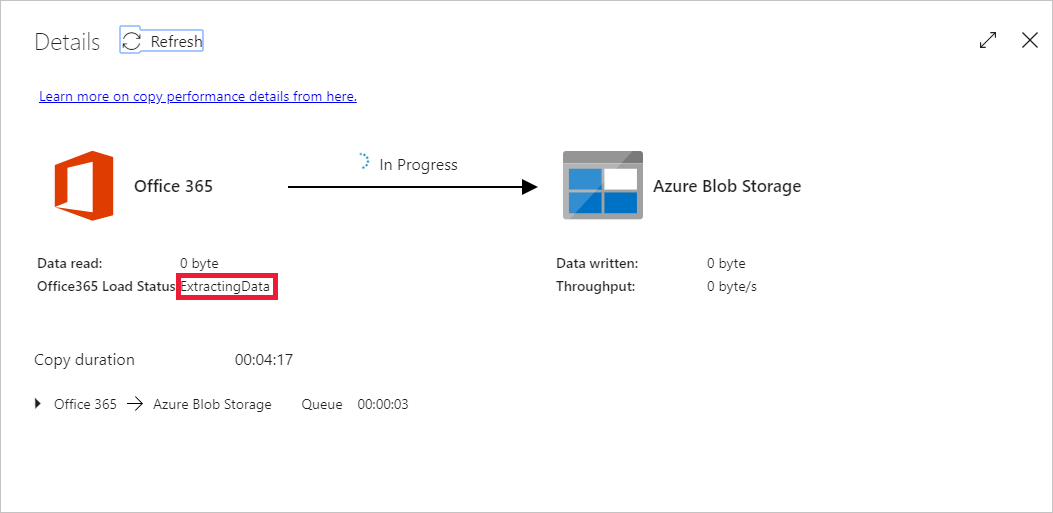

Status while extracting data:

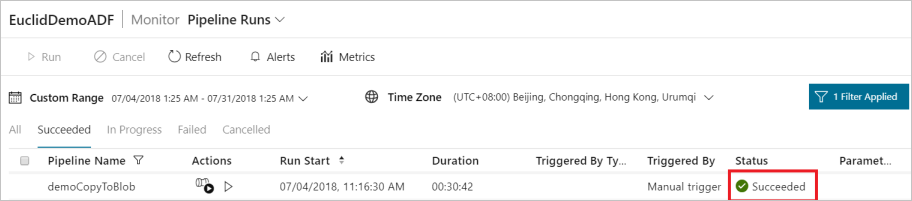

After consent is granted, data extraction continues, and after some time the pipeline run shows as Succeeded.
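Because the run can sit in progress while it waits for an approver, any automation that watches it should poll patiently instead of failing fast. The Python sketch below shows a generic polling loop. `get_status` is a hypothetical callable you would supply (for example, one that wraps the Pipeline Runs - Get REST operation); the terminal status names and the 24-hour default timeout are assumptions chosen for illustration.

```python
import time
from typing import Callable

# Pipeline run statuses after which no further change is expected (assumed names).
TERMINAL_STATUSES = {"Succeeded", "Failed", "Cancelled"}


def wait_for_pipeline_run(
    get_status: Callable[[], str],
    poll_seconds: float = 60.0,
    timeout_seconds: float = 24 * 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll get_status() until the run reaches a terminal status or time runs out."""
    waited = 0.0
    while True:
        status = get_status()
        if status in TERMINAL_STATUSES:
            return status
        # While consent is pending, the run keeps reporting an in-progress status,
        # so keep polling rather than treating the wait as a failure.
        if waited >= timeout_seconds:
            raise TimeoutError(f"run still {status!r} after {waited:.0f}s")
        sleep(poll_seconds)
        waited += poll_seconds
```

The `sleep` parameter is injectable so the loop can be exercised without real delays, for example `wait_for_pipeline_run(lambda: "Succeeded", sleep=lambda s: None)`.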

Now go to the destination Azure Blob Storage account and verify that the Microsoft 365 (Office 365) data has been extracted in Binary format.

Related content

Advance to the following article to learn about Azure Synapse Analytics support: