Applies to: Azure Data Factory, Azure Synapse Analytics

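This article outlines how to copy data from Google Cloud Storage (GCS). To learn more, read the introductory articles for Azure Data Factory and Synapse Analytics.

Supported capabilities

This Google Cloud Storage connector supports the following capabilities: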

| Supported capabilities | IR |
|---|---|
| Copy activity (source/-) | ① ② |
| Lookup activity | ① ② |
| GetMetadata activity | ① ② |
| Delete activity | ① ② |

① Azure integration runtime ② Self-hosted integration runtime

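Specifically, this Google Cloud Storage connector supports copying files as is, or parsing files with the supported file formats and compression codecs. It takes advantage of GCS's S3-compatible interoperability.

Prerequisites

The following setup is required on your Google Cloud Storage account: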

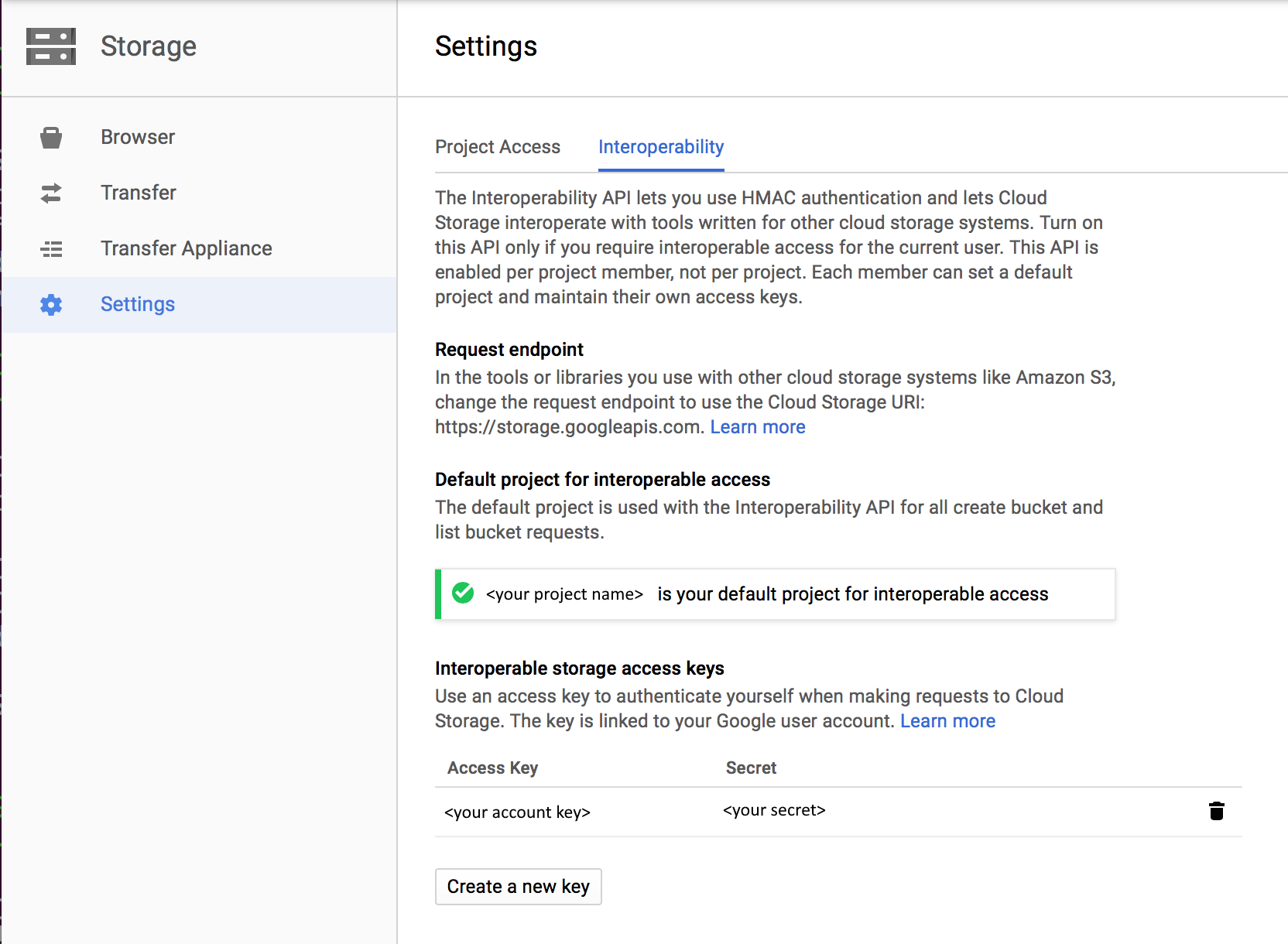

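- Enable interoperability for your Google Cloud Storage account.

- Set the default project to the one that contains the data you want to copy from the target GCS bucket.

- Create a service account and define the right levels of permissions by using Cloud IAM (Identity and Access Management) on GCP.

- Generate the access keys for this service account.

Required permissions

To copy data from Google Cloud Storage, make sure you've been granted the following permissions for object operations: storage.objects.get and storage.objects.list.

If you use the UI to author, the storage.buckets.list permission is also required for testing the connection to the linked service and for browsing from the root. If you don't want to grant this permission, choose the "Test connection to file path" or "Browse from specified path" option in the UI instead.

For the full list of Google Cloud Storage roles and associated permissions, see IAM roles for Cloud Storage on the Google Cloud site.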

Getting started

To perform the Copy activity with a pipeline, you can use one of the following tools or SDKs:

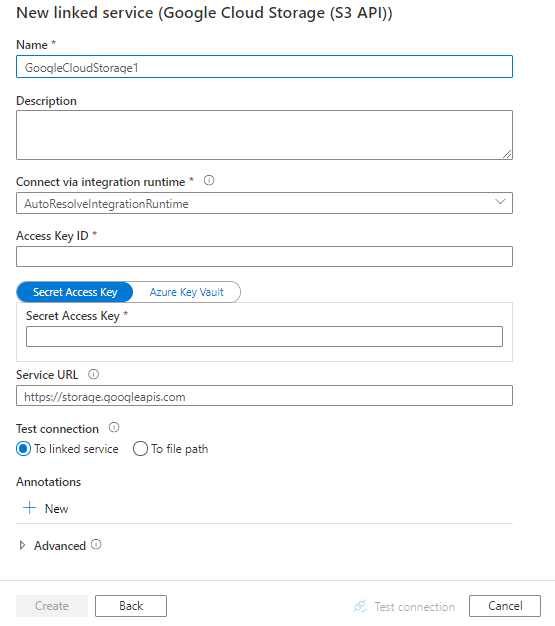

Create a linked service to Google Cloud Storage using the UI

Use the following steps to create a linked service to Google Cloud Storage in the Azure portal UI.

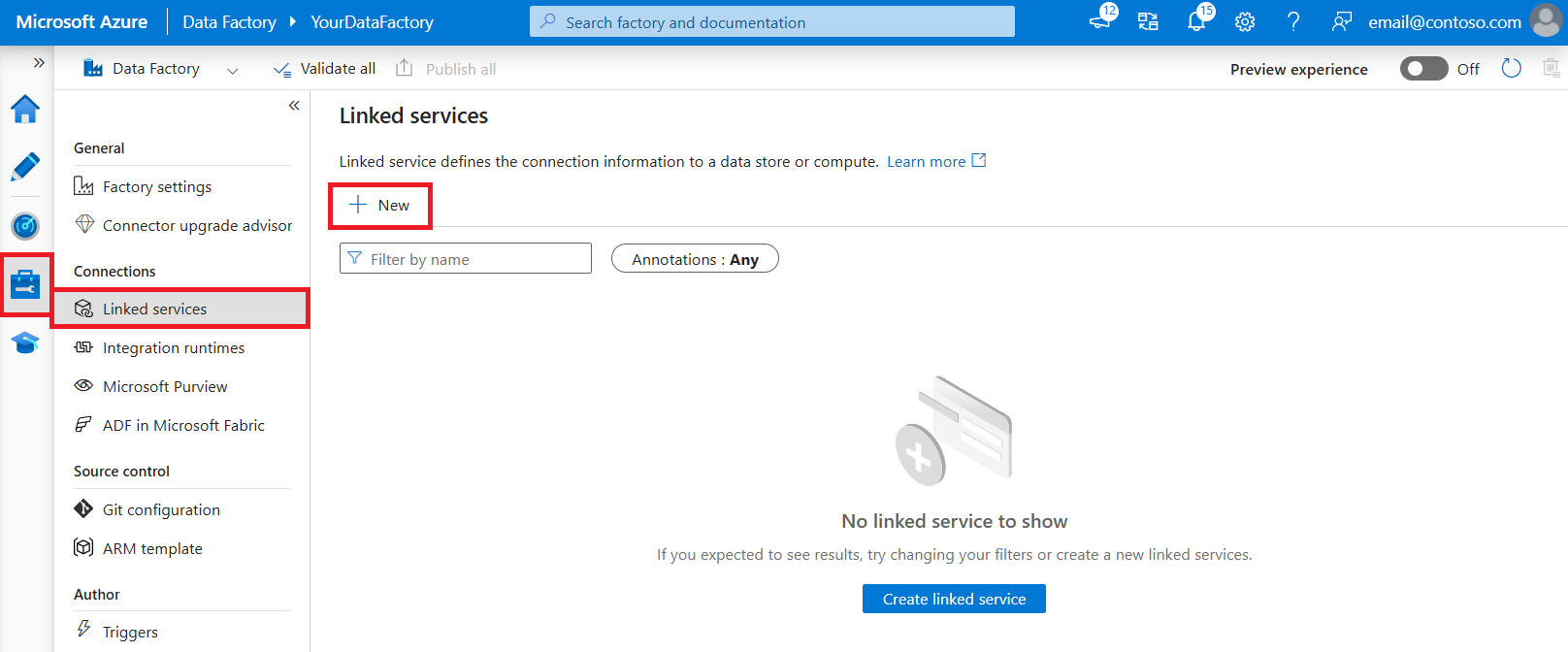

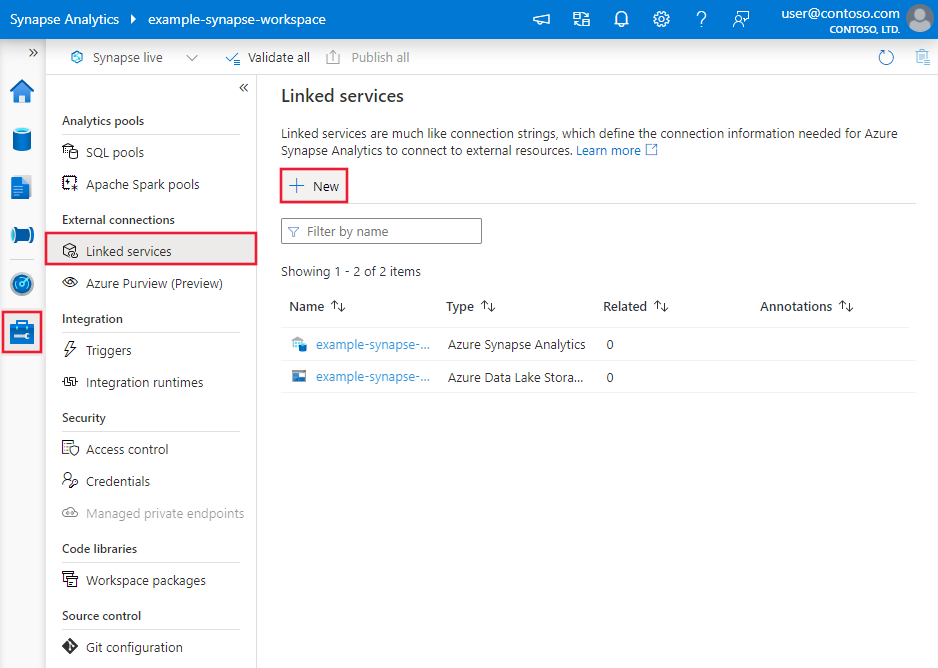

Browse to the Manage tab in your Azure Data Factory or Synapse workspace, select Linked services, and then select New:

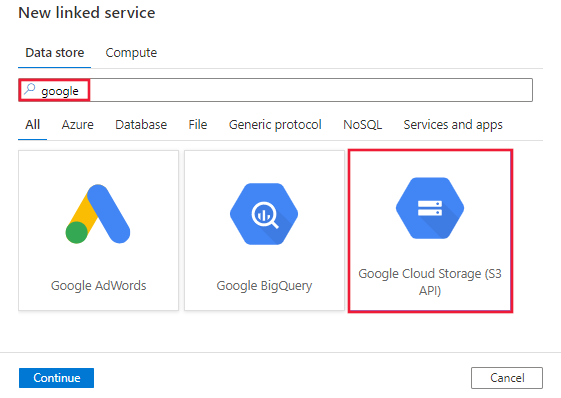

Search for "Google" and select the Google Cloud Storage (S3 API) connector.

Configure the service details, test the connection, and create the new linked service.

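Connector configuration details

The following sections provide details about the properties that are used to define Data Factory entities specific to Google Cloud Storage.

Linked service properties

The following properties are supported for Google Cloud Storage linked services:

| Property | Description | Required |
|---|---|---|
| type | The type property must be set to GoogleCloudStorage. | Yes |
| accessKeyId | ID of the secret access key. To find the access key and secret, see Prerequisites. | Yes |
| secretAccessKey | The secret access key itself. Mark this field as SecureString to store it securely, or reference a secret stored in Azure Key Vault. | Yes |
| serviceUrl | Specify the custom GCS endpoint as https://storage.googleapis.com. | Yes |
| connectVia | The integration runtime to be used to connect to the data store. You can use the Azure integration runtime or the self-hosted integration runtime (if your data store is in a private network). If this property isn't specified, the service uses the default Azure integration runtime. | No |

Here's an example: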

    {
        "name": "GoogleCloudStorageLinkedService",
        "properties": {
            "type": "GoogleCloudStorage",
            "typeProperties": {
                "accessKeyId": "<access key id>",
                "secretAccessKey": {
                    "type": "SecureString",
                    "value": "<secret access key>"
                },
                "serviceUrl": "https://storage.googleapis.com"
            },
            "connectVia": {
                "referenceName": "<name of Integration Runtime>",
                "type": "IntegrationRuntimeReference"
            }
        }
    }
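The properties table mentions that secretAccessKey can reference a secret stored in Azure Key Vault instead of an inline SecureString. A minimal sketch of that variant follows. It assumes an Azure Key Vault linked service already exists; the placeholder names in angle brackets are illustrative, not values from this article. connectVia is omitted here, so the default Azure integration runtime would be used.

```json
{
    "name": "GoogleCloudStorageLinkedService",
    "properties": {
        "type": "GoogleCloudStorage",
        "typeProperties": {
            "accessKeyId": "<access key id>",
            "secretAccessKey": {
                "type": "AzureKeyVaultSecret",
                "store": {
                    "referenceName": "<Azure Key Vault linked service name>",
                    "type": "LinkedServiceReference"
                },
                "secretName": "<name of the secret that holds the secret access key>"
            },
            "serviceUrl": "https://storage.googleapis.com"
        }
    }
}
```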

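Dataset properties

Azure Data Factory supports the following file formats. Refer to each article for format-based settings.

The following properties are supported for Google Cloud Storage under location settings in a format-based dataset:

| Property | Description | Required |
|---|---|---|
| type | The type property under location in the dataset must be set to GoogleCloudStorageLocation. | Yes |
| bucketName | The GCS bucket name. | Yes |
| folderPath | The path to the folder under the given bucket. If you want to use a wildcard to filter folders, skip this setting and specify the wildcard in the activity source settings. | No |
| fileName | The file name under the given bucket and folder path. If you want to use a wildcard to filter files, skip this setting and specify the wildcard in the activity source settings. | No |

Example: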

    {
        "name": "DelimitedTextDataset",
        "properties": {
            "type": "DelimitedText",
            "linkedServiceName": {
                "referenceName": "<Google Cloud Storage linked service name>",
                "type": "LinkedServiceReference"
            },
            "schema": [ < physical schema, optional, auto retrieved during authoring > ],
            "typeProperties": {
                "location": {
                    "type": "GoogleCloudStorageLocation",
                    "bucketName": "bucketname",
                    "folderPath": "folder/subfolder"
                },
                "columnDelimiter": ",",
                "quoteChar": "\"",
                "firstRowAsHeader": true,
                "compressionCodec": "gzip"
            }
        }
    }
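The connector also supports copying files as is, without parsing them. One way this might be expressed is with a Binary dataset that points at a single object through the same location properties. This is a sketch under assumptions: the dataset name and the placeholder values are illustrative, and fileName is included only to show where a specific file would be named.

```json
{
    "name": "BinaryDataset",
    "properties": {
        "type": "Binary",
        "linkedServiceName": {
            "referenceName": "<Google Cloud Storage linked service name>",
            "type": "LinkedServiceReference"
        },
        "typeProperties": {
            "location": {
                "type": "GoogleCloudStorageLocation",
                "bucketName": "<bucket name>",
                "folderPath": "<folder/subfolder>",
                "fileName": "<file name>"
            }
        }
    }
}
```

Leaving out fileName (and, if needed, folderPath) keeps the dataset at folder or bucket level, which is the shape the wildcard and file list options in the copy source expect.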

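Copy activity properties

For a full list of sections and properties available for defining activities, see the Pipelines article. This section provides a list of properties that the Google Cloud Storage source supports.

Google Cloud Storage as a source type

Azure Data Factory supports the following file formats. Refer to each article for format-based settings.

The following properties are supported for Google Cloud Storage under storeSettings settings in a format-based copy source: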

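| Property | Description | Required |
|---|---|---|
| type | The type property under storeSettings must be set to GoogleCloudStorageReadSettings. | Yes |
| Locate the files to copy: | | |
| Option 1: static path | Copy from the given bucket or folder/file path specified in the dataset. If you want to copy all files from a bucket or folder, additionally specify wildcardFileName as *. | |
| Option 2: GCS prefix - prefix | Prefix for the GCS key name under the given bucket configured in the dataset, used to filter source GCS files. GCS keys whose names start with bucket_in_dataset/this_prefix are selected. This option uses GCS's service-side filter, which provides better performance than a wildcard filter. | No |
| Option 3: wildcard - wildcardFolderPath | The folder path with wildcard characters under the given bucket configured in the dataset, used to filter source folders. Allowed wildcards are: * (matches zero or more characters) and ? (matches zero or a single character). Use ^ to escape if your folder name contains a wildcard or this escape character. See more examples in Folder and file filter examples. | No |
| Option 3: wildcard - wildcardFileName | The file name with wildcard characters under the given bucket and folder path (or wildcard folder path), used to filter source files. Allowed wildcards are: * (matches zero or more characters) and ? (matches zero or a single character). Use ^ to escape if your file name contains a wildcard or this escape character. See more examples in Folder and file filter examples. | Yes |
| Option 4: a list of files - fileListPath | Indicates to copy a given file set. Point to a text file that lists the files you want to copy, one file per line, each given as a path relative to the path configured in the dataset. When you use this option, don't specify a file name in the dataset. See more examples in File list examples. | No |
| Additional settings: | | |
| recursive | Indicates whether the data is read recursively from the subfolders or only from the specified folder. When recursive is set to true and the sink is a file-based store, an empty folder or subfolder isn't copied or created at the sink. Allowed values are true (default) and false. This property doesn't apply when you configure fileListPath. | No |
| deleteFilesAfterCompletion | Indicates whether the binary files are deleted from the source store after they are successfully moved to the destination store. Deletion happens per file, so when the Copy activity fails, some files will already have been copied to the destination and deleted from the source, while others still remain in the source store. This property is valid only in the binary file copy scenario. Default value: false. | No |
| modifiedDatetimeStart | Files are filtered based on the Last Modified attribute. A file is selected if its last modified time is greater than or equal to modifiedDatetimeStart and less than modifiedDatetimeEnd. The time is applied to the UTC time zone in the format "2018-12-01T05:00:00Z". The properties can be NULL, which means no file attribute filter is applied to the dataset. When modifiedDatetimeStart has a datetime value but modifiedDatetimeEnd is NULL, files whose last modified attribute is greater than or equal to that datetime value are selected. When modifiedDatetimeEnd has a datetime value but modifiedDatetimeStart is NULL, files whose last modified attribute is less than that datetime value are selected. This property doesn't apply when you configure fileListPath. | No |
| modifiedDatetimeEnd | Same as above. | No |
| enablePartitionDiscovery | For files that are partitioned, specify whether to parse the partitions from the file path and add them as additional source columns. Allowed values are false (default) and true. | No |
| partitionRootPath | When partition discovery is enabled, specify the absolute root path in order to read partitioned folders as data columns. If it isn't specified, by default: (a) when you use the file path in the dataset or a list of files on the source, the partition root path is the path configured in the dataset; (b) when you use a wildcard folder filter, the partition root path is the subpath before the first wildcard. For example, assume you configure the path in the dataset as "root/folder/year=2020/month=08/day=27": if you specify the partition root path as "root/folder/year=2020", the Copy activity generates two more columns, month and day, with the values "08" and "27" respectively, in addition to the columns inside the files; if the partition root path isn't specified, no extra column is generated. | No |
| maxConcurrentConnections | The upper limit of concurrent connections established to the data store during the activity run. Specify a value only when you want to limit concurrent connections. | No |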
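To make the partition discovery settings concrete, here is a small storeSettings fragment that follows the partitionRootPath example from the table. It is a sketch, not a complete source definition, and it assumes the dataset path is configured as "root/folder/year=2020/month=08/day=27".

```json
{
    "storeSettings": {
        "type": "GoogleCloudStorageReadSettings",
        "recursive": true,
        "enablePartitionDiscovery": true,
        "partitionRootPath": "root/folder/year=2020"
    }
}
```

With these values, the behavior described in the table would add the columns month ("08") and day ("27") to the columns read from the files.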

Example:

    "activities":[
        {
            "name": "CopyFromGoogleCloudStorage",
            "type": "Copy",
            "inputs": [
                {
                    "referenceName": "<Delimited text input dataset name>",
                    "type": "DatasetReference"
                }
            ],
            "outputs": [
                {
                    "referenceName": "<output dataset name>",
                    "type": "DatasetReference"
                }
            ],
            "typeProperties": {
                "source": {
                    "type": "DelimitedTextSource",
                    "formatSettings":{
                        "type": "DelimitedTextReadSettings",
                        "skipLineCount": 10
                    },
                    "storeSettings":{
                        "type": "GoogleCloudStorageReadSettings",
                        "recursive": true,
                        "wildcardFolderPath": "myfolder*A",
                        "wildcardFileName": "*.csv"
                    }
                },
                "sink": {
                    "type": "<sink type>"
                }
            }
        }
    ]
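The example above uses the wildcard option. As a contrast, the following sketch shows how the prefix option might be combined with the last-modified filter in the same source block. The prefix value and the modifiedDatetimeEnd timestamp are illustrative assumptions; only the timestamp format comes from the properties table.

```json
{
    "source": {
        "type": "DelimitedTextSource",
        "storeSettings": {
            "type": "GoogleCloudStorageReadSettings",
            "prefix": "<key name prefix under the bucket in the dataset>",
            "modifiedDatetimeStart": "2018-12-01T05:00:00Z",
            "modifiedDatetimeEnd": "2018-12-02T05:00:00Z"
        }
    }
}
```

Because the start bound is inclusive and the end bound is exclusive, this window would select files last modified from 05:00 UTC on December 1 up to, but not including, 05:00 UTC on December 2.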

Folder and file filter examples

This section describes the resulting behavior of the folder path and file name with wildcard filters.

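| Bucket | Key | recursive | Source folder structure and filter result (files in bold are retrieved) |
|---|---|---|---|
| Bucket | Folder*/* | false | Bucket: FolderA/**File1.csv**, FolderA/**File2.json**, FolderA/Subfolder1/File3.csv, FolderA/Subfolder1/File4.json, FolderA/Subfolder1/File5.csv, AnotherFolderB/File6.csv |
| Bucket | Folder*/* | true | Bucket: FolderA/**File1.csv**, FolderA/**File2.json**, FolderA/Subfolder1/**File3.csv**, FolderA/Subfolder1/**File4.json**, FolderA/Subfolder1/**File5.csv**, AnotherFolderB/File6.csv |
| Bucket | Folder*/*.csv | false | Bucket: FolderA/**File1.csv**, FolderA/File2.json, FolderA/Subfolder1/File3.csv, FolderA/Subfolder1/File4.json, FolderA/Subfolder1/File5.csv, AnotherFolderB/File6.csv |
| Bucket | Folder*/*.csv | true | Bucket: FolderA/**File1.csv**, FolderA/File2.json, FolderA/Subfolder1/**File3.csv**, FolderA/Subfolder1/File4.json, FolderA/Subfolder1/**File5.csv**, AnotherFolderB/File6.csv |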
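As a rough illustration, the row with key Folder*/*.csv and recursive set to false might be written with the storeSettings properties as follows. This assumes the folder part of the key maps to wildcardFolderPath and the file part to wildcardFileName, with the bucket itself configured in the dataset.

```json
{
    "storeSettings": {
        "type": "GoogleCloudStorageReadSettings",
        "recursive": false,
        "wildcardFolderPath": "Folder*",
        "wildcardFileName": "*.csv"
    }
}
```

Against the sample structure, this filter would be expected to pick up only File1.csv in FolderA: AnotherFolderB doesn't start with "Folder", and Subfolder1 is skipped because recursive is false.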

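File list examples

This section describes the resulting behavior of using a file list path in the Copy activity source.

Assume that you have the following source folder structure and want to copy the files in bold: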

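| Sample source structure | Content in FileListToCopy.txt | Configuration |
|---|---|---|
| bucket: FolderA/**File1.csv**, FolderA/File2.json, FolderA/Subfolder1/**File3.csv**, FolderA/Subfolder1/File4.json, FolderA/Subfolder1/**File5.csv**, Metadata/FileListToCopy.txt | One entry per line: File1.csv, Subfolder1/File3.csv, Subfolder1/File5.csv | In the dataset: bucket: bucket; folder path: FolderA. In the Copy activity source: file list path: bucket/Metadata/FileListToCopy.txt. The file list path points to a text file in the same data store that lists the files you want to copy, one file per line, each given as a path relative to the path configured in the dataset. |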
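The configuration in the table can be sketched as the following source fragment. It assumes the dataset location already sets bucketName to "bucket" and folderPath to "FolderA", with no file name specified.

```json
{
    "storeSettings": {
        "type": "GoogleCloudStorageReadSettings",
        "fileListPath": "bucket/Metadata/FileListToCopy.txt"
    }
}
```

Each line in FileListToCopy.txt is then resolved relative to FolderA, so Subfolder1/File3.csv refers to the object FolderA/Subfolder1/File3.csv in the bucket.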

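Lookup activity properties

To learn details about the properties, check Lookup activity.

GetMetadata activity properties

To learn details about the properties, check GetMetadata activity.

Delete activity properties

To learn details about the properties, check Delete activity.

Legacy models

If you were using an Amazon S3 connector to copy data from Google Cloud Storage, it's still supported as is for backward compatibility. We suggest that you use the new model mentioned earlier. The authoring UI has switched to generating the new model.

Related content

For a list of data stores that the Copy activity supports as sources and sinks, see Supported data stores.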