适用于: Azure 数据工厂

Azure 数据工厂  Azure Synapse Analytics

Azure Synapse Analytics

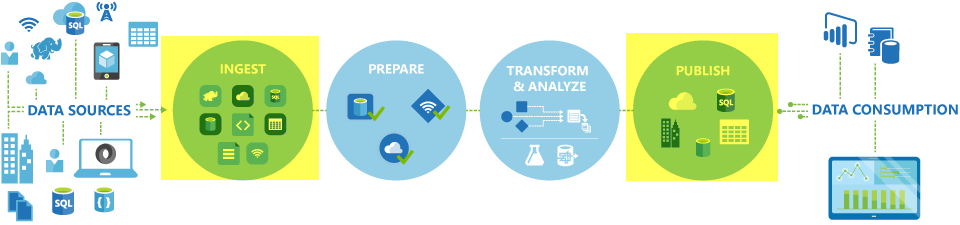

在 Azure 数据工厂和 Synapse 管道中,可使用复制活动在本地与云数据存储之间复制数据。 复制数据后,可以使用其他活动进一步转换和分析数据。 还可使用复制操作发布转换和分析结果,以供商业智能 (BI) 和应用程序使用。

复制活动在集成运行时上执行。 对于不同的数据复制方案,可以使用不同类型的集成运行时:

- 在两个通过互联网从任何 IP 公开访问的数据存储之间复制数据时,可以使用 Azure 集成运行时进行复制活动。 此集成运行时较为安全可靠、可缩放并全局可用。

- 向/从本地数据存储或位于具有访问控制的网络(如 Azure 虚拟网络)中的数据存储复制数据时,需要安装自承载集成运行时。

集成运行时需要与每个源数据存储和接收器数据存储相关联。 有关复制活动如何确定要使用的集成运行时的信息,请参阅确定要使用哪个 IR。

注意

不能在同一复制活动中使用多个自承载集成运行时。 活动的源和汇必须通过相同的自承载集成运行时进行连接。

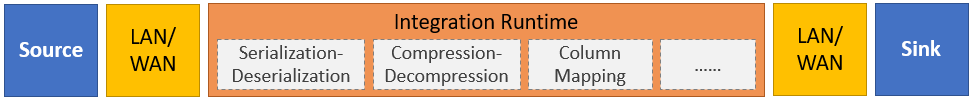

若要将数据从源复制到接收器,运行复制活动的服务将执行以下步骤:

- 读取源数据存储中的数据。

- 执行序列化/反序列化、压缩/解压缩、列映射等。 此服务基于输入数据集、输出数据集和复制活动的配置执行这些操作。

- 将数据写入汇聚点或目标数据存储。

注意

如果在复制活动的源数据存储或接收器数据存储中使用了自承载集成运行时,则必须可从托管集成运行时的服务器同时访问源和接收器,这样复制活动才能成功。

支持的数据存储和格式

注意

如果连接器标记为“预览”,可以试用它并给我们反馈。 若要在解决方案中使用预览版连接器的依赖项,请联系 Azure 支持。

支持的文件格式

Azure 数据工厂支持以下文件格式。 请参阅每一篇介绍基于格式的设置的文章。

- Avro 格式

- 二进制格式

- 带分隔符的文本格式

- Excel 格式

- Iceberg 格式(仅适用于 Azure Data Lake Storage Gen2)

- JSON 格式

- ORC 格式

- Parquet 格式

- XML 格式

可以使用复制活动在两个基于文件的数据存储之间按原样复制文件,在这种情况下,无需任何序列化或反序列化即可高效复制数据。 此外,还可以分析或生成给定格式的文件。例如,可以执行以下操作:

- 从 SQL Server 数据库复制数据,并将数据以 Parquet 格式写入 Azure Data Lake Storage Gen2。

- 从本地文件系统中复制文本 (CSV) 格式文件,并将其以 Avro 格式写入 Azure Blob 存储。

- 从本地文件系统复制压缩文件,动态解压缩,然后将提取的文件写入 Azure Data Lake Storage Gen2。

- 从 Azure Blob 存储复制 Gzip 压缩文本 (CSV) 格式的数据,并将其写入 Azure SQL 数据库。

- 需要序列化/反序列化或压缩/解压缩的其他许多活动。

支持的区域

支持复制活动的服务在Azure Integration Runtime 位置中所列的区域和地理位置上全球可用。 全局可用拓扑可确保高效的数据移动,此类移动通常避免跨区域跃点。 请参阅 “按区域分类的产品 ”,以检查特定区域中数据工厂、Synapse 工作区和数据移动的可用性。

配置

若要使用管道执行复制活动,可以使用以下工具或 SDK 之一:

通常,若要使用 Azure 数据工厂或 Synapse 管道中的复制活动,需要执行以下操作:

- 创建用于源数据存储和接收器数据存储的链接服务。 在本文的支持的数据存储和格式部分可以找到支持的连接器列表。 有关配置信息和支持的属性,请参阅连接器文章的“链接服务属性”部分。

- 为源和接收器创建数据集。 有关配置信息和支持的属性,请参阅源和接收器连接器文章的“数据集属性”部分。

- 创建包含复制活动的管道。 接下来的部分将提供示例。

语法

以下复制活动模板包含受支持属性的完整列表。 指定符合你的情境的选项。

"activities":[

{

"name": "CopyActivityTemplate",

"type": "Copy",

"inputs": [

{

"referenceName": "<source dataset name>",

"type": "DatasetReference"

}

],

"outputs": [

{

"referenceName": "<sink dataset name>",

"type": "DatasetReference"

}

],

"typeProperties": {

"source": {

"type": "<source type>",

<properties>

},

"sink": {

"type": "<sink type>"

<properties>

},

"translator":

{

"type": "TabularTranslator",

"columnMappings": "<column mapping>"

},

"dataIntegrationUnits": <number>,

"parallelCopies": <number>,

"enableStaging": true/false,

"stagingSettings": {

<properties>

},

"enableSkipIncompatibleRow": true/false,

"redirectIncompatibleRowSettings": {

<properties>

}

}

}

]

语法详细信息

| 属性 | 说明 | 必需? |

|---|---|---|

| 类型 | 对于复制活动,请设置为 Copy |

是 |

| 输入 | 指定您创建的用于指向源数据的数据集。 复制活动仅支持单个输入。 | 是 |

| 输出 | 指定您创建的指向目标数据的数据集。 复制活动仅支持单个输出。 | 是 |

| type属性 | 指定用于配置复制活动的属性。 | 是 |

| 源 | 指定复制源类型以及用于检索数据的相应属性。 有关详细信息,请参阅受支持的数据存储和格式中所列的连接器文章中的“复制活动属性”部分。 |

是 |

| 接收器 | 指定复制汇聚点类型以及用于写入数据的相应属性。 有关详细信息,请参阅受支持的数据存储和格式中所列的连接器文章中的“复制活动属性”部分。 |

是 |

| 翻译器 | 指定从源到接收器的显式列映射。 当默认复制行为无法满足需求时,此属性适用。 有关详细信息,请参阅复制活动中的架构映射。 |

否 |

| 数据集成单元 | 指定一个度量值,用于表示 Azure Integration Runtime 在复制数据时的算力。 这些单位以前称为云数据移动单位 (DMU)。 有关详细信息,请参阅数据集成单位。 |

否 |

| 并行副本 | 指定从源读取数据和向接收器写入数据时想要复制活动使用的并行度。 有关详细信息,请参阅并行复制。 |

否 |

| 保存 | 指定在数据复制期间是否保留元数据/ACL。 有关详细信息,请参阅保留元数据。 |

否 |

| enableStaging stagingSettings |

指定是否将临时数据分阶段存储在 Blob 存储中,而不是将数据直接从源复制到接收器。 有关有用的方案和配置详细信息,请参阅分阶段复制。 |

否 |

| 启用跳过不兼容行 重定向不兼容的行设置 |

选择将数据从源复制到接收器时如何处理不兼容的行。 有关详细信息,请参阅容错。 |

否 |

监视

可以通过直观的用户界面和编程方式监控在 Azure 数据工厂和 Synapse 管道中运行的复制活动。 有关详细信息,请参阅监控复制活动。

增量复制

通过数据工厂和 Synapse 管道,可以递增方式将增量数据从源数据存储复制到目标数据存储。 有关详细信息,请参阅教程:以增量方式复制数据。

性能和优化

复制活动监视功能向你显示每个活动执行的复制性能统计信息。 复制活动性能和可伸缩性指南介绍通过复制活动影响数据移动性能的关键因素。 其中还列出了在测试期间观测到的性能值,并介绍了如何优化复制活动的性能。

从上次失败的运行恢复

复制活动支持在基于文件的存储之间复制具有二进制格式的文件 as-is,并选择保留从源到接收器的文件夹/文件层次结构(例如,将数据从 Amazon S3 迁移到 Azure Data Lake Storage Gen2)时,复制活动支持从上次失败运行恢复。 它适用于以下基于文件的连接器: Amazon S3、Amazon S3兼容存储Azure Blob、 Azure Data Lake Storage Gen2、 Azure 文件存储、 文件系统、 FTP、 Google 云存储、 HDFS、 Oracle 云存储和SFTP。

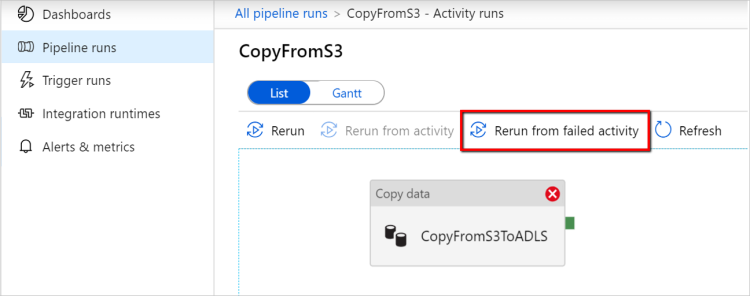

可以通过以下两种方式使用复制活动恢复进度:

活动级别重试: 你可以在复制活动中设置重试次数。 在管道执行期间,如果此复制活动运行失败,则下一次自动重试从上次试用的失败点开始。

从失败的活动重新运行: 管道执行完成后,还可以通过 ADF UI 监视视图或编程方式触发从失败的活动重新运行的操作。 如果失败的活动是复制活动,则管道不仅会从此活动重新运行,而且还会从上一个运行的故障点恢复。

要注意的几点:

- 恢复发生在文件级别。 如果复制活动在复制文件时失败,下一次运行时会重新复制此特定文件。

- 若要继续正常工作,请勿更改复制活动在重新运行时的设置。

- 从 Amazon S3、Azure Blob、Azure Data Lake Storage Gen2 和 Google Cloud Storage 复制数据时,复制活动可以从任意数量已复制的文件恢复。 尽管对于其他基于文件的连接器作为源,复制活动目前只支持从有限数量的文件中恢复,通常为数万个,并且根据文件路径的长度有所不同;超过此数量的文件将在重新运行时被重新复制。

对于二进制文件复制以外的其他情况,将从头开始重新运行复制活动。

注意

目前,只有自承载集成运行时版本 5.43.8935.2 或更高版本支持通过自承载集成运行时从上次失败的运行中恢复。

保留元数据和数据

在将数据从源复制到目标接收器时,例如在数据湖迁移的场景中,还可以选择使用复制活动来同时保存元数据和访问控制列表(ACL),以及相关的数据。 有关详细信息,请参阅保留元数据。

将元数据标记添加到基于文件的接收器

当接收器基于 Azure 存储(Azure 数据湖存储或 Azure Blob 存储)时,我们可以选择向文件添加一些元数据。 这些元数据将以键值对的形式作为文件属性的一部分出现。 对于所有类型的基于文件的接收器,可以使用管道参数、系统变量、函数和变量添加涉及动态内容的元数据。 除此之外,对于基于二进制文件的接收器,可以选择使用关键字 $$LASTMODIFIED 添加上次修改日期/时间,并将自定义值作为元数据添加到接收器文件。

架构和数据类型映射

请参阅架构和数据类型映射,以了解复制活动如何将源数据映射到接收器。

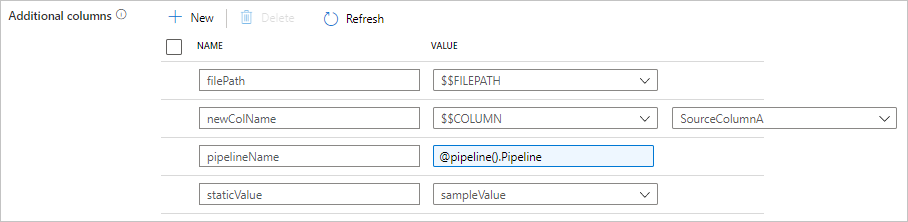

在复制过程中添加其他列

除了将数据从源数据存储复制到接收器外,还可以进行配置,以便添加要一起复制到接收器的其他数据列。 例如:

- 从基于文件的源进行复制时,将相对文件路径存储为字符串类型的附加列,以便跟踪数据的来源文件。

- 将指定的源列复制为另一列。

- 添加包含 ADF 表达式的列,以附加 ADF 系统变量(例如管道名称/管道 ID),或存储来自上游活动输出的其他动态值。

- 添加一个包含静态值的列以满足下游消耗需求。

可以在“复制活动源”选项卡上找到以下配置。也可以照常使用定义的列名在复制活动架构映射中映射这些附加列。

提示

此功能适用于最新的数据集模型。 如果在 UI 中未看到此选项,请尝试创建一个新数据集。

若要以编程方式对其进行配置,请在复制活动源中添加 additionalColumns 属性:

| 属性 | 说明 | 必填 |

|---|---|---|

| 附加列 | 添加要复制到接收器的其他数据列。additionalColumns 数组下的每个对象都表示一个额外的列。

name 定义列名称,value 表示该列的数据值。允许的数据值为: - $$FILEPATH - 一个保留变量,指示将源文件的相对路径存储在数据集中指定的文件夹路径。 应用于基于文件的源。- $$COLUMN:<source_column_name> - 保留变量模式指示将指定的源列复制为另一个列- 表达式 - 静态值 |

否 |

示例:

"activities":[

{

"name": "CopyWithAdditionalColumns",

"type": "Copy",

"inputs": [...],

"outputs": [...],

"typeProperties": {

"source": {

"type": "<source type>",

"additionalColumns": [

{

"name": "filePath",

"value": "$$FILEPATH"

},

{

"name": "newColName",

"value": "$$COLUMN:SourceColumnA"

},

{

"name": "pipelineName",

"value": {

"value": "@pipeline().Pipeline",

"type": "Expression"

}

},

{

"name": "staticValue",

"value": "sampleValue"

}

],

...

},

"sink": {

"type": "<sink type>"

}

}

}

]

提示

配置其他列后,请记得在“映射”选项卡中将其映射到你的目标接收器。

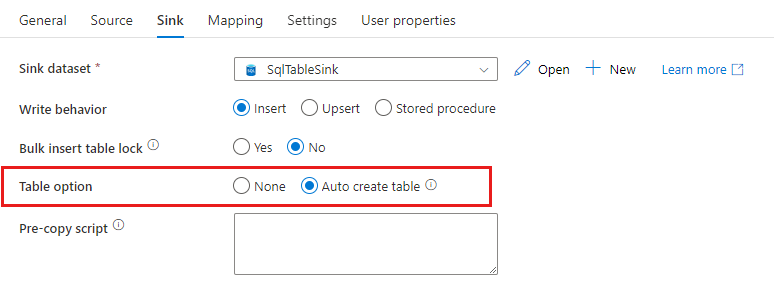

自动创建接收器表

将数据复制到 SQL 数据库/Azure Synapse Analytics 时,如果目标表不存在,复制活动支持基于源数据自动创建它。 它旨在帮助快速开始加载数据并评估 SQL 数据库/Azure Synapse Analytics。 在数据导入后,可以根据需要查看和调整汇聚表的架构。

将数据从任何源复制到以下接收器数据存储时,支持此功能。 可以在“ADF 创作 UI”–>“复制活动接收器”–>“表选项”–>“自动创建表”上,或通过复制活动接收器有效负载中的 tableOption 属性找到该选项。

容错

默认情况下,如果源数据行与接收器数据行不兼容,复制活动将停止复制数据,并返回失败结果。 要使复制成功,可将复制活动配置为跳过并记录不兼容的行,仅复制兼容的数据。 有关详细信息,请参阅数据复制容错机制。

数据一致性验证

将数据从源移动到目标存储时,复制活动提供了一个选项,用于执行额外的数据一致性验证,以确保数据不仅成功地从源存储复制到目标存储,而且验证在源存储与目标存储之间保持一致。 在数据移动过程中发现不一致的文件后,可以中止复制活动,或者通过启用容错设置跳过不一致的文件来继续复制其余文件。 通过在复制活动中启用会话日志设置,可以获取跳过的文件名称。 有关详细信息,请参阅复制活动中的数据一致性验证。

会话日志

可以记录复制的文件名,这有助于进一步确保数据不仅从源存储成功复制到目标存储,还可以通过查看复制活动会话日志在源和目标存储之间保持一致。 有关详细信息,请参阅复制活动中的会话日志。

相关内容

请参阅以下快速入门、教程和示例: