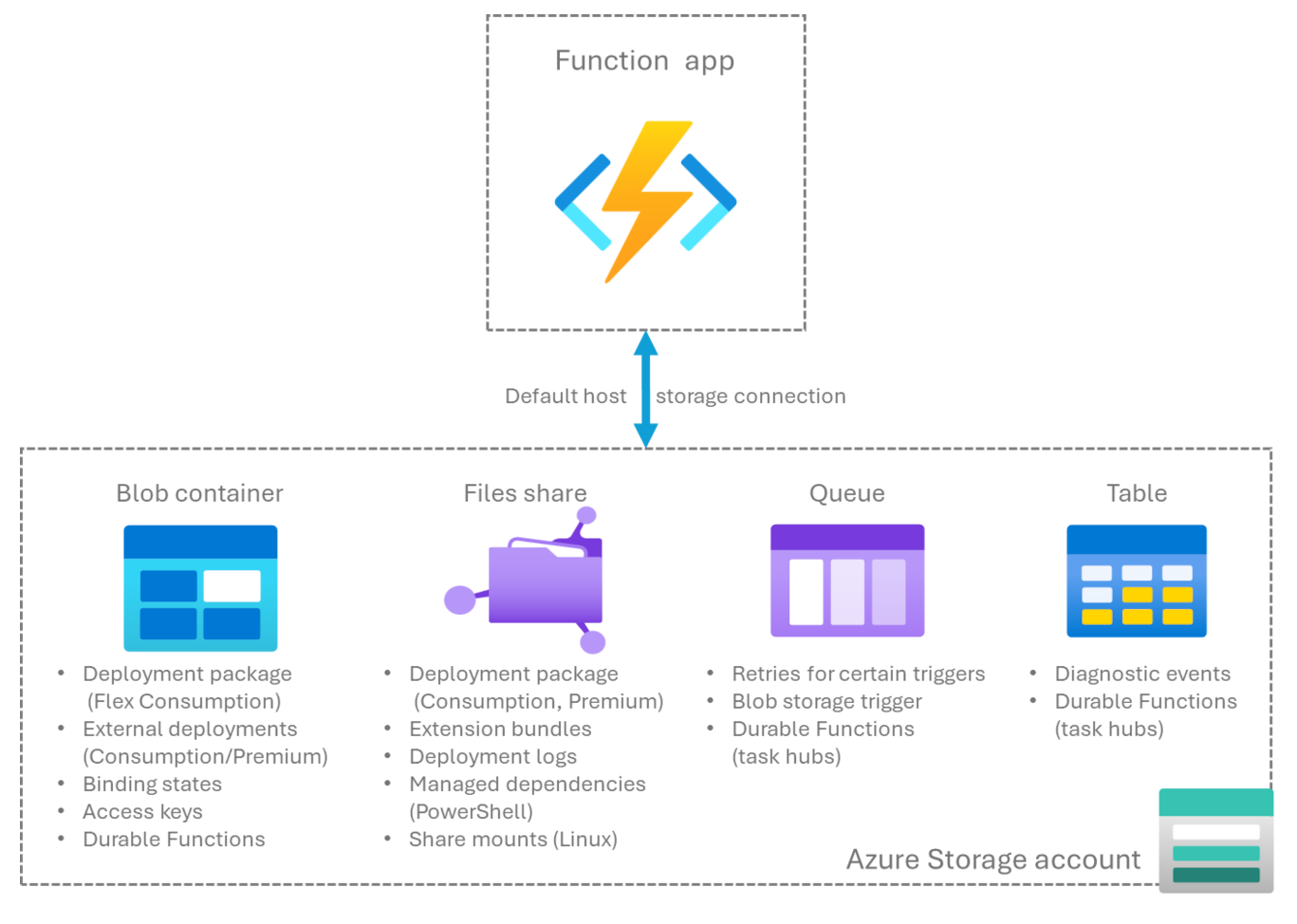

When you create a function app instance in Azure, you must provide access to a default Azure Storage account. The following diagram and table detail how Azure Functions uses services in the default storage account:

| Storage service | Functions usage |
|---|---|
| Azure Blob storage | Maintain bindings state and function keys¹. Used by default for task hubs in Durable Functions. Can be used to store function app code for Linux Consumption remote build or as part of external package URL deployments. |
| Azure Files² | File share used to store and run your function app code in a Consumption Plan and Premium Plan. Maintain extension bundles. Store deployment logs. Supports Managed dependencies in PowerShell. |
| Azure Queue storage | Used by default for task hubs in Durable Functions. Used for failure and retry handling in specific Azure Functions triggers. Used for object tracking by the Blob storage trigger. |
| Azure Table storage | Used by default for task hubs in Durable Functions. |

¹ Blob storage is the default store for function keys, but you can configure an alternate store.

² Azure Files is set up by default, but you can create an app without Azure Files under certain conditions.

Important considerations

You must strongly consider the following facts regarding the storage accounts used by your function apps:

- When your function app is hosted on the Consumption plan or Premium plan, your function code and configuration files are stored in Azure Files in the linked storage account. When you delete this storage account, the content is deleted and can't be recovered. For more information, see Storage account was deleted.

- Important data, such as function code, access keys, and other important service-related data, persist in the storage account. You must carefully manage access to the storage accounts used by function apps in the following ways:

  - Audit and limit the access of apps and users to the storage account based on a least-privilege model. Permissions to the storage account can come from data actions in the assigned role or through permission to perform the listKeys operation.

  - Monitor both control plane activity (such as retrieving keys) and data plane operations (such as writing to a blob) in your storage account. Consider maintaining storage logs in a location other than Azure Storage. For more information, see Storage logs.

Storage account requirements

Storage accounts that you create during the function app creation process in the Azure portal work with the new function app. When you choose to use an existing storage account, the list provided doesn't include certain unsupported storage accounts. The following restrictions apply to storage accounts used by your function app. Make sure an existing storage account meets these requirements:

- The account type must support Blob, Queue, and Table storage. Some storage accounts don't support queues and tables. These accounts include blob-only storage accounts and Azure Premium Storage. To learn more about storage account types, see Storage account overview.

- You can't use a network-secured storage account when your function app is hosted in the Consumption plan.

- When you create your function app in the Azure portal, you can only choose an existing storage account in the same region as the function app that you create. This requirement is a performance optimization and not a strict limitation. To learn more, see Storage account location.

- When you create your function app on a plan with availability zone support enabled, only zone-redundant storage accounts are supported.

- When you use deployment automation to create your function app with a network-secured storage account, you must include specific networking configurations in your ARM template or Bicep file. If you don't include these settings and resources, your automated deployment might fail in validation. For ARM template and Bicep guidance, see Secured deployments. For an overview on configuring storage accounts with networking, see How to use a secured storage account with Azure Functions.

Storage account guidance

Every function app requires a storage account to operate. When you delete that account, your function app stops running. To troubleshoot storage-related issues, see How to troubleshoot storage-related issues. The following considerations apply to the storage account used by function apps.

Storage account location

For best performance, your function app should use a storage account in the same region, which reduces latency. The Azure portal enforces this best practice. If you need to use a storage account in a region different from your function app, you must create your function app outside of the Azure portal.

The storage account must be accessible to the function app. If you need to use a secured storage account, consider restricting your storage account to a virtual network.

Storage account connection setting

By default, function apps configure the AzureWebJobsStorage connection as a connection string stored in the AzureWebJobsStorage application setting. You can also configure AzureWebJobsStorage to use an identity-based connection without a secret.

Function apps running in a Consumption plan (Windows only) or an Elastic Premium plan (Windows or Linux) can use Azure Files to store the images required to enable dynamic scaling. For these plans, set the connection string for the storage account in the WEBSITE_CONTENTAZUREFILECONNECTIONSTRING setting and the name of the file share in the WEBSITE_CONTENTSHARE setting. This storage account is usually the same one used for AzureWebJobsStorage. You can also create a function app that doesn't use Azure Files, but scaling might be limited.

Note

You must update a storage account connection string when you regenerate storage keys. For more information, see Create an Azure storage account.
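As an illustration of what updating a connection string after key regeneration involves, the following Python sketch rewrites the AccountKey segment in every storage-related app setting that points at a given account. It assumes the app settings are available as a plain dictionary and that the connection strings use the common `Key=Value;Key=Value` layout. The helper names, account name, and key values are hypothetical, and the sketch doesn't call any Azure API.

```python
# Settings named in this article that can hold a storage connection string.
STORAGE_CONNECTION_SETTINGS = (
    "AzureWebJobsStorage",
    "WEBSITE_CONTENTAZUREFILECONNECTIONSTRING",
)


def parse_connection_string(value: str) -> dict[str, str]:
    """Split 'Key=Value;Key=Value' segments into a dictionary.

    Only the first '=' separates key from value, because base64 account
    keys usually end in '=' padding characters.
    """
    parts: dict[str, str] = {}
    for segment in value.split(";"):
        if not segment:
            continue
        key, _, val = segment.partition("=")
        parts[key] = val
    return parts


def replace_account_key(value: str, new_key: str) -> str:
    """Return the connection string with its AccountKey replaced."""
    parts = parse_connection_string(value)
    parts["AccountKey"] = new_key
    return ";".join(f"{k}={v}" for k, v in parts.items())


def update_app_settings(
    settings: dict[str, str], account_name: str, new_key: str
) -> dict[str, str]:
    """Return a copy of the settings with the new key applied to every
    storage connection string that targets the given account."""
    updated = dict(settings)
    for name in STORAGE_CONNECTION_SETTINGS:
        current = settings.get(name)
        if current and parse_connection_string(current).get("AccountName") == account_name:
            updated[name] = replace_account_key(current, new_key)
    return updated
```

Settings that use an identity-based connection contain no account key, so the sketch leaves them unchanged.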

Shared storage accounts

Multiple function apps can share the same storage account without any problems. For example, in Visual Studio, you can develop multiple apps by using the Azurite storage emulator. In this case, the emulator acts like a single storage account. The same storage account that your function app uses can also store your application data. However, this approach isn't always a good idea in a production environment.

You might need to use separate storage accounts to avoid host ID collisions.

Lifecycle management policy considerations

Don't apply lifecycle management policies to your Blob Storage account used by your function app. Functions uses Blob storage to persist important information, such as function access keys. Policies could remove blobs, such as keys, needed by the Functions host. If you must use policies, exclude containers used by Functions, which are prefixed with azure-webjobs or scm.
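The exclusion rule above can be sketched as a small Python filter that separates containers used by Functions from containers that are safe to target with a lifecycle policy. The prefixes come from this article. The function names and the sample container names are illustrative assumptions, and the sketch doesn't create or modify any policy.

```python
# Prefixes of containers used by Functions, as stated in this article.
FUNCTIONS_CONTAINER_PREFIXES = ("azure-webjobs", "scm")


def is_functions_container(container_name: str) -> bool:
    """True when the container name starts with a prefix reserved for Functions."""
    return container_name.startswith(FUNCTIONS_CONTAINER_PREFIXES)


def containers_safe_for_lifecycle_policy(container_names: list[str]) -> list[str]:
    """Keep only containers that a lifecycle policy can target without
    risking blobs that the Functions host needs."""
    return [name for name in container_names if not is_functions_container(name)]
```

For example, given the hypothetical list `["azure-webjobs-hosts", "scm-releases", "invoices"]`, only `invoices` remains as a candidate for a policy rule.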

Storage logs

Because function code and keys might be persisted in the storage account, logging of activity against the storage account is a good way to monitor for unauthorized access. Azure Monitor resource logs can be used to track events against the storage data plane. See Monitoring Azure Storage for details on how to configure and examine these logs.

The Azure Monitor activity log shows control plane events, including the listKeys operation. However, you should also configure resource logs for the storage account to track subsequent use of keys or other identity-based data plane operations. You should have at least the StorageWrite log category enabled to be able to identify modifications to the data outside of normal Functions operations.

To limit the potential impact of any broadly scoped storage permissions, consider using a nonstorage destination for these logs, such as Log Analytics. For more information, see Monitoring Azure Blob Storage.

Optimize storage performance

To maximize performance, use a separate storage account for each function app. This approach is particularly important when you have Durable Functions or Event Hubs triggered functions, which both generate a high volume of storage transactions. When your application logic interacts with Azure Storage, either directly (using the Storage SDK) or through one of the storage bindings, you should use a dedicated storage account. For example, if you have an event hub-triggered function writing some data to blob storage, use two storage accounts: one for the function app and another for the blobs that the function stores.

Consistent routing through virtual networks

Multiple function apps hosted in the same plan can also use the same storage account for the Azure Files content share, defined by WEBSITE_CONTENTAZUREFILECONNECTIONSTRING. When you secure this storage account by using a virtual network, all of these apps (including slots) should use the same value for vnetContentShareEnabled (formerly WEBSITE_CONTENTOVERVNET) and the same virtual network integration configuration to ensure that traffic routes consistently through the intended virtual network. A mismatch in this setting between apps that use the same Azure Files storage account might result in traffic routing through public networks. In this configuration, storage account network rules block access.
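One way to catch the mismatch described above before deployment is to compare the relevant values across every app and slot that shares a content storage account. The following Python sketch assumes you have already collected those values into simple records. The `AppContentConfig` type, its field names, and the sample data are hypothetical and don't correspond to any Azure SDK type.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AppContentConfig:
    app_name: str                     # function app or slot name
    content_storage_account: str      # account behind the Azure Files content share
    vnet_content_share_enabled: bool  # value of vnetContentShareEnabled
    vnet_subnet_id: str               # virtual network integration target


def find_inconsistent_routing(apps: list[AppContentConfig]) -> dict[str, list[str]]:
    """Return, per storage account, the apps whose routing settings disagree."""
    by_account: dict[str, list[AppContentConfig]] = defaultdict(list)
    for app in apps:
        by_account[app.content_storage_account].append(app)

    problems: dict[str, list[str]] = {}
    for account, group in by_account.items():
        # Every app sharing the account should have one identical combination.
        distinct = {(a.vnet_content_share_enabled, a.vnet_subnet_id) for a in group}
        if len(distinct) > 1:
            problems[account] = sorted(a.app_name for a in group)
    return problems
```

An empty result means every group of apps sharing an account uses the same setting and the same integration target.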

Working with blobs

A key scenario for Functions is processing files in a blob container, such as for image processing or sentiment analysis. To learn more, see Process file uploads.

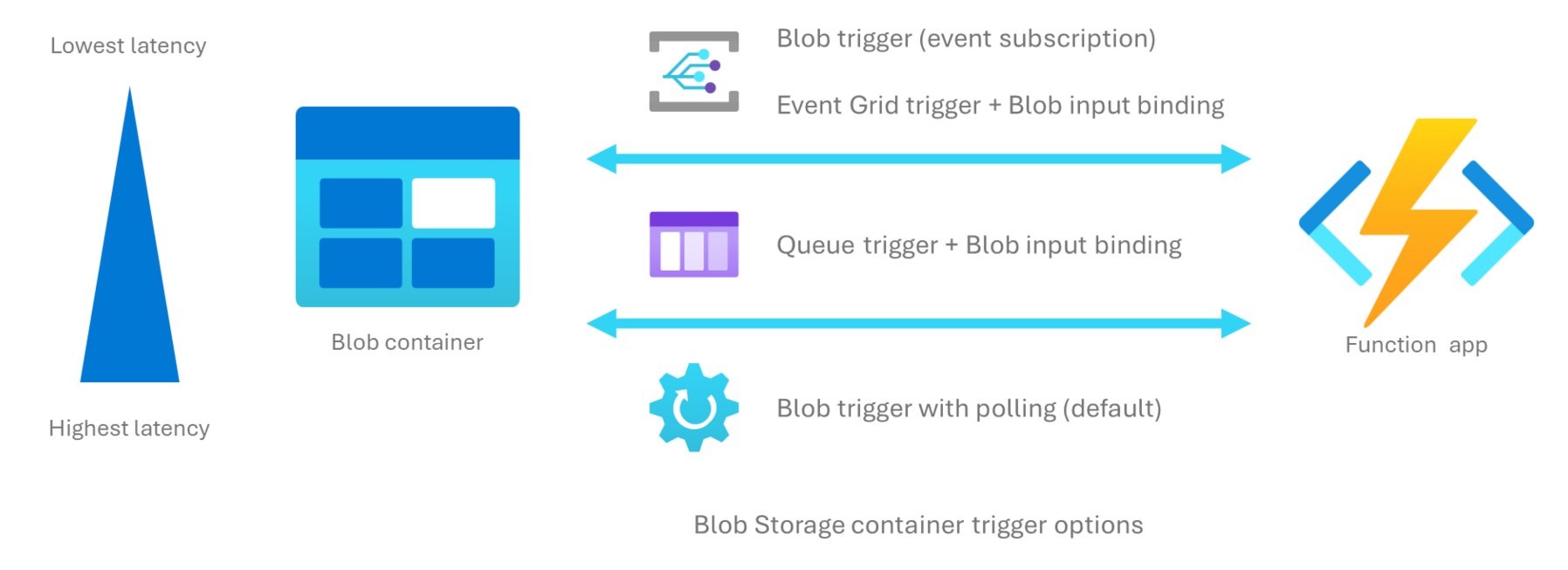

Trigger on a blob container

There are several ways to run your function code based on changes to blobs in a storage container, as indicated by this diagram:

Use the following table to determine which function trigger best fits your needs for processing added or updated blobs in a container:

| Strategy | Blob trigger (polling) | Blob trigger (event-driven) | Queue trigger | Event Grid trigger |
|---|---|---|---|---|
| Latency | High (up to 10 min) | Low | Medium | Low |
| Storage account limitations | Blob-only accounts not supported¹ | General-purpose v1 not supported | None | General-purpose v1 not supported |
| Trigger type | Blob storage | Blob storage | Queue storage | Event Grid |
| Extension version | Any | Storage v5.x+ | Any | Any |
| Processes existing blobs | Yes | No | No | No |
| Filters | Blob name pattern | Event filters | n/a | Event filters |
| Requires event subscription | No | Yes | No | Yes |
| Supports high-scale² | No | Yes | Yes | Yes |
| Works with inbound access restrictions | Yes | No | Yes | Yes³ |
| Description | Default trigger behavior, which relies on polling the container for updates. For more information, see the examples in the Blob storage trigger reference. | Consumes blob storage events from an event subscription. Requires a Source parameter value of EventGrid. For more information, see Tutorial: Trigger Azure Functions on blob containers using an event subscription. | The blob name string is manually added to a storage queue when a blob is added to the container. A Queue storage trigger passes this value directly to a Blob storage input binding on the same function. | Provides the flexibility of triggering on events besides those events that come from a storage container. Use when you also need nonstorage events to trigger your function. For more information, see How to work with Event Grid triggers and bindings in Azure Functions. |

¹ Blob storage input and output bindings support blob-only accounts.

² High scale can be loosely defined as containers that have more than 100,000 blobs in them or storage accounts that have more than 100 blob updates per second.

³ You can work around inbound access restrictions by having the event subscription deliver events over an encrypted channel in public IP space using a known user identity.
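To show how the table and its high-scale footnote might guide a choice, the following Python sketch encodes three of the table rows and filters the strategies by workload. The thresholds are the loose definition given in the footnote, not hard limits. The `TriggerStrategy` type and function names are hypothetical, and a real decision should also weigh the latency, filter, and access-restriction rows.

```python
from dataclasses import dataclass

# Loose high-scale thresholds from the footnote in this article.
HIGH_SCALE_BLOB_COUNT = 100_000
HIGH_SCALE_UPDATES_PER_SECOND = 100


@dataclass(frozen=True)
class TriggerStrategy:
    name: str
    processes_existing_blobs: bool
    supports_high_scale: bool
    requires_event_subscription: bool


# Values copied from the corresponding rows of the table above.
STRATEGIES = (
    TriggerStrategy("Blob trigger (polling)", True, False, False),
    TriggerStrategy("Blob trigger (event-driven)", False, True, True),
    TriggerStrategy("Queue trigger", False, True, False),
    TriggerStrategy("Event Grid trigger", False, True, True),
)


def is_high_scale(blob_count: int, updates_per_second: float) -> bool:
    """Apply the loose high-scale definition to a container or account."""
    return (
        blob_count > HIGH_SCALE_BLOB_COUNT
        or updates_per_second > HIGH_SCALE_UPDATES_PER_SECOND
    )


def candidate_strategies(
    blob_count: int,
    updates_per_second: float,
    must_process_existing_blobs: bool = False,
) -> list[str]:
    """Return the strategy names that fit the workload."""
    high_scale = is_high_scale(blob_count, updates_per_second)
    return [
        s.name
        for s in STRATEGIES
        if (s.supports_high_scale or not high_scale)
        and (s.processes_existing_blobs or not must_process_existing_blobs)
    ]
```

An empty result signals conflicting requirements, such as needing to process existing blobs in a high-scale container, which no single strategy in the table covers.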

Storage data encryption

Azure Storage encrypts all data in a storage account at rest. For more information, see Azure Storage encryption for data at rest.

By default, data is encrypted with Microsoft-managed keys. For more control over encryption keys, you can supply customer-managed keys to use for encryption of blob and file data. These keys must be present in Azure Key Vault for Functions to be able to access the storage account. To learn more, see Encrypt your application data at rest using customer-managed keys.

In-region data residency

When all customer data must remain within a single region, the storage account associated with the function app must be one with in-region redundancy. An in-region redundant storage account also must be used with Azure Durable Functions.

Host ID considerations

Functions uses a host ID value as a way to uniquely identify a particular function app in stored artifacts. By default, this ID is autogenerated from the name of the function app, truncated to the first 32 characters. This ID is then used when storing per-app correlation and tracking information in the linked storage account. When you have function apps with names longer than 32 characters and when the first 32 characters are identical, this truncation can result in duplicate host ID values. When two function apps with identical host IDs use the same storage account, you get a host ID collision because stored data can't be uniquely linked to the correct function app.

Note

This same kind of host ID collision can occur between a function app in a production slot and the same function app in a staging slot, when both slots use the same storage account.

When a host ID collision is detected in version 4.x of the Functions runtime, an error is logged and the host is stopped, resulting in a hard failure. For more information, see HostID Truncation can cause collisions.
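As a rough illustration of the truncation described in this section, the following Python sketch approximates the default host ID as the first 32 characters of the app name and reports apps that would collide in a shared storage account. The function names and the app and account names are hypothetical, and the actual runtime might normalize the name further than this sketch does.

```python
from collections import defaultdict

HOST_ID_MAX_LENGTH = 32  # truncation length stated in this article


def default_host_id(app_name: str) -> str:
    """Approximate the autogenerated host ID (assumption: plain truncation only)."""
    return app_name[:HOST_ID_MAX_LENGTH]


def find_host_id_collisions(
    app_to_account: dict[str, str],
) -> dict[tuple[str, str], list[str]]:
    """Group apps by (storage account, host ID) and keep groups with more than one app.

    app_to_account maps each function app name to its storage account name.
    """
    groups: dict[tuple[str, str], list[str]] = defaultdict(list)
    for app_name, account in app_to_account.items():
        groups[(account, default_host_id(app_name))].append(app_name)
    return {key: names for key, names in groups.items() if len(names) > 1}
```

Apps whose names share the first 32 characters collide only when they also share a storage account, which is why the grouping key includes both values.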

Avoiding host ID collisions

You can use the following strategies to avoid host ID collisions:

- Use a separate storage account for each function app or slot involved in the collision.

- Rename one of your function apps to a value fewer than 32 characters in length, which changes the computed host ID for the app and removes the collision.

- Set an explicit host ID for one or more of the colliding apps. To learn more, see Override the host ID.

Important

Changing the storage account associated with an existing function app or changing the app's host ID can affect the behavior of existing functions. For example, a Blob storage trigger tracks whether it's processed individual blobs by writing receipts under a specific host ID path in storage. When the host ID changes or you point to a new storage account, previously processed blobs could be reprocessed.

Override the host ID

You can explicitly set a specific host ID for your function app in the application settings by using the AzureFunctionsWebHost__hostid setting. For more information, see AzureFunctionsWebHost__hostid.

When the collision occurs between slots, you must set a specific host ID for each slot, including the production slot. You must also mark these settings as deployment slot settings so they stay with their slot and don't get swapped. To learn how to create app settings, see Work with application settings.

Create an app without Azure Files

The Azure Files service provides a shared file system that supports high-scale scenarios. When your function app runs in an Elastic Premium plan or on Windows in a Consumption plan, an Azure Files share is created by default in your storage account. This share is used by Functions to enable certain features, like log streaming. It's also used as a shared package deployment location, which guarantees the consistency of your deployed function code across all instances.

By default, function apps hosted in Premium and Consumption plans use zip deployment, with deployment packages stored in this Azure file share. This section is only relevant to these hosting plans.

Using Azure Files requires the use of a connection string, which is stored in your app settings as WEBSITE_CONTENTAZUREFILECONNECTIONSTRING. Azure Files doesn't currently support identity-based connections. If your scenario requires you to not store any secrets in app settings, you must remove your app's dependency on Azure Files. You can avoid this dependency by creating your app without the default Azure Files dependency.

To run your app without the Azure file share, you must meet the following requirements:

- You must deploy your package to a remote Azure Blob storage container and then set the URL that provides access to that package as the WEBSITE_RUN_FROM_PACKAGE app setting. This approach lets you store your app content in Blob storage, which supports managed identities, instead of in Azure Files.

- You must manually update the deployment package and maintain the deployment package URL, which likely contains a shared access signature (SAS).

You should also note the following considerations:

- The app can't use version 1.x of the Functions runtime.

- Your app can't rely on a shared writeable file system.

- Portal editing isn't supported.

- Log streaming experiences in clients such as the Azure portal default to file system logs. You should instead rely on Application Insights logs.

If the preceding requirements suit your scenario, you can proceed to create a function app without Azure Files. Create an app without the WEBSITE_CONTENTAZUREFILECONNECTIONSTRING and WEBSITE_CONTENTSHARE app settings in one of these ways:

- Bicep/ARM templates: remove the two app settings from the ARM template or Bicep file and then deploy the app using the modified template.

- The Azure portal: unselect Add an Azure Files connection in the Storage tab when you create the app in the Azure portal.
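For the template-based route, the change amounts to dropping two app settings and pointing the app at a package URL. The following Python sketch applies that change to a dictionary of app settings and reports anything that still conflicts with the requirements listed earlier. The function names are hypothetical, and treating any `https://` value as a valid package URL is a simplifying assumption.

```python
# The two settings that tie an app to Azure Files, as named in this article.
AZURE_FILES_SETTINGS = (
    "WEBSITE_CONTENTAZUREFILECONNECTIONSTRING",
    "WEBSITE_CONTENTSHARE",
)


def without_azure_files(settings: dict[str, str]) -> dict[str, str]:
    """Return a copy of the app settings with the Azure Files settings removed."""
    return {k: v for k, v in settings.items() if k not in AZURE_FILES_SETTINGS}


def check_requirements(settings: dict[str, str]) -> list[str]:
    """List the reasons these settings don't yet describe an app without Azure Files."""
    problems = [
        f"{name} is still set" for name in AZURE_FILES_SETTINGS if name in settings
    ]
    package = settings.get("WEBSITE_RUN_FROM_PACKAGE", "")
    # Assumption: a usable package location is an https URL to a blob.
    if not package.startswith("https://"):
        problems.append("WEBSITE_RUN_FROM_PACKAGE must be a URL to the deployment package")
    return problems
```

Running `check_requirements` on the output of `without_azure_files` should return an empty list once WEBSITE_RUN_FROM_PACKAGE holds the package URL.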

Azure Files is used to enable dynamic scale-out for Functions. Scaling could be limited when you run your app without Azure Files in the Elastic Premium plan and Consumption plans running on Windows.

Mount file shares

This functionality is currently only available when running on Linux.

You can mount Azure Files shares to your Linux function apps, which lets you access existing files, machine learning models, or large binaries in your functions. Storage mounts aren't supported on the Consumption plan. For conceptual guidance on choosing between storage mounts, bindings, and external databases, see Choose a file access strategy for Azure Functions.

Important

After 30 September 2028, the option to host your function app on Linux in a Consumption plan is retired. Apps running on Windows in a Consumption plan aren't affected by this change.

You can use the following command to mount an existing share to your Linux function app.

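az webapp config storage-account add --resource-group <RESOURCE_GROUP> --name <FUNCTION_APP_NAME> --custom-id <CUSTOM_ID> --storage-type AzureFiles --share-name <SHARE_NAME> --account-name <STORAGE_ACCOUNT_NAME> --mount-path <MOUNT_PATH> --access-key <ACCESS_KEY>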

In this command, share-name is the name of the existing Azure Files share. custom-id can be any string that uniquely defines the share when mounted to the function app. Also, mount-path is the path from which the share is accessed in your function app. mount-path must be in the format /dir-name, and it can't start with /home.

For a complete example, see Create a Python function app and mount an Azure Files share.
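Before running the mount command, it can help to check the mount path against the two rules stated in this section. The following Python sketch is a minimal local check under that assumption. The function name is hypothetical, and the service might apply additional validation that isn't covered here.

```python
def validate_mount_path(mount_path: str) -> list[str]:
    """Return the rule violations for a proposed mount path (empty list if none)."""
    problems = []
    if not mount_path.startswith("/"):
        problems.append("mount path must start with '/'")
    if mount_path.startswith("/home"):
        problems.append("mount path can't start with /home")
    if not mount_path.strip("/"):
        problems.append("mount path must name a directory")
    return problems
```

For example, `/models` passes the check, while `/home/models` and `models` are both reported as invalid.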

Related article

Learn more about Azure Functions hosting options.