Understand streaming units and streaming nodes

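Streaming units (SUs) represent the computing resources allocated to execute a Stream Analytics job. The more SUs a job has, the more CPU and memory resources are allocated to it. This capacity lets you focus on the query logic and removes the need to manage the hardware required to run your Stream Analytics job in a timely manner.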

Azure Stream Analytics supports two streaming unit structures: SU V1 (to be deprecated) and SU V2 (recommended).

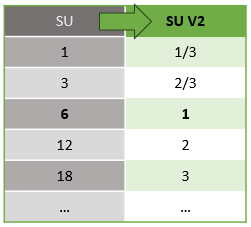

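The SU V1 model is the original Azure Stream Analytics offering, in which every 6 SUs correspond to a single streaming node for a job. Jobs can also run with 1 or 3 SUs, which correspond to fractional streaming nodes. Beyond 6 SUs, jobs scale in increments of 6 (12, 18, 24, and beyond) by adding streaming nodes that provide distributed compute resources.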

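The SU V2 model (recommended) is a simplified structure with favorable pricing for the same compute resources. In the SU V2 model, 1 SU V2 corresponds to one streaming node for your job, 2 SU V2s correspond to 2 streaming nodes, 3 to 3, and so on. Jobs with 1/3 and 2/3 SU V2 are also available; they run on one streaming node but receive only a fraction of its compute resources. The 1/3 and 2/3 SU V2 options provide a cost-effective choice for workloads that require a smaller scale.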
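As a rough illustration, the node arithmetic of both models can be written down in a few lines. This is a minimal sketch, not part of any Azure SDK. The function names are hypothetical, and the fractional shares for 1 and 3 SU V1 (1/3 and 2/3 of a node) are an assumption taken from the V1/V2 equivalence table later in this article.

```python
from fractions import Fraction

# Assumed fractional V1 allocations, based on the V1/V2 equivalence table in this article.
_V1_FRACTIONAL = {1: Fraction(1, 3), 3: Fraction(2, 3)}

def v1_to_nodes(su_v1: int) -> Fraction:
    """Streaming nodes behind an SU V1 allocation (1, 3, 6, 12, 18, ...)."""
    if su_v1 in _V1_FRACTIONAL:
        return _V1_FRACTIONAL[su_v1]
    if su_v1 > 0 and su_v1 % 6 == 0:
        return Fraction(su_v1 // 6)  # every 6 SU V1s form one streaming node
    raise ValueError(f"invalid SU V1 value: {su_v1}")

def v2_to_nodes(su_v2) -> Fraction:
    """Streaming nodes behind an SU V2 allocation (1/3, 2/3, 1, 2, 3, ...)."""
    su = Fraction(su_v2)
    if su in (Fraction(1, 3), Fraction(2, 3)) or (su >= 1 and su.denominator == 1):
        return su  # 1 SU V2 == 1 node; 1/3 and 2/3 are shares of a single node
    raise ValueError(f"invalid SU V2 value: {su}")

for v1 in (1, 3, 6, 12, 18):
    print(v1, "SU V1 ->", v1_to_nodes(v1), "node(s)")
```

The `Fraction` type keeps 1/3 and 2/3 exact, which avoids floating-point comparisons when validating values.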

The following table shows the underlying compute power for V1 and V2 streaming units:

For information about SU pricing, visit the Azure Stream Analytics pricing page.

Understand streaming unit conversions and where they apply

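Streaming unit values are automatically converted from the REST API layer to the UI layer (including the Azure portal and Visual Studio Code). You can also see this conversion in the Activity log, where streaming unit values differ from the values shown in the UI. This behavior is by design. REST API fields are limited to integer values, but Stream Analytics jobs support fractional nodes (1/3 and 2/3 streaming units). The Azure Stream Analytics UI displays node values of 1/3, 2/3, 1, 2, 3, and so on, while the backend (Activity log, REST API layer) displays the same values as 3, 7, 10, 20, and 30, respectively.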

| Standard | Standard V2 (UI) | Standard V2 (backend, e.g., logs, REST API) |
|---|---|---|
| 1 | 1/3 | 3 |
| 3 | 2/3 | 7 |
| 6 | 1 | 10 |
| 12 | 2 | 20 |
| 18 | 3 | 30 |
| ... | ... | ... |

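This conversion conveys the same granularity while eliminating the decimal point at the API layer for V2 SKUs (stock keeping units). The conversion is automatic and has no impact on job performance.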
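If you read SU values from the Activity log or the REST API and want to compare them with the portal, the mapping in the table above can be expressed as a small pair of functions. This is a hypothetical helper inferred from the table (1/3 → 3, 2/3 → 7, whole values × 10), not an Azure SDK function.

```python
from fractions import Fraction

def ui_to_backend(su_v2) -> int:
    """Map an SU V2 value as shown in the UI (1/3, 2/3, 1, 2, ...) to the backend integer."""
    su = Fraction(su_v2)  # accepts Fraction, int, or strings such as "2/3"
    if su == Fraction(1, 3):
        return 3
    if su == Fraction(2, 3):
        return 7
    if su >= 1 and su.denominator == 1:
        return su.numerator * 10  # whole SU V2 values are scaled by 10
    raise ValueError(f"invalid SU V2 value: {su}")

def backend_to_ui(value: int) -> Fraction:
    """Inverse mapping: backend integer (3, 7, 10, 20, ...) back to the UI value."""
    if value == 3:
        return Fraction(1, 3)
    if value == 7:
        return Fraction(2, 3)
    if value >= 10 and value % 10 == 0:
        return Fraction(value // 10)
    raise ValueError(f"invalid backend SU value: {value}")

for ui in (Fraction(1, 3), Fraction(2, 3), 1, 2, 3):
    print(ui, "->", ui_to_backend(ui))
```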

Understand consumption and memory utilization

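To achieve low-latency stream processing, Azure Stream Analytics jobs perform all processing in memory. When a job runs out of memory, the streaming job fails. For a production job, it's therefore important to monitor the job's resource usage closely and make sure enough resources are allocated to keep it running 24/7.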

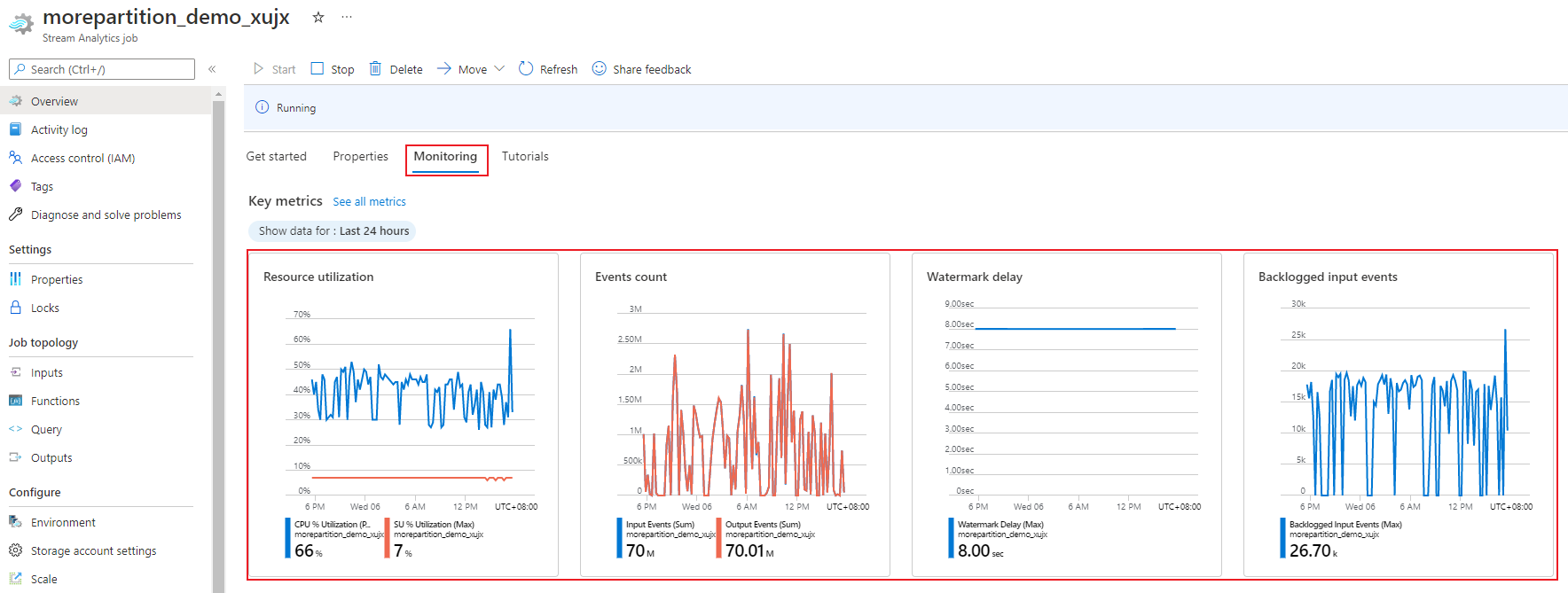

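The SU % utilization metric, which ranges from 0% to 100%, describes the memory consumption of your workload. For a streaming job with a minimal footprint, this metric is usually between 10% and 20%. If SU % utilization is high (above 80%), or if input events become backlogged (even when SU % utilization is low, because the metric doesn't reflect CPU usage), your workload likely needs more compute resources, which means increasing the number of streaming units. It's best to keep the SU metric below 80% to absorb occasional spikes. To react to increased workloads by adding streaming units, consider setting an alert at 80% on the SU % utilization metric. You can also use the watermark delay and backlogged events metrics to see whether there's an impact.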
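The scaling rule in the previous paragraph can be captured as a simple check. This is a minimal sketch that assumes you have already read the metric values from your monitoring tool; `JobMetricsSample` and `should_add_streaming_units` are hypothetical names, and the backlog value is assumed to be sustained rather than a momentary blip.

```python
from dataclasses import dataclass

# Alert level suggested in this article: keep SU % utilization below 80%.
SU_ALERT_THRESHOLD_PCT = 80.0

@dataclass
class JobMetricsSample:
    """Hypothetical container for metric values read from your monitoring tool."""
    su_utilization_pct: float  # SU % utilization (0-100), reflects memory
    backlogged_events: int     # backlogged input events, assumed sustained

def should_add_streaming_units(sample: JobMetricsSample) -> bool:
    # Memory pressure: at or above the 80% alert level.
    if sample.su_utilization_pct >= SU_ALERT_THRESHOLD_PCT:
        return True
    # SU % does not reflect CPU, so a sustained backlog can signal
    # a compute shortage even when SU % is low.
    return sample.backlogged_events > 0

print(should_add_streaming_units(JobMetricsSample(15.0, 1200)))  # True
```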

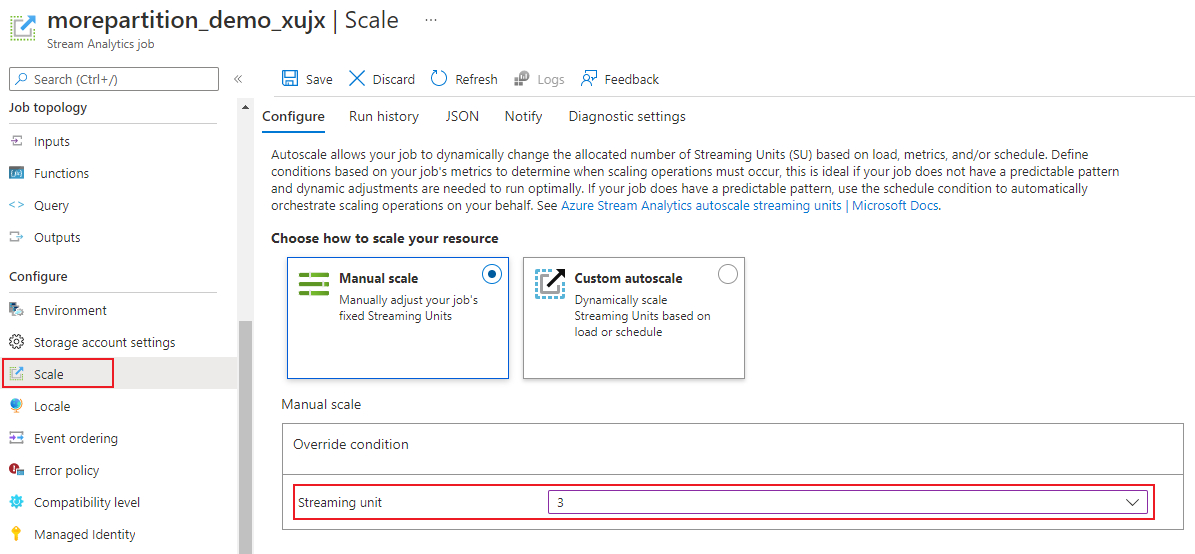

Configure Stream Analytics streaming units (SUs)

Sign in to the Azure portal.

In the list of resources, find the Stream Analytics job that you want to scale, and then open it.

On the job page, under the "Configure" heading, select "Scale". When you create a job, the default number of SUs is 1.

Choose an SU option from the drop-down list to set the SUs for the job. You're limited to a specific range of SU values.

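You can change the number of SUs assigned to a job while it's running. If the job uses a non-partitioned output or has a multi-step query with different PARTITION BY values, you might be restricted to choosing from a set of SU values.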

Monitor job performance

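In the Azure portal, you can track a job's performance-related metrics. For metric definitions, see Azure Stream Analytics job metrics. For more information about monitoring metrics in the portal, see Monitor Stream Analytics jobs with the Azure portal.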

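Calculate the expected throughput of the workload. If the throughput is lower than expected, tune the input partitions and the query, and add SUs to the job.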
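Expected throughput is simple arithmetic on the event rate and average event size. The sketch below assumes you know both for your workload and that you derive the observed event rate from the InputEvents metric (for example, per-minute counts divided by 60). The function names and the 10% tolerance are hypothetical choices for illustration.

```python
def expected_input_mb_per_sec(events_per_sec: float, avg_event_bytes: float) -> float:
    """Expected input throughput in MB/s (1 MB = 1,000,000 bytes here)."""
    return events_per_sec * avg_event_bytes / 1_000_000

def below_expected(observed_events_per_sec: float,
                   expected_events_per_sec: float,
                   tolerance: float = 0.1) -> bool:
    """True if observed throughput falls short of expected by more than `tolerance` (a fraction)."""
    return observed_events_per_sec < expected_events_per_sec * (1 - tolerance)

print(expected_input_mb_per_sec(5000, 1400))  # 7.0
print(below_expected(3800, 5000))  # True
```

If `below_expected` returns `True`, that is the signal to tune partitions and the query, then add SUs.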

How many SUs does a job need?

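The number of SUs required depends on the partition configuration of the inputs and on the query defined in the job. The "Scale" page lets you set the right number of SUs. It's a best practice to allocate more SUs than you expect to need, because the Stream Analytics processing engine optimizes for latency and throughput at the cost of allocating additional memory.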

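In general, start with 1 SU V2 for queries that don't use PARTITION BY. Then find the optimal number by trial and error: pass representative amounts of data through the job, examine the SU % utilization metric, and adjust the number of SUs. The maximum number of streaming units a Stream Analytics job can use depends on the number of steps in the query defined for the job and the number of partitions in each step. You can learn more about the limits here.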
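The trial-and-error procedure can be sketched as a search over valid SU V2 values. In this sketch, `measure_su_pct` is a hypothetical callback standing in for "run the job at this SU count with representative data and read SU % utilization"; the search only moves upward from the starting value, and `max_su` stands in for the job-specific limit described above. The lambda used below is simulated data, not a real measurement.

```python
from fractions import Fraction
from typing import Callable, Iterator, Optional

def su_v2_values(max_su: int) -> Iterator[Fraction]:
    """Valid SU V2 values in ascending order: 1/3, 2/3, 1, 2, ..., max_su."""
    yield Fraction(1, 3)
    yield Fraction(2, 3)
    for n in range(1, max_su + 1):
        yield Fraction(n)

def find_streaming_units(
    measure_su_pct: Callable[[Fraction], float],
    start: Fraction = Fraction(1),
    max_su: int = 10,
    target_pct: float = 80.0,
) -> Optional[Fraction]:
    """Return the smallest SU V2 value >= start whose measured SU % stays below target_pct."""
    for su in su_v2_values(max_su):
        if su < start:
            continue
        # In practice: run the job at this SU count with representative data,
        # then read the SU % utilization metric.
        if measure_su_pct(su) < target_pct:
            return su
    return None  # no value up to max_su met the target

# Simulated measurement for illustration only.
print(find_streaming_units(lambda su: 150 / float(su)))  # 2
```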

For more information about choosing the right number of SUs, see Scale Azure Stream Analytics jobs to increase throughput.

Note

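The number of SUs a job requires depends on the partition configuration of the inputs and on the query defined for the job. You can select up to your SU quota for a job. For information about the Azure Stream Analytics subscription quota, visit Stream Analytics limits. To increase the SUs for your subscription beyond this quota, contact Microsoft Support. Valid SU values per job are 1/3, 2/3, 1, 2, 3, and so on.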

Factors that increase SU % utilization

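Temporal (time-oriented) query elements are the core set of stateful operators that Stream Analytics provides. Stream Analytics manages the state of these operations internally on your behalf: it handles memory consumption, checkpointing for resiliency, and state recovery during service upgrades. Even though Stream Analytics fully manages the state, you should still consider a number of best-practice recommendations.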

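A job with complex query logic can have high SU % utilization even when it isn't continuously receiving input events. This can happen after a sudden spike in input and output events. If the query is complex, the job might continue to maintain state in memory.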

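Transient errors or system-initiated upgrades can cause SU % utilization to drop suddenly to 0 for a short period before it returns to expected levels. If your query isn't fully parallel, increasing the number of streaming units for the job might not reduce SU % utilization.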

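When you compare utilization over a period of time, use the event rate metrics. The InputEvents and OutputEvents metrics show how many events were read and processed. Other metrics, such as deserialization errors, indicate the number of error events. When the number of events per unit of time increases, SU % increases in most cases.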

Stateful query logic in temporal elements

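One of the unique capabilities of Azure Stream Analytics jobs is stateful processing, such as windowed aggregates, temporal joins, and temporal analytic functions. Each of these operators keeps state information. The maximum window size for these query elements is seven days.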

The temporal window concept appears in several Stream Analytics query elements:

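Windowed aggregates: GROUP BY with tumbling, hopping, and sliding windows

Temporal joins: JOIN with the DATEDIFF function

Temporal analytic functions: ISFIRST, LAST, and LAG with LIMIT DURATION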

The following factors influence the memory used by Stream Analytics jobs (the memory part of the streaming unit metric):

Windowed aggregates

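The memory consumed (state size) by a windowed aggregate isn't always directly proportional to the window size. Instead, it's proportional to the cardinality of the data, that is, the number of groups in each time window.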

For example, in the following query, the number of distinct clusterid values is the cardinality of the query.

SELECT count(*)
FROM input
GROUP BY clusterid, tumblingwindow (minutes, 5)
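To see why state tracks cardinality rather than window length, the clusterid query above can be modeled in plain Python. This is a simplified model, not how Stream Analytics stores state internally: it keeps one counter per (window, clusterid) pair in a dictionary and never evicts closed windows. The function name and sample data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

def tumbling_window_counts(events: Iterable[Tuple[int, str]],
                           window_s: int) -> Dict[int, Dict[str, int]]:
    """COUNT(*) per (tumbling window, clusterid). events: (timestamp_s, clusterid)."""
    state: Dict[int, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for ts, clusterid in events:
        window_start = ts - ts % window_s  # align the event to its tumbling window
        state[window_start][clusterid] += 1
    return state

# 300 events over 5 minutes, but only 3 distinct clusterid values.
five_min = tumbling_window_counts(((t, f"c{t % 3}") for t in range(300)), 300)
# 3000 events in a single 50-minute window: still only 3 groups of state.
fifty_min = tumbling_window_counts(((t, f"c{t % 3}") for t in range(3000)), 3000)
print(len(five_min[0]), len(fifty_min[0]))  # 3 3
```

A window ten times longer holds ten times more events here, yet the number of state entries stays at the number of distinct clusterid values.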

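To mitigate issues caused by high cardinality in the preceding query, send events to an event hub partitioned by clusterid, and scale out the query by letting the system process each input partition separately with PARTITION BY, as shown in the following example: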

SELECT count(*)
FROM input PARTITION BY PartitionId
GROUP BY PartitionId, clusterid, tumblingwindow (minutes, 5)

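Once the query is partitioned, it's spread out over multiple nodes. As a result, fewer clusterid values reach each node, which reduces the cardinality of the GROUP BY operator.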

Partition the event hub by the grouping key to avoid the need for a reduce step. For more information, see Event Hubs overview.
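The effect of partitioning by the grouping key can be simulated by hashing keys into partitions and counting the distinct keys each partition must track. In this sketch, CRC32 is only a stand-in for the event hub's own partition-key hashing, and the function names and cluster IDs are hypothetical. Because each key lands in exactly one partition, no two nodes hold partial state for the same key, which is why no reduce step is needed.

```python
import zlib
from typing import Iterable, List

def partition_of(key: str, partition_count: int) -> int:
    # CRC32 is a stand-in for the event hub's partition-key hashing,
    # which uses its own algorithm.
    return zlib.crc32(key.encode("utf-8")) % partition_count

def distinct_keys_per_partition(keys: Iterable[str], partition_count: int) -> List[int]:
    """Number of distinct grouping keys each partition (and so each node) must track."""
    buckets = [set() for _ in range(partition_count)]
    for key in keys:
        buckets[partition_of(key, partition_count)].add(key)
    return [len(bucket) for bucket in buckets]

cluster_ids = [f"cluster-{i}" for i in range(1000)]
print(distinct_keys_per_partition(cluster_ids, 1))  # [1000]
print(distinct_keys_per_partition(cluster_ids, 4))
```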

Temporal joins

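The memory consumed (state size) by a temporal join is proportional to the number of events in the join's temporal wiggle room, which equals the event input rate multiplied by the wiggle room size. In other words, the memory consumed by a join is proportional to the DATEDIFF time range multiplied by the average event rate.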
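A back-of-the-envelope estimate follows directly from that proportionality. The workload numbers below (1,000 events/s, a 10-hour DATEDIFF range, 500-byte events) are hypothetical, and the byte estimate ignores any per-event overhead the engine adds, so treat it as a lower bound.

```python
def join_state_events(avg_events_per_sec: float, datediff_range_s: float) -> float:
    """Approximate number of events held in a temporal join's wiggle room."""
    return avg_events_per_sec * datediff_range_s

def join_state_bytes(avg_events_per_sec: float, datediff_range_s: float,
                     avg_event_bytes: float) -> float:
    """Rough state size in bytes, ignoring per-event overhead."""
    return join_state_events(avg_events_per_sec, datediff_range_s) * avg_event_bytes

# Hypothetical workload: 1,000 events/s with a 10-hour DATEDIFF range.
print(join_state_events(1_000, 10 * 3600))  # 36000000
print(join_state_bytes(1_000, 10 * 3600, 500) / 1e9)  # 18.0 (GB)
```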

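The number of unmatched events in a join affects the memory utilization of the query. The following query finds the ad impressions that generate clicks: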

SELECT clicks.id
FROM clicks
INNER JOIN impressions ON impressions.id = clicks.id AND DATEDIFF(hour, impressions, clicks) between 0 AND 10

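In this example, many ads might be shown while few people click them, yet the job must keep all of these events for the duration of the time window. The memory consumed is proportional to the window size and the event rate.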

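To remediate this behavior, send events to an event hub partitioned by the join key (ID in this case), and scale out the query by letting the system process each input partition separately with PARTITION BY, as shown: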

SELECT clicks.id
FROM clicks PARTITION BY PartitionId
INNER JOIN impressions PARTITION BY PartitionId
ON impressions.PartitionId = clicks.PartitionId AND impressions.id = clicks.id AND DATEDIFF(hour, impressions, clicks) between 0 AND 10

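Once the query is partitioned, it's spread out over multiple nodes. As a result, fewer events reach each node, which reduces the size of the state kept in the join window.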

Temporal analytic functions

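The memory consumed (state size) by a temporal analytic function is proportional to the event rate multiplied by the duration. The memory consumed by analytic functions isn't proportional to the window size, but rather to the partition count in each time window.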

The remediation is similar to that for temporal joins: you can scale out the query with PARTITION BY.

Out-of-order buffer

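You can configure the size of the out-of-order buffer in the "Event ordering" configuration pane. The buffer holds inputs for the duration of the window and reorders them. The size of the buffer is proportional to the event input rate multiplied by the out-of-order window size. The default window size is 0.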
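A reorder buffer of this kind can be sketched with a min-heap keyed on event time: events are held until the highest event time seen so far, minus the tolerance, passes them. This is an illustrative model with hypothetical names, not the service's implementation. In particular, events that arrive later than the tolerance are passed through unchanged here, whereas Stream Analytics applies its configured action for out-of-order events (adjust or drop). The peak buffer size shows how a larger tolerance makes the buffer grow.

```python
import heapq
from typing import Iterable, List, Optional, Tuple

Event = Tuple[int, str]  # (event_time_s, payload)

def reorder(events: Iterable[Event], tolerance_s: int) -> Tuple[List[Event], int]:
    """Reorder events arriving up to tolerance_s late; return (output, peak buffer size)."""
    heap: List[Event] = []
    out: List[Event] = []
    max_seen: Optional[int] = None
    peak = 0
    for ev in events:
        heapq.heappush(heap, ev)
        peak = max(peak, len(heap))
        max_seen = ev[0] if max_seen is None else max(max_seen, ev[0])
        watermark = max_seen - tolerance_s
        # Release every buffered event that can no longer be preceded by a late one.
        while heap and heap[0][0] <= watermark:
            out.append(heapq.heappop(heap))
    # End of input: flush the remaining buffered events in event-time order.
    while heap:
        out.append(heapq.heappop(heap))
    return out, peak

arrivals = [(1, "a"), (3, "c"), (2, "b"), (6, "d"), (5, "e")]
print(reorder(arrivals, 2))  # ([(1, 'a'), (2, 'b'), (3, 'c'), (5, 'e'), (6, 'd')], 3)
print(reorder(arrivals, 0))  # ([(1, 'a'), (3, 'c'), (2, 'b'), (6, 'd'), (5, 'e')], 1)
```

With a tolerance of 0 nothing is held back, so nothing is reordered; with a 2-second tolerance the buffer holds up to three events to restore order.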

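To remediate an overflow of the out-of-order buffer, scale out the query with PARTITION BY. Once the query is partitioned, it's spread out over multiple nodes. As a result, fewer events reach each node, which reduces the number of events in each reorder buffer.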

Input partition count

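Each input partition of a job has a buffer. The more input partitions there are, the more resources the job consumes. For each streaming unit, Azure Stream Analytics can process roughly 7 MB/s of input. Therefore, you can optimize by matching the number of Stream Analytics streaming units to the number of partitions in your event hub.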

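Typically, a job configured with one-third of a streaming unit is sufficient for an event hub with two partitions (the minimum for an event hub). If the event hub has more partitions, your Stream Analytics job consumes more resources but doesn't necessarily use the extra throughput that Event Hubs provides.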

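For a job with one V2 streaming unit, you might need 4 or 8 partitions in the event hub. However, avoid creating unnecessary partitions, because they cause excessive resource usage. For example, avoid using an event hub with 16 or more partitions for a Stream Analytics job that has 1 streaming unit.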
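The roughly 7 MB/s per streaming unit figure quoted above gives a quick first guess for the number of SUs needed to keep up with raw input. This sketch only accounts for input throughput: stateful operators, reference data, and UDFs can require more SUs, and it rounds up to whole SU V2 values rather than assuming the fractional options scale linearly. The function name is hypothetical.

```python
import math

# Rough figure quoted in this article: about 7 MB/s of input per streaming unit.
MB_PER_SEC_PER_SU = 7.0

def rough_su_v2_for_input(input_mb_per_sec: float) -> int:
    """Whole SU V2s needed for raw input throughput alone (ignores query state)."""
    if input_mb_per_sec <= 0:
        raise ValueError("input rate must be positive")
    return max(1, math.ceil(input_mb_per_sec / MB_PER_SEC_PER_SU))

print(rough_su_v2_for_input(20))  # 3
```

Treat the result as a starting point for the trial-and-error tuning described earlier, not as a final answer.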

Reference data

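Azure Stream Analytics loads reference data into memory for fast lookup. In the current implementation, each join operation with reference data keeps its own copy of the reference data in memory, even if you join with the same reference data multiple times. For queries that use PARTITION BY, each partition has its own copy of the reference data, so the partitions are fully decoupled. Because these effects multiply, memory usage can quickly become very high if you join with reference data multiple times across multiple partitions.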
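The multiplier effect is easy to quantify: one copy per join, per partition. The sketch below is an approximation that ignores lookup-index overhead, and the 50 MB dataset, 2 joins, and 8 partitions are hypothetical values chosen for illustration.

```python
def reference_data_memory_mb(ref_data_mb: float, join_count: int,
                             partition_count: int = 1) -> float:
    """Approximate memory for in-memory reference data: one copy per join, per partition."""
    return ref_data_mb * join_count * partition_count

# Hypothetical: a 50 MB reference dataset joined twice, in a query using
# PARTITION BY across 8 partitions.
print(reference_data_memory_mb(50, 2, 8))  # 800
```

Joining the same 50 MB dataset once without partitioning costs about 50 MB; the same data used twice across 8 partitions costs about 800 MB.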

Use of UDF functions

When you add a UDF function, Azure Stream Analytics loads the JavaScript runtime into memory, which affects SU % utilization.